
A mass-production killer! P-MapNet: using the low-precision SDMap prior to boost mapping performance by nearly 20 points

- WBOY (reposted)

- 2024-03-28 14:36:34

Foreword

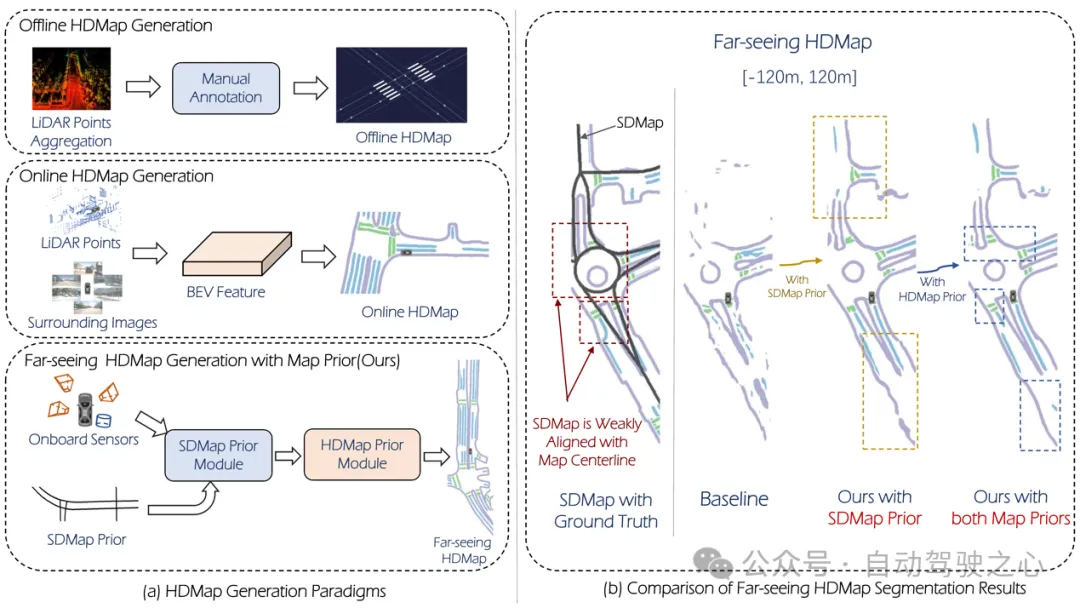

One way current autonomous driving systems reduce their dependence on high-precision maps is online map generation, but the perception performance of such algorithms at long distances is still poor. To this end, we propose P-MapNet, where the “P” stands for fusing map priors to improve model performance. Specifically, we exploit the prior information in both SDMap and HDMap. On the one hand, we extract weakly aligned SDMap data from OpenStreetMap and encode it as an independent branch that supplements the sensor input. Although this input is only weakly aligned with the actual HD Map, our cross-attention-based structure can adaptively focus on the SDMap skeleton and brings significant performance improvements. On the other hand, we propose a refinement module that uses an MAE (masked autoencoder) to capture the prior distribution of HDMap. This module helps generate a distribution that is more consistent with the actual map and helps reduce the effects of occlusion, artifacts, etc. We conduct extensive experimental validation on the nuScenes and Argoverse2 datasets.

Figure 1


In summary, our contributions are as follows:

(1) Our SDMap prior can improve the performance of online map generation, for both rasterized output (up to 18.73 mIoU improvement) and vectorized output (up to 8.50 mAP improvement).

(2) Our HDMap prior can improve the map perceptual metric by up to 6.34%.

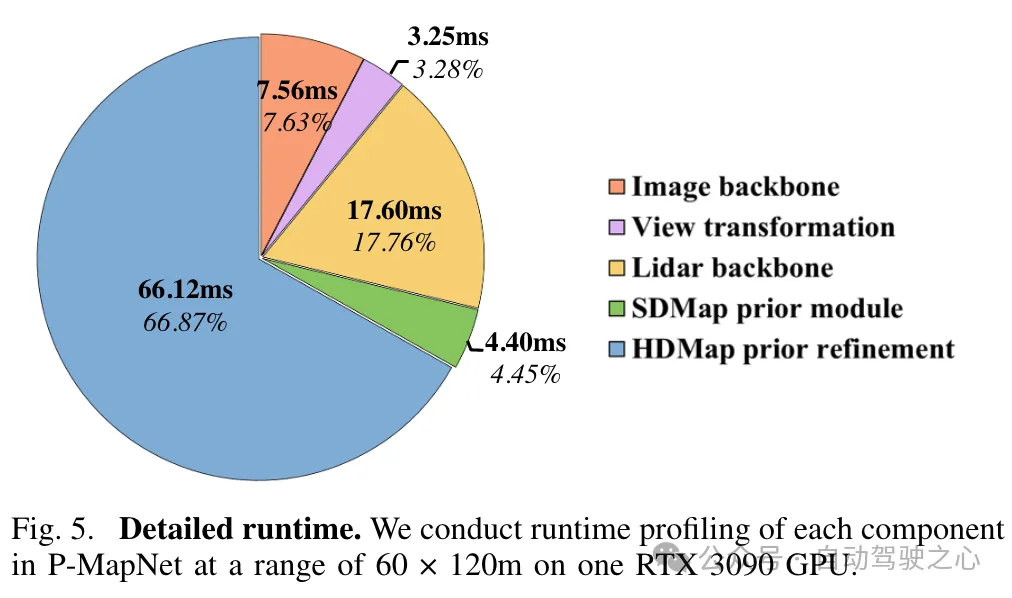

(3) P-MapNet can switch to different inference modes to trade off accuracy and efficiency.

P-MapNet is a long-distance HD Map generation solution whose gains grow at longer sensing ranges. Our code and models are publicly available at https://jike5.github.io/P-MapNet/.

Review of related work

(1) Online map generation

The production of HD Maps mainly includes SLAM mapping, automatic annotation, manual annotation and other steps, which results in high cost and limited freshness. Online map generation is therefore crucial for autonomous driving systems. HDMapNet represents map elements as a raster grid and uses pixel-wise prediction followed by post-processing to obtain vectorized predictions. Some recent methods, such as MapTR, PivotNet and StreamMapNet, implement end-to-end vectorized prediction based on the Transformer architecture. However, these methods use only sensor input, and their performance is still limited in complex conditions such as occlusion and extreme weather.

(2) Long-distance map perception

To make online map generation results more useful to downstream modules, some research attempts to further expand the range of map perception. SuperFusion[7] achieves 90m forward long-distance prediction by fusing lidar and camera data and using a depth-aware BEV transformation. NeuralMapPrior[8] enhances the quality of current online observations and expands the perception range by maintaining and updating a global neural map prior. [6] obtains BEV features by aggregating satellite images and vehicle sensor data, and then makes predictions from them. MV-Map focuses on offline, long-distance map generation; it optimizes BEV features by aggregating the features of all associated frames and using neural radiance fields.

Overview of P-MapNet

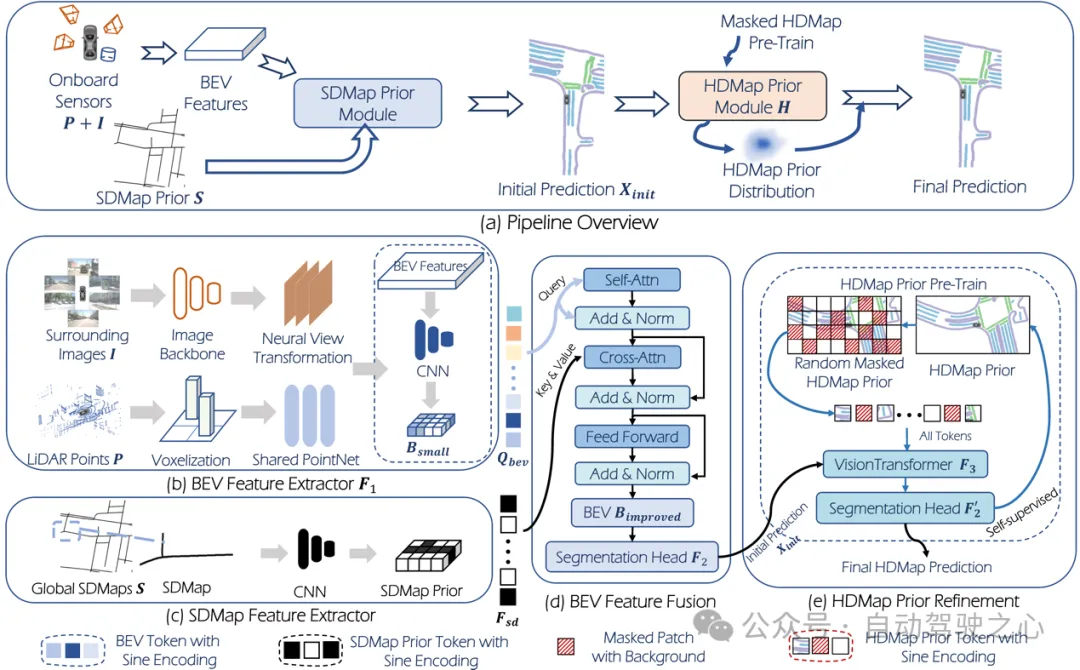

The overall framework is shown in Figure 2.

Figure 2


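Input: The system input is a point cloud $P$ and surround-view camera images $I = \{I_i \mid i = 1, \dots, N\}$, where $N$ is the number of surround cameras. Common HDMap generation tasks (such as HDMapNet) can be defined as:

$$\mathcal{M} = \mathcal{F}_2\big(\mathcal{F}_1(P, I)\big)$$

where $\mathcal{F}_1$ represents feature extraction, $\mathcal{F}_2$ represents the segmentation head, and $\mathcal{M}$ is the HDMap prediction result.

The P-MapNet we propose combines SD Map and HD Map priors. This new task (the S+H setting) can be expressed as:

$$\mathcal{M}_{S+H} = \mathcal{F}_3\big(\mathcal{F}_2(\mathcal{F}_1(P, I, S))\big)$$

where $S$ represents the SDMap prior and $\mathcal{F}_3$ represents the refinement module mentioned in this article. The module learns the HD Map distribution prior through pre-training. Similarly, when only the SDMap prior is used, we get the S-only setting:

$$\mathcal{M}_{S\text{-only}} = \mathcal{F}_2\big(\mathcal{F}_1(P, I, S)\big)$$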

Output: For map generation tasks, there are usually two map representations: rasterized and vectorized. Since the two prior modules designed in this article are better suited to rasterized output, we mainly focus on the rasterized representation.

3.1 SDMap Prior module

SDMap data generation

Our study is based on the nuScenes and Argoverse2 datasets. We use OpenStreetMap data to generate SD Map data for the areas these datasets cover, and transform the coordinate system using the vehicle GPS to obtain the SD Map of the area around the vehicle.
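As a rough illustration of the coordinate transform and rasterization described above, the sketch below converts a road polyline given in latitude/longitude into the vehicle frame and draws it into a BEV grid. It is not the authors' pipeline: the function names, the local equirectangular projection, the spherical Earth radius and the yaw convention (counter-clockwise from east) are all assumptions made for this example.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation (assumption)


def latlon_to_ego(lat, lon, ego_lat, ego_lon, ego_yaw_rad):
    """Map a latitude/longitude point to (x forward, y left) metres in the vehicle frame.

    Assumes a local equirectangular projection, which is adequate over a few
    hundred metres, and a yaw angle measured counter-clockwise from east.
    """
    # East/north offsets in metres around the vehicle position.
    east = EARTH_RADIUS_M * math.radians(lon - ego_lon) * math.cos(math.radians(ego_lat))
    north = EARTH_RADIUS_M * math.radians(lat - ego_lat)
    # Rotate the world offsets into the vehicle frame (inverse yaw rotation).
    cos_y, sin_y = math.cos(ego_yaw_rad), math.sin(ego_yaw_rad)
    return cos_y * east + sin_y * north, -sin_y * east + cos_y * north


def rasterize_polyline(points_xy, x_range, y_range, resolution):
    """Draw a polyline into a binary BEV grid by sampling densely along each segment."""
    rows = round((x_range[1] - x_range[0]) / resolution)
    cols = round((y_range[1] - y_range[0]) / resolution)
    grid = [[0] * cols for _ in range(rows)]
    for (x0, y0), (x1, y1) in zip(points_xy, points_xy[1:]):
        # Sample at half-cell spacing so no cell along the segment is skipped.
        steps = max(1, math.ceil(math.hypot(x1 - x0, y1 - y0) / (resolution / 2)))
        for s in range(steps + 1):
            t = s / steps
            x, y = x0 + t * (x1 - x0), y0 + t * (y1 - y0)
            r = math.floor((x - x_range[0]) / resolution)
            c = math.floor((y - y_range[0]) / resolution)
            if 0 <= r < rows and 0 <= c < cols:
                grid[r][c] = 1
    return grid
```

Because GPS and OpenStreetMap geometry are both imprecise, a raster produced this way is only weakly aligned with the true HD Map, which is why the fusion step has to tolerate misalignment.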

BEV Query

As shown in Figure 2, we first perform feature extraction and perspective transformation on the image data, and feature extraction on the point cloud, to obtain BEV features. The BEV features are then downsampled by a convolutional network to obtain new BEV features, and this feature map is flattened to obtain the BEV Query.
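The downsample-then-flatten step can be pictured with the minimal sketch below. It uses plain nested lists and replaces the learned convolutional downsampling with simple average pooling, so both function names and the pooling choice are assumptions for illustration only.

```python
def downsample_mean(bev, stride):
    """Average-pool an H x W x C grid with a square window of size `stride`.

    Stand-in (assumption) for the learned convolutional downsampling in the text.
    """
    h, w, c = len(bev), len(bev[0]), len(bev[0][0])
    out = []
    for i in range(0, h - stride + 1, stride):
        row = []
        for j in range(0, w - stride + 1, stride):
            window = [bev[i + di][j + dj] for di in range(stride) for dj in range(stride)]
            row.append([sum(cell[k] for cell in window) / len(window) for k in range(c)])
        out.append(row)
    return out


def flatten_to_queries(bev):
    """Flatten an H x W x C grid into H*W query tokens of dimension C (row-major order)."""
    return [cell for row in bev for cell in row]
```

Downsampling before flattening keeps the number of query tokens, and therefore the cost of the later attention step, manageable for large BEV grids.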

SD Map prior fusion

For the SD Map data, features are first extracted by a convolutional network and then fused with the BEV Query through a cross-attention mechanism.

The BEV features produced by cross-attention are passed through the segmentation head to obtain the initial prediction of map elements.
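To make the fusion step concrete, here is a minimal sketch of standard scaled dot-product cross-attention, assuming the BEV queries act as Q and the SD Map features act as both K and V. It deliberately omits the learned projections, multiple heads and positional encodings that a real Transformer layer would have, and the function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention: every query attends over all keys.

    queries: BEV query tokens; keys/values: SD Map feature tokens (assumed roles).
    Returns one fused vector per query.
    """
    d = len(queries[0])
    fused = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        fused.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return fused
```

Because each BEV location computes its own attention weights over all SD Map tokens, it can draw on a road skeleton that is slightly offset from its true position, which is one plausible reading of how the structure copes with weak alignment.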

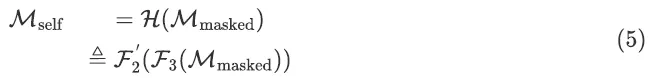

3.2 HDMap Prior module

If the rasterized HD Map is used directly as the input of the original MAE, the MAE is trained with an MSE loss, which makes it unusable as a refinement module. So in this article, we replace the output of the MAE with our segmentation head. To make the predicted map elements continuous and realistic (closer to the distribution of the actual HD Map), we use a pre-trained MAE module for refinement. Training this module consists of two steps: the first step uses self-supervised learning to train the MAE module to learn the distribution of HD Maps, and the second step fine-tunes all modules of the network, using the weights obtained in the first step as initial weights.

In the first step (pre-training), the ground-truth HD Map from the dataset is randomly masked and used as the network input, and the training goal is to complete the HD Map.

In the second step (fine-tuning), the pre-trained weights from the first step are used as the initial weights, and all modules of the complete network are fine-tuned.
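The random masking used to build pre-training inputs might look like the sketch below. The patch size, mask ratio and function name are assumptions chosen for illustration; the text above does not state the masking strategy actually used.

```python
import random


def random_patch_mask(hd_map, patch, mask_ratio, rng):
    """Zero out a random subset of patch x patch blocks in a 2D raster map.

    Returns the masked copy and the top-left corners of the masked blocks.
    The input map is left unchanged so it can serve as the completion target.
    """
    h, w = len(hd_map), len(hd_map[0])
    corners = [(i, j) for i in range(0, h, patch) for j in range(0, w, patch)]
    n_masked = round(mask_ratio * len(corners))
    masked_corners = rng.sample(corners, n_masked)
    masked = [row[:] for row in hd_map]
    for i, j in masked_corners:
        for r in range(i, min(i + patch, h)):
            for c in range(j, min(j + patch, w)):
                masked[r][c] = 0
    return masked, masked_corners
```

A call such as `random_patch_mask(hd_map, patch=2, mask_ratio=0.5, rng=random.Random(0))` yields a masked input paired with the untouched `hd_map` as the completion target.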

4. Experiment

4.1 Datasets and metrics

We evaluate on two mainstream datasets: nuScenes and Argoverse2. To demonstrate the effectiveness of our method at long distances, we set three different perception ranges: 60m × 30m, 120m × 60m and 240m × 60m. The BEV grid resolution is 0.15m in the 60m × 30m range and 0.3m in the other two ranges. We use the mIoU metric to evaluate rasterized prediction results and mAP to evaluate vectorized prediction results. To evaluate the realism of the map, we also use the LPIPS metric as a perceptual metric.
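For readers unfamiliar with the rasterized metric, the sketch below computes mean IoU over two label grids. It is a generic illustration: the function name, the integer class labels and the choice to skip classes absent from both grids are assumptions, and the benchmark's exact protocol is not given here.

```python
def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union across classes for two equally sized 2D label grids.

    Classes that appear in neither the prediction nor the ground truth are skipped.
    """
    ious = []
    for cls in range(num_classes):
        inter = 0
        union = 0
        for pred_row, target_row in zip(pred, target):
            for p, t in zip(pred_row, target_row):
                if p == cls and t == cls:
                    inter += 1
                if p == cls or t == cls:
                    union += 1
        if union:
            ious.append(inter / union)
    return sum(ious) / len(ious) if ious else 0.0
```

For example, with `pred = [[0, 1], [1, 1]]` and `target = [[0, 1], [0, 1]]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12.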

4.2 Results

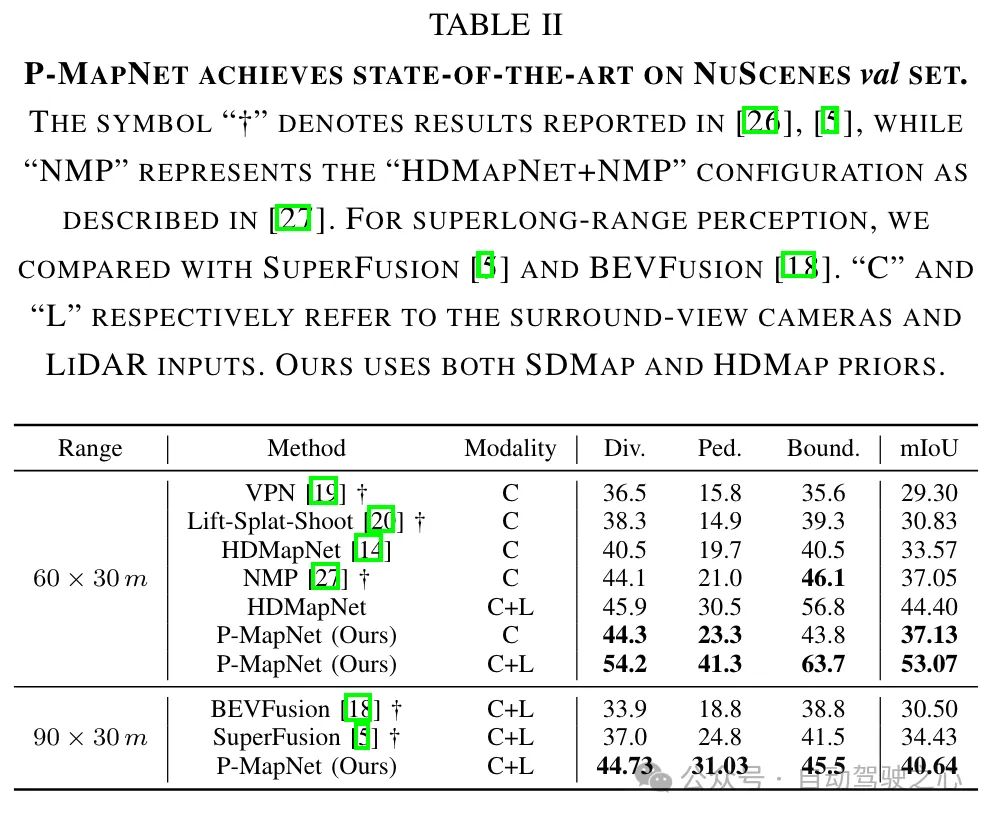

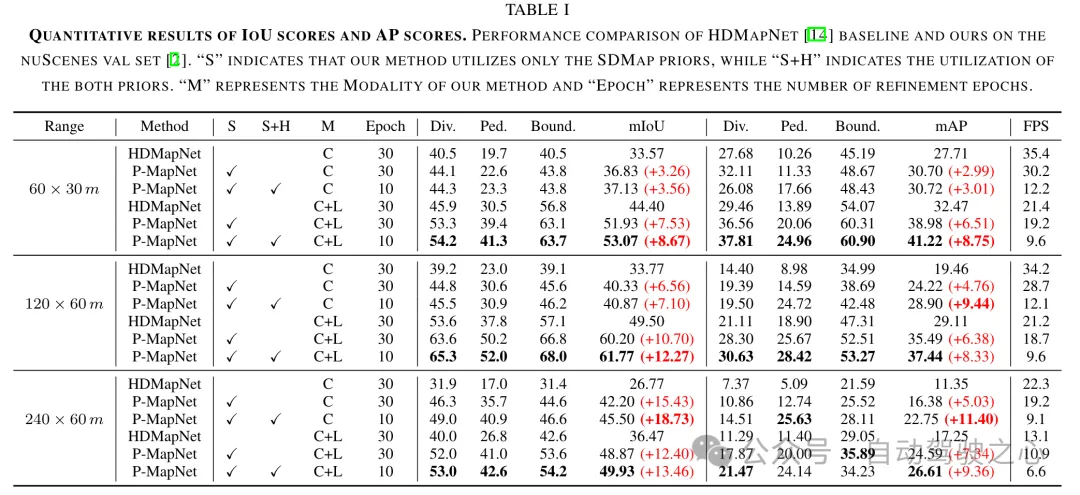

Comparison with SOTA results: We compare the map generation results of the proposed method with current SOTA methods at short range (60m × 30m) and long range (90m × 30m). As shown in Table II, our method shows superior performance compared to existing vision-only and multi-modal (RGB + LiDAR) methods.

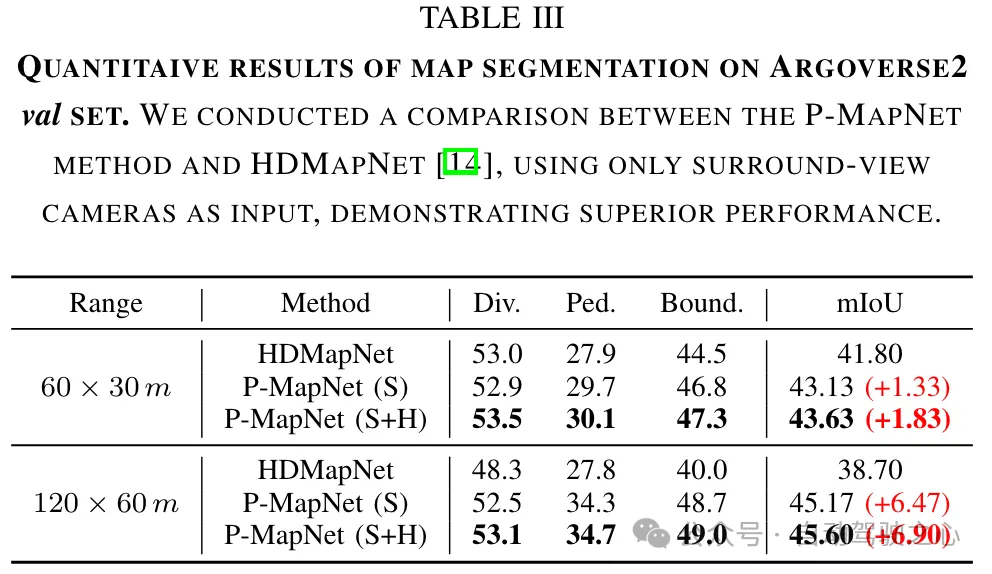

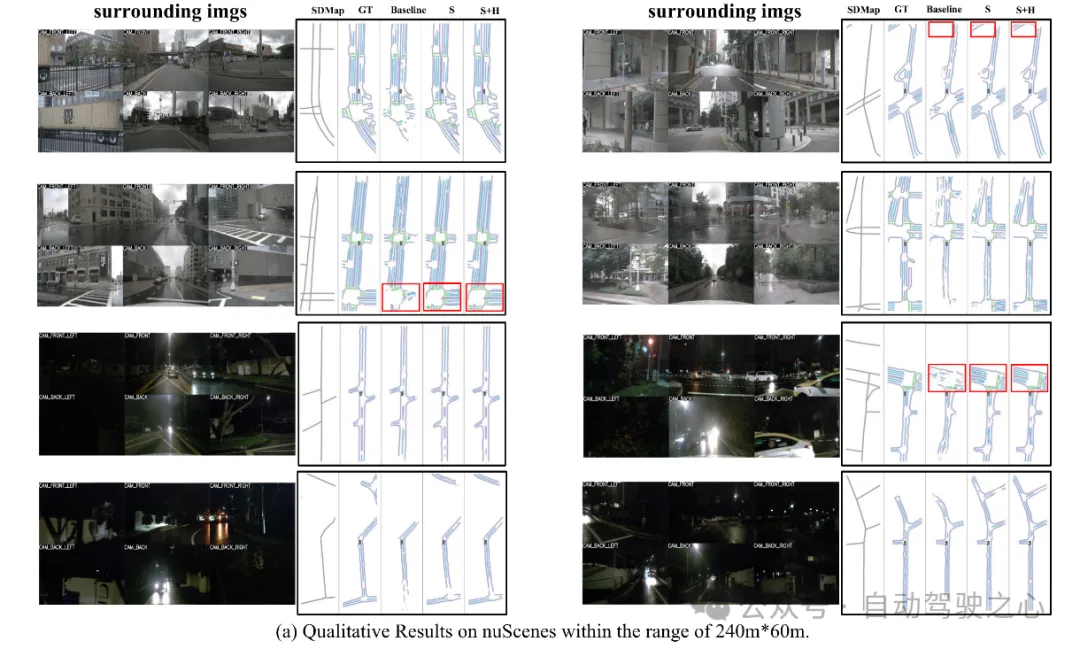

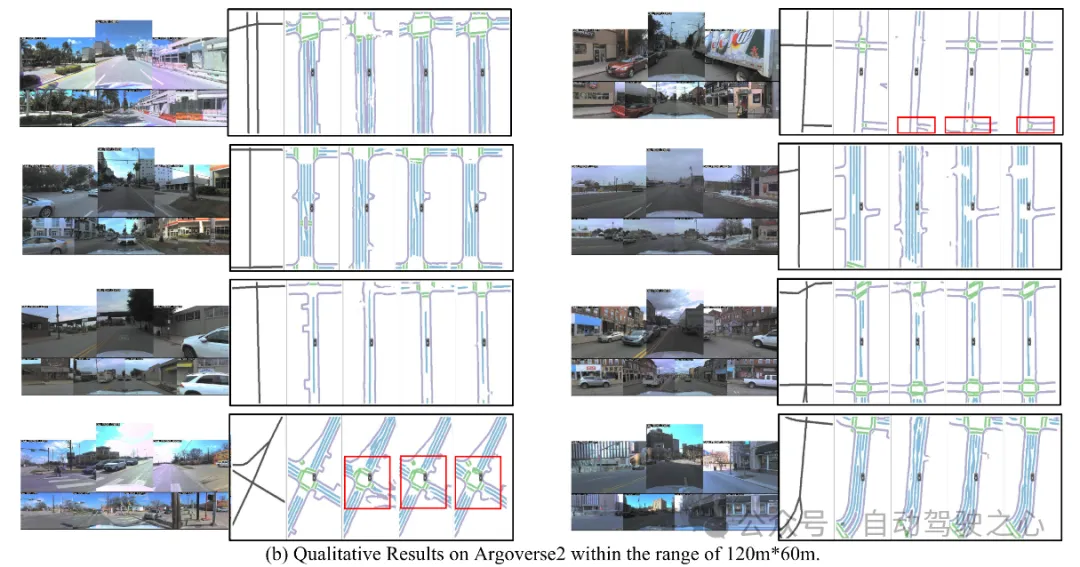

We compared performance with HDMapNet [14] at different distances and with different sensor modalities; the results are summarized in Table I and Table III. Our method achieves a 13.4% improvement in mIoU in the 240m × 60m range. As the perception distance reaches or even exceeds the sensor detection range, the SDMap prior becomes more effective, which validates its efficacy. Finally, we leverage the HD map prior to further improve performance by refining the initial predictions, making them more realistic and eliminating false results.

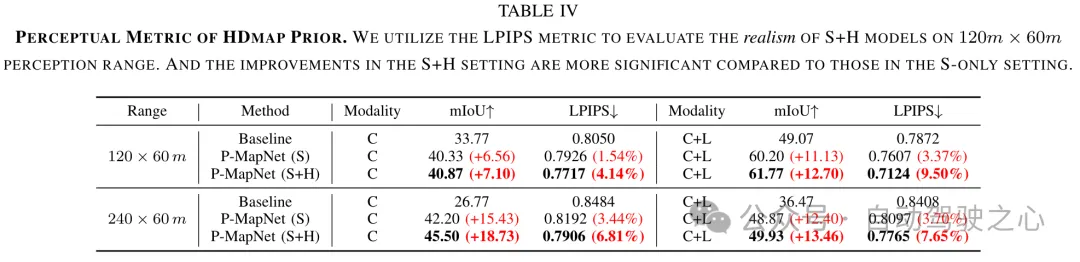

Perceptual metric of the HDMap prior. The HDMap prior module maps the network’s initial predictions onto the HD map’s distribution, making them more realistic. To evaluate the realism of the HDMap prior module’s output, we use the perceptual metric LPIPS (lower is better). As shown in Table IV, LPIPS improves more in the setting that uses both priors (S+H) than in the SDMap-only (S-only) setting.

Visualization:
