Home >Technology peripherals >AI >MySQL connection hangs unexpectedly, but it is not the fault of the connection pool...

MySQL connection hangs unexpectedly, but it is not the fault of the connection pool...

- PHPzforward

- 2023-04-14 16:28:032560browse

1. Background

There have been a lot of questions reported from tests recently. Among them, the questions raised about system reliability testing are very troublesome. Firstly, such questions sometimes belong to " "Accidental" phenomenon is difficult to reproduce quickly in the environment; secondly, the location chain of reliability problems sometimes becomes very long. In extreme cases, it may be traced from service A to service Z, or from application code to hardware. level.

What I share this time is a process of locating MySQL high availability problems. There are many twists and turns, but the problem itself is relatively representative, so I will record it. for reference.

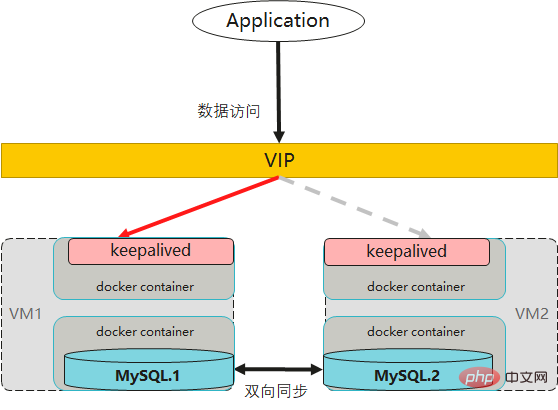

1. Architecture

First of all, this system uses MySQL as the main data storage component. The whole one is a typical microservice architecture (SpringBoot SpringCloud), and the persistence layer uses the following components:

- mybatis, implementing SQL Method mapping

- hikaricp, implement database connection pool

- mariadb-java- client, implements JDBC driver

In the MySQL server part, the back-end adopts a dual-main architecture, and the front-end uses keepalived combined with floating IP (VIP) One floor high available. As follows:

- ##MySQL deploys two instances and sets them as mutual masters prepared relationship.

- Deploy a keepalived process for each MySQL instance, and keepalived provides VIP high-availability failover.

- In fact, both keepalived and MySQL are containerized, and the VIP port is mapped to the nodePort service port on the VM.

- #Business services always use VIP for database access.

Keepalived implements routing layer conversion based on the VRRP protocol. At the same time, the VIP will only point to one of the virtual machines (master).

#When the master node fails, other keepalived will detect the problem and re-elect a new master, after which the VIP will switch to another available MySQL instance on the node.

#In this way, the MySQL database has basic high availability capabilities.

In addition, Keepalived will also perform regular health checks on the MySQL instance. Once it is found that the MySQL instance is unavailable, it will kill its own process and trigger VIP switching action.

2. Problem phenomenon

This test case is also designed based on the scenario of virtual machine failure:

Continue to initiate access to the business service with less pressure, and then restart one of the MySQL container instances (master).

According to the original evaluation, the business may have a small jitter, but its interruption time should be maintained at the second level.

#However, after many tests, it was found that after restarting the MySQL master node container, there is a certain probability that the business will no longer be accessible!

2. Analysis process

After the problem occurred, the first reaction of the development students was that there was something wrong with the high availability mechanism of MySQL. Since there has been a problem in the past that VIPs failed to switch in time due to improper configuration of keepalived, we have been wary of it.

After a thorough investigation, we did not find any configuration problems with keepalived.

# Then, with no other solution, I retested several times, and the problem recurred.

Immediately afterwards, we raised several doubts:

1) Keepalived will Based on the reachability of the MySQL instance, is there a problem with the health check?

#But in this test scenario, the destruction of the MySQL container will cause keepalived's port detection to fail, which will also cause keepalived to fail. If keepalived also aborts, then VIP should be able to automatically preempt. After comparing the information of the two virtual machine nodes, it was found that the VIP had indeed been switched.

#2) Is the container where the business process is located experiencing network unreachability?

Try to enter the container, perform a telnet test on the floating IP and port after the current switch, and find that the access can still be successful.

1. Connection pool troubleshooting

#After troubleshooting the first two doubts, we can only turn our attention to On the DB client of the business service.

Looking from the logs, at the time of the failure, some exceptions did occur on the business side, as follows:

Unable to acquire JDBC Connection [n/a] java.sql.SQLTransientConnectionException: HikariPool-1 - Connection is not available, request timed out after 30000ms. at com.zaxxer.hikari.pool.HikariPool.createTimeoutException(HikariPool.java:669) ~[HikariCP-2.7.9.jar!/:?] at com.zaxxer.hikari.pool.HikariPool.getConnection(HikariPool.java:183) ~[HikariCP-2.7.9.jar!/:?] ...

The prompt here is that the business operation has timed out to obtain the connection (more than 30 seconds). So, could it be that the number of connections is not enough?

Business access uses the hikariCP connection pool, which is also a very popular component on the market.

We then checked the current connection pool configuration, as follows:

//最小空闲连接数 spring.datasource.hikari.minimum-idle=10 //连接池最大大小 spring.datasource.hikari.maximum-pool-size=50 //连接最大空闲时长 spring.datasource.hikari.idle-timeout=60000 //连接生命时长 spring.datasource.hikari.max-lifetime=1800000 //获取连接的超时时长 spring.datasource.hikari.connection-timeout=30000

where Note that the hikari connection pool is configured with minimum-idle = 10, which means that even if there is no business, the connection pool should guarantee 10 connections. What's more, the current business traffic is extremely low, and there should not be insufficient connections.

In addition, another possibility may be that a "zombie connection" has occurred, which means that during the restart process, the connection pool has been These unavailable connections are not released, resulting in no usable connections.

Development students are convinced of the term "zombie link" and tend to believe that this is likely to come from a BUG in the HikariCP component..

So I started reading the source code of HikariCP and found that the application layer requests a connection from the connection pool. The code is as follows:

public class HikariPool{

//获取连接对象入口

public Connection getConnection(final long hardTimeout) throws SQLException

{

suspendResumeLock.acquire();

final long startTime = currentTime();

try {

//使用预设的30s 超时时间

long timeout = hardTimeout;

do {

//进入循环,在指定时间内获取可用连接

//从 connectionBag 中获取连接

PoolEntry poolEntry = connectionBag.borrow(timeout, MILLISECONDS);

if (poolEntry == null) {

break; // We timed out... break and throw exception

}

final long now = currentTime();

//连接对象被标记清除或不满足存活条件时,关闭该连接

if (poolEntry.isMarkedEvicted() || (elapsedMillis(poolEntry.lastAccessed, now) > aliveBypassWindowMs && !isConnectionAlive(poolEntry.connection))) {

closeConnection(poolEntry, poolEntry.isMarkedEvicted() ? EVICTED_CONNECTION_MESSAGE : DEAD_CONNECTION_MESSAGE);

timeout = hardTimeout - elapsedMillis(startTime);

}

//成功获得连接对象

else {

metricsTracker.recordBorrowStats(poolEntry, startTime);

return poolEntry.createProxyConnection(leakTaskFactory.schedule(poolEntry), now);

}

} while (timeout > 0L);

//超时了,抛出异常

metricsTracker.recordBorrowTimeoutStats(startTime);

throw createTimeoutException(startTime);

}

catch (InterruptedException e) {

Thread.currentThread().interrupt();

throw new SQLException(poolName + " - Interrupted during connection acquisition", e);

}

finally {

suspendResumeLock.release();

}

}

}The getConnection() method shows the entire process of obtaining a connection, where connectionBag is a container object used to store connection objects. If the connection obtained from the connectionBag no longer meets the survival conditions, it will be closed manually. The code is as follows:

void closeConnection(final PoolEntry poolEntry, final String closureReason)

{

//移除连接对象

if (connectionBag.remove(poolEntry)) {

final Connection connection = poolEntry.close();

//异步关闭连接

closeConnectionExecutor.execute(() -> {

quietlyCloseConnection(connection, closureReason);

//由于可用连接变少,将触发填充连接池的任务

if (poolState == POOL_NORMAL) {

fillPool();

}

});

}

}Note that only when the connection meets the following conditions When one of them is detected, close will be executed.

- isMarkedEvicted() 的返回结果是 true,即标记为清除

如果连接存活时间超出最大生存时间(maxLifeTime),或者距离上一次使用超过了idleTimeout,会被定时任务标记为清除状态,清除状态的连接在获取的时候才真正 close。

- 500ms 内没有被使用,且连接已经不再存活,即 isConnectionAlive() 返回 false

由于我们把 idleTimeout 和 maxLifeTime 都设置得非常大,因此需重点检查 isConnectionAlive 方法中的判断,如下:

public class PoolBase{

//判断连接是否存活

boolean isConnectionAlive(final Connection connection)

{

try {

try {

//设置 JDBC 连接的执行超时

setNetworkTimeout(connection, validationTimeout);

final int validationSeconds = (int) Math.max(1000L, validationTimeout) / 1000;

//如果没有设置 TestQuery,使用 JDBC4 的校验接口

if (isUseJdbc4Validation) {

return connection.isValid(validationSeconds);

}

//使用 TestQuery(如 select 1)语句对连接进行探测

try (Statement statement = connection.createStatement()) {

if (isNetworkTimeoutSupported != TRUE) {

setQueryTimeout(statement, validationSeconds);

}

statement.execute(config.getConnectionTestQuery());

}

}

finally {

setNetworkTimeout(connection, networkTimeout);

if (isIsolateInternalQueries && !isAutoCommit) {

connection.rollback();

}

}

return true;

}

catch (Exception e) {

//发生异常时,将失败信息记录到上下文

lastConnectionFailure.set(e);

logger.warn("{} - Failed to validate connection {} ({}). Possibly consider using a shorter maxLifetime value.",

poolName, connection, e.getMessage());

return false;

}

}

}我们看到,在PoolBase.isConnectionAlive 方法中对连接执行了一系列的探测,如果发生异常还会将异常信息记录到当前的线程上下文中。随后,在 HikariPool 抛出异常时会将最后一次检测失败的异常也一同收集,如下:

private SQLException createTimeoutException(long startTime)

{

logPoolState("Timeout failure ");

metricsTracker.recordConnectionTimeout();

String sqlState = null;

//获取最后一次连接失败的异常

final Throwable originalException = getLastConnectionFailure();

if (originalException instanceof SQLException) {

sqlState = ((SQLException) originalException).getSQLState();

}

//抛出异常

final SQLException connectionException = new SQLTransientConnectionException(poolName + " - Connection is not available, request timed out after " + elapsedMillis(startTime) + "ms.", sqlState, originalException);

if (originalException instanceof SQLException) {

connectionException.setNextException((SQLException) originalException);

}

return connectionException;

}这里的异常消息和我们在业务服务中看到的异常日志基本上是吻合的,即除了超时产生的 "Connection is not available, request timed out after xxxms" 消息之外,日志中还伴随输出了校验失败的信息:

Caused by: java.sql.SQLException: Connection.setNetworkTimeout cannot be called on a closed connection at org.mariadb.jdbc.internal.util.exceptions.ExceptionMapper.getSqlException(ExceptionMapper.java:211) ~[mariadb-java-client-2.2.6.jar!/:?] at org.mariadb.jdbc.MariaDbConnection.setNetworkTimeout(MariaDbConnection.java:1632) ~[mariadb-java-client-2.2.6.jar!/:?] at com.zaxxer.hikari.pool.PoolBase.setNetworkTimeout(PoolBase.java:541) ~[HikariCP-2.7.9.jar!/:?] at com.zaxxer.hikari.pool.PoolBase.isConnectionAlive(PoolBase.java:162) ~[HikariCP-2.7.9.jar!/:?] at com.zaxxer.hikari.pool.HikariPool.getConnection(HikariPool.java:172) ~[HikariCP-2.7.9.jar!/:?] at com.zaxxer.hikari.pool.HikariPool.getConnection(HikariPool.java:148) ~[HikariCP-2.7.9.jar!/:?] at com.zaxxer.hikari.HikariDataSource.getConnection(HikariDataSource.java:128) ~[HikariCP-2.7.9.jar!/:?]

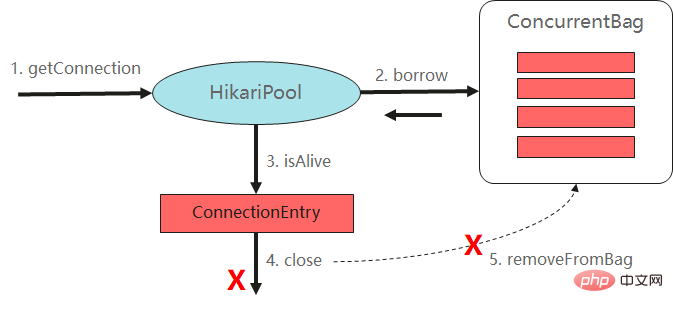

到这里,我们已经将应用获得连接的代码大致梳理了一遍,整个过程如下图所示:

从执行逻辑上看,连接池的处理并没有问题,相反其在许多细节上都考虑到位了。在对非存活连接执行 close 时,同样调用了 removeFromBag 动作将其从连接池中移除,因此也不应该存在僵尸连接对象的问题。

那么,我们之前的推测应该就是错误的!

2、陷入焦灼

在代码分析之余,开发同学也注意到当前使用的 hikariCP 版本为 3.4.5,而环境上出问题的业务服务却是 2.7.9 版本,这仿佛预示着什么.. 让我们再次假设 hikariCP 2.7.9 版本存在某种未知的 BUG,导致了问题的产生。

为了进一步分析连接池对于服务端故障的行为处理,我们尝试在本地机器上进行模拟,这一次使用了 hikariCP 2.7.9 版本进行测试,并同时将 hikariCP 的日志级别设置为 DEBUG。

模拟场景中,会由 由本地应用程序连接本机的 MySQL 数据库进行操作,步骤如下:

1)初始化数据源,此时连接池 min-idle 设置为 10;

2)每隔50ms 执行一次SQL操作,查询当前的元数据表;

3)将 MySQL 服务停止一段时间,观察业务表现;

4)将 MySQL 服务重新启动,观察业务表现。

最终产生的日志如下:

//初始化过程,建立10个连接 DEBUG -HikariPool.logPoolState - Pool stats (total=1, active=1, idle=0, waiting=0) DEBUG -HikariPool$PoolEntryCreator.call- Added connection MariaDbConnection@71ab7c09 DEBUG -HikariPool$PoolEntryCreator.call- Added connection MariaDbConnection@7f6c9c4c DEBUG -HikariPool$PoolEntryCreator.call- Added connection MariaDbConnection@7b531779 ... DEBUG -HikariPool.logPoolState- After adding stats (total=10, active=1, idle=9, waiting=0) //执行业务操作,成功 execute statement: true test time -------1 execute statement: true test time -------2 ... //停止MySQL ... //检测到无效连接 WARN-PoolBase.isConnectionAlive - Failed to validate connection MariaDbConnection@9225652 ((conn=38652) Connection.setNetworkTimeout cannot be called on a closed connection). Possibly consider using a shorter maxLifetime value. WARN-PoolBase.isConnectionAlive - Failed to validate connection MariaDbConnection@71ab7c09 ((conn=38653) Connection.setNetworkTimeout cannot be called on a closed connection). Possibly consider using a shorter maxLifetime value. //释放连接 DEBUG -PoolBase.quietlyCloseConnection(PoolBase.java:134) - Closing connection MariaDbConnection@9225652: (connection is dead) DEBUG -PoolBase.quietlyCloseConnection(PoolBase.java:134) - Closing connection MariaDbConnection@71ab7c09: (connection is dead) //尝试创建连接失败 DEBUG -HikariPool.createPoolEntry - Cannot acquire connection from data source java.sql.SQLNonTransientConnectionException: Could not connect to address=(host=localhost)(port=3306)(type=master) : Socket fail to connect to host:localhost, port:3306. Connection refused: connect Caused by: java.sql.SQLNonTransientConnectionException: Socket fail to connect to host:localhost, port:3306. Connection refused: connect at internal.util.exceptions.ExceptionFactory.createException(ExceptionFactory.java:73) ~[mariadb-java-client-2.6.0.jar:?] ... //持续失败.. 直到MySQL重启 //重启后,自动创建连接成功 DEBUG -HikariPool$PoolEntryCreator.call -Added connection MariaDbConnection@42c5503e DEBUG -HikariPool$PoolEntryCreator.call -Added connection MariaDbConnection@695a7435 //连接池状态,重新建立10个连接 DEBUG -HikariPool.logPoolState(HikariPool.java:421) -After adding stats (total=10, active=1, idle=9, waiting=0) //执行业务操作,成功(已经自愈) execute statement: true

从日志上看,hikariCP 还是能成功检测到坏死的连接并将其踢出连接池,一旦 MySQL 重新启动,业务操作又能自动恢复成功了。根据这个结果,基于 hikariCP 版本问题的设想也再次落空,研发同学再次陷入焦灼。

3、拨开云雾见光明

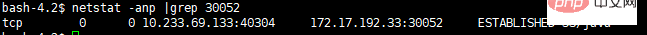

多方面求证无果之后,我们最终尝试在业务服务所在的容器内进行抓包,看是否能发现一些蛛丝马迹。

进入故障容器,执行 tcpdump -i eth0 tcp port 30052 进行抓包,然后对业务接口发起访问。

此时令人诡异的事情发生了,没有任何网络包产生!而业务日志在 30s 之后也出现了获取连接失败的异常。

我们通过 netstat 命令检查网络连接,发现只有一个 ESTABLISHED 状态的 TCP 连接。

也就是说,当前业务实例和 MySQL 服务端是存在一个建好的连接的,但为什么业务还是报出可用连接呢?

推测可能原因有二:

- 该连接被某个业务(如定时器)一直占用。

- 该连接实际上还没有办法使用,可能处于某种僵死的状态。

对于原因一,很快就可以被推翻,一来当前服务并没有什么定时器任务,二来就算该连接被占用,按照连接池的原理,只要没有达到上限,新的业务请求应该会促使连接池进行新连接的建立,那么无论是从 netstat 命令检查还是 tcpdump 的结果来看,不应该一直是只有一个连接的状况。

那么,情况二的可能性就很大了。带着这个思路,继续分析 Java 进程的线程栈。

执行 kill -3 pid 将线程栈输出后分析,果不其然,在当前 thread stack 中发现了如下的条目:

"HikariPool-1 connection adder" #121 daemon prio=5 os_prio=0 tid=0x00007f1300021800 nid=0xad runnable [0x00007f12d82e5000] java.lang.Thread.State: RUNNABLE at java.net.SocketInputStream.socketRead0(Native Method) at java.net.SocketInputStream.socketRead(SocketInputStream.java:116) at java.net.SocketInputStream.read(SocketInputStream.java:171) at java.net.SocketInputStream.read(SocketInputStream.java:141) at java.io.FilterInputStream.read(FilterInputStream.java:133) at org.mariadb.jdbc.internal.io.input.ReadAheadBufferedStream.fillBuffer(ReadAheadBufferedStream.java:129) at org.mariadb.jdbc.internal.io.input.ReadAheadBufferedStream.read(ReadAheadBufferedStream.java:102) - locked <0x00000000d7f5b480> (a org.mariadb.jdbc.internal.io.input.ReadAheadBufferedStream) at org.mariadb.jdbc.internal.io.input.StandardPacketInputStream.getPacketArray(StandardPacketInputStream.java:241) at org.mariadb.jdbc.internal.io.input.StandardPacketInputStream.getPacket(StandardPacketInputStream.java:212) at org.mariadb.jdbc.internal.com.read.ReadInitialHandShakePacket.<init>(ReadInitialHandShakePacket.java:90) at org.mariadb.jdbc.internal.protocol.AbstractConnectProtocol.createConnection(AbstractConnectProtocol.java:480) at org.mariadb.jdbc.internal.protocol.AbstractConnectProtocol.connectWithoutProxy(AbstractConnectProtocol.java:1236) at org.mariadb.jdbc.internal.util.Utils.retrieveProxy(Utils.java:610) at org.mariadb.jdbc.MariaDbConnection.newConnection(MariaDbConnection.java:142) at org.mariadb.jdbc.Driver.connect(Driver.java:86) at com.zaxxer.hikari.util.DriverDataSource.getConnection(DriverDataSource.java:138) at com.zaxxer.hikari.pool.PoolBase.newConnection(PoolBase.java:358) at com.zaxxer.hikari.pool.PoolBase.newPoolEntry(PoolBase.java:206) at com.zaxxer.hikari.pool.HikariPool.createPoolEntry(HikariPool.java:477)

这里显示 HikariPool-1 connection adder 这个线程一直处于 socketRead 的可执行状态。从命名上看该线程应该是 HikariCP 连接池用于建立连接的任务线程,socket 读操作则来自于 MariaDbConnection.newConnection() 这个方法,即 mariadb-java-client 驱动层建立 MySQL 连接的一个操作,其中 ReadInitialHandShakePacket 初始化则属于 MySQL 建链协议中的一个环节。

简而言之,上面的线程刚好处于建链的一个过程态,关于 mariadb 驱动和 MySQL 建链的过程大致如下:

MySQL 建链首先是建立 TCP 连接(三次握手),客户端会读取 MySQL 协议的一个初始化握手消息包,内部包含 MySQL 版本号,鉴权算法等等信息,之后再进入身份鉴权的环节。

这里的问题就在于 ReadInitialHandShakePacket 初始化(读取握手消息包)一直处于 socket read 的一个状态。

如果此时 MySQL 远端主机故障了,那么该操作就会一直卡住。而此时的连接虽然已经建立(处于 ESTABLISHED 状态),但却一直没能完成协议握手和后面的身份鉴权流程,即该连接只能算一个半成品(无法进入 hikariCP 连接池的列表中)。从故障服务的 DEBUG 日志也可以看到,连接池持续是没有可用连接的,如下:

DEBUG HikariPool.logPoolState --> Before cleanup stats (total=0, active=0, idle=0, waiting=3)

另一个需要解释的问题则是,这样一个 socket read 操作的阻塞是否就造成了整个连接池的阻塞呢?

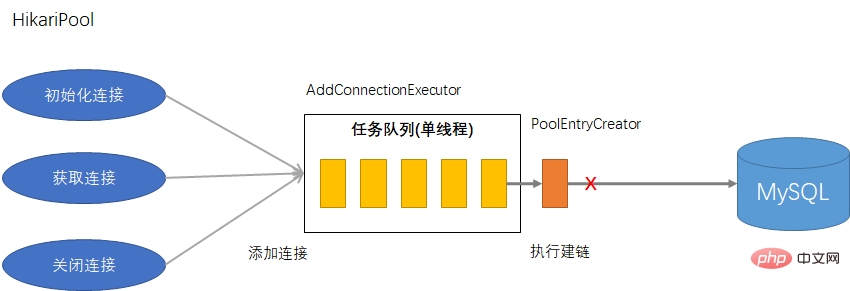

经过代码走读,我们再次梳理了 hikariCP 建立连接的一个流程,其中涉及到几个模块:

- HikariPool,连接池实例,由该对象连接的获取、释放以及连接的维护。

- ConnectionBag,连接对象容器,存放当前的连接对象列表,用于提供可用连接。

- AddConnectionExecutor,添加连接的执行器,命名如 "HikariPool-1 connection adder",是一个单线程的线程池。

- PoolEntryCreator,添加连接的任务,实现创建连接的具体逻辑。

- HouseKeeper,内部定时器,用于实现连接的超时淘汰、连接池的补充等工作。

HouseKeeper 在连接池初始化后的 100ms 触发执行,其调用 fillPool() 方法完成连接池的填充,例如 min-idle 是10,那么初始化就会创建10个连接。ConnectionBag 维护了当前连接对象的列表,该模块还维护了请求连接者(waiters)的一个计数器,用于评估当前连接数的需求。

其中,borrow 方法的逻辑如下:

public T borrow(long timeout, final TimeUnit timeUnit) throws InterruptedException

{

// 尝试从 thread-local 中获取

final List<Object> list = threadList.get();

for (int i = list.size() - 1; i >= 0; i--) {

...

}

// 计算当前等待请求的任务

final int waiting = waiters.incrementAndGet();

try {

for (T bagEntry : sharedList) {

if (bagEntry.compareAndSet(STATE_NOT_IN_USE, STATE_IN_USE)) {

//如果获得了可用连接,会触发填充任务

if (waiting > 1) {

listener.addBagItem(waiting - 1);

}

return bagEntry;

}

}

//没有可用连接,先触发填充任务

listener.addBagItem(waiting);

//在指定时间内等待可用连接进入

timeout = timeUnit.toNanos(timeout);

do {

final long start = currentTime();

final T bagEntry = handoffQueue.poll(timeout, NANOSECONDS);

if (bagEntry == null || bagEntry.compareAndSet(STATE_NOT_IN_USE, STATE_IN_USE)) {

return bagEntry;

}

timeout -= elapsedNanos(start);

} while (timeout > 10_000);

return null;

}

finally {

waiters.decrementAndGet();

}

}注意到,无论是有没有可用连接,该方法都会触发一个 listener.addBagItem() 方法,HikariPool 对该接口的实现如下:

public void addBagItem(final int waiting)

{

final boolean shouldAdd = waiting - addConnectionQueueReadOnlyView.size() >= 0; // Yes, >= is intentional.

if (shouldAdd) {

//调用 AddConnectionExecutor 提交创建连接的任务

addConnectionExecutor.submit(poolEntryCreator);

}

else {

logger.debug("{} - Add connection elided, waiting {}, queue {}", poolName, waiting, addConnectionQueueReadOnlyView.size());

}

}PoolEntryCreator 则实现了创建连接的具体逻辑,如下:

public class PoolEntryCreator{

@Override

public Boolean call()

{

long sleepBackoff = 250L;

//判断是否需要建立连接

while (poolState == POOL_NORMAL && shouldCreateAnotherConnection()) {

//创建 MySQL 连接

final PoolEntry poolEntry = createPoolEntry();

if (poolEntry != null) {

//建立连接成功,直接返回。

connectionBag.add(poolEntry);

logger.debug("{} - Added connection {}", poolName, poolEntry.connection);

if (loggingPrefix != null) {

logPoolState(loggingPrefix);

}

return Boolean.TRUE;

}

...

}

// Pool is suspended or shutdown or at max size

return Boolean.FALSE;

}

}由此可见,AddConnectionExecutor 采用了单线程的设计,当产生新连接需求时,会异步触发 PoolEntryCreator 任务进行补充。其中 PoolEntryCreator. createPoolEntry() 会完成 MySQL 驱动连接建立的所有事情,而我们的情况则恰恰是 MySQL 建链过程产生了永久性阻塞。因此无论后面怎么获取连接,新来的建链任务都会一直排队等待,这便导致了业务上一直没有连接可用。

下面这个图说明了 hikariCP 的建链过程:

好了,让我们在回顾一下前面关于可靠性测试的场景:

首先,MySQL 主实例发生故障,而紧接着 hikariCP 则检测到了坏的连接(connection is dead)并将其释放,在释放关闭连接的同时又发现连接数需要补充,进而立即触发了新的建链请求。而问题就刚好出在这一次建链请求上,TCP 握手的部分是成功了(客户端和 MySQL VM 上 nodePort 的完成连接),但在接下来由于当前的 MySQL 容器已经停止(此时 VIP 也切换到了另一台 MySQL 实例上),因此客户端再也无法获得原 MySQL 实例的握手包响应(该握手属于MySQL应用层的协议),此时便陷入了长时间的阻塞式 socketRead 操作。而建链请求任务恰恰好采用了单线程运作,进一步则导致了所有业务的阻塞。

三、解决方案

在了解了事情的来龙去脉之后,我们主要考虑从两方面进行优化:

1)优化一,增加 HirakiPool 中 AddConnectionExecutor 线程的数量,这样即使第一个线程出现挂死,还有其他的线程能参与建链任务的分配。

2)优化二,出问题的 socketRead 是一种同步阻塞式的调用,可通过 SO_TIMEOUT 来避免长时间挂死。

对于优化点一,我们一致认为用处并不大,如果连接出现了挂死那么相当于线程资源已经泄露,对服务后续的稳定运行十分不利,而且 hikariCP 在这里也已经将其写死了。因此关键的方案还是避免阻塞式的调用。

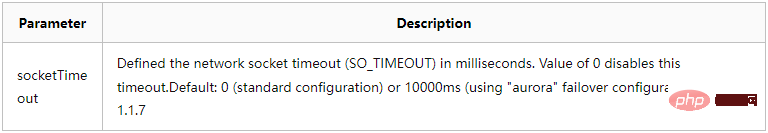

查阅了 mariadb-java-client 官方文档后,发现可以在 JDBC URL 中指定网络IO 的超时参数,如下:

如描述所说的,socketTimeout 可以设置 socket 的 SO_TIMEOUT 属性,从而达到控制超时时间的目的。默认是 0,即不超时。

我们在 MySQL JDBC URL 中加入了相关的参数,如下:

spring.datasource.url=jdbc:mysql://10.0.71.13:33052/appdb?socketTimeout=60000&cnotallow=30000&serverTimeznotallow=UTC

此后对 MySQL 可靠性场景进行多次验证,发现连接挂死的现象已经不再出现,此时问题得到解决。

四、小结

本次分享了一次关于 MySQL 连接挂死问题排查的心路历程,由于环境搭建的工作量巨大,而且该问题复现存在偶然性,整个分析过程还是有些坎坷的(其中也踩了坑)。

的确,我们很容易被一些表面的现象所迷惑,而觉得问题很难解决时,更容易带着偏向性思维去处理问题。

例如本例中曾一致认为连接池出现了问题,但实际上却是由于 MySQL JDBC 驱动(mariadb driver)的一个不严谨的配置所导致。

从原则上讲,应该避免一切可能导致资源挂死的行为。

The above is the detailed content of MySQL connection hangs unexpectedly, but it is not the fault of the connection pool.... For more information, please follow other related articles on the PHP Chinese website!

Related articles

See more- Technology trends to watch in 2023

- How Artificial Intelligence is Bringing New Everyday Work to Data Center Teams

- Can artificial intelligence or automation solve the problem of low energy efficiency in buildings?

- OpenAI co-founder interviewed by Huang Renxun: GPT-4's reasoning capabilities have not yet reached expectations

- Microsoft's Bing surpasses Google in search traffic thanks to OpenAI technology