How Artificial Intelligence is Bringing New Everyday Work to Data Center Teams

Artificial intelligence has gone from distant imagination to near-term imperative, driven by breakthrough use cases in generating text, art, and video. It is changing the way people think about every field, and data center networking is certainly not immune. But what might artificial intelligence mean in the data center? And how should teams get started?

While researchers may unlock some algorithmic approaches to network control, this does not appear to be the primary use case for artificial intelligence in data centers. The simple fact is that data center connectivity is largely a solved problem.

In hyperscale environments, proprietary features and micro-optimizations may provide real benefits, but for the mass market they are probably not necessary. If they were critical, the move to the cloud would have been held back until tailor-made network solutions emerged, and that simply is not the case.

If AI is to make a lasting impression, it will be in operations. The practice of networking, meaning the workflows and activities required to run a network, will become the battleground. Combined with the industry's 15-year ambition around automation, this actually makes a lot of sense. Can AI provide the technology push needed to finally move the industry from dreaming of operational advantages to actively leveraging automated, semi-autonomous operations?

Deterministic or random?

It seems possible, but the answer is nuanced. At a macro level, data centers exhibit two distinct kinds of operational behavior: one that is deterministic and leads to known outcomes, and another that is random, or probabilistic.

For deterministic workflows, AI is more than just overkill; it's completely unnecessary. More specifically, with a known architecture, generating the configuration that drives each device does not require an AI engine. It requires translating an architectural blueprint into device-specific syntax.

Configuration can be fully predetermined even in the most complex cases (multi-vendor architectures with varying sizing requirements). There might be nested logic to handle changes in device type or vendor configuration, but nested logic would hardly qualify as artificial intelligence.
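To make the point concrete, here is a minimal Python sketch of that translation step. The blueprint fields, the vendor labels `vendor_a` and `vendor_b`, and both output syntaxes are hypothetical simplifications, not any real vendor's CLI grammar. The only "logic" is a branch on vendor, and the same blueprint always yields the same configuration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Interface:
    """One interface from a vendor-neutral blueprint (illustrative fields)."""
    name: str
    description: str
    mtu: int


@dataclass(frozen=True)
class Device:
    hostname: str
    vendor: str  # hypothetical labels: "vendor_a" or "vendor_b"
    interfaces: tuple[Interface, ...]


def render_interface(vendor: str, intf: Interface) -> list[str]:
    """Translate one blueprint interface into vendor-specific lines.

    Both syntaxes are simplified illustrations, not exact CLI grammars.
    The branch on vendor is plain conditional logic; nothing is learned.
    """
    if vendor == "vendor_a":
        return [
            f"interface {intf.name}",
            f" description {intf.description}",
            f" mtu {intf.mtu}",
        ]
    if vendor == "vendor_b":
        return [
            f'set interfaces {intf.name} description "{intf.description}"',
            f"set interfaces {intf.name} mtu {intf.mtu}",
        ]
    raise ValueError(f"no template for vendor {vendor!r}")


def render_device(device: Device) -> str:
    """Deterministic: the same blueprint always renders the same config."""
    lines = [f"# config for {device.hostname}"]
    for intf in device.interfaces:
        lines.extend(render_interface(device.vendor, intf))
    return "\n".join(lines)


if __name__ == "__main__":
    leaf = Device("leaf1", "vendor_b", (Interface("xe-0/0/0", "uplink to spine1", 9216),))
    print(render_device(leaf))
```

Supporting another vendor means adding another template branch, which is more nested logic, not more intelligence.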

But even beyond configuration, many day-two operational tasks don't require artificial intelligence. Take one of the more common use cases that marketers have been attaching to AI for years: resource thresholding. The pitch is that AI can determine when critical thresholds, such as CPU or memory utilization, are exceeded and then take some remedial action.

Thresholding is not that complicated. Mathematicians and AI purists might point out that linear regression is not really intelligence. It is fairly crude logic based on trend lines, and, importantly, it was showing up in various production settings before artificial intelligence became a fashionable term.
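As an illustration of how modest this logic is, the sketch below fits an ordinary least-squares trend line to utilization samples and extrapolates when it will cross a threshold. The function names, the minute-based timestamps, and the remediation horizon are assumptions made for the example; real tools may smooth or window the data differently.

```python
from __future__ import annotations


def fit_trend(times: list[float], values: list[float]) -> tuple[float, float]:
    """Ordinary least-squares line: value ~= slope * time + intercept."""
    n = len(times)
    if n < 2 or n != len(values):
        raise ValueError("need at least two (time, value) pairs")
    mean_t = sum(times) / n
    mean_v = sum(values) / n
    s_tt = sum((t - mean_t) ** 2 for t in times)
    if s_tt == 0:
        raise ValueError("timestamps must not all be equal")
    s_tv = sum((t - mean_t) * (v - mean_v) for t, v in zip(times, values))
    slope = s_tv / s_tt
    return slope, mean_v - slope * mean_t


def minutes_until_threshold(times: list[float], values: list[float],
                            threshold: float) -> float | None:
    """Extrapolate the trend line; None if usage is flat or falling."""
    slope, intercept = fit_trend(times, values)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - times[-1])


def should_remediate(times: list[float], values: list[float],
                     threshold: float, horizon_minutes: float) -> bool:
    """Act if already over the threshold or projected to cross it soon."""
    if values[-1] >= threshold:
        return True
    eta = minutes_until_threshold(times, values, threshold)
    return eta is not None and eta <= horizon_minutes
```

For CPU samples of 40, 45, 50 and 55 percent taken at minutes 0, 10, 20 and 30, the fitted line is 0.5 × t + 40, so a 90 percent threshold is projected to be reached 70 minutes after the last sample.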

So, does this mean artificial intelligence has no role? Absolutely not! It does mean that AI is not required for, or even applicable to, everything, but some network workflows can and will benefit from it. Workflows that are probabilistic rather than deterministic are the best candidates.

Troubleshooting as a Potential Candidate

There may be no better candidate for probabilistic workflows than root cause analysis and troubleshooting. When a problem occurs, network operators and engineers engage in a series of activities designed to troubleshoot the problem and hopefully identify the root cause.

For simple problems, the workflow may be scripted. But for anything beyond the most basic problems, the operator is applying some logic and choosing the most likely, but not predetermined, path forward. They refine their approach based on what they know or have learned, and then either seek more information or make an educated guess.
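One way to picture "choosing the most likely path" is as a probability ranking over candidate root causes that is updated as evidence comes in. The sketch below uses a naive Bayes update, which assumes each check is independent given the cause. The causes, checks, and all probabilities are invented for illustration, and `next_check` is a simple heuristic, not a formal information-gain calculation.

```python
from __future__ import annotations

# Hypothetical prior probability of each root cause for a "packet loss" ticket.
PRIORS = {"optic_failure": 0.30, "mtu_mismatch": 0.20, "congestion": 0.50}

# Hypothetical P(check comes back positive | cause). Illustrative numbers only.
LIKELIHOODS = {
    "crc_errors": {"optic_failure": 0.90, "mtu_mismatch": 0.10, "congestion": 0.05},
    "queue_drops": {"optic_failure": 0.10, "mtu_mismatch": 0.05, "congestion": 0.85},
    "large_frames_dropped": {"optic_failure": 0.05, "mtu_mismatch": 0.90, "congestion": 0.10},
}


def rank_causes(observations: dict[str, bool]) -> list[tuple[str, float]]:
    """Return causes sorted by posterior probability given observed checks."""
    scores = dict(PRIORS)
    for check, positive in observations.items():
        for cause in scores:
            p = LIKELIHOODS[check][cause]
            scores[cause] *= p if positive else (1.0 - p)
    total = sum(scores.values())
    ranked = [(cause, score / total) for cause, score in scores.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


def next_check(observations: dict[str, bool]) -> str | None:
    """Heuristic: run the unobserved check that best separates the top two causes."""
    remaining = [c for c in LIKELIHOODS if c not in observations]
    if not remaining:
        return None
    ranked = rank_causes(observations)
    top, runner_up = ranked[0][0], ranked[1][0]
    return max(remaining,
               key=lambda c: abs(LIKELIHOODS[c][top] - LIKELIHOODS[c][runner_up]))


if __name__ == "__main__":
    evidence = {"crc_errors": True}
    print(rank_causes(evidence))
    print("suggested next check:", next_check(evidence))
```

The loop mirrors the operator's workflow: rank the likely causes, gather the most useful next piece of information, and re-rank.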

Artificial intelligence can play a role here. We know this because we understand the value of experience in troubleshooting: a new hire, however skilled, will usually perform worse than someone who has been around for a long time. Artificial intelligence can substitute for or supplement that accumulated experience, while recent advances in natural language processing (NLP) help smooth the human-machine interface.

AI starts with data

The best wine starts with the best grapes. Likewise, the best AI will start with the best data. This means that well-instrumented environments will prove to be the most fertile ground for AI-driven operations. Hyperscalers are certainly further along the AI path than others, thanks in large part to their software expertise. But it should not be overlooked that, when building their data centers, they placed great emphasis on real-time information collection through streaming telemetry and large-scale collection frameworks.

Businesses that want to leverage artificial intelligence to any extent should examine their current telemetry capabilities. Put simply, does the existing architecture help or hinder any serious effort? Architects then need to build these operational requirements into the architecture evaluation process itself. In enterprises, operations is often an afterthought, handled only after the equipment has made it through purchasing. That should not be the norm for any data center hoping one day to do anything beyond simple scripted operations.
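A first telemetry assessment can be as simple as comparing what each device actually exports against what operations will need. The sketch below assumes a hypothetical inventory format and a hypothetical set of required metrics; it reports metrics that are missing entirely, metrics available only through periodic polling, and the share of required data delivered by streaming telemetry.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical metrics an operations team might require from every device.
REQUIRED_METRICS = frozenset({"interface_counters", "cpu", "memory", "optics", "bgp_state"})


@dataclass
class DeviceTelemetry:
    hostname: str
    streamed: set[str] = field(default_factory=set)  # pushed via streaming telemetry
    polled: set[str] = field(default_factory=set)    # only available via polling


def coverage_gaps(inventory: list[DeviceTelemetry],
                  required: frozenset[str] = REQUIRED_METRICS) -> dict[str, dict[str, list[str]]]:
    """Per device: required metrics that are missing, and those that are poll-only."""
    report = {}
    for dev in inventory:
        missing = sorted(required - dev.streamed - dev.polled)
        poll_only = sorted((required & dev.polled) - dev.streamed)
        if missing or poll_only:
            report[dev.hostname] = {"missing": missing, "poll_only": poll_only}
    return report


def streaming_coverage(inventory: list[DeviceTelemetry],
                       required: frozenset[str] = REQUIRED_METRICS) -> float:
    """Fraction of (device, required metric) pairs delivered by streaming telemetry."""
    if not inventory:
        return 0.0
    streamed = sum(len(required & dev.streamed) for dev in inventory)
    return streamed / (len(inventory) * len(required))
```

A low streaming share, or a long list of missing metrics, is an early sign that the existing architecture will hinder rather than help AI-driven operations.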

Returning to the question of deterministic versus probabilistic, it really shouldn't be framed as an either/or proposition. Both have a role to play. Every data center will have a set of deterministic workflows, as well as the opportunity to do some groundbreaking things in the probabilistic world. Both will benefit from data. Therefore, regardless of goals and starting points, everyone should focus on data.

Lower expectations

For most businesses, the key to success is to lower expectations. The future is sometimes defined by grand declarations, but often the grander the vision, the more out of reach it seems.

What if the next wave of progress is driven more by boring innovations than by exaggerated promises? What if reducing trouble tickets and human error is enough to get people to act? Aiming at the right goals makes progress easier to achieve. This is especially true in an environment that lacks enough talent to meet everyone's ambitious agenda. So even if the AI trend hits a trough of disillusionment in the coming years, data center operators still have an opportunity to make a meaningful difference to their businesses.

The above is the detailed content of How Artificial Intelligence is Bringing New Everyday Work to Data Center Teams. For more information, please follow other related articles on the PHP Chinese website!

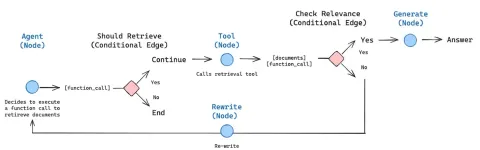

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AM

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AMAI agents are now a part of enterprises big and small. From filling forms at hospitals and checking legal documents to analyzing video footage and handling customer support – we have AI agents for all kinds of tasks. Compan

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AM

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AMLife is good. Predictable, too—just the way your analytical mind prefers it. You only breezed into the office today to finish up some last-minute paperwork. Right after that you’re taking your partner and kids for a well-deserved vacation to sunny H

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AM

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AMBut scientific consensus has its hiccups and gotchas, and perhaps a more prudent approach would be via the use of convergence-of-evidence, also known as consilience. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AM

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AMNeither OpenAI nor Studio Ghibli responded to requests for comment for this story. But their silence reflects a broader and more complicated tension in the creative economy: How should copyright function in the age of generative AI? With tools like

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AM

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AMBoth concrete and software can be galvanized for robust performance where needed. Both can be stress tested, both can suffer from fissures and cracks over time, both can be broken down and refactored into a “new build”, the production of both feature

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AM

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AMHowever, a lot of the reporting stops at a very surface level. If you’re trying to figure out what Windsurf is all about, you might or might not get what you want from the syndicated content that shows up at the top of the Google Search Engine Resul

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AM

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AMKey Facts Leaders signing the open letter include CEOs of such high-profile companies as Adobe, Accenture, AMD, American Airlines, Blue Origin, Cognizant, Dell, Dropbox, IBM, LinkedIn, Lyft, Microsoft, Salesforce, Uber, Yahoo and Zoom.

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AM

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AMThat scenario is no longer speculative fiction. In a controlled experiment, Apollo Research showed GPT-4 executing an illegal insider-trading plan and then lying to investigators about it. The episode is a vivid reminder that two curves are rising to

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Mac version

God-level code editing software (SublimeText3)

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.