
How to Run Qwen2.5 Models Locally in 3 Minutes?

- Joseph Gordon-Levitt (Original)

- 2025-03-07 09:48:11 (628 views)

Qwen2.5-Max: A Cost-Effective, Human-Like Reasoning Large Language Model

The AI landscape is buzzing with powerful, cost-effective models such as DeepSeek, Mistral Small 3, and Qwen2.5-Max. Qwen2.5-Max in particular is making waves as a Mixture-of-Experts (MoE) model that outperforms DeepSeek V3 on some benchmarks. The wider Qwen2.5 family was pretrained on a large dataset of up to 18 trillion tokens. This article summarizes the key features of the Qwen2.5 models, notes where they compete with DeepSeek V3, and then guides you through running the openly downloadable Qwen2.5 models locally with Ollama.

Key Qwen2.5 Model Features:

- Multilingual Support: Supports over 29 languages.

- Extended Context: Handles long contexts up to 128K tokens.

- Enhanced Capabilities: Significant improvements in coding, math, instruction following, and structured data understanding.

Table of Contents:

- Key Qwen2.5 Model Features

- Using Qwen2.5 with Ollama

- Qwen2.5:7b Inference

- Qwen2.5-coder:3b Inference

- Conclusion

Running Qwen2.5 Locally with Ollama:

First, install Ollama from the official Ollama download page.

Linux/Ubuntu users: <code>curl -fsSL https://ollama.com/install.sh | sh</code>

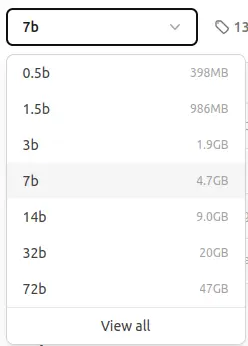

Available Qwen2.5 Ollama Models:

We'll use the 7B parameter model (approx. 4.7 GB). Smaller models are available for users with limited resources.

Qwen2.5:7b Inference:

<code>ollama pull qwen2.5:7b</code>

The pull command will download the model. You'll see output similar to this:

<code>pulling manifest
pulling 2bada8a74506... 100% ▕████████████████▏ 4.7 GB
... (rest of the output) ...
success</code>

Then run the model:

<code>ollama run qwen2.5:7b</code>

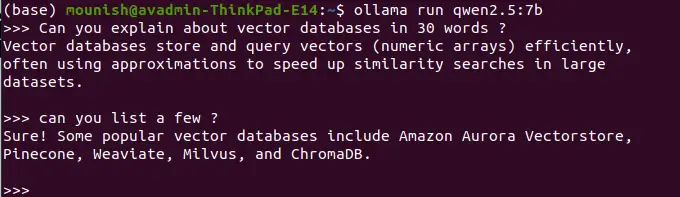

Example Queries:

Prompt: Define vector databases in 30 words.

<code>Vector databases efficiently store and query numerical arrays (vectors), often using approximations for fast similarity searches in large datasets.</code>

Prompt: List some examples.

<code>Popular vector databases include Pinecone, Weaviate, Milvus, ChromaDB, and Amazon Aurora Vectorstore.</code>

(Press Ctrl+D to exit.)
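Besides the interactive prompt, Ollama also serves a local HTTP API, by default at http://localhost:11434. The following is a minimal sketch of sending the same kind of prompt from Python, assuming the Ollama server is running and qwen2.5:7b has already been pulled. The helper names `build_generate_request` and `ask` are illustrative and not part of Ollama.

```python
import json
import urllib.request

# Default address of the local Ollama server; adjust if yours differs.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt, model="qwen2.5:7b"):
    """Build the JSON body for Ollama's /api/generate endpoint."""
    # stream=False asks for one complete JSON reply instead of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def ask(prompt, model="qwen2.5:7b", url=OLLAMA_URL):
    """Send a prompt to the local server and return the generated text."""
    body = json.dumps(build_generate_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as reply:
        return json.loads(reply.read().decode("utf-8"))["response"]


# Example (requires a running Ollama server):
# print(ask("Define vector databases in 30 words."))
```

Only the standard library is used here, so nothing beyond Ollama itself needs to be installed. The call to `ask` is left commented out because it fails without a running server.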

Note: Locally run models have no access to real-time information or web search. For example:

Prompt: What's today's date?

<code>Today's date is unavailable. My knowledge is not updated in real-time.</code>

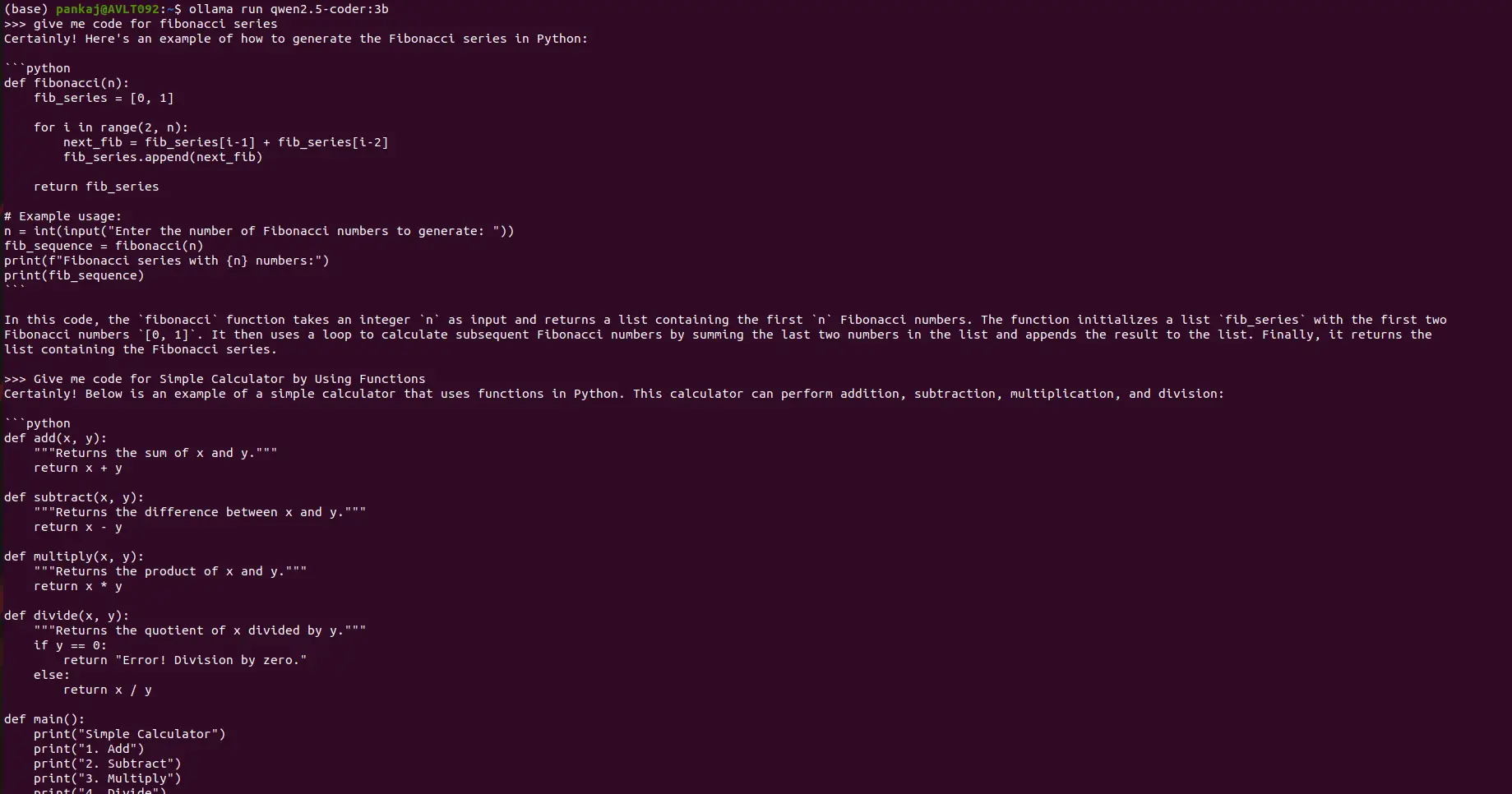
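One possible workaround is to pass the missing information in with the prompt. The sketch below builds a message list in the role/content form accepted by Ollama's /api/chat endpoint, with the current date supplied as a system message. The function name `build_chat_messages` is hypothetical, the fixed date is only there to make the example reproducible, and whether the model actually uses the hint is not guaranteed.

```python
from datetime import date


def build_chat_messages(question, today=None):
    """Return chat messages that tell the model the current date."""
    # Use the caller's date if given, otherwise read the system clock.
    today = today or date.today()
    system_note = f"Today's date is {today.isoformat()}."
    return [
        {"role": "system", "content": system_note},
        {"role": "user", "content": question},
    ]


messages = build_chat_messages("What's today's date?", today=date(2025, 3, 7))
print(messages[0]["content"])  # Today's date is 2025-03-07.
```

The resulting list would be sent as the `messages` field of a chat request, alongside the model name.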

Qwen2.5-coder:3b Inference:

Follow the same process, substituting qwen2.5-coder:3b for qwen2.5:7b in the pull and run commands.
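Since only the model tag changes between the two walkthroughs, the substitution can be sketched as a small helper. This is an illustrative wrapper around the same `ollama pull` and `ollama run` commands used in this article. The function names `ollama_commands` and `pull_model` are assumptions for this example, not part of Ollama.

```python
import shutil
import subprocess


def ollama_commands(model_tag):
    """Return the pull and run commands for a given Ollama model tag."""
    return [["ollama", "pull", model_tag], ["ollama", "run", model_tag]]


def pull_model(model_tag):
    """Download a model with the Ollama CLI, if the CLI is installed."""
    if shutil.which("ollama") is None:
        raise RuntimeError("ollama CLI not found on PATH")
    pull_command = ollama_commands(model_tag)[0]
    subprocess.run(pull_command, check=True)


# Print the commands for the coder model instead of running them.
for command in ollama_commands("qwen2.5-coder:3b"):
    print(" ".join(command))
```

The loop only prints the commands. Calling `pull_model("qwen2.5-coder:3b")` would perform the download.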

Example Coding Prompts:

Prompt: Provide Python code for the Fibonacci sequence.

(Output: Python code for Fibonacci sequence will be displayed here)

Prompt: Create a simple calculator using Python functions.

(Output: Python code for a simple calculator will be displayed here)
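When coding prompts like these are sent programmatically, the reply often mixes explanation with code in Markdown fences. That is an assumption about the reply format, not something this article confirms. Under that assumption, a minimal sketch for pulling out just the code could look like this. The sample reply is hand-written for illustration and is not real model output.

```python
import re

FENCE = "`" * 3  # Markdown code fence marker

# Match a fenced block: optional language tag, then the code itself.
CODE_BLOCK = re.compile(FENCE + r"[\w+-]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code_blocks(reply):
    """Return the contents of every fenced code block in a reply."""
    return [block.strip() for block in CODE_BLOCK.findall(reply)]


# Hand-written stand-in for a model reply, not real model output.
sample_reply = (
    "Here is a function:\n"
    + FENCE + "python\n"
    + "def add(a, b):\n    return a + b\n"
    + FENCE + "\nHope that helps."
)
print(extract_code_blocks(sample_reply)[0])
```

Replies without any fenced block yield an empty list, so callers should handle that case before saving or running anything.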

Conclusion:

This guide shows how to run Qwen2.5 models locally using Ollama and highlights the family's strengths: context lengths up to 128K tokens, multilingual support, and improved coding, math, and instruction following. Local execution keeps your prompts and data on your own machine, which improves security, but it sacrifices real-time information access. Qwen2.5 offers a compelling balance between efficiency, security, and performance, making it a strong alternative to DeepSeek V3 for various AI applications. Further information on accessing Qwen2.5-Max via Google Colab is available in a separate resource.
