Technology peripherals

Technology peripherals AI

AI To protect customer privacy, run open source AI models locally using Ruby

To protect customer privacy, run open source AI models locally using RubyTo protect customer privacy, run open source AI models locally using Ruby

Translator| Chen Jun

##Reviewer| Chonglou

Recently, we implemented a customized artificial intelligence (AI) project. Given that Party A holds very sensitive customer information, for security reasons we cannot pass it to OpenAI or other proprietary models. Therefore, we downloaded and ran an open source AI model in an AWS virtual machine, keeping it completely under our control. At the same time, Rails applications can make API calls to AI in a safe environment. Of course, if security issues do not have to be considered, we would prefer to cooperate directly with OpenAI.

Next, I will share with you how to download the open source AI model locally, let it run, and how to Run the Ruby script against it.

Why customize?

#The motivation for this project is simple: data security. When handling sensitive customer information, the most reliable approach is usually to do it within the company. Therefore, we need customized AI models to play a role in providing a higher level of security control and privacy protection.

Open source model

In the past6In the past few months, products such as: Mistral, Mixtral and Lama# have appeared on the market. ## and many other open source AI models. Although they are not as powerful as GPT-4, the performance of many of them has exceeded GPT-3.5, and with the As time goes by, they will become stronger and stronger. Of course, which model you choose depends entirely on your processing capabilities and what you need to achieve.

#Since we will be running the AI model locally, a size of approximately4GB was chosen Mistral. It outperforms GPT-3.5 on most metrics. Although Mixtral performs better than Mistral, it is a bulky model requiring at least 48GBMemory can run.

ParametersTalking about large language models (

LLM ), we often consider mentioning their parameter sizes. Here, the Mistral model we will run locally is a 70# model with 70 million parameters (of course, Mixtral has 700 billion parameters, while GPT-3.5 has approximately 1750 billion parameters).

# Typically, large language models use neural network-based techniques. Neural networks are made up of neurons, and each neuron is connected to all other neurons in the next layer.

The purpose of the neural network is to "learn" an advanced algorithm, a pattern matching algorithm. By being trained on large amounts of text, it will gradually learn the ability to predict text patterns and respond meaningfully to the cues we give it. Simply put, parameters are the number of weights and biases in the model. It gives us an idea of how many neurons there are in a neural network. For example, for a model with 70 billion parameters, there are approximately 100 layers, each with thousands of neurons .

Run the model locally

To run the open source model locally, you must first download it Related applications. While there are many options on the market, the one I find easiest and easiest to run on IntelMac is Ollama.

Although Ollama is currently only available on Mac and It runs on Linux, but it will also run on Windows in the future. Of course, you can use WSL (Windows Subsystem for Linux) to run Linux shell# on Windows ##.

Ollama not only allows you to download and run a variety of open source models, but also opens the model on a local port, allowing you to Ruby code makes API calls. This makes it easier for Ruby developers to write Ruby applications that can be integrated with local models.

GetOllama

Since Ollama is mainly based on the command line , so installing Ollama on Mac# and Linux systems is very simple. You just need to download Ollama through the linkhttps://www.php.cn/link/04c7f37f2420f0532d7f0e062ff2d5b5, spend5 It will take about a minute to install the software package and then run the model.

After you have Ollama set up and running, you will see the Ollama icon in your browser's taskbar. This means it is running in the background and can run your model. In order to download the model, you can open a terminal and run the following command:

##ollama run mistral

Since Mistral is approximately 4GB, it will take some time for you to complete the download. Once the download is complete, it will automatically open the Ollama prompt for you to interact and communicate with Mistral.

#Next time you run it through Ollamamistral, you can run the corresponding model directly.

Customized model

##Similar to what we have inOpenAI## Create a customized GPT in #. Through Ollama, you can customize the basic model. Here we can simply create a custom model. For more detailed cases, please refer to Ollama's online documentation. First, you can create a Modelfile (model file) and add the following text in it:

FROM mistral# Set the temperature set the randomness or creativity of the responsePARAMETER temperature 0.3# Set the system messageSYSTEM ”””You are an excerpt Ruby developer. You will be asked questions about the Ruby Programminglanguage. You will provide an explanation along with code examples.”””

Next, you can run the following command on the terminal to create a new model:

ollama create

Ruby. ollama create ruby -f './Modelfile'

ollama list

At this point you can use Run the custom model with the following command:

Integrated with Ruby

Although Ollama does not yet have a dedicated gem, butRuby Developers can interact with models using basic HTTP request methods. Ollama running in the background can open the model through the 11434 port, so you can open it via "https://www.php.cn/link/ dcd3f83c96576c0fd437286a1ff6f1f0" to access it. Additionally, the documentation for OllamaAPI also provides different endpoints for basic commands such as chat conversations and creating embeds.

In this project case, we want to use the /api/chat endpoint to send prompts to the AI model. The image below shows some basic Ruby code for interacting with the model:

The functions of the above Ruby code snippet include:

- Via "net/http", "uri" and "json" three libraries respectively execute HTTP requests, parse URI and process JSONData.

- Create an endpoint address containing API(https://www.php. URI object of cn/link/dcd3f83c96576c0fd437286a1ff6f1f0/api/chat).

- Use Net::HTTP::Post.new## with URI as parameter #Method to create a new HTTP POST request.

- The request body is set to a JSON string that represents the hash value. The hash contains three keys: "model", "message" and "stream". Among them, the

- model key is set to "ruby", which is our model;

- The message key is set to an array containing a single hash value representing the user message;

- And the stream key is set to false.

- #How the system guides the model to respond to the information. We have already set it in the Modelfile.

- # User information is our standard prompt.

- #The model will respond with auxiliary information.

- Message hashing follows a pattern that intersects with AI models. It comes with a character and content. Roles here can be System, User, and Auxiliary. Among them, the

- HTTP request is sent using the Net::HTTP.start method . This method opens a network connection to the specified hostname and port and then sends the request. The connection's read timeout is set to 120 seconds, after all I'm running a 2019 Intel Mac, so the response speed may be a bit slow. This will not be a problem when running on the corresponding AWS server.

- The server's response is stored in the "response" variable.

Case Summary

As mentioned above, running the local AI model The real value lies in assisting companies holding sensitive data, processing unstructured data such as emails or documents, and extracting valuable structured information. In the project case we participated in, we conducted model training on all customer information in the customer relationship management (CRM) system. From this, users can ask any questions they have about their customers without having to sift through hundreds of records.

Translator introduction

Julian Chen ), 51CTO community editor, has more than ten years of experience in IT project implementation, is good at managing and controlling internal and external resources and risks, and focuses on disseminating network and information security knowledge and experience.

Original title: ##How To Run Open-Source AI Models Locally With Ruby By Kane Hooper

The above is the detailed content of To protect customer privacy, run open source AI models locally using Ruby. For more information, please follow other related articles on the PHP Chinese website!

7 Powerful AI Prompts Every Project Manager Needs To Master NowMay 08, 2025 am 11:39 AM

7 Powerful AI Prompts Every Project Manager Needs To Master NowMay 08, 2025 am 11:39 AMGenerative AI, exemplified by chatbots like ChatGPT, offers project managers powerful tools to streamline workflows and ensure projects stay on schedule and within budget. However, effective use hinges on crafting the right prompts. Precise, detail

Defining The Ill-Defined Meaning Of Elusive AGI Via The Helpful Assistance Of AI ItselfMay 08, 2025 am 11:37 AM

Defining The Ill-Defined Meaning Of Elusive AGI Via The Helpful Assistance Of AI ItselfMay 08, 2025 am 11:37 AMThe challenge of defining Artificial General Intelligence (AGI) is significant. Claims of AGI progress often lack a clear benchmark, with definitions tailored to fit pre-determined research directions. This article explores a novel approach to defin

IBM Think 2025 Showcases Watsonx.data's Role In Generative AIMay 08, 2025 am 11:32 AM

IBM Think 2025 Showcases Watsonx.data's Role In Generative AIMay 08, 2025 am 11:32 AMIBM Watsonx.data: Streamlining the Enterprise AI Data Stack IBM positions watsonx.data as a pivotal platform for enterprises aiming to accelerate the delivery of precise and scalable generative AI solutions. This is achieved by simplifying the compl

The Rise of the Humanoid Robotic Machines Is Nearing.May 08, 2025 am 11:29 AM

The Rise of the Humanoid Robotic Machines Is Nearing.May 08, 2025 am 11:29 AMThe rapid advancements in robotics, fueled by breakthroughs in AI and materials science, are poised to usher in a new era of humanoid robots. For years, industrial automation has been the primary focus, but the capabilities of robots are rapidly exp

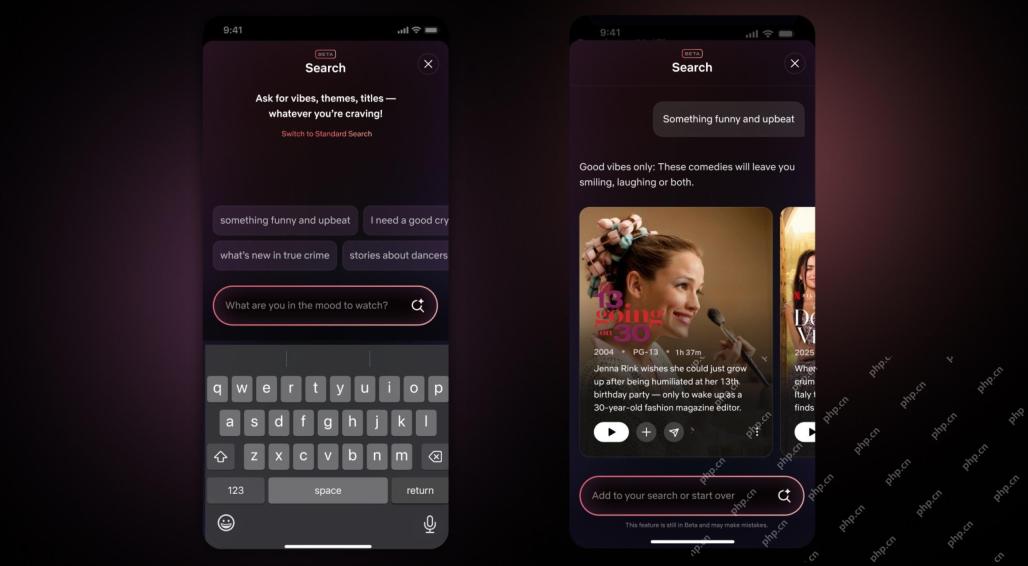

Netflix Revamps Interface — Debuting AI Search Tools And TikTok-Like DesignMay 08, 2025 am 11:25 AM

Netflix Revamps Interface — Debuting AI Search Tools And TikTok-Like DesignMay 08, 2025 am 11:25 AMThe biggest update of Netflix interface in a decade: smarter, more personalized, embracing diverse content Netflix announced its largest revamp of its user interface in a decade, not only a new look, but also adds more information about each show, and introduces smarter AI search tools that can understand vague concepts such as "ambient" and more flexible structures to better demonstrate the company's interest in emerging video games, live events, sports events and other new types of content. To keep up with the trend, the new vertical video component on mobile will make it easier for fans to scroll through trailers and clips, watch the full show or share content with others. This reminds you of the infinite scrolling and very successful short video website Ti

Long Before AGI: Three AI Milestones That Will Challenge YouMay 08, 2025 am 11:24 AM

Long Before AGI: Three AI Milestones That Will Challenge YouMay 08, 2025 am 11:24 AMThe growing discussion of general intelligence (AGI) in artificial intelligence has prompted many to think about what happens when artificial intelligence surpasses human intelligence. Whether this moment is close or far away depends on who you ask, but I don’t think it’s the most important milestone we should focus on. Which earlier AI milestones will affect everyone? What milestones have been achieved? Here are three things I think have happened. Artificial intelligence surpasses human weaknesses In the 2022 movie "Social Dilemma", Tristan Harris of the Center for Humane Technology pointed out that artificial intelligence has surpassed human weaknesses. What does this mean? This means that artificial intelligence has been able to use humans

Venkat Achanta On TransUnion's Platform Transformation And AI AmbitionMay 08, 2025 am 11:23 AM

Venkat Achanta On TransUnion's Platform Transformation And AI AmbitionMay 08, 2025 am 11:23 AMTransUnion's CTO, Ranganath Achanta, spearheaded a significant technological transformation since joining the company following its Neustar acquisition in late 2021. His leadership of over 7,000 associates across various departments has focused on u

When Trust In AI Leaps Up, Productivity FollowsMay 08, 2025 am 11:11 AM

When Trust In AI Leaps Up, Productivity FollowsMay 08, 2025 am 11:11 AMBuilding trust is paramount for successful AI adoption in business. This is especially true given the human element within business processes. Employees, like anyone else, harbor concerns about AI and its implementation. Deloitte researchers are sc

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

SublimeText3 English version

Recommended: Win version, supports code prompts!

Atom editor mac version download

The most popular open source editor

Notepad++7.3.1

Easy-to-use and free code editor

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.