The principle and application of rejection sampling in large model training

Rejection sampling is a common technique in the training of large language models. It draws candidate samples and keeps only those consistent with the probability density function of the target distribution, so that the retained samples fit that distribution. In this setting, the purpose of rejection sampling is to increase the diversity of the training data and thereby improve the generalization ability of the model. The method is particularly important in language model training because it helps the model learn richer and more accurate language expressions. With rejection sampling, the model can be exposed to text from different perspectives and in different styles, making it more adaptable and creative. As a result, the model can predict the next word or phrase more accurately when processing various types of text, which improves overall generation quality. Rejection sampling can also help alleviate some problems that arise during training.

The basic idea of rejection sampling is to generate candidate samples from an auxiliary distribution and then accept or reject each candidate with a certain probability. Auxiliary distributions are usually simple distributions such as uniform or Gaussian distributions. The probability of accepting a candidate is proportional to the ratio of the target density to the auxiliary density at that point, so candidates from regions that the target distribution favors are accepted more often. If a candidate is accepted, it is kept; otherwise, it is discarded and a new candidate is generated. This method can be used to generate samples that follow a specific probability distribution, which is especially useful when the target distribution is complex or cannot be sampled directly. With rejection sampling, you can obtain a sample set that conforms to the target distribution.
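To make the accept/reject step concrete, here is a minimal Python sketch of classical rejection sampling. The bimodal target density `target_pdf`, the uniform auxiliary distribution on [-4, 4], and the envelope height of 1.1 are illustrative assumptions rather than anything specified in this article. The only requirement is that the envelope is at least as high as the target density everywhere on the interval.

```python
import math
import random


def target_pdf(x):
    """Unnormalized bimodal target density (an assumed toy example)."""
    return math.exp(-0.5 * (x - 1.5) ** 2) + 0.6 * math.exp(-0.5 * (x + 1.5) ** 2)


def rejection_sample(n, rng, low=-4.0, high=4.0, envelope=1.1):
    """Draw n samples from target_pdf using a uniform auxiliary distribution.

    `envelope` is assumed to be >= the maximum of target_pdf on [low, high].
    Returns the accepted samples and the total number of candidates drawn.
    """
    samples = []
    proposals = 0
    while len(samples) < n:
        x = rng.uniform(low, high)       # candidate from the auxiliary distribution
        u = rng.uniform(0.0, envelope)   # uniform height under the envelope
        proposals += 1
        if u < target_pdf(x):            # accept with probability target_pdf(x) / envelope
            samples.append(x)
    return samples, proposals


if __name__ == "__main__":
    rng = random.Random(0)
    samples, proposals = rejection_sample(5000, rng)
    print("acceptance rate:", len(samples) / proposals)
    print("sample mean:", sum(samples) / len(samples))
```

Because the right-hand mode carries more weight in this toy density, the sample mean comes out positive, and the acceptance rate reflects how much of the rectangular envelope lies under the target curve.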

For example, when training a text generation model, we can use rejection sampling to generate sentences that are grammatically correct but differ from the training data, which expands the diversity of the training data. Such an approach can improve the model's generative capabilities, enabling it to produce more creative and varied text.

In principle, we can use an auxiliary distribution, such as an n-gram model or another language model, to generate candidate samples. For example, suppose we adopt a 3-gram model. First, we randomly select a 3-gram from the training data as the starting point. Next, according to the probability distribution of the 3-gram model, we randomly select the next word and append it to the current sequence, repeating this step until the sequence is complete. If the generated sequence is valid under the grammar rules, we accept it; otherwise, we reject it and generate a new one. In this way, we obtain sample sequences that comply with grammatical rules.

For example, suppose the training data contains the following two sentences:

The cat sat on the mat.

The dog chased the cat.

To generate new samples, we can use the 3-gram model. First, we randomly select a 3-gram from the training data as the starting point, such as "The cat sat". Then, according to the probability distribution of the 3-gram model, we randomly select the next word, such as "on". Next, we update the current context to "cat sat on" and repeat these steps until the sentence is complete, accepting it only if it conforms to the grammatical rules. Eventually, we might obtain a new sentence such as "The dog sat on the mat." Note that with only these two training sentences, a strict 3-gram model never observes "dog" followed by "sat", so reaching this particular sentence requires more training data or a smoothed model that assigns some probability to unseen transitions.
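The generate-then-filter procedure described in this example can be sketched in Python as follows. Several things here are assumptions made for illustration: a third training sentence ("The dog sat on the rug.") is added so that the trigram model has overlapping contexts to recombine, generation starts from sentence-initial word pairs rather than from an arbitrary 3-gram, and the `accept` function is a deliberately crude stand-in for a real grammar check (it requires a final period, exactly one verb from a toy lexicon, and a sentence not already in the training data).

```python
import random
from collections import defaultdict

# The first two sentences come from the example; the third is a hypothetical
# addition so the trigram model has overlapping contexts to recombine.
TRAINING = [
    "The cat sat on the mat.",
    "The dog chased the cat.",
    "The dog sat on the rug.",
]
VERBS = {"sat", "chased"}  # toy lexicon used by the stand-in grammar check


def tokenize(sentence):
    """Lowercase the sentence and split the final period into its own token."""
    return sentence.lower().replace(".", " .").split()


def build_trigram_model(sentences):
    """Map each two-word context to the list of words observed after it."""
    model = defaultdict(list)
    starts = []
    for sentence in sentences:
        tokens = tokenize(sentence)
        starts.append(tuple(tokens[:2]))
        for a, b, c in zip(tokens, tokens[1:], tokens[2:]):
            model[(a, b)].append(c)
    return model, starts


def propose(model, starts, rng, max_len=12):
    """Sample one candidate sentence word by word from the trigram model."""
    seq = list(rng.choice(starts))
    while seq[-1] != "." and len(seq) < max_len:
        followers = model.get((seq[-2], seq[-1]))
        if not followers:
            break
        # Duplicates in the list make this draw proportional to observed counts.
        seq.append(rng.choice(followers))
    return seq


def accept(seq, training_tokens):
    """Stand-in grammar check: final period, exactly one verb, not in training data."""
    one_verb = sum(word in VERBS for word in seq) == 1
    return seq[-1] == "." and one_verb and seq not in training_tokens


def generate(n_proposals, seed=0):
    """Propose n_proposals candidates and return the set of accepted sentences."""
    rng = random.Random(seed)
    model, starts = build_trigram_model(TRAINING)
    training_tokens = [tokenize(s) for s in TRAINING]
    accepted = set()
    for _ in range(n_proposals):
        seq = propose(model, starts, rng)
        if accept(seq, training_tokens):
            text = " ".join(seq[:-1]) + "."
            accepted.add(text[0].upper() + text[1:])
    return accepted


if __name__ == "__main__":
    for sentence in sorted(generate(300)):
        print(sentence)
```

With this toy corpus, candidates such as "The cat." (no verb) and "The dog chased the cat sat on the mat." (two verbs) are rejected, while novel recombinations such as "The dog sat on the mat." pass the check. A production pipeline would replace `accept` with a proper parser, classifier, or scoring model.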

Taken together, these examples show that rejection sampling can be used to generate sentences that differ from the training data but are grammatically correct, so that the model can better understand and generate different types of sentences. In addition, rejection sampling can be used to generate sentences that are similar to the training data but have different meanings, helping the model better capture the semantics of the language.

In rejection sampling, choosing an appropriate auxiliary distribution is very important. The auxiliary distribution should be simple enough that samples are easy to generate, yet close enough to the target distribution that the probability of accepting a sample is not too low. In practical applications, commonly used auxiliary distributions include n-gram models, language models, and context-based models.
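The effect of the auxiliary distribution on the acceptance probability can be illustrated with a small numerical experiment. This sketch uses Gaussian distributions instead of language models purely to keep it short: the target is assumed to be a standard normal, and the two auxiliary distributions are zero-mean normals with standard deviations 1.5 (close to the target) and 5.0 (far from it). For this particular setup the density ratio peaks at x = 0 with value equal to the auxiliary standard deviation, so the expected acceptance rate is its reciprocal.

```python
import math
import random


def normal_pdf(x, sigma):
    """Density of a zero-mean normal distribution with standard deviation sigma."""
    return math.exp(-0.5 * (x / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))


def acceptance_rate(proposal_sigma, n_proposals, rng):
    """Estimate the acceptance rate for target N(0, 1) and proposal N(0, proposal_sigma^2).

    Assumes proposal_sigma >= 1, in which case target/proposal is largest
    at x = 0, where it equals proposal_sigma. That value is the bound M.
    """
    m = proposal_sigma
    accepted = 0
    for _ in range(n_proposals):
        x = rng.gauss(0.0, proposal_sigma)
        ratio = normal_pdf(x, 1.0) / (m * normal_pdf(x, proposal_sigma))
        if rng.random() < ratio:
            accepted += 1
    return accepted / n_proposals


if __name__ == "__main__":
    rng = random.Random(0)
    print("close proposal (sigma=1.5):", acceptance_rate(1.5, 20000, rng))
    print("far proposal   (sigma=5.0):", acceptance_rate(5.0, 20000, rng))
```

The closer auxiliary distribution accepts roughly two out of three candidates, while the wider one accepts about one in five, so the same number of accepted samples costs several times more candidates.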

However, rejection sampling still has some problems and challenges. For example, if the probability density function of the target distribution is complex, a simple auxiliary distribution may match it poorly, so most candidates are rejected and sampling becomes inefficient. Furthermore, if the rejection rate is too high, few samples survive, which can reduce the diversity of the training data and weaken the generalization ability of the model. Therefore, the auxiliary distribution and the acceptance criterion need to be tuned carefully in practical applications.

In short, rejection sampling is an important technique in large language model training. It can be used to increase the diversity of training data and improve the generalization ability of the model.


7 Powerful AI Prompts Every Project Manager Needs To Master NowMay 08, 2025 am 11:39 AM

7 Powerful AI Prompts Every Project Manager Needs To Master NowMay 08, 2025 am 11:39 AMGenerative AI, exemplified by chatbots like ChatGPT, offers project managers powerful tools to streamline workflows and ensure projects stay on schedule and within budget. However, effective use hinges on crafting the right prompts. Precise, detail

Defining The Ill-Defined Meaning Of Elusive AGI Via The Helpful Assistance Of AI ItselfMay 08, 2025 am 11:37 AM

Defining The Ill-Defined Meaning Of Elusive AGI Via The Helpful Assistance Of AI ItselfMay 08, 2025 am 11:37 AMThe challenge of defining Artificial General Intelligence (AGI) is significant. Claims of AGI progress often lack a clear benchmark, with definitions tailored to fit pre-determined research directions. This article explores a novel approach to defin

IBM Think 2025 Showcases Watsonx.data's Role In Generative AIMay 08, 2025 am 11:32 AM

IBM Think 2025 Showcases Watsonx.data's Role In Generative AIMay 08, 2025 am 11:32 AMIBM Watsonx.data: Streamlining the Enterprise AI Data Stack IBM positions watsonx.data as a pivotal platform for enterprises aiming to accelerate the delivery of precise and scalable generative AI solutions. This is achieved by simplifying the compl

The Rise of the Humanoid Robotic Machines Is Nearing.May 08, 2025 am 11:29 AM

The Rise of the Humanoid Robotic Machines Is Nearing.May 08, 2025 am 11:29 AMThe rapid advancements in robotics, fueled by breakthroughs in AI and materials science, are poised to usher in a new era of humanoid robots. For years, industrial automation has been the primary focus, but the capabilities of robots are rapidly exp

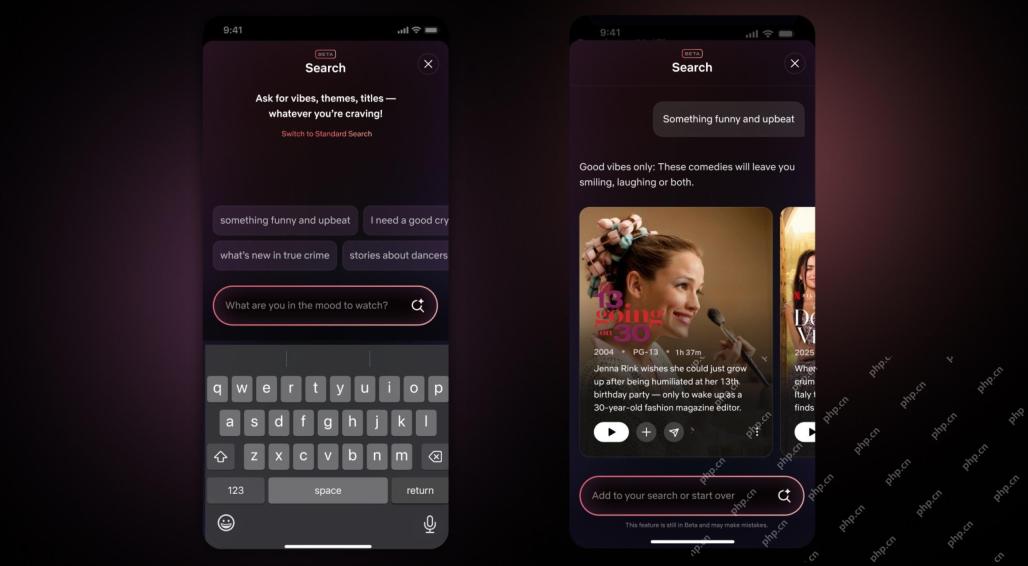

Netflix Revamps Interface — Debuting AI Search Tools And TikTok-Like DesignMay 08, 2025 am 11:25 AM

Netflix Revamps Interface — Debuting AI Search Tools And TikTok-Like DesignMay 08, 2025 am 11:25 AMThe biggest update of Netflix interface in a decade: smarter, more personalized, embracing diverse content Netflix announced its largest revamp of its user interface in a decade, not only a new look, but also adds more information about each show, and introduces smarter AI search tools that can understand vague concepts such as "ambient" and more flexible structures to better demonstrate the company's interest in emerging video games, live events, sports events and other new types of content. To keep up with the trend, the new vertical video component on mobile will make it easier for fans to scroll through trailers and clips, watch the full show or share content with others. This reminds you of the infinite scrolling and very successful short video website Ti

Long Before AGI: Three AI Milestones That Will Challenge YouMay 08, 2025 am 11:24 AM

Long Before AGI: Three AI Milestones That Will Challenge YouMay 08, 2025 am 11:24 AMThe growing discussion of general intelligence (AGI) in artificial intelligence has prompted many to think about what happens when artificial intelligence surpasses human intelligence. Whether this moment is close or far away depends on who you ask, but I don’t think it’s the most important milestone we should focus on. Which earlier AI milestones will affect everyone? What milestones have been achieved? Here are three things I think have happened. Artificial intelligence surpasses human weaknesses In the 2022 movie "Social Dilemma", Tristan Harris of the Center for Humane Technology pointed out that artificial intelligence has surpassed human weaknesses. What does this mean? This means that artificial intelligence has been able to use humans

Venkat Achanta On TransUnion's Platform Transformation And AI AmbitionMay 08, 2025 am 11:23 AM

Venkat Achanta On TransUnion's Platform Transformation And AI AmbitionMay 08, 2025 am 11:23 AMTransUnion's CTO, Ranganath Achanta, spearheaded a significant technological transformation since joining the company following its Neustar acquisition in late 2021. His leadership of over 7,000 associates across various departments has focused on u

When Trust In AI Leaps Up, Productivity FollowsMay 08, 2025 am 11:11 AM

When Trust In AI Leaps Up, Productivity FollowsMay 08, 2025 am 11:11 AMBuilding trust is paramount for successful AI adoption in business. This is especially true given the human element within business processes. Employees, like anyone else, harbor concerns about AI and its implementation. Deloitte researchers are sc
