What is speech segmentation

Speech segmentation is the process of decomposing a speech signal into smaller, meaningful units. A continuous speech signal is typically segmented into words, syllables, or shorter units such as phonemes. Segmentation underlies speech processing tasks such as speech recognition, speech synthesis, and voice conversion.

In speech recognition, segmentation splits a continuous signal into words or phonemes, so the recognizer works on smaller units and can identify the individual words and phonemes more accurately. In speech synthesis and voice conversion, fine-grained segmentation gives better control over parameters such as phonemes, pitch, and speaking rate, which makes the synthesized or converted speech sound more natural and fluent.

In speech segmentation, an important question is which features to use to locate the boundary between speech and non-speech. Commonly used features include short-time energy, zero-crossing rate, and Mel-frequency cepstral coefficients (MFCCs). Short-time energy measures the strength of the signal within a frame, while the zero-crossing rate gives a rough indication of its frequency content. MFCCs are a widely used speech feature representation: they convert the speech signal into a sequence of compact feature vectors, one per frame, that summarize its spectral characteristics.
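
As a minimal sketch of the first two features, the NumPy code below splits a signal into overlapping frames and computes short-time energy and zero-crossing rate per frame. The helper names `frame_signal` and `short_time_features`, the test tones, and the 25 ms / 10 ms frame settings are illustrative assumptions, not part of the original article.

```python
import numpy as np

def frame_signal(x, frame_len, hop):
    """Slice a 1-D signal into overlapping frames (one per row).
    Trailing samples that do not fill a whole frame are dropped."""
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = hop * np.arange(n_frames)[:, None] + np.arange(frame_len)
    return x[idx]

def short_time_features(x, frame_len, hop):
    """Return per-frame short-time energy and zero-crossing rate."""
    frames = frame_signal(x, frame_len, hop)
    energy = np.sum(frames ** 2, axis=1)
    # Fraction of adjacent sample pairs whose sign differs
    signs = np.signbit(frames)
    zcr = np.mean(signs[:, 1:] != signs[:, :-1], axis=1)
    return energy, zcr

fs = 16000
t = np.arange(fs) / fs
low = np.sin(2 * np.pi * 200 * t + 0.1)     # low-frequency tone
high = np.sin(2 * np.pi * 3000 * t + 0.1)   # high-frequency tone
quiet = 0.1 * low                           # same tone at lower volume

frame_len, hop = int(0.025 * fs), int(0.010 * fs)
e_low, z_low = short_time_features(low, frame_len, hop)
e_high, z_high = short_time_features(high, frame_len, hop)
e_quiet, _ = short_time_features(quiet, frame_len, hop)
```

On these synthetic tones the higher-frequency signal yields the higher zero-crossing rate, and scaling the amplitude by 0.1 scales the energy by 0.01, which is why energy thresholds are sensitive to recording volume.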

Methods of speech segmentation

Methods of speech segmentation can be divided into threshold-based methods, model-based methods, and deep learning-based methods.

1) Threshold-based segmentation method

The threshold-based segmentation method derives a threshold from features of the speech signal and then uses it to split the signal into speech segments. Threshold-based methods usually rely on features such as short-time energy and zero-crossing rate to locate the boundary between speech and non-speech. The method is simple and easy to understand, but it segments poorly when the signal contains strong noise.
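
The idea can be sketched with a single energy threshold. The function name `energy_segments`, the relative threshold of 10% of the maximum frame energy, and the synthetic tone burst are assumptions chosen for illustration; a practical detector would tune the threshold and usually add smoothing.

```python
import numpy as np

def energy_segments(x, frame_len, hop, rel_threshold=0.1):
    """Return (start_sample, end_sample) pairs for runs of frames whose
    energy exceeds rel_threshold * (maximum frame energy)."""
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = hop * np.arange(n_frames)[:, None] + np.arange(frame_len)
    energy = np.sum(x[idx] ** 2, axis=1)
    active = energy > rel_threshold * energy.max()
    # Pad with False so every active run has a rising and a falling edge
    padded = np.concatenate(([False], active, [False]))
    edges = np.diff(padded.astype(int))
    starts = np.flatnonzero(edges == 1)    # first active frame of each run
    ends = np.flatnonzero(edges == -1)     # first inactive frame after each run
    return [(s * hop, (e - 1) * hop + frame_len) for s, e in zip(starts, ends)]

fs = 16000
rng = np.random.default_rng(0)
x = 0.01 * rng.standard_normal(2 * fs)          # weak background noise
t = np.arange(int(0.5 * fs)) / fs
x[8000:16000] += np.sin(2 * np.pi * 300 * t)    # tone burst from 0.5 s to 1.0 s

segments = energy_segments(x, frame_len=160, hop=80)
```

Because the rising and falling edges are derived from the same boolean mask, every start is paired with exactly one end, and the boundaries are accurate to within about one frame.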

2) Model-based segmentation method

The model-based segmentation method uses a statistical model of the speech signal to perform segmentation and is comparatively robust to noise. However, the model needs to be trained, and the computational complexity is high. Model-based methods often use models such as hidden Markov models (HMM), conditional random fields (CRF), and maximum entropy Markov models (MEMM) to model and segment speech signals.
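
A rough sketch of the HMM variant: a two-state model (0 = non-speech, 1 = speech) with Gaussian emissions over per-frame log-energy, decoded with the Viterbi algorithm. All parameters here are hand-set assumptions and the log-energy sequence is synthetic; in practice the parameters would be estimated from training data.

```python
import numpy as np

def viterbi(log_emis, log_trans, log_init):
    """Most likely state path for an HMM; log_emis has shape (T, S)."""
    T, S = log_emis.shape
    delta = np.empty((T, S))
    back = np.zeros((T, S), dtype=int)
    delta[0] = log_init + log_emis[0]
    for t in range(1, T):
        # scores[i, j]: best score ending in state i at t-1, then moving to j
        scores = delta[t - 1][:, None] + log_trans
        back[t] = np.argmax(scores, axis=0)
        delta[t] = scores[back[t], np.arange(S)] + log_emis[t]
    path = np.empty(T, dtype=int)
    path[-1] = np.argmax(delta[-1])
    for t in range(T - 2, -1, -1):
        path[t] = back[t + 1, path[t + 1]]
    return path

def gaussian_logpdf(x, mean, std):
    return -0.5 * np.log(2 * np.pi * std ** 2) - 0.5 * ((x - mean) / std) ** 2

# Synthetic per-frame log-energy: non-speech, speech, non-speech
rng = np.random.default_rng(0)
true_states = np.concatenate([np.zeros(50, int), np.ones(100, int), np.zeros(50, int)])
log_energy = np.where(true_states == 1, 0.0, -4.0) + rng.normal(0, 1.0, true_states.size)

# Hand-set (assumed) model parameters
means, stds = np.array([-4.0, 0.0]), np.array([1.0, 1.0])
log_emis = gaussian_logpdf(log_energy[:, None], means, stds)
log_trans = np.log(np.array([[0.98, 0.02], [0.02, 0.98]]))   # states tend to persist
log_init = np.log(np.array([0.5, 0.5]))

hmm_path = viterbi(log_emis, log_trans, log_init)
frame_wise = np.argmax(log_emis, axis=1)   # per-frame decision, no transition model
```

The transition matrix penalizes rapid switching between states, which is one reason a model of this kind can tolerate isolated noisy frames better than a per-frame threshold.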

3) Segmentation method based on deep learning

The segmentation method based on deep learning uses neural networks to perform speech segmentation. Commonly used architectures include convolutional neural networks (CNN), recurrent neural networks (RNN), and long short-term memory networks (LSTM), which learn features of the speech signal automatically and segment it. This method can learn higher-level features of the speech signal and achieve better segmentation results, but training requires a large amount of data and computing resources.
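
To illustrate the learning-based idea at toy scale, the sketch below trains a very small feed-forward network in plain NumPy to label frames as speech or non-speech. It is far simpler than the CNN, RNN, and LSTM models named above; the two synthetic features, the network size, and the learning rate are assumptions, and the reported accuracy is measured on the training data only.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic per-frame features: [log-energy, zero-crossing rate]; label 1 = speech
n = 400
labels = (rng.random(n) < 0.5).astype(float)
log_energy = np.where(labels == 1, 0.0, -4.0) + rng.normal(0, 1.0, n)
zcr = np.where(labels == 1, 0.1, 0.4) + rng.normal(0, 0.05, n)
X = np.column_stack([log_energy, zcr])
X = (X - X.mean(axis=0)) / X.std(axis=0)     # standardize each feature

# One hidden layer (tanh) followed by a sigmoid output unit
hidden = 8
W1 = rng.normal(0, 0.5, (2, hidden))
b1 = np.zeros(hidden)
W2 = rng.normal(0, 0.5, hidden)
b2 = 0.0

def forward(X):
    h = np.tanh(X @ W1 + b1)
    p = 1.0 / (1.0 + np.exp(-(h @ W2 + b2)))
    return h, p

lr = 0.5
for _ in range(300):                         # full-batch gradient descent on cross-entropy
    h, p = forward(X)
    grad_out = (p - labels) / n              # gradient w.r.t. the output pre-activation
    grad_h = np.outer(grad_out, W2) * (1 - h ** 2)
    W2 -= lr * (h.T @ grad_out)
    b2 -= lr * grad_out.sum()
    W1 -= lr * (X.T @ grad_h)
    b1 -= lr * grad_h.sum(axis=0)

_, p = forward(X)
accuracy = np.mean((p > 0.5) == (labels == 1))
```

A real system would use recorded speech with labeled boundaries, a held-out test set, and a sequence model that sees neighboring frames rather than classifying each frame in isolation.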

In addition, variation in the speech signal and noise interference also need to be considered during speech segmentation. For example, the volume and speaking rate of the speech affect segmentation accuracy, and noise can cause boundaries to be misjudged. Therefore, the speech signal is usually preprocessed, for example with speech enhancement and denoising, to improve the accuracy of speech segmentation.
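
One simple form of denoising is power spectral subtraction applied directly to the frame features. The sketch below estimates the noise power spectrum from the first few frames, which are assumed to contain no speech, and subtracts it from every frame before summing to an energy value. The function name `denoised_frame_energy`, the frame settings, and the test signal are illustrative assumptions.

```python
import numpy as np

def denoised_frame_energy(x, frame_len, hop, noise_frames):
    """Return (raw, cleaned) per-frame energy, where 'cleaned' has an
    estimated noise power spectrum subtracted and floored at zero."""
    n_frames = 1 + (len(x) - frame_len) // hop
    idx = hop * np.arange(n_frames)[:, None] + np.arange(frame_len)
    frames = x[idx] * np.hanning(frame_len)
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    noise_power = power[:noise_frames].mean(axis=0)   # assumed noise-only frames
    cleaned = np.maximum(power - noise_power, 0.0)
    return power.sum(axis=1), cleaned.sum(axis=1)

fs = 16000
rng = np.random.default_rng(0)
x = 0.1 * rng.standard_normal(fs)                 # 1 s of background noise
t = np.arange(fs // 2) / fs
x[fs // 2:] += np.sin(2 * np.pi * 440 * t)        # tone in the second half

raw, clean = denoised_frame_energy(x, frame_len=400, hop=160, noise_frames=20)

# Ratio of mean energy in tone frames to mean energy in noise frames
raw_contrast = raw[55:].mean() / raw[:40].mean()
clean_contrast = clean[55:].mean() / clean[:40].mean()
```

After subtraction the noise-only frames retain much less energy relative to the tone frames, so a fixed energy threshold separates the two more easily.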

Speech segmentation example

The following is an example of threshold-based speech segmentation, implemented in Python. It uses two features, short-time energy and zero-crossing rate, to locate the boundary between speech and non-speech, and segments the signal based on the change rates of these two features. Since no real speech data is provided, the speech signal in the example is simulated data generated with the NumPy library.

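import numpy as np

# Generate a simulated speech signal
fs = 16000  # sampling rate (Hz)
t = np.arange(fs * 2) / fs  # 2 seconds of signal
speech_signal = np.sin(2 * np.pi * 1000 * t) * np.hamming(len(t))

# Split the signal into overlapping frames, then compute short-time energy
# and zero-crossing rate for each frame
frame_size = int(fs * 0.01)  # frame length (10 ms)
frame_shift = int(fs * 0.005)  # frame shift (5 ms)
num_frames = 1 + (len(speech_signal) - frame_size) // frame_shift
frame_idx = frame_shift * np.arange(num_frames)[:, None] + np.arange(frame_size)
frames = speech_signal[frame_idx]
energy = np.sum(np.square(frames), axis=1)
zcr = np.mean(np.abs(np.diff(np.sign(frames), axis=1)), axis=1)

# Compute the change rates of energy and zero-crossing rate
energy_diff = np.diff(energy)
zcr_diff = np.diff(zcr)

# Set the thresholds (derived from the change rates, since they are compared with them)
energy_threshold = np.mean(np.abs(energy_diff)) + np.std(energy_diff)
zcr_threshold = np.mean(np.abs(zcr_diff)) + np.std(zcr_diff)

# Segment based on the change rates of energy and zero-crossing rate
start_points = np.where((energy_diff > energy_threshold) & (zcr_diff > zcr_threshold))[0] * frame_shift
end_points = np.where((energy_diff < -energy_threshold) & (zcr_diff < -zcr_threshold))[0] * frame_shift

# Write the segmentation results to a file
with open('segments.txt', 'w') as f:
    # zip stops at the shorter array, so unmatched boundaries are dropped
    for start, end in zip(start_points, end_points):
        f.write('{}\t{}\n'.format(start, end))

The idea of this example is to first compute the short-time energy and zero-crossing rate of the speech signal, and then compute their change rates to locate the boundary between speech and non-speech. Thresholds are then set for the two change rates, the signal is segmented where both change rates cross their thresholds, and the resulting start and end points (in samples) are written to a file. Because the simulated signal is a single smoothly windowed tone with no silent gaps, the output is not expected to contain meaningful segments; the code is intended to show the procedure rather than a realistic result.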

Note that this example may place boundaries incorrectly because it uses only two features and performs no preprocessing. In practical applications, appropriate features and methods should be selected for the specific scenario, and the speech signal should be preprocessed to improve segmentation accuracy.

In short, speech segmentation is an important research direction in the field of speech signal processing. With suitable methods and techniques, speech signals can be segmented more accurately, improving the results and widening the application scope of speech processing.

The above is the detailed content of sound cutting. For more information, please follow other related articles on the PHP Chinese website!

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AM

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AMHiddenLayer's groundbreaking research exposes a critical vulnerability in leading Large Language Models (LLMs). Their findings reveal a universal bypass technique, dubbed "Policy Puppetry," capable of circumventing nearly all major LLMs' s

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AM

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AMThe push for environmental responsibility and waste reduction is fundamentally altering how businesses operate. This transformation affects product development, manufacturing processes, customer relations, partner selection, and the adoption of new

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AM

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AMThe recent restrictions on advanced AI hardware highlight the escalating geopolitical competition for AI dominance, exposing China's reliance on foreign semiconductor technology. In 2024, China imported a massive $385 billion worth of semiconductor

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AM

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AMThe potential forced divestiture of Chrome from Google has ignited intense debate within the tech industry. The prospect of OpenAI acquiring the leading browser, boasting a 65% global market share, raises significant questions about the future of th

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AM

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AMRetail media's growth is slowing, despite outpacing overall advertising growth. This maturation phase presents challenges, including ecosystem fragmentation, rising costs, measurement issues, and integration complexities. However, artificial intell

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AM

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AMAn old radio crackles with static amidst a collection of flickering and inert screens. This precarious pile of electronics, easily destabilized, forms the core of "The E-Waste Land," one of six installations in the immersive exhibition, &qu

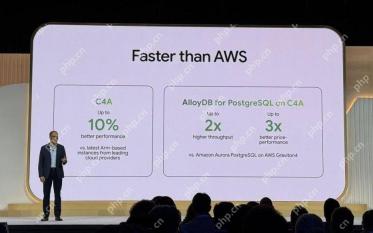

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AM

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AMGoogle Cloud's Next 2025: A Focus on Infrastructure, Connectivity, and AI Google Cloud's Next 2025 conference showcased numerous advancements, too many to fully detail here. For in-depth analyses of specific announcements, refer to articles by my

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AM

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AMThis week in AI and XR: A wave of AI-powered creativity is sweeping through media and entertainment, from music generation to film production. Let's dive into the headlines. AI-Generated Content's Growing Impact: Technology consultant Shelly Palme

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Linux new version

SublimeText3 Linux latest version

Notepad++7.3.1

Easy-to-use and free code editor

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software