
LLaMA2 context length skyrockets to 1 million tokens, with only one hyperparameter to adjust

With just a few tweaks, the context size a large model supports can be extended from 16,000 tokens to 1 million?!

And this is on LLaMA 2 with only 7 billion parameters.

Keep in mind that even the popular Claude 2 and GPT-4 support context lengths of only 100,000 and 32,000 tokens respectively. Beyond that range, large models start to talk nonsense and fail to remember things.

Now, a new study from Fudan University and Shanghai Artificial Intelligence Laboratory has not only found a way to lengthen the context window for a whole series of large models, but also discovered the rule behind it.

According to this rule, adjusting just 1 hyperparameter is enough to stably improve a large model's extrapolation performance while preserving output quality.

Extrapolation refers to how a large model's output quality changes when the input length exceeds the text length seen in pre-training. If the extrapolation ability is poor, the model starts to "talk nonsense" as soon as the input exceeds the pre-training length.

So what exactly improves the extrapolation capability of large models, and how does it work?

"Mechanism" to improve the extrapolation ability of large models

This method of improving the extrapolation ability of large models is tied to the position encoding module in the Transformer architecture.

On its own, a plain attention (Attention) module cannot distinguish tokens in different positions. For example, "I eat apples" and "apples eat me" look the same to it. Position encoding therefore has to be added so that the model can pick up word order and truly understand what a sentence means.

Current Transformer position encoding methods include absolute position encoding (merging position information into the input), relative position encoding (writing position information into the attention score calculation) and rotary position encoding. The most popular of these is rotary position encoding, known as RoPE.
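To make the word-order point concrete, here is a minimal sketch in plain Python. It assumes a toy single-head self-attention with identity projections and made-up three-dimensional embeddings; the `EMB` values and the `encode` helper are illustrative and do not come from the study. Because the toy vocabulary has no separate "me" token, the reversed sentence is written as "apples eat I".

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Toy self-attention: identity Q/K/V projections, no position encoding."""
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        outputs.append([sum(w * v[j] for w, v in zip(weights, vectors)) for j in range(d)])
    return outputs

# Hypothetical embeddings, chosen only for illustration.
EMB = {"I": [1.0, 0.0, 0.5], "eat": [0.0, 1.0, 0.2], "apples": [0.3, 0.3, 1.0]}

def encode(sentence):
    """Return the attention output for each token of the sentence."""
    tokens = sentence.split()
    return dict(zip(tokens, self_attention([EMB[t] for t in tokens])))

a = encode("I eat apples")
b = encode("apples eat I")

# Each token receives the same output vector in both word orders.
same_output = all(
    math.isclose(x, y, abs_tol=1e-12)
    for token in a
    for x, y in zip(a[token], b[token])
)
print(same_output)
```

Every token ends up with the same representation regardless of where it sits in the sentence, which is why some form of position encoding is needed.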

RoPE achieves the effect of relative position encoding by means of absolute position encoding, and compared with relative position encoding it leaves more room for improving a large model's extrapolation. How to further unlock the extrapolation capability of large models that use RoPE has become a new direction for many recent studies. These studies fall into two major schools: limiting attention and adjusting the rotation angle.
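A small sketch can show what "relative through absolute" means. It assumes the commonly used RoPE formulation, in which the i-th pair of dimensions is rotated by the angle `pos * base^(-2i/d)`; the vectors `q` and `k` and the function names are made up for illustration.

```python
import math

def rope(x, pos, base=10000.0):
    """Rotate consecutive pairs of x by position-dependent angles (assumed RoPE form)."""
    d = len(x)
    out = []
    for i in range(d // 2):
        theta = pos * base ** (-2 * i / d)
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = x[2 * i], x[2 * i + 1]
        out += [x0 * c - x1 * s, x0 * s + x1 * c]
    return out

def score(q, k, m, n, base=10000.0):
    """Attention logit between a query at position m and a key at position n."""
    return sum(a * b for a, b in zip(rope(q, m, base), rope(k, n, base)))

# Hypothetical query and key vectors.
q = [0.1, -0.4, 0.7, 0.2]
k = [0.5, 0.3, -0.2, 0.9]

near = score(q, k, 5, 2)         # positions 5 and 2, offset 3
shifted = score(q, k, 105, 102)  # both positions shifted by 100, offset still 3
other = score(q, k, 5, 3)        # offset 2

print(math.isclose(near, shifted, abs_tol=1e-9))
print(math.isclose(near, other, abs_tol=1e-9))
```

Each vector is rotated using only its own absolute position, yet the resulting score depends only on the offset between the two positions.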

Representative work on limiting attention includes ALiBi, xPos and BCA. StreamingLLM, recently proposed by MIT, lets large models accept input of unlimited length (without increasing the context window length) and also belongs to this direction.

More work has gone into adjusting the rotation angle. Typical representatives include linear interpolation, Giraffe, Code LLaMA and LLaMA2 Long.

Meta's LLaMA2 Long, for example, modifies a single hyperparameter to extend the context length to 32,000 tokens.

This hyperparameter is exactly the "switch" found by Code LLaMA, LLaMA2 Long and others: the rotation angle base (base).

Simply adjusting it is enough to improve the extrapolation performance of large models. But both Code LLaMA and LLaMA2 Long fine-tune only at one specific base and one continued-training length to strengthen extrapolation. Is it possible to find a pattern that ensures all large models using RoPE position encoding can steadily improve their extrapolation performance?

Master this rule, and a 1-million-token context comes easily

Researchers from Fudan University and Shanghai Artificial Intelligence Laboratory ran experiments on this question. They first analyzed several parameters that affect RoPE extrapolation and proposed a concept called the critical dimension (Critical Dimension). Based on this concept, they then derived a set of scaling laws of RoPE-based extrapolation (Scaling Laws of RoPE-based Extrapolation).

Applying this rule is enough to ensure that any large model based on RoPE position encoding can improve its extrapolation capability.

Let's first look at what the critical dimension is. By definition, it depends on the pre-training text length T_train, the per-head dimension d of self-attention, and the "initial value" of the rotation angle base hyperparameter, which is 10000.
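As a hedged illustration, the critical dimension in the paper is usually written as d_extra = 2 * ceil((d / 2) * log_10000(T_train / 2π)). The sketch below assumes that form, and the LLaMA2-style inputs (4096 pre-training tokens, head dimension 128) are assumptions for the example; check the exact expression against the paper before relying on it.

```python
import math

def critical_dimension(t_train, head_dim, base=10000.0):
    """Critical dimension, assuming d_extra = 2 * ceil(d/2 * log_base(T_train / 2π))."""
    return 2 * math.ceil(head_dim / 2 * math.log(t_train / (2 * math.pi), base))

# Assumed LLaMA2-style settings: 4096 pre-training tokens, 128 dimensions per head.
d_extra = critical_dimension(t_train=4096, head_dim=128)
print(d_extra)
```

Under these assumptions, only the dimensions below the critical dimension have gone through a full rotation period during pre-training; the remaining ones have seen just part of their period.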

The authors found that whether the base is enlarged or reduced, the extrapolation ability of a RoPE-based large model ends up stronger. By contrast, extrapolation is at its worst when the rotation angle base is left at 10000.

The paper argues that a smaller rotation angle base lets more dimensions perceive position information, while a larger rotation angle base can express position information over longer distances.

In that case, when facing continued-training corpora of different lengths, by how much should the rotation angle base be reduced or enlarged to maximize the extrapolation ability of the large model?
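One way to picture this claim is to count how many dimension pairs complete a full rotation period within the pre-training length. The sketch assumes the standard RoPE frequencies base^(-2i/d), so that pair i has period 2π * base^(2i/d); the LLaMA2-style settings (4096 training tokens, head dimension 128), the example bases and the function names are assumptions for illustration.

```python
import math

def pairs_with_full_period(t_train, head_dim, base):
    """Count dimension pairs whose rotation period fits inside t_train tokens."""
    return sum(
        1
        for i in range(head_dim // 2)
        if 2 * math.pi * base ** (2 * i / head_dim) <= t_train
    )

def longest_period(head_dim, base):
    """Period, in tokens, of the slowest-rotating dimension pair."""
    return 2 * math.pi * base ** ((head_dim - 2) / head_dim)

for base in (500, 10_000, 1_000_000):
    covered = pairs_with_full_period(4096, 128, base)
    print(base, covered, round(longest_period(128, base)))
```

With a small base every pair finishes at least one full period inside the training window, so more dimensions are fully trained on position information. With a large base fewer pairs do, but the slowest pair has a far longer period and can therefore tell apart positions that are much farther away.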

The paper gives an extended scaling rule for RoPE extrapolation, which relates the critical dimension, the continued-training text length and the pre-training text length of a large model.

With this rule, the extrapolation performance of a large model can be calculated directly from its pre-training and continued-training text lengths. In other words, the context length the model can support becomes predictable.

Conversely, the same rule makes it possible to work out quickly how best to adjust the rotation angle base, and thereby improve the extrapolation performance of large models.
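The two directions described above can be sketched in a few lines. This is only an illustration under stated assumptions: it takes the upper bound for an enlarged base to be T_extra = 2π * base^(d_extra / d) and the critical base for a longer tuning length to be 10000^(log_{T_train/2π}(T_tune/2π)), which is how the paper's results are commonly summarized. The critical dimension of 92 and head dimension of 128 are assumed LLaMA2-style values, and all function names are hypothetical. Verify the formulas against the paper before relying on the numbers.

```python
import math

TWO_PI = 2 * math.pi

def extrapolation_upper_bound(base, critical_dim, head_dim):
    """Predicted context limit, assuming T_extra = 2π * base^(d_extra / d)."""
    return TWO_PI * base ** (critical_dim / head_dim)

def base_for_target_length(target_len, critical_dim, head_dim):
    """Inverse of the assumed bound: the base whose predicted limit is target_len."""
    return (target_len / TWO_PI) ** (head_dim / critical_dim)

def critical_base(t_train, t_tune, initial_base=10000.0):
    """Assumed critical base for continued training at length t_tune."""
    exponent = math.log(t_tune / TWO_PI) / math.log(t_train / TWO_PI)
    return initial_base ** exponent

# Forward direction: predict the supported context length for a given base.
bound = extrapolation_upper_bound(1_000_000, critical_dim=92, head_dim=128)

# Reverse direction: find a base for a desired context length.
needed_base = base_for_target_length(100_000, critical_dim=92, head_dim=128)

# Base beyond which tuning at 16K tokens is expected to help (assumed form).
beta_0 = critical_base(t_train=4096, t_tune=16_384)

print(round(bound), round(needed_base), round(beta_0))
```

The first function answers "how far can this base extrapolate", and the second answers "which base do I need for this length", mirroring the forward and reverse uses of the rule.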

The authors tested this across a series of tasks and found that inputs of 100,000, 500,000 or even 1 million tokens can now be extrapolated to without any additional attention restrictions.

At the same time, earlier work on strengthening the extrapolation of large models, including Code LLaMA and LLaMA2 Long, confirms that this rule is reasonable and effective.

In other words, by "adjusting one parameter" according to this rule, you can easily extend the context window length of a RoPE-based large model and strengthen its extrapolation capability.

Liu Xiaoran, the first author of the paper, said that the team is still working on improving downstream task performance by improving the continued-training corpus. Once that work is complete, the code and models will be open-sourced. Stay tuned~

Paper address:

https://arxiv.org/abs/2310.05209

Github repository:

https://github.com/OpenLMLab/scaling-rope

Paper analysis blog:

https://zhuanlan.zhihu.com/p/660073229

The above is the detailed content of The LLaMA2 context length skyrockets to 1 million tokens, with only one hyperparameter need to be adjusted.. For more information, please follow other related articles on the PHP Chinese website!

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AM

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AMHiddenLayer's groundbreaking research exposes a critical vulnerability in leading Large Language Models (LLMs). Their findings reveal a universal bypass technique, dubbed "Policy Puppetry," capable of circumventing nearly all major LLMs' s

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AM

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AMThe push for environmental responsibility and waste reduction is fundamentally altering how businesses operate. This transformation affects product development, manufacturing processes, customer relations, partner selection, and the adoption of new

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AM

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AMThe recent restrictions on advanced AI hardware highlight the escalating geopolitical competition for AI dominance, exposing China's reliance on foreign semiconductor technology. In 2024, China imported a massive $385 billion worth of semiconductor

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AM

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AMThe potential forced divestiture of Chrome from Google has ignited intense debate within the tech industry. The prospect of OpenAI acquiring the leading browser, boasting a 65% global market share, raises significant questions about the future of th

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AM

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AMRetail media's growth is slowing, despite outpacing overall advertising growth. This maturation phase presents challenges, including ecosystem fragmentation, rising costs, measurement issues, and integration complexities. However, artificial intell

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AM

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AMAn old radio crackles with static amidst a collection of flickering and inert screens. This precarious pile of electronics, easily destabilized, forms the core of "The E-Waste Land," one of six installations in the immersive exhibition, &qu

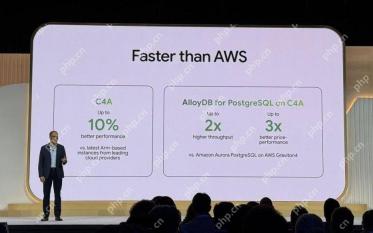

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AM

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AMGoogle Cloud's Next 2025: A Focus on Infrastructure, Connectivity, and AI Google Cloud's Next 2025 conference showcased numerous advancements, too many to fully detail here. For in-depth analyses of specific announcements, refer to articles by my

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AM

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AMThis week in AI and XR: A wave of AI-powered creativity is sweeping through media and entertainment, from music generation to film production. Let's dive into the headlines. AI-Generated Content's Growing Impact: Technology consultant Shelly Palme

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 Linux new version

SublimeText3 Linux latest version

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Atom editor mac version download

The most popular open source editor

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

Zend Studio 13.0.1

Powerful PHP integrated development environment