
Google AudioPaLM implements 'text + audio' dual-modal solution, a large model for both speaking and listening

- PHPz

- 2023-06-30 13:49:22

With their strong performance and versatility, large language models have driven the development of large multimodal models that cover other modalities, such as audio and video.

The underlying architecture of these language models is mostly Transformer-based and predominantly decoder-only, so it can be adapted to other sequence modalities without much change to the model architecture.

Recently, Google released AudioPaLM, a unified speech-text model that merges text and audio tokens into a joint multimodal vocabulary and combines them with task description tags. This makes it possible to train a decoder-only model on any mixture of speech and text tasks, including automatic speech recognition (ASR), text-to-speech synthesis (TTS), automatic speech translation (AST) and speech-to-speech translation (S2ST). Tasks that were traditionally solved by heterogeneous models are thereby unified into a single architecture and training process.


Paper link: https://arxiv.org/pdf/2306.12925.pdf

Example link: https://google-research.github.io/seanet/audiopalm/examples/

In addition, since the underlying architecture of AudioPaLM is a large Transformer model, it can be initialized with the weights of a large language model pre-trained on text, and so benefits from the linguistic knowledge of models such as PaLM.

In terms of results, AudioPaLM achieves state-of-the-art results on the AST and S2ST benchmarks, and its performance on the ASR benchmarks is comparable to that of other models.

By leveraging audio prompting from AudioLM, AudioPaLM can perform S2ST with voice transfer for speakers not seen before, surpassing existing methods in terms of speech quality and voice preservation.

AudioPaLM also shows zero-shot ability: it can perform AST on combinations of input speech language and target language that were not seen in training.

AudioPaLM

The researchers used a decoder-only Transformer to model text and speech tokens. Both text and audio are tokenized before being fed to the model, so the input is just a sequence of integers, and the output is detokenized before being returned to the user.


Audio embedding and tokenization

Converting the raw audio waveform into tokens involves extracting embeddings from an existing speech representation model and discretizing those embeddings into a finite set of audio tokens.

In previous work, embeddings were extracted from the w2v-BERT model and quantized with k-means. In this paper, the researchers experimented with three approaches:

w2v-BERT: Use a w2v-BERT model trained on multilingual data instead of English only. The embeddings are not normalized before k-means clustering, because normalization degrades performance in the multilingual setting. Tokens are then generated at a rate of 25 Hz with a vocabulary size of 1024.

USM-v1: Perform similar operations with the more powerful, 2-billion-parameter Universal Speech Model (USM) encoder, extracting the embeddings from an intermediate layer.

USM-v2: The same encoder, trained with an auxiliary ASR loss and further fine-tuned to support multiple languages.
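The discretization step can be illustrated with a small nearest-centroid quantizer. This is only a sketch under stated assumptions: the toy two-dimensional centroids stand in for a k-means codebook (the article mentions 1024 entries), and the names `quantize` and `tokens_per_clip` are hypothetical helpers, not functions from the paper.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantize(frames: Sequence[Sequence[float]],
             centroids: Sequence[Sequence[float]]) -> List[int]:
    """Map each embedding frame to the index of its nearest centroid.

    The embeddings are used as-is (no normalization), matching the
    description in the text.
    """
    tokens = []
    for frame in frames:
        distances = [squared_distance(frame, c) for c in centroids]
        tokens.append(distances.index(min(distances)))
    return tokens


def tokens_per_clip(seconds: float, rate_hz: int = 25) -> int:
    """Number of audio tokens produced for a clip at the given token rate."""
    return round(seconds * rate_hz)


# Toy codebook: 3 centroids in 2 dimensions (assumption, for illustration).
centroids = [[0.0, 0.0], [1.0, 1.0], [4.0, 0.0]]
frames = [[0.1, -0.2], [0.9, 1.2], [3.5, 0.1], [0.8, 0.7]]
audio_tokens = quantize(frames, centroids)
print(audio_tokens)          # [0, 1, 2, 1]
print(tokens_per_clip(3.0))  # 75
```

At 25 Hz, a 3-second clip yields 75 audio tokens, each an integer below the audio vocabulary size.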

Modifying the text-only decoder

In the Transformer decoder structure, nothing apart from the input embedding and the final softmax output layer depends on the number of tokens being modeled. In the PaLM architecture, the weights of the input and output matrices are shared, that is, they are transposes of each other.

So turning a text-only model into one that can model both text and audio only requires expanding the embedding matrix from t × m to (t + a) × m, where t is the size of the text vocabulary, a is the size of the audio vocabulary, and m is the embedding dimension.

To take advantage of the pre-trained text model, the researchers changed the checkpoint of the existing model by adding new rows to the embedding matrix.

Specifically, the first t tokens correspond to the SentencePiece text tokens, and the following a tokens represent the audio tokens. The text embeddings reuse the pre-trained weights, whereas the audio embeddings are newly initialized and must be trained.
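The vocabulary extension described above can be sketched in a few lines. This is an illustrative sketch, not the authors' code: the Gaussian initialization with scale 0.02 is an assumption, and `extend_embedding`, `audio_token_id` and `logits` are hypothetical names.

```python
import random
from typing import List


def extend_embedding(text_embedding: List[List[float]],
                     num_audio_tokens: int,
                     seed: int = 0,
                     scale: float = 0.02) -> List[List[float]]:
    """Return a (t + a) x m matrix: pretrained text rows, then new audio rows.

    The audio rows are freshly initialized (assumed Gaussian) and would
    have to be learned during training.
    """
    m = len(text_embedding[0])
    rng = random.Random(seed)
    audio_rows = [[rng.gauss(0.0, scale) for _ in range(m)]
                  for _ in range(num_audio_tokens)]
    return [row[:] for row in text_embedding] + audio_rows


def audio_token_id(audio_index: int, text_vocab_size: int) -> int:
    """Audio token k occupies row t + k of the joint vocabulary."""
    return text_vocab_size + audio_index


def logits(hidden: List[float], embedding: List[List[float]]) -> List[float]:
    """Tied output layer: one logit per vocabulary row (hidden @ E^T)."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in embedding]


t, a, m = 5, 3, 4  # toy sizes (assumption)
text_embedding = [[float(i)] * m for i in range(t)]
joint = extend_embedding(text_embedding, a)
print(len(joint), len(joint[0]))          # 8 4
print(audio_token_id(0, t))               # 5
print(len(logits([1.0] * m, joint)))      # 8
```

Because the input and output matrices are tied, adding rows to the embedding matrix extends both the input lookup and the output softmax to the joint vocabulary in one step.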

Experimental results show that, compared with training from scratch, starting from a text-pretrained model substantially improves performance on multimodal speech and text tasks.

Decoding audio tokens into raw audio

To synthesize audio waveforms from audio tokens, the researchers tested two different methods:

1. Autoregressive decoding, similar to the AudioLM model

2. Non-autoregressive decoding, similar to the SoundStorm model

Both methods first generate SoundStream tokens and then use a convolutional decoder to convert them into an audio waveform.

The researchers trained these decoders on Multilingual LibriSpeech. The voice conditioning is a 3-second speech sample, represented both as audio tokens and as SoundStream tokens.

By providing part of the original input speech as voice conditioning, the model can preserve the original speaker's voice when translating the speech into a different language. When the original audio is shorter than 3 seconds, it is repeated to fill the remaining time.
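The handling of short inputs can be illustrated as follows. This is a minimal sketch under assumptions: the article does not say whether repetition happens at the waveform or token level (the sketch works on a generic sequence), truncating longer inputs to their first 3 seconds is an assumption, and `make_voice_prompt` is a hypothetical helper.

```python
from typing import List, Sequence


def make_voice_prompt(samples: Sequence[float],
                      sample_rate: int,
                      prompt_seconds: float = 3.0) -> List[float]:
    """Build a fixed-length voice prompt from the source speech.

    Inputs shorter than the prompt length are repeated until the prompt
    is filled; longer inputs are cut to the prompt length (assumption).
    """
    if not samples:
        raise ValueError("cannot build a prompt from empty audio")
    target = int(sample_rate * prompt_seconds)
    repeats = -(-target // len(samples))  # ceiling division
    return (list(samples) * repeats)[:target]


# Toy example: 2 samples per second, so a 3-second prompt has 6 samples.
short_clip = [1.0, 2.0, 3.0, 4.0]
print(make_voice_prompt(short_clip, sample_rate=2))
# [1.0, 2.0, 3.0, 4.0, 1.0, 2.0]
```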

Training task

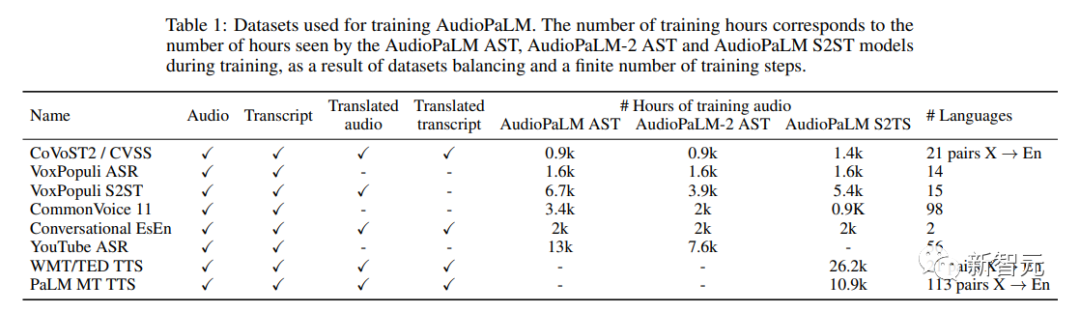

The training datasets are speech-text data with the following fields:

1. Audio: Speech in the source language

2. Transcript: Transcription of the speech in the audio

3. Translated Audio: The spoken translation of the speech in the audio

4. Translated Transcript: The written translation of the speech in the audio

Component tasks include:

1. ASR (Automatic Speech Recognition): Transcribe audio to obtain transcribed text

2. AST (Automatic Speech Translation): Translate the audio to get the translated transcript

3. S2ST (Speech-to-Speech Translation): Translate the audio to get the translated audio

4. TTS (Text-to-Speech): Read the transcript aloud to get the audio

5. MT (Text-to-Text Machine Translation): Translate the transcript to get the translated transcript

A dataset may be used for multiple tasks, so the researchers chose to signal to the model which task it should perform on a given input. Concretely, a tag is prepended to the input that specifies the English name of the task and the input language; the output language can optionally be specified as well.

For example, to have the model perform ASR on French speech, the tag [ASR French] is prepended to the tokenized audio input; to perform TTS in English, [TTS English] is prepended to the text; and to perform S2ST from English to French, the tokenized English audio is preceded by [S2ST English French].
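The tagging scheme can be sketched as a small helper that builds the tag and prepends it to the tokenized input. The character-level `toy_tokenize` function is purely an assumption for illustration (the article only says that text uses SentencePiece tokens), and `task_prefix` and `build_input` are hypothetical names.

```python
from typing import Callable, List, Optional, Sequence


def task_prefix(task: str,
                source_language: str,
                target_language: Optional[str] = None) -> str:
    """Build a task tag such as "[ASR French]" or "[S2ST English French]"."""
    parts = [task, source_language]
    if target_language:
        parts.append(target_language)
    return "[" + " ".join(parts) + "]"


def toy_tokenize(text: str) -> List[int]:
    """Stand-in text tokenizer (assumption): one token per character."""
    return [ord(ch) for ch in text]


def build_input(tag: str,
                content_tokens: Sequence[int],
                tokenize: Callable[[str], List[int]] = toy_tokenize) -> List[int]:
    """Prepend the tokenized task tag to the already-tokenized content."""
    return tokenize(tag) + list(content_tokens)


print(task_prefix("ASR", "French"))              # [ASR French]
print(task_prefix("TTS", "English"))             # [TTS English]
print(task_prefix("S2ST", "English", "French"))  # [S2ST English French]

audio_tokens = [1031, 1502, 1999]  # hypothetical audio token ids
model_input = build_input(task_prefix("ASR", "French"), audio_tokens)
```

Since the tag is ordinary text, it needs no new vocabulary entries: the model sees it as a short run of text tokens ahead of the audio or text content.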

Training mix

The researchers used the SeqIO library to blend the training data and down-weight the larger data sets.
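The effect of down-weighting larger datasets can be illustrated with a small calculation. The article does not give the weighting formula, so the temperature-scaled rule below is an assumption chosen only to show the idea; it does not use the SeqIO API, and the dataset names and sizes are made up.

```python
from typing import Dict


def mixing_rates(dataset_sizes: Dict[str, int],
                 temperature: float = 2.0) -> Dict[str, float]:
    """Sampling rate per dataset, proportional to size ** (1 / temperature).

    temperature = 1 gives rates proportional to dataset size; larger
    temperatures flatten the distribution, down-weighting big datasets.
    This rule is an assumption, not the formula used in the paper.
    """
    scaled = {name: size ** (1.0 / temperature)
              for name, size in dataset_sizes.items()}
    total = sum(scaled.values())
    return {name: value / total for name, value in scaled.items()}


sizes = {"large_asr": 10000, "small_s2st": 100}  # hypothetical sizes
proportional = mixing_rates(sizes, temperature=1.0)
flattened = mixing_rates(sizes, temperature=2.0)
print(proportional["large_asr"] > flattened["large_asr"])  # True
```

With these toy sizes, the large dataset's share falls from roughly 99% under proportional sampling to roughly 91%, leaving more room for the small one.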

Experimental part

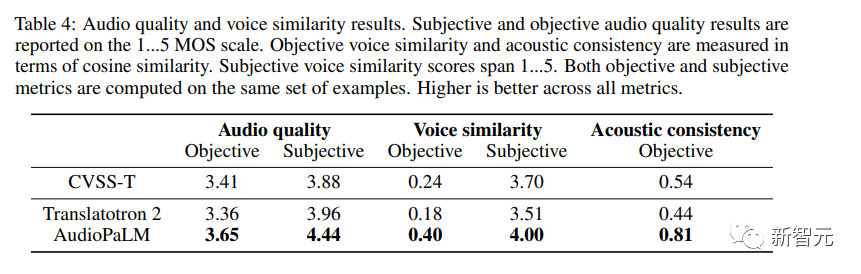

In addition to evaluating the translation quality of the speech content, the researchers also evaluated whether the speech generated by AudioPaLM is of sufficiently high quality and whether the speaker's voice is preserved when translating into a different language.

Objective Metrics

The researchers used a no-reference MOS estimator which, given an audio sample, provides an estimate of the perceived audio quality on a scale from 1 to 5.

To measure the quality of voice transfer across languages, they used an off-the-shelf speaker verification model and computed the cosine similarity between the embeddings of the source speech (encoded and decoded with SoundStream) and the translated speech. They also measured how well acoustic properties (recording conditions, background noise) carry over from the source audio to the target audio.
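The speaker-similarity score described above reduces to a cosine similarity between two embedding vectors. The sketch below shows only that final step; the embedding values are made up, and in practice they would come from a speaker verification model, which is not reproduced here.

```python
import math
from typing import Sequence


def cosine_similarity(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors, in [-1, 1]."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


# Hypothetical speaker embeddings for source and translated speech.
source_embedding = [1.0, 2.0, 3.0]
translated_embedding = [2.0, 4.0, 6.0]
print(round(cosine_similarity(source_embedding, translated_embedding), 6))  # 1.0
```

A value close to 1 indicates that the two utterances sound like the same speaker according to the verification model; values near 0 indicate unrelated voices.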

Subjective Evaluation

The researchers conducted two independent studies to evaluate the quality of the generated speech and the voice similarity, using the same set of samples in both. The quality of the corpus is uneven: some recordings contain loud overlapping speech (for example, TV shows or songs playing in the background) or extremely strong noise (for example, clothes rubbing against the microphone). Because such distortions complicate the work of human raters, the researchers pre-filtered the data, selecting only inputs with an estimated MOS of at least 3.0.

Ratings are given on a 5-point scale, ranging from 1 (poor quality, or a completely different voice) to 5 (good quality, same voice).

The results show that AudioPaLM performs well on both objective and subjective measurements of audio quality and voice similarity. It is significantly better than the baseline Translatotron 2 system on both, and it also achieves higher quality and better voice similarity than the ground-truth synthetic recordings in CVSS-T, with relatively large improvements on most metrics.

The researchers also compared the system on high-resource and low-resource language groups (French, German, Spanish and Catalan versus the other languages) and found no significant difference in the metrics between these groups.
