From manual work to the industrial revolution! Nature article: Five areas of biological image analysis revolutionized by deep learning

- PHPz (reposted)

- 2023-04-11 19:58:16

One cubic millimeter does not sound like much: it is about the size of a sesame seed. But in the human brain, this small volume contains about 50,000 neural wires connected by 134 million synapses.

To generate the raw data, scientists used serial ultrathin-section electron microscopy to image thousands of tissue slivers over the course of 11 months.

The resulting data set reached an astonishing 1.4 petabytes (that is, 1,400 TB, equivalent to the capacity of approximately 2 million CD-ROMs). For researchers, this is simply an astronomical figure.
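As a rough sanity check on these figures, the conversion can be worked through in a few lines of Python. This is a minimal sketch that assumes decimal (SI) units and a nominal capacity of 700 MB per CD-ROM; neither assumption is stated in the article.

```python
# Back-of-the-envelope check of the data volume quoted above.
# Assumptions (not from the article): decimal SI units and a
# nominal CD-ROM capacity of 700 MB.
PB = 10**15  # bytes in one petabyte (decimal)
TB = 10**12  # bytes in one terabyte (decimal)
MB = 10**6   # bytes in one megabyte (decimal)

dataset_bytes = 14 * PB // 10      # 1.4 PB, kept as an exact integer
cd_capacity_bytes = 700 * MB       # assumed capacity of one CD-ROM

dataset_tb = dataset_bytes // TB
cd_count = dataset_bytes // cd_capacity_bytes

print(f"{dataset_tb} TB")          # size of the data set in terabytes
print(f"{cd_count:,} CD-ROMs")     # number of discs needed to hold it
```

Under these assumptions the two quoted equivalents (1,400 TB and roughly 2 million discs) agree with each other.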

Jeff Lichtman, a molecular and cellular biologist at Harvard University, said that it would be impossible for humans to trace all of these neural wires by hand: there simply are not enough people who can do the job effectively.

Advances in microscopy have produced a wealth of imaging data, but the volume is too large and the manpower too scarce. This is a common problem in connectomics (the study of the brain's structural and functional connections) as well as in other biological fields.

Solving this kind of labor shortage is precisely the mission of computer science. Optimized deep learning algorithms, in particular, can mine patterns from large-scale data sets. Deep learning has given biology a huge push over the past few years and has led to many research tools, says Beth Cimini, a computational biologist at the Broad Institute of MIT and Harvard in Cambridge, Massachusetts.

Below, the editors of Nature summarize five areas of biological image analysis that deep learning has transformed.

Large-Scale Connectomics

Deep learning enables researchers to generate increasingly complex connectomes from fruit flies, mice, and even humans. These data can help neuroscientists understand how the brain works and how its structure changes during development and disease, but neural connections are not easy to map.

In 2018, Lichtman joined forces with Viren Jain, Google's connectomics lead in Mountain View, California, to find the artificial intelligence algorithms his team needed.

The image analysis tasks in connectomics are very difficult: you have to trace these thin wires, the axons and dendrites of cells, over long distances. Traditional image processing methods make so many errors that they are basically useless for this task. These nerve strands can be thinner than a micron and extend across hundreds of microns or even millimeters of tissue.

Deep learning algorithms can not only analyze connectomics data automatically but also maintain very high accuracy. Researchers use annotated data sets containing the features of interest to train complex computational models that can then quickly identify the same features in other data.

Anna Kreshuk, a computer scientist at the European Molecular Biology Laboratory, likens using deep learning to "giving examples": provide enough examples, and the algorithm works out the rest.

But even with deep learning, Lichtman and Jain's team faced a difficult task: mapping a fragment of the human cerebral cortex.

In the data collection phase

, it took326 days just to take more than 5,000 ultra-thin tissue sections. Two researchers spent about 100 hours manually annotating

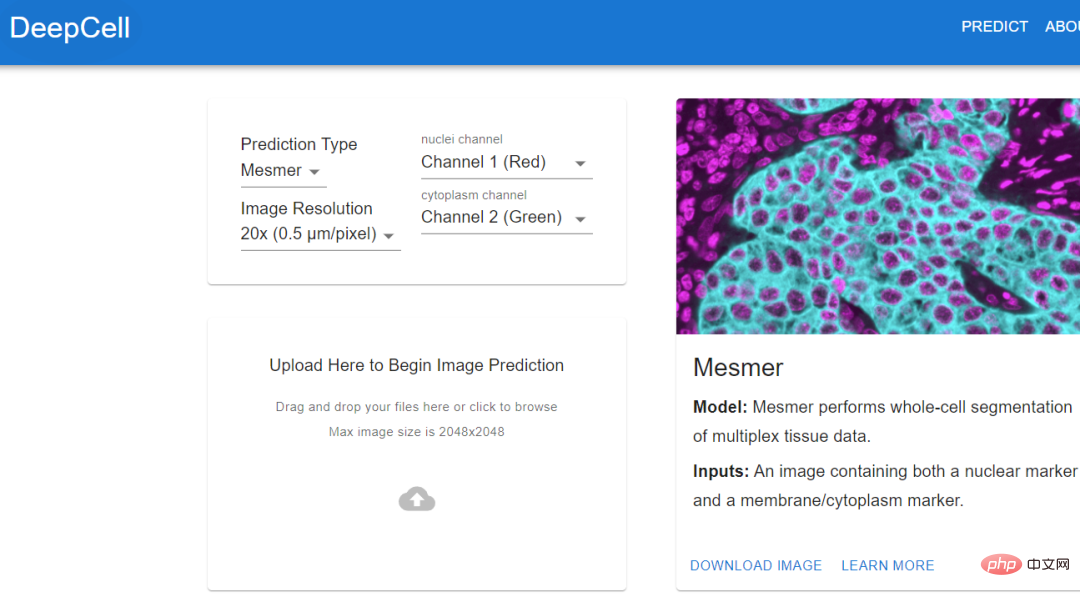

images and tracking neurons to create a ground truth dataset to train the algorithm.The algorithm trained using standard data can automatically stitch together images, identify neurons and synapses, and generate the final connectome. Jain's team also invested a lot of computing resources to solve this problem, including thousands of tensor processing units (TPU) , and also spent A few months to pre-process the data required for 1 million TPU hours. Although researchers have obtained the largest data set currently collected and can reconstruct it at a very fine level, this amount of data Approximately only 0.0001% of the human brain #As algorithms and hardware improve, researchers should be able to map larger brain regions and simultaneously resolve more Cellular features such as organelles and even proteins. At least, deep learning provides a feasibility. Histology (histology) is an important tool in medicine, used on the basis of chemical or molecular staining Diagnose disease. But the whole process is time-consuming and laborious, and usually takes days or even weeks to complete. The biopsy is sliced into thin slices and stained to reveal cellular and subcellular features. The pathologist then reads and interprets the results. Aydogan Ozcan, a computer engineer at the University of California, Los Angeles, believes that the entire process can be accelerated through deep learning. He trained a customized deep learning model, used computer simulation to stain a tissue section, and tens of thousands of tissue sections on the same section. The unstained and dyed samples are fed to the model and the model calculates the difference between them. 
In addition to the time advantage of virtual staining (it can be completed in an instant), pathologists have discovered through observation that there is almost no difference between virtual staining and traditional staining Experimental results show that the algorithm can replicate the molecular staining of the breast cancer biomarker HER2 in seconds, a process that . An expert panel of three breast pathologists evaluated the images and deemed them to be of comparable quality and accuracy to traditional immunohistochemical stains. Ozcan sees the potential for commercializing virtual staining in drug development, but he hopes to eliminate the need for toxic dyes and expensive staining equipment in histology. If you want to extract data from cell images, then you must know the actual location of the cells in the image. This process is also called cell segmentation (cell segmentation). Researchers need to observe cells under a microscope, or outline the cells one by one in the software. Morgan Schwartz, a computational biologist at the California Institute of Technology, is looking for ways to automate processing. As imaging data sets become larger and larger, traditional manual methods are also encountering bottlenecks. Some experiments cannot be analyzed without automation. Schwartz’s graduate advisor, bioengineer David Van Valen, created a set of artificial intelligence models and published them on the deepcell.org website, which can be used to calculate and analyze living cells and preserve them. Cells and other features in tissue images. 
Van Valen, along with collaborators such as Stanford University cancer biologist Noah Greenwald, also developed a deep learning model Mesmer that can quickly , Accurately detect cells and nuclei of different tissue types According to Greenwald, researchers could use this information to distinguish cancerous tissue from non-cancerous tissue and look for differences before and after treatment, or based on imaging changes to better understand why some Will patients respond or not respond, as well as determine the tumor subtype. The Human Protein Atlas project takes advantage of another application of deep learning: intracellular localization. Emma Lundberg, a bioengineer at Stanford University, said that over the past few decades, the project has generated millions of images depicting what is happening in human cells and tissues. protein expression. At the beginning, project participants needed tomanually annotate these images, but this method was unsustainable, and Lundberg began to seek help from artificial intelligence algorithms. In the past few years, she began to initiate crowdsourcing solutions in the Kaggle Challenge, where scientists and artificial intelligence enthusiasts complete various computing tasks for prize money. The prize money for the two projects is 37,000 US dollars 25,000 US dollars respectively. Contestants will design supervised machine learning models and annotate protein map images. multi-label classification of protein localization patterns, and can be generalized to cell lines, and has also achieved new industry breakthroughs, accurately classifying proteins that exist in multiple cellular locations.
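To make the idea of multi-label classification concrete, here is a minimal sketch in Python. It is not the contestants' method: the label names, the raw scores, and the 0.5 threshold are all made up for illustration. The point is only that each location is scored independently, so one protein can be assigned to several locations at once, whereas a single-label classifier must pick exactly one.

```python
import math

# Hypothetical label set, for illustration only.
LOCATIONS = ["nucleus", "mitochondria", "cytosol", "plasma membrane"]


def sigmoid(x: float) -> float:
    """Map a raw score to an independent probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def predict_locations(logits, threshold=0.5):
    """Multi-label: keep every location whose probability meets the threshold."""
    return [name for name, z in zip(LOCATIONS, logits) if sigmoid(z) >= threshold]


def predict_single(logits):
    """Single-label baseline: keep only the top-scoring location."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return LOCATIONS[best]


# Made-up raw model outputs for one image of one protein.
logits = [2.1, 1.3, -3.0, -0.7]

multi = predict_locations(logits)
single = predict_single(logits)
print(multi)   # locations kept by the multi-label rule
print(single)  # the one location kept by the single-label rule
```

With these example scores, the multi-label rule keeps both the nucleus and the mitochondria, while the single-label baseline reports only the nucleus and so loses the second location.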

With the model in hand, biological experiments can move forward. The location of a human protein matters because the same protein behaves differently in different places, and knowing whether a protein is in the nucleus or the mitochondria can help researchers understand its function.

Tracking Animal Behavior

Mackenzie Mathis, a neuroscientist at the Biotechnology Center on the campus of the École Polytechnique Fédérale de Lausanne in Switzerland, has long been interested in how the brain drives behavior. Her program DeepLabCut tracks animals' postures and fine movements from videos, converting videos and other animal recordings into data.

DeepLabCut provides a graphical user interface that allows researchers to upload and annotate videos and train deep learning models with the click of a button. In April of this year, Mathis' team expanded the software to estimate poses for multiple animals at the same time, a whole new challenge for both humans and artificial intelligence.

Applying a model trained with DeepLabCut to marmosets, the researchers found that when the animals were very close together, their bodies lined up and they tended to look in similar directions, whereas when they were apart, they tended to face each other. By identifying animal postures, biologists can understand how two animals interact, where they gaze, and how they observe the world.
