To run a ChatGPT-scale model, you now need only one GPU: here is a method that accelerates it a hundredfold

Computational cost is one of the major challenges people face when building large models such as ChatGPT.

According to statistics, the evolution from GPT to GPT-3 has also been a story of growing model size: the number of parameters rose from 117 million to 175 billion, and the amount of pre-training data rose from 5GB to 45TB. A single GPT-3 training run costs US$4.6 million, and the total training cost reaches US$12 million.

Beyond training, inference is also expensive. Some estimate that the computing cost of OpenAI running ChatGPT is US$100,000 per day.

While some researchers develop techniques that let large models master more capabilities, others are trying to reduce the computing resources AI requires. Recently, a technique called FlexGen has attracted attention with the claim of "an RTX 3090 running a ChatGPT-scale model".

The large model accelerated by FlexGen still looks very slow: 1 token per second when running a 175-billion-parameter language model. What is impressive is that it has made the impossible possible.

Traditionally, the high computational and memory requirements of large language model (LLM) inference have made it feasible only with multiple high-end AI accelerators. This study explores how to reduce the requirements of LLM inference to a single commodity GPU and still achieve practical performance.

Recently, new research from Stanford University, UC Berkeley, ETH Zurich, Yandex, HSE University, Meta, Carnegie Mellon University and other institutions proposed FlexGen, a high-throughput generation engine for running LLMs with limited GPU memory.

By aggregating memory and computation from GPU, CPU and disk, FlexGen can be flexibly configured under various hardware resource constraints. Through a linear programming optimizer, it searches for the best pattern for storing and accessing tensors, including weights, activations, and attention key/value (KV) caches. FlexGen further compresses the weights and KV cache to 4 bits with negligible accuracy loss. Compared to state-of-the-art offloading systems, FlexGen runs OPT-175B 100x faster on a single 16GB GPU and achieves real-world generation throughput of 1 token/s for the first time. FlexGen also comes with a pipelined parallel runtime to allow super-linear scaling in decoding if more distributed GPUs are available.

The code has been released and has already collected thousands of stars: https://www.php.cn/link/ee715daa76f1b51d80343f45547be570

In recent years, large language models have shown excellent performance across a wide range of tasks. While LLMs demonstrate unprecedented general intelligence, they also pose unprecedented challenges for those who build and run them. These models may have billions or even trillions of parameters, which leads to extremely high computational and memory requirements. For example, GPT-175B (GPT-3) requires 325GB of memory just to store the model weights. Running inference on this model takes at least five Nvidia A100 (80GB) GPUs and a complex parallelism strategy.
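The 325GB figure can be checked with simple arithmetic. The sketch below assumes the weights are stored in FP16 (2 bytes per parameter) and that "GB" is counted in binary units (GiB); both are assumptions made for illustration, and the function names are hypothetical.

```python
import math

GIB = 2**30  # bytes per GiB

def weight_memory_gib(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory needed for the weights alone (assumption: FP16, 2 bytes each)."""
    return num_params * bytes_per_param / GIB

def gpus_needed(memory_gib: float, gpu_capacity_gib: float) -> int:
    """Smallest number of GPUs whose combined memory holds the weights."""
    return math.ceil(memory_gib / gpu_capacity_gib)

weights_gib = weight_memory_gib(175e9)      # 175 billion parameters
a100_count = gpus_needed(weights_gib, 80)   # 80GB A100 cards

print(f"weights: {weights_gib:.1f} GiB, A100 (80GB) cards needed: {a100_count}")
```

This counts only the weights; activations and the KV cache come on top of it.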

Methods to reduce the resource requirements of LLM inference have been frequently discussed recently. These efforts are divided into three directions:

(1) Model compression to reduce the total memory footprint;

(2) Collaborative inference to amortize costs through decentralization;

(3) Offloading to utilize CPU and disk memory.

These techniques significantly reduce the computational resource requirements for using LLM. However, models are often assumed to fit in GPU memory, and existing offloading-based systems still struggle to run 175 billion parameter-sized models with acceptable throughput using a single GPU.

In the new research, the authors focus on effective offloading strategies for high-throughput generative inference. When GPU memory is not enough, tensors must be offloaded to secondary storage and the computation performed piece by piece through partial loading. On a typical machine, the memory hierarchy has three levels, as shown in the figure below. Higher levels are fast but scarce; lower levels are slow but abundant.

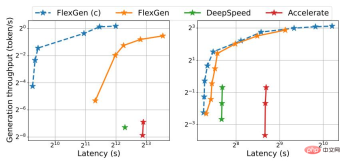

In FlexGen, the authors do not pursue low latency. They target throughput-oriented scenarios, which are common in applications such as benchmarking, information extraction, and data wrangling. Achieving low latency is inherently hard with offloading, but in throughput-oriented scenarios the efficiency of offloading can be greatly improved. Figure 1 illustrates the latency-throughput trade-off for three inference systems with offloading. With careful scheduling, I/O costs can be amortized over a large number of inputs and overlapped with computation. The authors show that a single throughput-optimized commodity T4 GPU is 4 times more cost-efficient than 8 latency-optimized A100 GPUs in the cloud, measured as cost per unit of computing power.

Figure 1. Latency-throughput trade-offs of three offloading-based systems on OPT-175B (left) and OPT-30B (right). FlexGen achieves a new Pareto-optimal frontier, increasing the maximum throughput on OPT-175B by a factor of 100. The other systems could not increase throughput further because they ran out of memory.
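The amortization argument can be illustrated with a toy model. The sketch below assumes that loading a layer's weights takes a fixed time regardless of batch size, that compute time grows linearly with batch size, and that I/O and compute overlap perfectly. The function names and numbers are hypothetical and are not FlexGen measurements.

```python
def step_time(batch: int, io_s: float, compute_per_seq_s: float) -> float:
    """Time for one layer step when weight loading overlaps with compute."""
    return max(io_s, batch * compute_per_seq_s)

def throughput(batch: int, io_s: float, compute_per_seq_s: float) -> float:
    """Sequences processed per second for one layer step."""
    return batch / step_time(batch, io_s, compute_per_seq_s)

# Hypothetical costs: 2 s to load a layer's weights, 0.5 s of compute per sequence.
IO_S, COMPUTE_S = 2.0, 0.5

for batch in (1, 2, 4, 8):
    print(batch, step_time(batch, IO_S, COMPUTE_S), throughput(batch, IO_S, COMPUTE_S))
```

In this toy setting, throughput rises with batch size until compute time matches I/O time; beyond that point a larger batch only adds latency. That is the shape of trade-off Figure 1 describes.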

Although earlier studies have discussed the latency-throughput trade-off of offloading in the context of training, no one has yet applied it to generative LLM inference, which is a very different process. Generative inference presents unique challenges because of the autoregressive nature of LLMs. In addition to storing all parameters, it requires sequential decoding and a large attention key/value cache (KV cache). Existing offloading systems cannot cope with these challenges, so they perform too much I/O and achieve throughput well below what the hardware can deliver.
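To get a feel for how large the KV cache can become, here is a rough size estimate. It assumes FP16 values and one key vector plus one value vector of hidden size per token per layer. The layer count and hidden size are the published OPT-175B configuration; the batch size and sequence length are illustrative assumptions, and the function name is hypothetical.

```python
def kv_cache_bytes(layers: int, hidden: int, batch: int, seq_len: int,
                   bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for every token in the batch."""
    # The factor 2 accounts for storing both a key and a value per token per layer.
    return 2 * layers * hidden * batch * seq_len * bytes_per_value

OPT_175B_LAYERS = 96
OPT_175B_HIDDEN = 12288

per_token = kv_cache_bytes(OPT_175B_LAYERS, OPT_175B_HIDDEN, batch=1, seq_len=1)
# Assumed workload: 512 sequences, each 512 prompt tokens plus 32 generated tokens.
total = kv_cache_bytes(OPT_175B_LAYERS, OPT_175B_HIDDEN, batch=512, seq_len=512 + 32)

print(f"per token: {per_token / 2**20:.1f} MiB, total: {total / 2**40:.2f} TiB")
```

Under these assumptions the cache is several times larger than the model weights, which is why its placement matters as much as the placement of the weights.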

Designing a good offloading strategy for generative inference is challenging. First, three kinds of tensors are involved: weights, activations, and the KV cache. The policy must specify what to offload, where, and when across the three-level hierarchy. Second, the batch-by-batch, token-by-token, and layer-by-layer structure of the computation forms a complex dependency graph that can be traversed in many ways. The strategy should choose a schedule that minimizes execution time. Together, these choices create a complex design space.

To address this, the authors propose FlexGen, an offloading framework for LLM inference. FlexGen aggregates memory from the GPU, CPU, and disk, and schedules I/O operations efficiently. The authors also discuss possible compression methods and distributed pipeline parallelism.
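A heavily simplified sketch of the "where" question for weights follows. It is not FlexGen's policy search: it swaps the linear programming solver for a brute-force grid search, considers only weights, and uses a toy cost model in which every weight not resident on the GPU must be streamed in once per step. All names, capacities, and bandwidths are hypothetical.

```python
from itertools import product

def best_weight_placement(weights_gb, gpu_gb, cpu_gb, cpu_bw_gbps, disk_bw_gbps, step=10):
    """Return (cost_seconds, (gpu_pct, cpu_pct, disk_pct)) with the lowest toy I/O cost."""
    best = None
    for gpu_pct, cpu_pct in product(range(0, 101, step), repeat=2):
        disk_pct = 100 - gpu_pct - cpu_pct
        if disk_pct < 0:
            continue  # percentages must sum to 100
        on_gpu = weights_gb * gpu_pct / 100
        on_cpu = weights_gb * cpu_pct / 100
        on_disk = weights_gb * disk_pct / 100
        if on_gpu > gpu_gb or on_cpu > cpu_gb:
            continue  # violates a memory capacity constraint
        # Toy cost: time to stream the CPU- and disk-resident weights to the GPU.
        cost = on_cpu / cpu_bw_gbps + on_disk / disk_bw_gbps
        if best is None or cost < best[0]:
            best = (cost, (gpu_pct, cpu_pct, disk_pct))
    return best

# Hypothetical machine: 16GB GPU, 200GB CPU RAM, 12 GB/s from CPU, 2 GB/s from disk.
cost, placement = best_weight_placement(325, 16, 200, 12, 2)
print(placement, cost)
```

A real policy also has to place activations and the KV cache, pick batch sizes, and account for compute time, which is where a proper cost model and solver become necessary.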

The main contributions of this study are as follows:

1. The authors formally define the search space of possible offloading strategies and use a cost model and a linear programming solver to search for the optimal strategy. Notably, they prove that the search space captures a nearly I/O-optimal computation order, with I/O complexity within 2x of the optimal order. The search algorithm can be configured for a variety of hardware specifications and latency/throughput constraints, which provides a way to navigate the trade-off space smoothly. Compared with existing strategies, FlexGen unifies the placement of weights, activations, and the KV cache, enabling larger batch sizes.

2. The authors show that the weights and KV cache of LLMs such as OPT-175B can be compressed to 4 bits without retraining or calibration and with negligible accuracy loss. This is achieved through fine-grained group-wise quantization, which significantly reduces I/O costs.

3. The authors demonstrate FlexGen's efficiency by running OPT-175B on an NVIDIA T4 GPU (16GB). On a single GPU, given the same latency requirement, uncompressed FlexGen achieves 65x higher throughput than DeepSpeed Zero-Inference (Aminabadi et al., 2022) and Hugging Face Accelerate (HuggingFace, 2022), the most advanced offloading-based inference systems in the industry. If higher latency and compression are allowed, FlexGen increases throughput further and reaches a 100x improvement. FlexGen is the first system to achieve a throughput of 1 token/s for OPT-175B on a single T4 GPU. With pipeline parallelism, FlexGen achieves super-linear scaling in decoding when multiple distributed GPUs are available.
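The group-wise quantization mentioned in the second contribution can be sketched in a few lines. This is a minimal min-max quantizer in plain Python, written for illustration only: the group size of 64 and all function names are assumptions, and a real implementation would operate on tensors and pack two 4-bit codes into each byte.

```python
def quantize_group(values, bits=4):
    """Map one group of floats to integer codes in [0, 2**bits - 1]."""
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0  # avoid division by zero
    codes = [min(levels, max(0, round((v - lo) / scale))) for v in values]
    return codes, lo, scale

def dequantize_group(codes, lo, scale):
    """Recover approximate floats from codes plus the group's offset and scale."""
    return [lo + c * scale for c in codes]

def quantize(values, group_size=64, bits=4):
    """Quantize a flat list group by group, each with its own offset and scale."""
    return [quantize_group(values[i:i + group_size], bits)
            for i in range(0, len(values), group_size)]

# Small demonstration on synthetic data.
data = [((i * 37) % 101) / 10 - 5 for i in range(130)]
groups = quantize(data)
restored = [x for codes, lo, scale in groups for x in dequantize_group(codes, lo, scale)]
max_error = max(abs(a - b) for a, b in zip(data, restored))
print(len(groups), max_error)
```

Because each group carries its own minimum and scale, one outlier only degrades the precision of its own group, which is the motivation for keeping groups small.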

The authors also compared FlexGen with Petals, as representatives of offloading and decentralized collaborative inference respectively. The results show that FlexGen with a single T4 GPU outperforms a decentralized Petals cluster of 12 T4 GPUs in throughput, and in some cases even achieves lower latency.

Operating Mechanism

By aggregating memory and computation from GPU, CPU and disk, FlexGen can be flexibly configured under various hardware resource constraints. Through a linear programming optimizer, it searches for the best pattern for storing and accessing tensors, including weights, activations, and attention key/value (KV) caches. FlexGen further compresses the weights and KV cache to 4 bits with negligible accuracy loss.

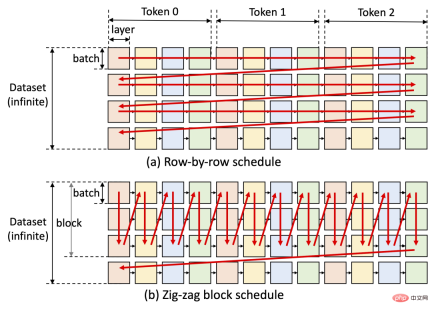

One of the key ideas of FlexGen is the latency-throughput trade-off. Achieving low latency is inherently challenging for offloading methods, but for throughput-oriented scenarios, offloading efficiency can be greatly improved (see figure below). FlexGen utilizes block scheduling to reuse weights and overlap I/O with computations, as shown in Figure (b) below, while other baseline systems use inefficient row-by-row scheduling, as shown in Figure (a) below.
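The difference between the two schedules can be made concrete by counting weight loads. The sketch below is a simplified model, not FlexGen's scheduler: it assumes no weights stay resident on the GPU, so every layer must be fetched before use, and it ignores the extra memory the block schedule needs to hold activations and KV cache for several batches at once. Function names are hypothetical.

```python
def row_by_row_loads(num_layers: int, num_tokens: int, num_batches: int) -> int:
    """Finish one batch completely before starting the next."""
    loads = 0
    for _batch in range(num_batches):
        for _token in range(num_tokens):
            for _layer in range(num_layers):
                loads += 1  # layer weights fetched for this batch only
    return loads

def block_loads(num_layers: int, num_tokens: int, num_batches: int) -> int:
    """Fetch each layer once per token step and reuse it for every batch in the block."""
    loads = 0
    for _token in range(num_tokens):
        for _layer in range(num_layers):
            loads += 1  # single fetch of this layer's weights
            for _batch in range(num_batches):
                pass  # compute this layer for each batch with the loaded weights
    return loads

print(row_by_row_loads(96, 32, 8), block_loads(96, 32, 8))
```

In this model the block schedule cuts weight traffic by a factor equal to the number of batches in the block, at the cost of a longer wait before any single sequence finishes.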

The authors' planned next steps include support for Apple's M1 and M2 chips and for deployment on Colab.

FlexGen has quickly received thousands of stars on GitHub since its release, and is also very popular on social networks. People have expressed that this project is very promising. It seems that the obstacles to running high-performance large-scale language models are gradually being overcome. It is hoped that within this year, ChatGPT can be handled on a single machine.

Someone used this method to train a language model, and the results are as follows:

Although it has not been fed with a large amount of data and the AI does not know specific knowledge, the logic of answering questions seems relatively clear. Perhaps we can see such NPCs in future games?

The above is the detailed content of To run the ChatGPT volume model, you only need a GPU from now on: here is a method to accelerate it by a hundred times.. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

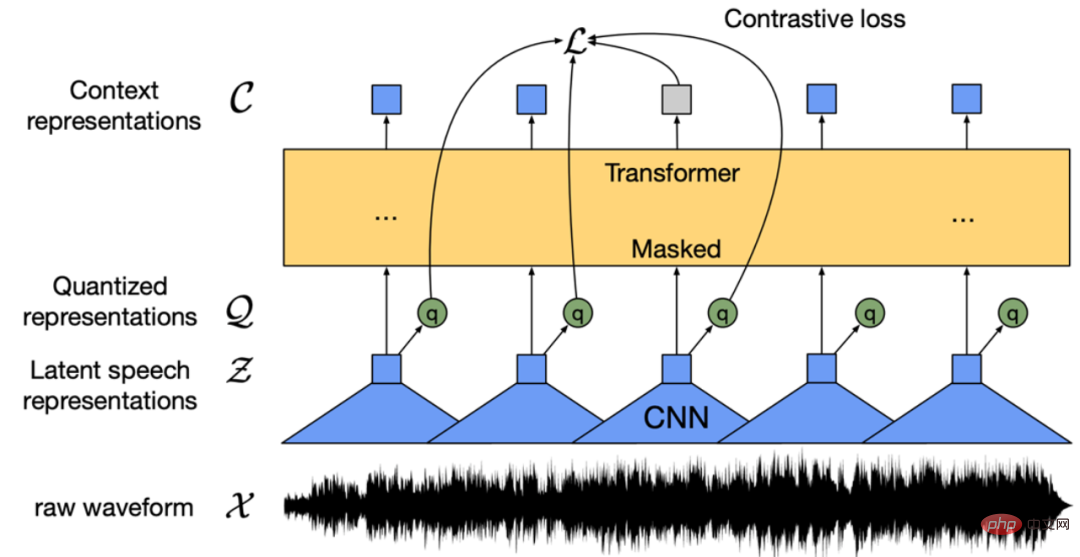

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

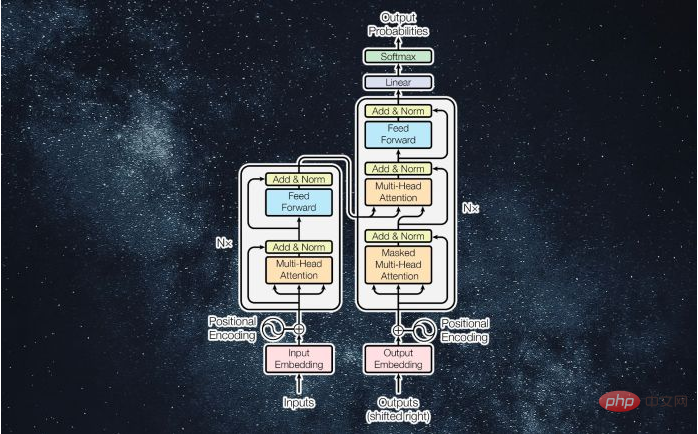

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

Dreamweaver CS6

Visual web development tools

WebStorm Mac version

Useful JavaScript development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software