Re-examining the prompt optimization problem: prediction bias makes language model in-context learning stronger

LLMs perform well with in-context learning, but different choices of demonstration examples can lead to very different performance. A recent study proposes a prompt search strategy based on predictive bias that approximately finds the optimal combination of examples.

- Paper link: https://arxiv.org/abs/2303.13217

- Code link: https://github.com/MaHuanAAA/g_fair_searching

Research Introduction

Large language models have shown remarkable in-context learning ability. They can learn from a context built from a few input-output examples and be applied directly to many downstream tasks without fine-tuning or any other optimization. However, previous research has shown that in-context learning can be highly unstable: performance varies with the choice of training examples, the order of the examples, and the prompt format. Constructing appropriate prompts is therefore crucial to improving in-context learning performance.

Previous research usually approaches this problem from two directions: (1) tuning prompts in the embedding space (prompt tuning), and (2) searching for prompts in the original text space (prompt searching).

The key idea of prompt tuning is to inject task-specific embeddings into hidden layers and then adjust these embeddings with gradient-based optimization. However, these methods require modifying the model's original inference process and access to the model's gradients, which is impractical for black-box LLM services such as GPT-3 and ChatGPT. Furthermore, prompt tuning introduces additional computational and storage costs, which are generally expensive for LLMs.

A more feasible and efficient approach is to optimize the prompt by searching the original text space for suitable demonstration examples and orderings. Existing work builds prompts from either a "global view" or a "local view". Global-view methods usually optimize the different elements of the prompt as a whole. For example, diversity-guided approaches [1] exploit the overall diversity of the demonstrations during search, and other work attempts to optimize the order of the entire set of examples [2]. In contrast, local-view methods such as KATE [3] design heuristic criteria for selecting individual examples.

But these methods have their own limitations: (1) Most current research searches for prompts along a single factor, such as example selection or example order, and the overall impact of each factor on performance remains unclear. (2) These methods are usually based on heuristic criteria, and a unified perspective is still needed to explain how they work. (3) More importantly, existing methods optimize prompts either globally or locally, which may lead to unsatisfactory performance.

The paper re-examines the prompt optimization problem in NLP from the perspective of "prediction bias" and identifies a key phenomenon: the quality of a given prompt depends on its inherent bias. Based on this phenomenon, the paper proposes a surrogate criterion that evaluates prompt quality by prediction bias. This metric can evaluate a prompt with a single forward pass and does not need an additional development set.

Specifically, when a "content-free" test input is appended to a given prompt, the model is expected to output a uniform prediction distribution, because a content-free input carries no useful information. The paper therefore uses the uniformity of this prediction distribution to represent the prediction bias of a given prompt. This is similar to the metric used by the earlier post-calibration method [4]. However, post-calibration uses the metric to calibrate output probabilities under a fixed prompt, whereas this paper explores its use in automatically searching for near-optimal prompts. Through extensive experiments, the authors confirm the correlation between the inherent bias of a given prompt and its average task performance on a given test set.
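The idea of scoring a prompt by how uniform its content-free prediction is can be sketched as follows. This is a minimal illustration, assuming that uniformity is measured with Shannon entropy and that the label probabilities for a content-free input such as "N/A" have already been obtained from the model. The function name `fairness` and the example probabilities are hypothetical and are not taken from the paper's code.

```python
import math

def fairness(probs):
    """Entropy of the label distribution predicted for a content-free input.

    Higher values mean the distribution is closer to uniform,
    i.e. the prompt is less biased toward any one label.
    """
    total = sum(probs)
    normalized = [p / total for p in probs]
    return -sum(p * math.log(p) for p in normalized if p > 0)

# Hypothetical label probabilities returned for the content-free input
# under two different prompts (illustrative numbers only).
balanced_prompt = [0.52, 0.48]
skewed_prompt = [0.95, 0.05]

# The balanced prompt scores higher, so it would be preferred.
print(fairness(balanced_prompt) > fairness(skewed_prompt))  # True
```

Because the score needs only the prediction on one content-free input, it can be computed without labeled development data.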

Furthermore, this bias-based metric enables the method to search for suitable prompts in a "local-to-global" manner. A practical problem, however, is that the optimal solution cannot be found by enumerating all combinations, because the complexity would exceed O(N!).
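To get a feel for the size of this search space, the count below assumes that a prompt is any non-empty ordered selection of the N candidate examples. That definition and the helper name `count_ordered_prompts` are assumptions made for illustration.

```python
import math

def count_ordered_prompts(n):
    """Number of non-empty ordered selections from n candidate examples.

    For each prompt length k there are n! / (n - k)! ordered selections.
    """
    return sum(math.perm(n, k) for k in range(1, n + 1))

print(count_ordered_prompts(3))   # 15
print(count_ordered_prompts(10))  # 9864100
print(count_ordered_prompts(10) > math.factorial(10))  # True
```

Even with only ten candidates there are millions of prompts to score, which is why an exhaustive search is not practical.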

The paper proposes two strategies for efficiently searching for high-quality prompts: (1) T-fair-Prompting and (2) G-fair-Prompting. T-fair-Prompting takes an intuitive approach: it first computes the bias of each example when that example alone forms the prompt, and then combines the top-k fairest examples into the final prompt. This strategy is efficient, with complexity O(N). Note, however, that T-fair-Prompting assumes the optimal prompt is built from the individually least biased examples. This assumption may not hold in practice and often leads to locally optimal solutions. G-fair-Prompting is therefore introduced to improve search quality. It follows the standard greedy search procedure, approximating the optimal solution by making the locally optimal choice at each step: at every step, the algorithm selects the example that gives the updated prompt the best fairness. Its worst-case time complexity is O(N^2), and it significantly improves search quality. G-fair-Prompting works from a local-to-global perspective: the early steps consider the bias of individual examples, while the later steps focus on reducing the global prediction bias.
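The two strategies can be sketched on toy data as follows. This is a rough illustration under stated assumptions, not the authors' implementation: `toy_content_free_probs` is a hypothetical stand-in for a real model call (it pretends the content-free prediction mirrors the smoothed label frequencies in the prompt), fairness is measured with entropy, and the greedy search stops as soon as no remaining example improves fairness.

```python
import math
from collections import Counter

LABELS = ["pos", "neg"]

def toy_content_free_probs(prompt):
    """Hypothetical stand-in for an LLM call on a content-free input:
    returns smoothed label frequencies of the examples in the prompt."""
    counts = Counter(label for _, label in prompt)
    total = len(prompt) + len(LABELS)
    return [(counts[label] + 1) / total for label in LABELS]

def fairness(prompt):
    """Entropy of the content-free prediction; higher means less biased."""
    probs = toy_content_free_probs(prompt)
    return -sum(p * math.log(p) for p in probs if p > 0)

def t_fair(candidates, k):
    """Score each example on its own and keep the k fairest (N evaluations)."""
    ranked = sorted(candidates, key=lambda ex: fairness([ex]), reverse=True)
    return ranked[:k]

def g_fair(candidates):
    """Greedy search: add the example that makes the prompt fairest,
    stopping when no addition improves fairness (at most O(N^2) evaluations)."""
    prompt, remaining = [], list(candidates)
    best = float("-inf")
    while remaining:
        score, pick = max(
            ((fairness(prompt + [ex]), ex) for ex in remaining),
            key=lambda pair: pair[0],
        )
        if score <= best:  # assumed stopping rule: no improvement
            break
        prompt.append(pick)
        remaining.remove(pick)
        best = score
    return prompt

candidates = [
    ("great film", "pos"),
    ("loved it", "pos"),
    ("fantastic", "pos"),
    ("boring", "neg"),
]
print([label for _, label in t_fair(candidates, 2)])  # ['pos', 'pos']
print([label for _, label in g_fair(candidates)])     # ['pos', 'neg']
```

In this toy setting every single example looks equally fair on its own, so the top-k selection ends up with two examples of the same label, while the greedy search accounts for what is already in the prompt and returns a balanced pair.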

Experimental results

The study proposes an effective and interpretable method for improving the in-context learning performance of language models, which can be applied to a variety of downstream tasks. The paper verifies the effectiveness of both strategies on several LLMs, including the GPT series and the recently released LLaMA series. Compared with the SOTA method, G-fair-Prompting achieves a relative improvement of more than 10% on different downstream tasks.

The work closest to this research is the Calibration-before-use method [4]; both use a "content-free" input to improve model performance. However, Calibration-before-use uses this signal to calibrate the model's output, which remains sensitive to the quality of the examples used. In contrast, this paper searches the original text space for a near-optimal prompt that improves model performance without any post-processing of the model output. Furthermore, the paper is the first to demonstrate through extensive experiments the link between prediction bias and final task performance, which was not studied in the calibration-before-use work.
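For contrast, post-hoc calibration with a content-free input can be sketched roughly as below. This is a simplified illustration of the general idea (divide each label probability by the probability the same prompt assigns to that label on a content-free input, then renormalize), not the exact procedure of [4]. The function name `calibrate` and the numbers are hypothetical.

```python
def calibrate(probs, content_free_probs):
    """Rescale label probabilities by the prompt's content-free prediction.

    Labels that the prompt favors even without any input are down-weighted,
    and the result is renormalized to sum to 1.
    """
    scaled = [p / cf for p, cf in zip(probs, content_free_probs)]
    total = sum(scaled)
    return [s / total for s in scaled]

# Hypothetical values: the prompt is biased toward the first label.
content_free = [0.8, 0.2]
raw_prediction = [0.6, 0.4]

calibrated = calibrate(raw_prediction, content_free)
# After calibration the second label has the higher probability.
print(calibrated[1] > calibrated[0])  # True
```

Calibration of this kind corrects the output of a fixed prompt after the fact, whereas the search strategies described in this article use the same content-free signal to choose the prompt itself.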

The experiments also show that, even without calibration, a prompt selected by the proposed method can outperform a randomly selected prompt with calibration. This suggests that the method is effective in practical applications and can inform future natural language processing research.


Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

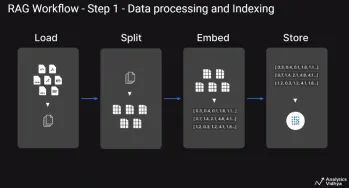

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

What are Integrity Constraints in SQL? - Analytics VidhyaApr 21, 2025 am 10:58 AM

What are Integrity Constraints in SQL? - Analytics VidhyaApr 21, 2025 am 10:58 AMSQL Integrity Constraints: Ensuring Database Accuracy and Consistency Imagine you're a city planner, responsible for ensuring every building adheres to regulations. In the world of databases, these regulations are known as integrity constraints. Jus

Top 30 PySpark Interview Questions and Answers (2025)Apr 21, 2025 am 10:51 AM

Top 30 PySpark Interview Questions and Answers (2025)Apr 21, 2025 am 10:51 AMPySpark, the Python API for Apache Spark, empowers Python developers to harness Spark's distributed processing power for big data tasks. It leverages Spark's core strengths, including in-memory computation and machine learning capabilities, offering

Self-Consistency in Prompt EngineeringApr 21, 2025 am 10:50 AM

Self-Consistency in Prompt EngineeringApr 21, 2025 am 10:50 AMHarnessing the Power of Self-Consistency in Prompt Engineering: A Comprehensive Guide Have you ever wondered how to effectively communicate with today's advanced AI models? As Large Language Models (LLMs) like Claude, GPT-3, and GPT-4 become increas

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 English version

Recommended: Win version, supports code prompts!

SublimeText3 Chinese version

Chinese version, very easy to use

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software