Build an AI code review assistant with v0.dev, litellm and Agenta

This tutorial demonstrates building a production-ready AI pull request reviewer using LLMOps best practices. The final application accepts a public PR URL and returns an AI-generated review.

Application Overview

This tutorial covers:

- Code Development: Retrieving PR diffs from GitHub and leveraging LiteLLM for LLM interaction.

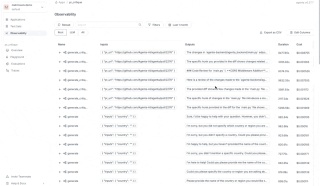

- Observability: Implementing Agenta for application monitoring and debugging.

- Prompt Engineering: Iterating on prompts and model selection using Agenta's playground.

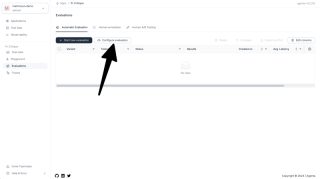

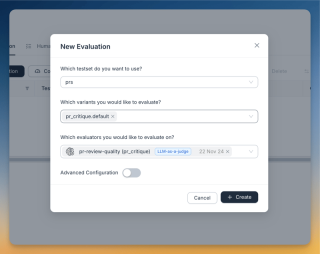

- LLM Evaluation: Employing LLM-as-a-judge for prompt and model assessment.

- Deployment: Deploying the application as an API and creating a simple UI with v0.dev.

Core Logic

The AI assistant's workflow is simple: given a PR URL, it retrieves the diff from GitHub and submits it to an LLM for review.

GitHub diffs are accessed via:

<code>https://patch-diff.githubusercontent.com/raw/{owner}/{repo}/pull/{pr_number}.diff</code>

This Python function fetches the diff:

    def get_pr_diff(pr_url):
        # ... (Code remains the same)
        return response.text
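The body of get_pr_diff is elided above. As a rough sketch only, one way to write it is to parse the owner, repository, and PR number out of the PR page URL, build the diff URL from the pattern shown earlier, and fetch it over HTTP. The helper name build_diff_url, the regular expression, the 30-second timeout, and the use of urllib from the standard library are all assumptions made for illustration; they are not taken from the tutorial's actual code.

```python
import re
import urllib.request

# Hypothetical pattern (an assumption): matches public PR page URLs such as
# https://github.com/<owner>/<repo>/pull/<number>, with or without a suffix.
PR_URL_PATTERN = re.compile(
    r"^https://github\.com/(?P<owner>[^/]+)/(?P<repo>[^/]+)/pull/(?P<number>\d+)"
)


def build_diff_url(pr_url: str) -> str:
    """Map a PR page URL to the raw diff URL pattern given in the text."""
    match = PR_URL_PATTERN.match(pr_url.strip())
    if match is None:
        raise ValueError(f"Not a GitHub pull request URL: {pr_url!r}")
    return (
        "https://patch-diff.githubusercontent.com/raw/"
        f"{match['owner']}/{match['repo']}/pull/{match['number']}.diff"
    )


def get_pr_diff(pr_url: str) -> str:
    """Fetch the diff of a public PR as text (assumed implementation)."""
    with urllib.request.urlopen(build_diff_url(pr_url), timeout=30) as response:
        return response.read().decode("utf-8", errors="replace")
```

Keeping the URL construction in its own function means it can be checked without any network access.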

LiteLLM facilitates LLM interactions, offering a consistent interface across various providers.

    prompt_system = """
    You are an expert Python developer performing a file-by-file review of a pull request. You have access to the full diff of the file to understand the overall context and structure. However, focus on reviewing only the specific hunk provided.
    """

    prompt_user = """
    Here is the diff for the file:
    {diff}
    Please provide a critique of the changes made in this file.
    """

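    import litellm

    def generate_critique(pr_url: str):
        diff = get_pr_diff(pr_url)
        # No config object exists at this stage, so use the module-level
        # prompts and a fixed model; the configurable version appears later.
        response = litellm.completion(
            model="gpt-3.5-turbo",
            messages=[
                {"content": prompt_system, "role": "system"},
                {"content": prompt_user.format(diff=diff), "role": "user"},
            ],
        )
        # Return only the text of the first completion choice.
        return response.choices[0].message.content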

Implementing Observability with Agenta

Agenta enhances observability, tracking inputs, outputs, and data flow for easier debugging.

Initialize Agenta and configure LiteLLM callbacks:

    import agenta as ag

    ag.init()
    litellm.callbacks = [ag.callbacks.litellm_handler()]

Instrument functions with Agenta decorators:

    @ag.instrument()
    def generate_critique(pr_url: str):
        # ... (Code remains the same)
        return response.choices[0].message.content

Set the AGENTA_API_KEY environment variable (obtained from Agenta) and optionally AGENTA_HOST for self-hosting.
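Since the SDK reads its settings from the environment, it can help to fail early with a clear message when the key is missing. The following is a minimal sketch of such a check; the function name missing_agenta_settings is hypothetical and not part of Agenta, and only the variable name AGENTA_API_KEY comes from the text above.

```python
import os

# Required per the text above; AGENTA_HOST is optional and is not checked here.
REQUIRED_VARS = ("AGENTA_API_KEY",)


def missing_agenta_settings(env=os.environ) -> list[str]:
    """Return the names of required variables that are unset or empty."""
    return [name for name in REQUIRED_VARS if not env.get(name)]
```

A script could call missing_agenta_settings() before ag.init() and exit with a readable error if the returned list is not empty.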

Creating an LLM Playground

Agenta's custom workflow feature provides an IDE-like playground for iterative development. The following code snippet demonstrates the configuration and integration with Agenta:

    from pydantic import BaseModel, Field
    from typing import Annotated
    import agenta as ag
    import litellm
    from agenta.sdk.assets import supported_llm_models

    # ... (previous code)

    class Config(BaseModel):
        system_prompt: str = prompt_system
        user_prompt: str = prompt_user
        model: Annotated[str, ag.MultipleChoice(choices=supported_llm_models)] = Field(default="gpt-3.5-turbo")

    @ag.route("/", config_schema=Config)
    @ag.instrument()
    def generate_critique(pr_url: str):
        diff = get_pr_diff(pr_url)
        config = ag.ConfigManager.get_from_route(schema=Config)
        response = litellm.completion(
            model=config.model,
            messages=[
                {"content": config.system_prompt, "role": "system"},
                {"content": config.user_prompt.format(diff=diff), "role": "user"},
            ],
        )
        return response.choices[0].message.content

Serving and Evaluating with Agenta

- Run <code>agenta init</code>, specifying the app name and API key.

- Run <code>agenta variant serve app.py</code>.

This makes the application accessible through Agenta's playground for end-to-end testing. LLM-as-a-judge is used for evaluation. The evaluator prompt is:

<code>You are an evaluator grading the quality of a PR review. CRITERIA: ... (criteria remain the same) ANSWER ONLY THE SCORE. DO NOT USE MARKDOWN. DO NOT PROVIDE ANYTHING OTHER THAN THE NUMBER</code>

The user prompt for the evaluator:

<code>https://patch-diff.githubusercontent.com/raw/{owner}/{repo}/pull/{pr_number}.diff</code>
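The evaluator is instructed to answer with the score alone, but models do not always comply, so any code that consumes the judge's reply outside of Agenta may want to parse it defensively. The sketch below assumes the score is a plain integer or decimal; the function name parse_judge_score is hypothetical, and the scoring scale is not specified in this excerpt, so no range check is applied.

```python
import re
from typing import Optional

# Matches the first integer or decimal number in the reply.
_NUMBER = re.compile(r"-?\d+(?:\.\d+)?")


def parse_judge_score(raw: str) -> Optional[float]:
    """Extract the first number from the evaluator's reply, or None if absent."""
    match = _NUMBER.search(raw)
    return float(match.group()) if match else None
```

Returning None instead of raising lets the caller decide whether an unparseable reply counts as a failed evaluation or should be retried.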

Deployment and Frontend

Deployment is done through Agenta's UI:

- Navigate to the overview page.

- Click the three dots next to the chosen variant.

- Select "Deploy to Production".

A v0.dev frontend was used for rapid UI creation.

Next Steps and Conclusion

Future improvements include prompt refinement, incorporating full code context, and handling large diffs. This tutorial successfully demonstrates building, evaluating, and deploying a production-ready AI pull request reviewer using Agenta and LiteLLM.
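One possible starting point for handling large diffs is to split the diff into per-file chunks and review each file separately, which also matches the file-by-file framing of the system prompt. This is a minimal sketch, assuming the diff is in git's unified format where every file section begins with a "diff --git a/... b/..." line; the function name split_diff_by_file is hypothetical, and paths that themselves contain " b/" are not handled.

```python
def split_diff_by_file(diff: str) -> dict[str, str]:
    """Split a git unified diff into chunks keyed by the new-side file path."""
    chunks: dict[str, list[str]] = {}
    current = None
    for line in diff.splitlines(keepends=True):
        if line.startswith("diff --git "):
            # Header looks like: diff --git a/path b/path
            current = line.rstrip("\n").split(" b/", 1)[-1]
            chunks[current] = []
        if current is not None:
            chunks[current].append(line)
    return {path: "".join(lines) for path, lines in chunks.items()}
```

Each chunk could then be passed to the LLM in its own request, keeping every prompt within the model's context window.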
