Part Building Your Own AI - Setting Up the Environment for AI/ML Development

Author: Trix Cyrus


Getting started with AI and Machine Learning requires a well-prepared development environment. This article will guide you through setting up the tools and libraries needed for your AI/ML journey, ensuring a smooth start for beginners. We’ll also discuss online platforms like Google Colab for those who want to avoid complex local setups.

System Requirements for AI/ML Development

Before diving into AI and Machine Learning projects, it’s essential to ensure your system can handle the computational demands. While most basic tasks can run on standard machines, more advanced projects (like deep learning) may require better hardware. Here’s a breakdown of system requirements based on project complexity:

1. For Beginners: Small Projects and Learning

- Operating System: Windows 10/11, macOS, or any modern Linux distribution.

- Processor: Dual-core CPU (Intel i5 or AMD equivalent).

- RAM: 8 GB (minimum); 16 GB recommended for smoother multitasking.

- Storage:

  - 20 GB free space for Python, libraries, and small datasets.

  - An SSD is highly recommended for faster performance.

- GPU (Graphics Card): Not necessary; CPU will suffice for basic ML tasks.

- Internet Connection: Required for downloading libraries, datasets, and using cloud platforms.

2. For Intermediate Projects: Larger Datasets

- Processor: Quad-core CPU (Intel i7 or AMD Ryzen 5 equivalent).

- RAM: 16 GB minimum; 32 GB recommended for large datasets.

- Storage:

  - 50–100 GB free space for datasets and experiments.

  - SSD for quick data loading and operations.

- GPU:

  - Dedicated GPU with at least 4 GB VRAM (e.g., NVIDIA GTX 1650 or AMD Radeon RX 550).

  - Useful for training larger models or experimenting with neural networks.

- Display: Dual monitors can improve productivity during model debugging and visualization.

3. For Advanced Projects: Deep Learning and Large Models

- Processor: High-performance CPU (Intel i9 or AMD Ryzen 7/9).

- RAM: 32–64 GB to handle memory-intensive operations and large datasets.

- Storage:

  - 1 TB or more (SSD strongly recommended).

  - External storage may be needed for datasets.

- GPU:

  - NVIDIA GPUs are preferred for deep learning due to CUDA support.

  - Recommended: NVIDIA RTX 3060 (12 GB VRAM) or higher (e.g., RTX 3090, RTX 4090).

  - For budget options: NVIDIA RTX 2060 or RTX 2070.

- Cooling and Power Supply:

  - Ensure proper cooling for GPUs, especially during long training sessions.

  - Reliable power supply to support hardware.
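Since CUDA support is the reason NVIDIA cards are preferred, it can help to confirm that a framework actually sees the GPU. The following is a minimal sketch that assumes PyTorch is the framework in use (it is installed later, in Step 3); the helper name `describe_gpu` is made up for illustration. If PyTorch is missing, the function says so instead of failing.

```python
def describe_gpu() -> str:
    """Return a short description of the CUDA device PyTorch can see, if any."""
    try:
        import torch  # optional; only present if you installed PyTorch
    except ImportError:
        return "PyTorch is not installed, so CUDA availability was not checked."
    if torch.cuda.is_available():
        # Device 0 is the first GPU visible to PyTorch.
        return f"CUDA GPU detected: {torch.cuda.get_device_name(0)}"
    return "No CUDA GPU detected; training will run on the CPU."


print(describe_gpu())
```

The same check works in a Google Colab notebook after a GPU runtime has been selected.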

4. Cloud Platforms: If Your System Falls Short

If your system doesn’t meet the above specs or you need more computational power, consider using cloud platforms:

- Google Colab: Free with access to GPUs (upgradable to Colab Pro for longer runtime and better GPUs).

- AWS EC2 or SageMaker: High-performance instances for large-scale ML projects.

- Azure ML or Google Cloud Vertex AI (formerly AI Platform): Suitable for enterprise-level projects.

- Kaggle Notebooks (formerly Kernels): Free for experiments with smaller datasets.

Recommended Setup Based on Use Case

| Use Case | CPU | RAM | GPU | Storage |
|---|---|---|---|---|
| Learning Basics | Dual-Core i5 | 8–16 GB | None/Integrated | 20–50 GB |
| Intermediate ML Projects | Quad-Core i7 | 16–32 GB | GTX 1650 (4 GB) | 50–100 GB |
| Deep Learning (Large Models) | High-End i9/Ryzen 9 | 32–64 GB | RTX 3060 (12 GB) | 1 TB SSD |
| Cloud Platforms | Not Required Locally | N/A | Cloud GPUs (e.g., T4, V100) | N/A |
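To see roughly where a machine falls in these tiers, a short script can report a few of the relevant numbers. This is only a sketch using the standard library: the function name `system_summary` is hypothetical, and RAM is left out because the standard library has no portable way to read it (a third-party package such as psutil would be needed).

```python
import os
import platform
import shutil


def system_summary(path: str = ".") -> dict:
    """Collect basic facts that the requirement tiers refer to."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return {
        "os": f"{platform.system()} {platform.release()}",
        "cpu_cores": os.cpu_count(),  # logical cores; may be None if unknown
        "free_disk_gb": round(usage.free / 1e9, 1),
    }


summary = system_summary()
for name, value in summary.items():
    print(f"{name}: {value}")

# 20 GB is the storage suggestion given for the beginner tier.
if summary["free_disk_gb"] < 20:
    print("Less than 20 GB free: below the beginner tier's storage suggestion.")
```

Pass a different `path` to check the drive where datasets will actually be stored.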

Step 1: Installing Python

Python is the go-to language for AI/ML due to its simplicity and a vast ecosystem of libraries. Here’s how you can install it:

- Download Python:

  - Visit python.org and download the latest stable version (preferably Python 3.9 or later).

- Install Python:

  - Follow the installation steps for your operating system (Windows, macOS, or Linux).

  - On Windows, make sure to check the option to add Python to PATH during installation.

- Verify Installation:

  - Open a terminal and type:

python --version

You should see the installed version of Python. On some macOS and Linux systems the command is python3 instead of python.
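The same check can be made from inside Python, which is useful when several interpreters are installed. The sketch below compares the running interpreter against the 3.9 recommendation; `MIN_VERSION` and `meets_minimum` are illustrative names, not part of any library.

```python
import sys

MIN_VERSION = (3, 9)  # the recommendation given in this step


def meets_minimum(version=sys.version_info, minimum=MIN_VERSION) -> bool:
    """True when the (major, minor) version is at least the minimum."""
    return tuple(version[:2]) >= minimum


print("Running Python", sys.version.split()[0])
print("Meets minimum:", meets_minimum())
```

Comparing tuples avoids the pitfalls of comparing version strings, where "3.10" would sort before "3.9".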

Step 2: Setting Up a Virtual Environment

To keep your projects organized and avoid dependency conflicts, it’s a good idea to use a virtual environment.

- Create a Virtual Environment:

python -m venv env

- Activate the Virtual Environment:

  - On Windows:

.\env\Scripts\activate

  - On macOS/Linux:

source env/bin/activate

- Install Libraries Within the Environment: After activation, any library installed will be isolated to this environment.
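If it is unclear whether activation worked, Python itself can tell you. A small sketch, assuming the environment was created with the venv module as shown here (the function name `in_virtual_env` is made up for illustration):

```python
import sys


def in_virtual_env() -> bool:
    """True when the interpreter runs inside an environment made by venv."""
    # Inside a venv, sys.prefix points at the environment directory while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


print("Interpreter:", sys.executable)
print("Virtual environment active:", in_virtual_env())
```

When the environment is active, the interpreter path printed should sit inside the env folder.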

Step 3: Installing Essential Libraries

Once Python is ready, install the following libraries, which are essential for AI/ML:

- NumPy: For numerical computations.

pip install numpy

- pandas: For data manipulation and analysis.

pip install pandas

- Matplotlib and Seaborn: For data visualization.

pip install matplotlib seaborn

- scikit-learn: For basic ML algorithms and tools.

pip install scikit-learn

- TensorFlow/PyTorch: For deep learning.

pip install tensorflow

or

pip install torch torchvision

- Jupyter Notebook: An interactive environment for coding and visualizations.

pip install notebook
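The separate pip commands can also be combined and run from a script. One way to make sure the packages land in the environment you are actually using is to call pip through the running interpreter. The sketch below builds that command; `PACKAGES`, `pip_install_command`, and the `RUN_INSTALL` switch are illustrative names, and the install itself is turned off by default.

```python
import subprocess
import sys

# Package names as given in this step (TensorFlow/PyTorch left out as optional).
PACKAGES = ["numpy", "pandas", "matplotlib", "seaborn", "scikit-learn", "notebook"]


def pip_install_command(packages):
    """Build a pip command bound to the interpreter running this script."""
    return [sys.executable, "-m", "pip", "install", *packages]


command = pip_install_command(PACKAGES)
print(" ".join(command))

RUN_INSTALL = False  # set to True to actually run the installation
if RUN_INSTALL:
    subprocess.run(command, check=True)
```

Using `sys.executable -m pip` rather than a bare `pip` avoids installing into a different Python than the one that will run your code.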

Step 4: Exploring Jupyter Notebooks

Jupyter Notebooks provide an interactive way to write and test code, making them perfect for learning AI/ML.

- Launch Jupyter Notebook:

jupyter notebook

This will open a web interface in your browser.

- Create a New Notebook:

  - Click New > Python 3 Notebook and start coding!

Step 5: Setting Up Google Colab (Optional)

For those who don’t want to set up a local environment, Google Colab is a great alternative. Its free tier runs notebooks in the browser and includes access to GPUs for training AI models, subject to usage limits.

- Visit Google Colab:

  - Go to colab.research.google.com.

- Create a New Notebook:

  - Click New Notebook to start.

- Install Libraries (if needed):

  - Libraries like NumPy and pandas are pre-installed, but you can install others from a notebook cell using:

!pip install library-name

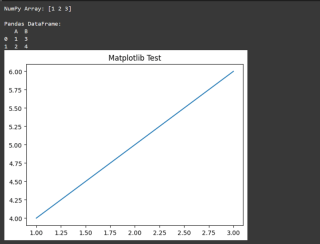

Step 6: Testing the Setup

To ensure everything is working, run a simple test in your Jupyter Notebook or Colab: check that each library you installed is present and print its version. If none of them is reported as missing, the setup is ready.
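One possible version of such a test is sketched below. It is an assumption about what a reasonable check looks like, not the author's original snippet. It reads the installed version of each package from its metadata, so a missing package is reported rather than causing a crash; the names `LIBRARIES` and `installed_versions` are illustrative.

```python
from importlib import metadata

# Distribution names as used with pip in Step 3.
LIBRARIES = ["numpy", "pandas", "matplotlib", "seaborn", "scikit-learn", "notebook"]


def installed_versions(names):
    """Map each distribution name to its installed version, or None if missing."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


for name, version in installed_versions(LIBRARIES).items():
    print(f"{name}: {version or 'NOT INSTALLED'}")
```

The printed versions depend on what pip installed, so no fixed output is shown. Add "tensorflow" or "torch" to the list if you installed one of them.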

Common Errors and Solutions

- Library Not Found:

  - Ensure you’ve installed the library in the active virtual environment.

- Python Not Recognized:

  - Verify Python is added to your system PATH.

- Jupyter Notebook Issues:

  - Ensure you’ve installed Jupyter in the correct environment.
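Most of these errors come down to which executables your terminal actually finds. As a rough diagnostic, the sketch below prints where the python, pip, and jupyter commands resolve on PATH; the function name `locate_tools` is made up for illustration.

```python
import shutil
import sys


def locate_tools(names=("python", "pip", "jupyter")):
    """Return the full path that PATH resolves each command to, or None."""
    return {name: shutil.which(name) for name in names}


print("Current interpreter:", sys.executable)
for name, path in locate_tools().items():
    print(f"{name}: {path or 'not found on PATH'}")
```

If a command is not found, or resolves to a location outside your virtual environment while the environment is supposed to be active, that usually points to the PATH or activation problem described above.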

~Trixsec
