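The previous few articles covered feature normalization and tensors. The next two articles are a concise PyTorch tutorial focused on simple, hands-on PyTorch practice.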

1. Basic arithmetic

```python
import torch

a = torch.tensor([2, 3, 4])
b = torch.tensor([3, 4, 5])
print("a + b: ", (a + b).numpy())
print("a - b: ", (a - b).numpy())
print("a * b: ", (a * b).numpy())
# "/" performs true division, so the integer tensors produce floats
print("a / b: ", (a / b).numpy())
```

Addition, subtraction, multiplication and division need no further explanation. The output is:

```text
a + b:  [5 7 9]
a - b:  [-1 -1 -1]
a * b:  [ 6 12 20]
a / b:  [0.6666667 0.75      0.8      ]
```

2. Linear regression

Linear regression finds a straight line that lies as close as possible to a set of known points, as shown in the figure:

Figure 1

```python
import torch
from torch import optim


def build_model1():
    # A single linear layer computing y = w * x (no bias term)
    return torch.nn.Sequential(torch.nn.Linear(1, 1, bias=False))


def build_model2():
    # Same model, built by registering the layer with add_module()
    model = torch.nn.Sequential()
    model.add_module("linear", torch.nn.Linear(1, 1, bias=False))
    return model


def train(model, loss, optimizer, x, y):
    model.train()
    optimizer.zero_grad()
    # Reshape x to (batch, 1) for the linear layer, then squeeze the prediction back to (batch,)
    fx = model(x.view(len(x), 1)).squeeze()
    output = loss(fx, y)
    output.backward()
    optimizer.step()
    return output.item()


def main():
    torch.manual_seed(42)
    X = torch.linspace(-1, 1, 101, requires_grad=False)
    # Targets follow y = 2x plus Gaussian noise
    Y = 2 * X + torch.randn(X.size()) * 0.33
    print("X: ", X.numpy(), ", Y: ", Y.numpy())
    model = build_model1()
    loss = torch.nn.MSELoss(reduction='mean')
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.9)
    batch_size = 10

    for i in range(100):
        cost = 0.
        num_batches = len(X) // batch_size
        for k in range(num_batches):
            start, end = k * batch_size, (k + 1) * batch_size
            cost += train(model, loss, optimizer, X[start:end], Y[start:end])
        print("Epoch = %d, cost = %s" % (i + 1, cost / num_batches))

    # The single learned weight (a 1x1 tensor) converted to a Python float
    w = next(model.parameters()).data
    print("w = %.2f" % w.item())


if __name__ == "__main__":
    main()
```

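(1) Let's start with the main function. torch.manual_seed(42) sets the seed of the random number generator so that every run produces the same sequence of random numbers. It takes an integer as the seed and is useful wherever randomness is involved, such as training neural networks, to make results reproducible;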
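As an analogy only, the sketch below uses Python's standard random module rather than PyTorch (torch.manual_seed seeds PyTorch's own generator, which is separate). The helper noisy_targets is hypothetical and mimics how main builds Y = 2X + noise: reusing a seed reproduces exactly the same noise, while a different seed gives a different sequence.

```python
import random


def noisy_targets(seed, n=5):
    # Hypothetical helper: a dedicated generator seeded with `seed`,
    # so the "random" noise sequence is repeatable
    rng = random.Random(seed)
    return [2 * x + rng.gauss(0, 0.33) for x in range(n)]


run1 = noisy_targets(42)
run2 = noisy_targets(42)
run3 = noisy_targets(7)
print(run1 == run2)  # True: same seed, same sequence
print(run1 == run3)
```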

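(2) torch.linspace(-1, 1, 101, requires_grad=False) generates evenly spaced values over a given interval. Its main arguments are the start value, the end value and the number of elements, and it returns a tensor containing that many evenly spaced values;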
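A minimal pure-Python sketch of what linspace computes, using a hypothetical helper named linspace (not PyTorch's implementation): with 101 points between -1 and 1, the spacing is (1 - (-1)) / (101 - 1) = 0.02, and both endpoints are included.

```python
def linspace(start, end, steps):
    """Return `steps` evenly spaced values from start to end, both ends included."""
    if steps == 1:
        return [float(start)]
    step = (end - start) / (steps - 1)
    return [start + i * step for i in range(steps)]


xs = linspace(-1, 1, 101)
print(len(xs))                   # 101
print(xs[0], xs[-1])             # -1.0 1.0
print(round(xs[1] - xs[0], 10))  # 0.02
```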

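(3) How build_model1 works:

- torch.nn.Sequential(torch.nn.Linear(1, 1, bias=False)) calls the nn.Sequential constructor with the linear layer as its argument and returns a neural network model containing that linear layer;

- build_model2 does the same thing as build_model1, except that it starts from an empty nn.Sequential and uses the add_module() method to add a submodule named linear.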
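To make the model concrete, here is a pure-Python sketch of what a bias-free linear layer and a Sequential-style container compute. The helper names linear and sequential are hypothetical; the weight layout (out_features, in_features) with y = x W^T + b follows the convention documented for torch.nn.Linear. With Linear(1, 1, bias=False) there is a single weight w, so the model is simply y = w * x, and training should drive w toward the slope 2 used to generate Y.

```python
def linear(x, weight, bias=None):
    """y = x @ weight^T (+ bias); weight has shape (out_features, in_features)."""
    y = [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in weight]
    if bias is not None:
        y = [y_i + b_i for y_i, b_i in zip(y, bias)]
    return y


def sequential(*layers):
    """Chain layers so each layer's output feeds the next, like nn.Sequential."""
    def forward(x):
        for layer in layers:
            x = layer(x)
        return x
    return forward


# Linear(1, 1, bias=False) has one weight w, so the model computes y = w * x
w = [[2.0]]
model = sequential(lambda x: linear(x, w))
print(model([3.0]))  # [6.0]
```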

(4) torch.nn.MSELoss(reduction='mean') defines the loss function: the mean squared error, averaged over the batch;
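For reference, with reduction='mean' the MSE loss averages the squared differences over all elements, whereas reduction='sum' would add them up instead. A minimal pure-Python sketch (mse_loss is a hypothetical name):

```python
def mse_loss(pred, target):
    """Mean squared error with reduction='mean': average of (pred - target) ** 2."""
    assert len(pred) == len(target)
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


# (0 + 0 + 4) / 3
print(mse_loss([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```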

(5) optim.SGD(model.parameters(), lr=0.01, momentum=0.9) creates an optimizer that implements stochastic gradient descent (SGD), here with a learning rate of 0.01 and a momentum of 0.9;
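PyTorch's documentation describes its SGD momentum update (with the default dampening=0 and nesterov=False) as v = momentum * v + grad followed by p = p - lr * v. The sketch below applies that rule in plain Python to a toy one-dimensional function f(w) = (w - 3)^2, chosen only for illustration; sgd_momentum is a hypothetical helper, not the torch.optim API.

```python
def sgd_momentum(grad_fn, w, lr=0.01, momentum=0.9, steps=500):
    """Minimize a 1-D function with SGD + momentum:
    v = momentum * v + grad;  w = w - lr * v  (dampening=0, no Nesterov)."""
    v = 0.0  # velocity buffer
    for _ in range(steps):
        g = grad_fn(w)
        v = momentum * v + g
        w = w - lr * v
    return w


# f(w) = (w - 3)^2 has gradient 2 * (w - 3) and its minimum at w = 3
w_final = sgd_momentum(lambda w: 2 * (w - 3), w=0.0)
print(round(w_final, 4))  # 3.0
```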

(6) The training set is split into batches of batch_size samples, and training loops over them for 100 epochs;
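One detail of this batching is worth spelling out: num_batches = len(X) // batch_size = 101 // 10 = 10, so the slices cover indices 0 to 99 and the last point X[100] is never used for training. A small pure-Python sketch of the index arithmetic (batch_ranges is a hypothetical name):

```python
def batch_ranges(n_samples, batch_size):
    """(start, end) slices used by the training loop; leftover samples are skipped."""
    num_batches = n_samples // batch_size
    return [(k * batch_size, (k + 1) * batch_size) for k in range(num_batches)]


ranges = batch_ranges(101, 10)
print(len(ranges))  # 10
print(ranges[-1])   # (90, 100)
```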

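(7) Next is the training function train, which trains the neural network model. It takes the following parameters:

- model: the neural network model, usually an instance of a class that inherits from nn.Module;

- loss: the loss function, which measures the difference between the model's predictions and the true values;

- optimizer: the optimizer, which updates the model's parameters;

- x: the input data, a torch.Tensor;

- y: the target data, a torch.Tensor;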

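(8) The body of train follows the steps commonly used in a PyTorch training loop:

- model.train() sets the model to training mode, which enables training-time behavior of layers such as dropout and batch normalization;

- optimizer.zero_grad() clears the gradients left over from the previous step so that a new round of gradient computation can start;

- the input is passed through the model to compute predictions, and the predictions and targets are passed to the loss function to compute the loss;

- output.backward() backpropagates the loss and computes the gradients of the model parameters;

- optimizer.step() updates the model parameters to reduce the loss;

- output.item() returns the loss as a Python scalar.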
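These steps can be traced by hand for this model. The sketch below is a simplification under stated assumptions: plain Python instead of autograd, a hand-derived gradient dL/dw = mean(2 * (w*x - y) * x) for fx = w * x with MSE, the whole noise-free dataset as a single batch, and plain gradient descent without momentum. train_step is a hypothetical helper.

```python
def train_step(w, xs, ys, lr):
    """One training step for the model fx = w * x with mean squared error."""
    n = len(xs)
    # Forward pass: predictions and loss
    preds = [w * x for x in xs]
    loss = sum((p - y) ** 2 for p, y in zip(preds, ys)) / n
    # Backward pass: the gradient is computed fresh each step,
    # which is what zero_grad() guarantees in PyTorch
    grad = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
    # Optimizer step: plain gradient descent
    w = w - lr * grad
    return w, loss  # like train(), report the loss computed before the update


xs = [-1 + 0.02 * i for i in range(101)]
ys = [2 * x for x in xs]  # noise-free version of Y = 2X
w = 0.0
for epoch in range(100):
    w, loss = train_step(w, xs, ys, lr=0.5)
print(round(w, 4))  # 2.0
```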

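(9) Finally, print("Epoch = %d, cost = %s" % (i + 1, cost / num_batches)) prints the current epoch and the average loss per batch; after training, the learned weight w is printed. The output of the code above is: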

```text
...
Epoch = 95, cost = 0.10514946877956391
Epoch = 96, cost = 0.10514946877956391
Epoch = 97, cost = 0.10514946877956391
Epoch = 98, cost = 0.10514946877956391
Epoch = 99, cost = 0.10514946877956391
Epoch = 100, cost = 0.10514946877956391
w = 1.98
```

3. Logistic regression

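Logistic regression uses a curve (the S-shaped logistic function) to approximate a set of discrete data points, which makes it suitable for classification, as shown in the figure: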

Figure 2

```python
import numpy as np
import torch
from torch import optim

from data_util import load_mnist  # the author's own helper for loading MNIST


def build_model(input_dim, output_dim):
    # One linear layer mapping pixel features to one score (logit) per class
    return torch.nn.Sequential(torch.nn.Linear(input_dim, output_dim, bias=False))


def train(model, loss, optimizer, x_val, y_val):
    model.train()
    optimizer.zero_grad()
    fx = model(x_val)
    output = loss(fx, y_val)
    output.backward()
    optimizer.step()
    return output.item()


def predict(model, x_val):
    model.eval()
    with torch.no_grad():
        output = model(x_val)
    # The predicted class is the index of the largest score in each row
    return output.numpy().argmax(axis=1)


def main():
    torch.manual_seed(42)
    trX, teX, trY, teY = load_mnist(onehot=False)
    trX = torch.from_numpy(trX).float()
    teX = torch.from_numpy(teX).float()
    # CrossEntropyLoss expects int64 class indices as targets
    trY = torch.tensor(trY).long()

    n_examples, n_features = trX.size()
    n_classes = 10
    model = build_model(n_features, n_classes)
    loss = torch.nn.CrossEntropyLoss(reduction='mean')
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.9)
    batch_size = 100

    for i in range(100):
        cost = 0.
        num_batches = n_examples // batch_size
        for k in range(num_batches):
            start, end = k * batch_size, (k + 1) * batch_size
            cost += train(model, loss, optimizer, trX[start:end], trY[start:end])
        predY = predict(model, teX)
        print("Epoch %d, cost = %f, acc = %.2f%%"
              % (i + 1, cost / num_batches, 100. * np.mean(predY == teY)))


if __name__ == "__main__":
    main()
```

(1) Again, start with the main function. torch.manual_seed(42) was introduced above, so it is skipped here;

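(2) load_mnist is a helper written by the author to download the MNIST dataset. It returns trX and teX, the training and test inputs, and trY and teY, the training and test labels;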

(3) How build_model works: torch.nn.Sequential(torch.nn.Linear(input_dim, output_dim, bias=False)) builds a neural network model containing a single linear layer with input_dim input features, output_dim output features and no bias term. Here n_classes = 10, so the model outputs one score for each of the 10 classes;

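(4) The remaining steps define the loss function (torch.nn.CrossEntropyLoss) and the gradient descent optimizer, split the training set into batches of batch_size, and train for 100 epochs;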
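CrossEntropyLoss takes the raw scores (logits) for the 10 classes plus the integer label of each sample; it applies log-softmax and returns the negative log-probability of the correct class, averaged over the batch with reduction='mean'. A pure-Python sketch with hypothetical helper names (cross_entropy, mean_cross_entropy):

```python
import math


def cross_entropy(logits, target):
    """Cross-entropy for one sample: -log(softmax(logits)[target])."""
    # Subtract the max score before exponentiating, for numerical stability
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def mean_cross_entropy(batch_logits, targets):
    # reduction='mean': average the per-sample losses over the batch
    losses = [cross_entropy(z, t) for z, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)


# Equal scores over 10 classes give a loss of log(10), about 2.3026
print(mean_cross_entropy([[0.0] * 10], [3]))
```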

(5) As in linear regression, optim.SGD(model.parameters(), lr=0.01, momentum=0.9) implements the stochastic gradient descent (SGD) optimization algorithm;

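(6) At the end of each training epoch, the predict function is called to make predictions. It takes two arguments: model (the trained model) and teX (the data to predict on). The steps are:

- model.eval() switches the model to evaluation mode (layers such as dropout and batch normalization switch to their inference behavior), since the model is now only used for inference;

- the data is passed through the model to obtain the scores (output) for each class;

- output is converted to a NumPy array, and argmax(axis=1) picks the predicted class for each sample.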
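The prediction and the accuracy printed later can be sketched in plain Python (argmax_rows and accuracy_percent are hypothetical names): take the index of the largest score in each row, then count how often it matches the label. Like NumPy's argmax, max() with a key returns the first index on ties.

```python
def argmax_rows(scores):
    """Index of the largest score in each row, like ndarray.argmax(axis=1)."""
    return [max(range(len(row)), key=row.__getitem__) for row in scores]


def accuracy_percent(pred, labels):
    # Same idea as 100. * np.mean(predY == teY): percentage of exact matches
    correct = sum(p == t for p, t in zip(pred, labels))
    return 100.0 * correct / len(labels)


scores = [[0.1, 2.5, -1.0],
          [3.0, 0.2, 0.7],
          [0.0, 0.4, 0.9]]
pred = argmax_rows(scores)
print(pred)                               # [1, 0, 2]
print(accuracy_percent(pred, [1, 2, 2]))  # 66.66666666666667
```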

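(7) Finally, print("Epoch %d, cost = %f, acc = %.2f%%" % (i + 1, cost / num_batches, 100. * np.mean(predY == teY))) prints the current epoch, the loss and the accuracy. The output of the code above is as follows (it runs quickly, but the accuracy is relatively low):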

```text
...
Epoch 91, cost = 0.252863, acc = 92.52%
Epoch 92, cost = 0.252717, acc = 92.51%
Epoch 93, cost = 0.252573, acc = 92.50%
Epoch 94, cost = 0.252431, acc = 92.50%
Epoch 95, cost = 0.252291, acc = 92.52%
Epoch 96, cost = 0.252153, acc = 92.52%
Epoch 97, cost = 0.252016, acc = 92.51%
Epoch 98, cost = 0.251882, acc = 92.51%
Epoch 99, cost = 0.251749, acc = 92.51%
Epoch 100, cost = 0.251617, acc = 92.51%
```

4. Neural network

The figure shows LeNet, a classic network for classifying characters:

Figure 3

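The example below uses a simpler fully connected network, but training follows the same general steps:

- define a multi-layer neural network;

- preprocess the dataset and prepare it as input to the network;

- feed the data into the network;

- compute the network's loss;

- backpropagate to compute the gradients.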

```python
import numpy as np
import torch
from torch import optim

from data_util import load_mnist  # the author's own helper for loading MNIST


def build_model(input_dim, output_dim):
    # Two linear layers with a Sigmoid activation in between (512 hidden units)
    return torch.nn.Sequential(
        torch.nn.Linear(input_dim, 512, bias=False),
        torch.nn.Sigmoid(),
        torch.nn.Linear(512, output_dim, bias=False),
    )


def train(model, loss, optimizer, x_val, y_val):
    model.train()
    optimizer.zero_grad()
    fx = model(x_val)
    output = loss(fx, y_val)
    output.backward()
    optimizer.step()
    return output.item()


def predict(model, x_val):
    model.eval()
    with torch.no_grad():
        output = model(x_val)
    # The predicted class is the index of the largest score in each row
    return output.numpy().argmax(axis=1)


def main():
    torch.manual_seed(42)
    trX, teX, trY, teY = load_mnist(onehot=False)
    trX = torch.from_numpy(trX).float()
    teX = torch.from_numpy(teX).float()
    # CrossEntropyLoss expects int64 class indices as targets
    trY = torch.tensor(trY).long()

    n_examples, n_features = trX.size()
    n_classes = 10
    model = build_model(n_features, n_classes)
    loss = torch.nn.CrossEntropyLoss(reduction='mean')
    optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.9)
    batch_size = 100

    for i in range(100):
        cost = 0.
        num_batches = n_examples // batch_size
        for k in range(num_batches):
            start, end = k * batch_size, (k + 1) * batch_size
            cost += train(model, loss, optimizer, trX[start:end], trY[start:end])
        predY = predict(model, teX)
        print("Epoch %d, cost = %f, acc = %.2f%%"
              % (i + 1, cost / num_batches, 100. * np.mean(predY == teY)))


if __name__ == "__main__":
    main()
```

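(1) The neural network code above differs little from the logistic regression code. The difference lies in build_model, which builds a model with two linear layers and a Sigmoid activation: a linear layer with input_dim input features and 512 output features, a Sigmoid activation, and a linear layer with 512 input features and output_dim output features;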
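A pure-Python sketch of the same forward pass, using toy sizes instead of 512 hidden units and hand-picked weights (all assumptions for illustration; linear, sigmoid and mlp_forward are hypothetical helpers). The Sigmoid between the layers matters: without it, two stacked bias-free linear layers would collapse into a single linear map, the same form as the single-layer model of the logistic regression section.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def linear(x, weight):
    # No bias, matching bias=False; weight shape is (out_features, in_features)
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]


def mlp_forward(x, w1, w2):
    """Linear(input_dim, hidden) -> Sigmoid -> Linear(hidden, output_dim)."""
    hidden = [sigmoid(h) for h in linear(x, w1)]
    return linear(hidden, w2)


# Toy sizes: input_dim=2, hidden=3 (512 in the article), output_dim=2
w1 = [[1.0, -1.0], [0.5, 0.5], [0.0, 2.0]]
w2 = [[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]]
print(mlp_forward([0.0, 0.0], w1, w2))  # [1.0, 0.0]
```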

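(2) Finally, print("Epoch %d, cost = %f, acc = %.2f%%" % (i + 1, cost / num_batches, 100. * np.mean(predY == teY))) prints the current epoch, the loss and the accuracy. The output of the code above is as follows (it takes longer to run than logistic regression, but the accuracy is much higher):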

```text
...
Epoch 91, cost = 0.054484, acc = 97.58%
Epoch 92, cost = 0.053753, acc = 97.56%
Epoch 93, cost = 0.053036, acc = 97.60%
Epoch 94, cost = 0.052332, acc = 97.61%
Epoch 95, cost = 0.051641, acc = 97.63%
Epoch 96, cost = 0.050964, acc = 97.66%
Epoch 97, cost = 0.050298, acc = 97.66%
Epoch 98, cost = 0.049645, acc = 97.67%
Epoch 99, cost = 0.049003, acc = 97.67%
Epoch 100, cost = 0.048373, acc = 97.68%
```

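The above is the full content of Machine Learning | PyTorch Concise Tutorial (Part 1). For more, see the other related articles on PHP中文網 (PHP Chinese website).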

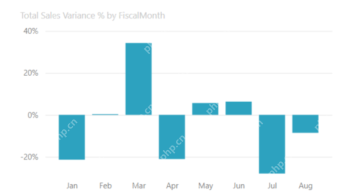
