58 lines of code extend Llama 3 to a 1-million-token context, and any fine-tuned version can use it


Llama 3, the majestic king of open source, shipped with an original context window of only... 8k tokens, which made me swallow the "really delicious" that was already on my lips.

At a time when 32k is the baseline and 100k is common, was this deliberately left as room for the open source community to contribute?

The open source community will certainly not miss this opportunity:

Now, with only 58 lines of code, any fine-tuned version of Llama 3 70B can be automatically extended to a 1048k (one million) token context.

Behind it is a LoRA extracted from a context-extended fine-tune of Llama 3 70B Instruct. The file is only 800 MB.

Then, using Mergekit, you can run the LoRA alongside other models of the same architecture or merge it directly into a model.

The 1048k-context fine-tune that the LoRA was extracted from recently scored all green (100% accuracy) on the popular needle-in-a-haystack test.

It must be said that the speed of progress of open source is exponential.

How the 1048k-context LoRA was made

First, the 1048k-context fine-tuned version of Llama 3 comes from Gradient AI, a startup that builds enterprise AI solutions.

The corresponding LoRA comes from developer Eric Hartford, who extracted the parameter changes by comparing the fine-tuned model with the original version.

He first produced a 524k-context version and then followed up with the 1048k version.

To begin with, the Gradient team continued training from the original Llama 3 70B Instruct and obtained Llama-3-70B-Instruct-Gradient-1048k.

The specific method is as follows:

- Adjust the position encoding: use NTK-aware interpolation to initialize the optimal schedule for RoPE theta, then optimize it, so that high-frequency information is not lost after the length is extended

- Progressive training: extend the model's context length with the Blockwise RingAttention method proposed by Pieter Abbeel's team at UC Berkeley
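As a rough illustration of the position-encoding step, the widely used NTK-aware rule of thumb scales the RoPE base by s^(d/(d-2)), where s is the context extension factor and d is the attention head dimension. The sketch below assumes Llama 3's published defaults (base theta 500,000, head dimension 128, 8,192-token window). The function names are hypothetical, and the resulting theta is only what this rule suggests as a starting point, not necessarily the value Gradient settled on.

```python
def ntk_scaled_theta(base_theta: float, head_dim: int, scale: float) -> float:
    """NTK-aware rule of thumb: theta' = theta * scale ** (d / (d - 2))."""
    if head_dim <= 2:
        raise ValueError("head_dim must be greater than 2")
    return base_theta * scale ** (head_dim / (head_dim - 2))


def rope_inv_freq(theta: float, head_dim: int) -> list:
    """Per-pair RoPE rotation frequencies: theta ** (-2i / d) for i in [0, d/2)."""
    return [theta ** (-2 * i / head_dim) for i in range(head_dim // 2)]


# Assumed Llama 3 defaults (not taken from the article).
BASE_THETA = 500_000.0
HEAD_DIM = 128
ORIG_CTX = 8_192
TARGET_CTX = 1_048_576

scale = TARGET_CTX / ORIG_CTX
new_theta = ntk_scaled_theta(BASE_THETA, HEAD_DIM, scale)

old_freq = rope_inv_freq(BASE_THETA, HEAD_DIM)
new_freq = rope_inv_freq(new_theta, HEAD_DIM)

print(f"context scale factor: {scale:.0f}x")
print(f"NTK-aware theta: {new_theta:.3e}")
# The fastest-rotating pair is left untouched, the slowest is stretched by 1/scale.
print(f"highest-frequency ratio: {new_freq[0] / old_freq[0]:.4f}")
print(f"lowest-frequency ratio: {new_freq[-1] / old_freq[-1]:.6f}")
```

The two ratios show why this kind of scaling is described as preserving high-frequency information: the highest-frequency dimension keeps its original rotation speed, while the lowest-frequency dimension is slowed by exactly the extension factor.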

Notably, the team layered parallelization on top of Ring Attention through a custom network topology, making better use of large GPU clusters and easing the network bottleneck caused by passing many KV blocks between devices.

In the end, the model's training speed increased 33-fold.

In the long-text retrieval evaluation, errors tend to appear only in the hardest version of the test, when the "needle" is hidden in the middle of the text.
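To make the idea of working through KV blocks one at a time concrete, here is a minimal single-device sketch of blockwise attention for one query vector. It keeps a running (online) softmax so that only one key/value block has to be in hand at any moment. In Ring Attention those blocks would arrive from neighbouring devices; the ring communication is left out entirely, the function names and toy sizes are hypothetical, and this is not the Gradient team's code.

```python
import math
import random


def full_attention(q, keys, values):
    """Reference: softmax(q . k / sqrt(d)) weighted sum over all keys at once."""
    d = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]


def blockwise_attention(q, keys, values, block_size):
    """Same result, visiting one KV block at a time with a running softmax."""
    d = len(q)
    running_max = float("-inf")
    running_sum = 0.0                  # softmax denominator accumulated so far
    acc = [0.0] * len(values[0])       # unnormalised output accumulated so far
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated under the previous maximum.
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * vj for a, vj in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]


random.seed(0)
dim, seq_len = 8, 64                   # toy sizes, chosen only for illustration
q = [random.gauss(0, 1) for _ in range(dim)]
keys = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(seq_len)]
values = [[random.gauss(0, 1) for _ in range(dim)] for _ in range(seq_len)]

ref = full_attention(q, keys, values)
blk = blockwise_attention(q, keys, values, block_size=16)
max_err = max(abs(a - b) for a, b in zip(ref, blk))
print(f"max difference vs. full attention: {max_err:.2e}")
```

Because the partial results from each block can be combined exactly, the blocks can be spread across devices, which is where the cost of moving KV blocks between them becomes the bottleneck the team's topology targets.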
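For readers unfamiliar with the test, a needle-in-a-haystack prompt can be sketched as follows: one out-of-place sentence is inserted at a chosen relative depth of long filler text, and the model is then asked to retrieve it. The filler, needle, question, and helper names below are invented for illustration. Real harnesses measure length in tokens and send the prompt to an actual model, which this sketch does not do.

```python
def build_haystack(filler_sentence, needle, n_sentences, depth):
    """Place `needle` at a relative depth (0.0 = start, 1.0 = end) of filler text."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    sentences = [filler_sentence] * n_sentences
    position = round(depth * n_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences), position


def contains_answer(model_reply, expected):
    """Crude scoring: did the reply mention the expected fact?"""
    return expected.lower() in model_reply.lower()


# Hypothetical test content.
FILLER = "The sky was grey and the train was late."
NEEDLE = "The secret code for the vault is 7391."
QUESTION = "What is the secret code for the vault?"

for depth in (0.0, 0.5, 1.0):
    context, position = build_haystack(FILLER, NEEDLE, n_sentences=1000, depth=depth)
    prompt = f"{context}\n\n{QUESTION}"
    print(f"depth={depth:.1f}: needle at sentence {position}, prompt chars={len(prompt)}")
```

Sweeping both the context length and the depth produces the familiar grid of results, where a depth near 0.5 corresponds to the "middle of the text" case.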

With the context-extended fine-tuned model in hand, the open source tool Mergekit is used to compare it against the base model and extract the parameter differences as a LoRA.
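The idea of turning a weight difference into a LoRA can be sketched on a toy matrix: subtract the base weights from the fine-tuned weights, then approximate that delta with a low-rank product B @ A. The sketch below uses a rank-1 power iteration as a stand-in for a truncated SVD. The matrices and helper names are made up, and the article does not say which factorization Mergekit actually applies, so treat this as an assumption about the general technique rather than a description of the tool.

```python
import math


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def subtract(a, b):
    return [[x - y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def rank1_factor(delta, iters=50):
    """Leading singular pair by power iteration; returns B (out x 1) and A (1 x in)."""
    n_in = len(delta[0])
    v = [1.0 / math.sqrt(n_in)] * n_in
    for _ in range(iters):
        u = [sum(row[j] * v[j] for j in range(n_in)) for row in delta]           # delta @ v
        v = [sum(delta[i][j] * u[i] for i in range(len(u))) for j in range(n_in)]  # delta^T @ u
        norm = math.sqrt(sum(x * x for x in v))
        if norm == 0.0:
            raise ValueError("delta is zero or orthogonal to the starting vector")
        v = [x / norm for x in v]
    u = [sum(row[j] * v[j] for j in range(n_in)) for row in delta]  # singular value folded in
    return [[x] for x in u], [v]


# Toy "base" weights and a "fine-tuned" copy that differs by a rank-1 update.
base = [[0.5, -0.2, 0.1], [0.0, 0.3, -0.4], [0.7, 0.1, 0.2], [-0.3, 0.6, 0.0]]
a_col = [0.2, -0.1, 0.05, 0.3]
b_row = [1.0, 0.5, -0.5]
fine_tuned = [[w + a_col[i] * b_row[j] for j, w in enumerate(row)]
              for i, row in enumerate(base)]

delta = subtract(fine_tuned, base)
lora_B, lora_A = rank1_factor(delta)
approx = matmul(lora_B, lora_A)
max_err = max(abs(x - y) for ra, rb in zip(approx, delta) for x, y in zip(ra, rb))
print(f"LoRA shapes: B={len(lora_B)}x{len(lora_B[0])}, A={len(lora_A)}x{len(lora_A[0])}")
print(f"max reconstruction error: {max_err:.2e}")
```

Storing only the small factors instead of the full delta is what lets an adapter for a 70B model fit in a file of a few hundred megabytes.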

Also using Mergekit, you can merge the extracted LoRA into other models with the same architecture.
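Numerically, merging a LoRA into a model amounts to adding the low-rank product to each targeted weight matrix: W' = W + scale * (B @ A). Here is a minimal sketch on toy lists. The function name and values are hypothetical, the scale factor follows the common alpha / rank convention as an assumption, and this is not the code from the gist linked at the end of the article.

```python
def merge_lora(weight, lora_B, lora_A, scale=1.0):
    """Return weight + scale * (B @ A) as a new matrix, leaving the inputs untouched."""
    rows, cols, rank = len(weight), len(weight[0]), len(lora_A)
    # The adapter only fits a layer whose shape matches the one it was extracted from.
    if len(lora_B) != rows or len(lora_A[0]) != cols:
        raise ValueError("adapter shape does not match the target weight")
    if any(len(b_row) != rank for b_row in lora_B):
        raise ValueError("B and A disagree on the LoRA rank")
    return [[weight[i][j] + scale * sum(lora_B[i][k] * lora_A[k][j] for k in range(rank))
             for j in range(cols)]
            for i in range(rows)]


# Toy example: a 2x3 weight matrix and a rank-1 adapter.
weight = [[1.0, 2.0, 3.0],
          [4.0, 5.0, 6.0]]
lora_B = [[1.0],
          [2.0]]
lora_A = [[0.5, 0.0, -1.0]]

merged = merge_lora(weight, lora_B, lora_A, scale=2.0)
print(merged)  # [[2.0, 2.0, 1.0], [6.0, 5.0, 2.0]]
```

The shape check mirrors the constraint in the text: the adapter can only be applied to models with the same architecture, because every targeted matrix must have the dimensions the LoRA was extracted for.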

The merge code, only 58 lines long, has also been open-sourced by Eric Hartford on GitHub.

It is not yet clear whether this LoRA merge works with Llama 3 variants fine-tuned on Chinese.

What is clear is that the Chinese developer community has taken notice of this development.

524k version LoRA: https://huggingface.co/cognitivecomputations/Llama-3-70B-Gradient-524k-adapter

1048k version LoRA: https://huggingface.co/cognitivecomputations/Llama-3-70B-Gradient-1048k-adapter

Merge code: https://gist.github.com/ehartford/731e3f7079db234fa1b79a01e09859ac


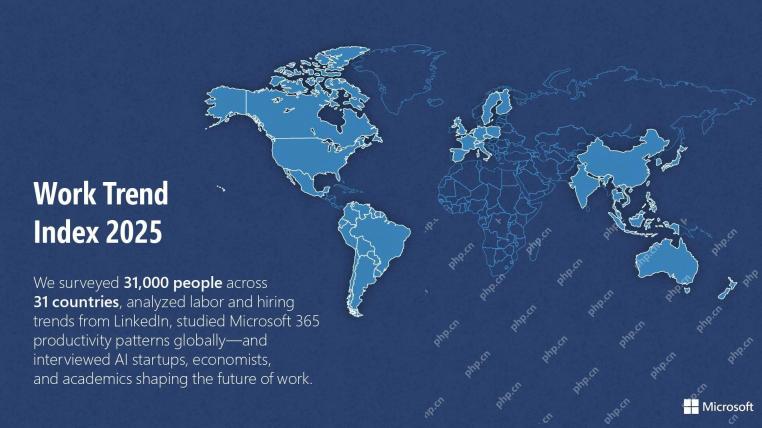

