Home >Technology peripherals >AI >An 8-year masterpiece of NTU Zhou Zhihua's team! The 'learningware' system solves the problem of machine learning reuse, and 'model fusion' emerges a new paradigm of scientific research

An 8-year masterpiece of NTU Zhou Zhihua's team! The 'learningware' system solves the problem of machine learning reuse, and 'model fusion' emerges a new paradigm of scientific research

- PHPzforward

- 2024-02-01 14:24:261380browse

HuggingFace is the most popular machine learning open source community, with 300,000 different machine learning models and 100,000 available applications.

What would it look like if the 300,000 models on HuggingFace could be freely combined to complete new learning tasks together?

In fact, in 2016, when HuggingFace came out, Professor Zhou Zhihua of Nanjing University proposed the concept of “Learnware” and drew such a blueprint.

Recently, the team of Professor Zhou Zhihua of Nanjing University launched such a platform-Beimingwu.

Address: https://bmwu.cloud/

Beimingwu not only provides researchers and users with the opportunity to upload their own models, but also Model matching and collaborative fusion can be performed according to user needs to efficiently handle learning tasks.

Paper address: https://arxiv.org/abs/2401.14427

Beimingwu System warehouse: https://www.gitlink.org.cn/beimingwu/beimingwu

Scientific research toolkit warehouse: https://www.gitlink.org.cn/beimingwu/learnware

The biggest feature of this platform is the introduction of the Learnware system, which has achieved a breakthrough in model adaptive matching and collaboration capabilities based on user needs.

Learningware consists of a machine learning model and a specification describing the model, that is, "Learningware = Model Specification".

The specification of the learning software consists of two parts: "semantic specification" and "statistical specification":

- The semantic specification determines the type of the model through text and functions are described;

- Statistical rules use various machine learning technologies to describe the statistical information contained in the model.

The specification of the learningware describes the capabilities of the model so that the model can be fully recognized and reused in the future without the user knowing anything about the learningware in advance to meet user needs. .

The protocol is the core component of the learningware base system, which connects all the learningware processes in the system, including learningware uploading, organization, Search, deploy and reuse.

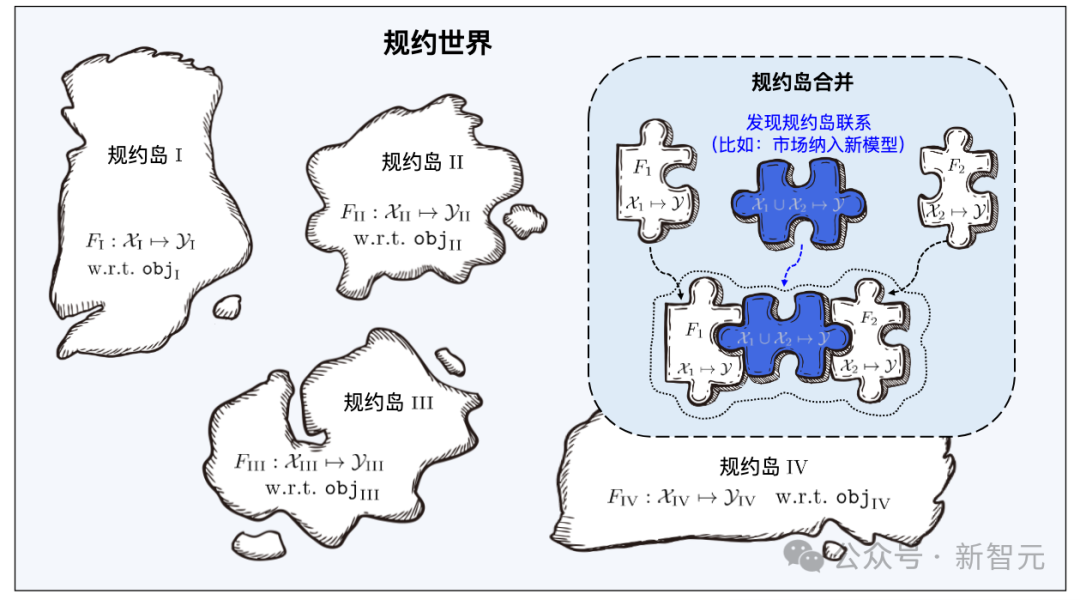

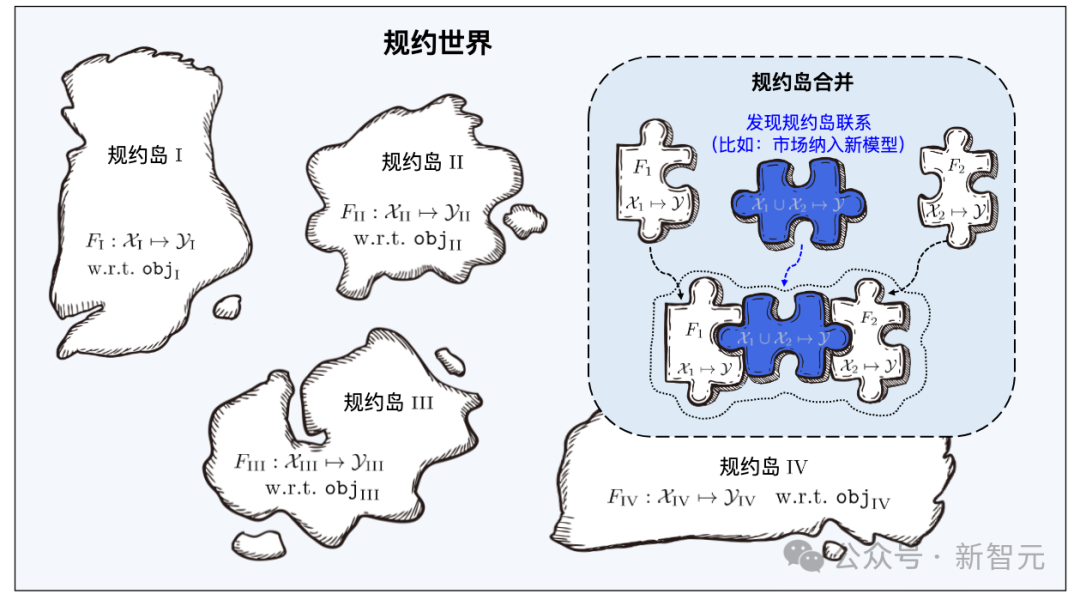

Just like Yanziwu in "Dragon Babu" is composed of many small islands, the regulations in Beimingwu are also like small islands.

Learnware from different feature/marker spaces constitutes numerous protocol islands, and all protocol islands together constitute the protocol in the learnware base system world. In the protocol world, if the connections between different islands can be discovered and established, then the corresponding protocol islands will be able to be merged.

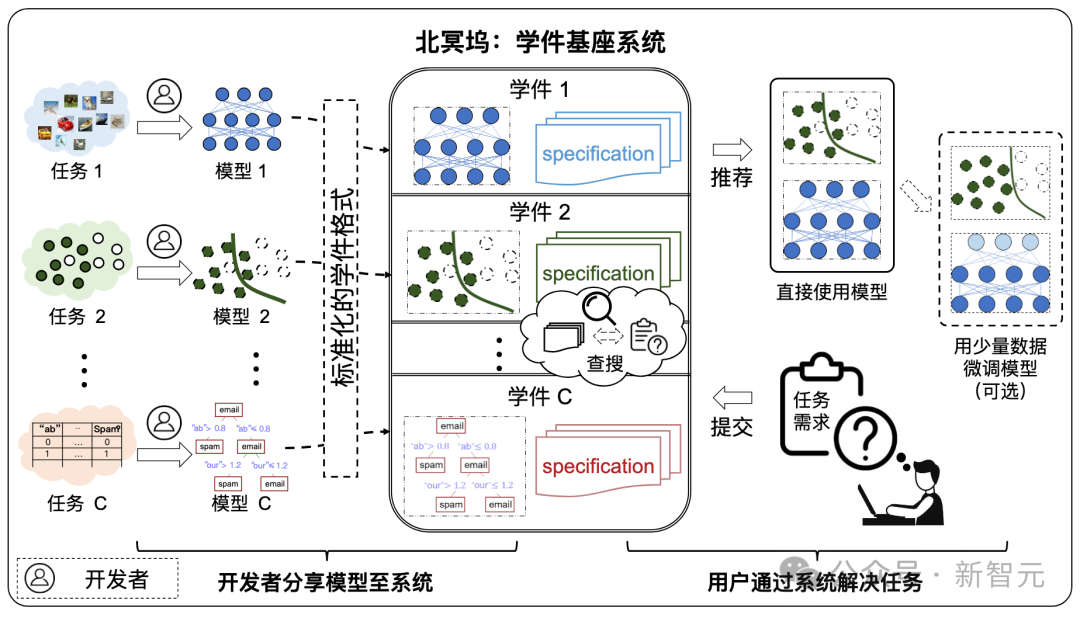

Under the learningware paradigm, developers around the world can share models to the learningware base system. The system helps users efficiently solve machine learning tasks by effectively searching and reusing learningware. No need to build a machine learning model from scratch.

Beimingwu is the first systematic open source implementation of academicware, providing a preliminary scientific research platform for academicware-related research.

Developers who are willing to share can freely submit models, and the Learning Warehouse assists in generating specifications to form learning software and store them in the Learning Warehouse. In this process, there is no need to disclose your training data to the learning dock.

Future users can submit their requirements to the Learning Warehouse, and with the assistance of the Learning Warehouse, they can search and reuse learning materials to complete their own machine learning tasks, and users do not need to submit to the Learning Warehouse. The dock leaked its own data.

And in the future, after there are millions of learning software in the learning dock, "emergence" behavior may occur: machine learning tasks for which no model has been specially developed in the past may be solved through Solved by reusing several existing learning software.

Learningware Base System

Machine learning has achieved great success in many fields, but it still faces many problems, such as the need for a large amount of training data and Superior training techniques, difficulties with continuous learning, risk of catastrophic forgetting, and leakage of data privacy/ownership, etc.

Although each of the above problems has corresponding research, because the problems are coupled to each other, solving one of the problems may cause other problems to become more serious.

The learning base system hopes to solve many of the above problems at the same time through an overall framework:

- Lack of training data/skills: even for lack of Ordinary users with smaller training skills or smaller amounts of data can also obtain powerful machine learning models because users can obtain high-performing learningware from the learningware base system and further adjust or improve it, rather than building the model from scratch themselves. .

- Continuous learning: As learning software with excellent performance trained on various tasks is continuously submitted, the knowledge in the learning software base system will continue to be enriched, thereby naturally realizing continuous and lifelong learning. .

- Catastrophic forgetting: Once a learning piece is received, it will always be accommodated in the learning base system, unless all aspects of its functions can be replaced by other learning pieces. Therefore, old knowledge in the learning base system is always retained and never forgotten.

- Data privacy/ownership: Developers only submit models without sharing private data, so data privacy/ownership can be well protected. Although the possibility of reverse engineering the model cannot be completely ruled out, the risk of privacy leakage with the learning base system is very small compared to many other privacy protection schemes.

The composition of the learning base system

As shown in the figure below, the system workflow is divided into the following two stages:

- Submission stage: Developers spontaneously submit various learning software to the learning software base system, and the system will perform quality inspection and further organization on these learning software.

- Deployment stage: When the user submits task requirements, the learningware base system will recommend learningware that is helpful to the user's task according to the learningware specification and guide the user to deploy and reuse it.

Protocol World

Protocol is the core component of the learning base system, connecting the system in series Regarding the entire process of learning software, including learning software uploading, organization, search, deployment and reuse.

Learning materials from different feature/marker spaces constitute numerous protocol islands, and all protocol islands together constitute the protocol world in the learning component base system. In the protocol world, if the connections between different islands can be discovered and established, then the corresponding protocol islands will be able to be merged.

When the learning base system searches, it first locates the specific protocol island through the semantic specifications in the user requirements, and then uses the user requirements to The statistical protocol in the protocol accurately identifies the learning artifacts on the protocol island. The merging of different protocol islands means that the corresponding learning software can be used for tasks in different feature/marker spaces, that is, it can be reused for tasks beyond its original purpose.

Learningware Paradigm builds a unified specification space by making full use of the capabilities of machine learning models shared by the community, and efficiently solves machine learning tasks for new users in a unified manner. As the number of learning pieces increases, by effectively organizing the learning piece structure, the overall ability of the learning piece base system to solve tasks will be significantly enhanced.

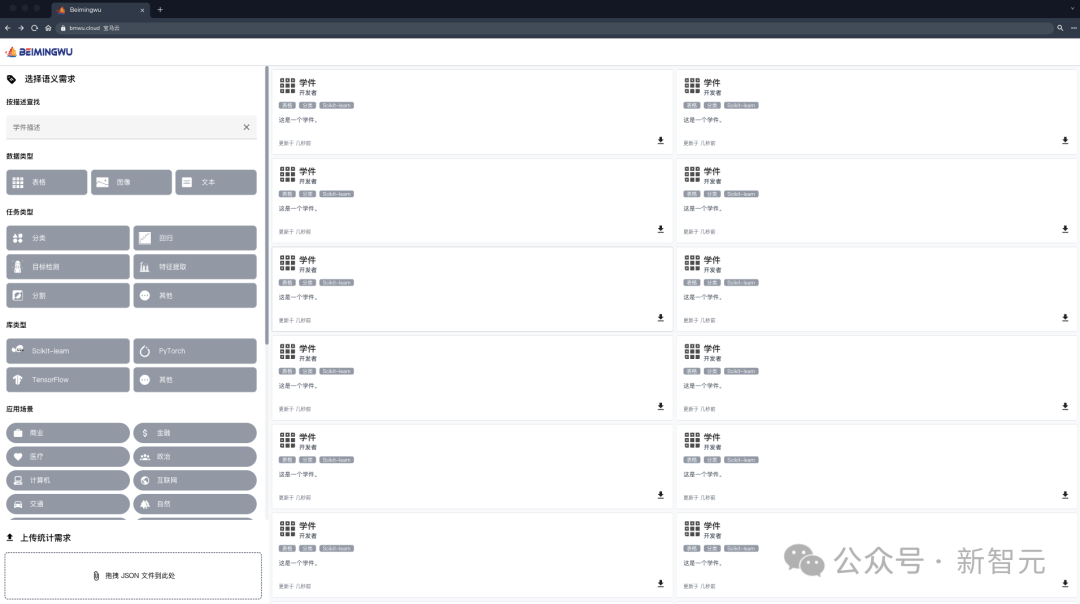

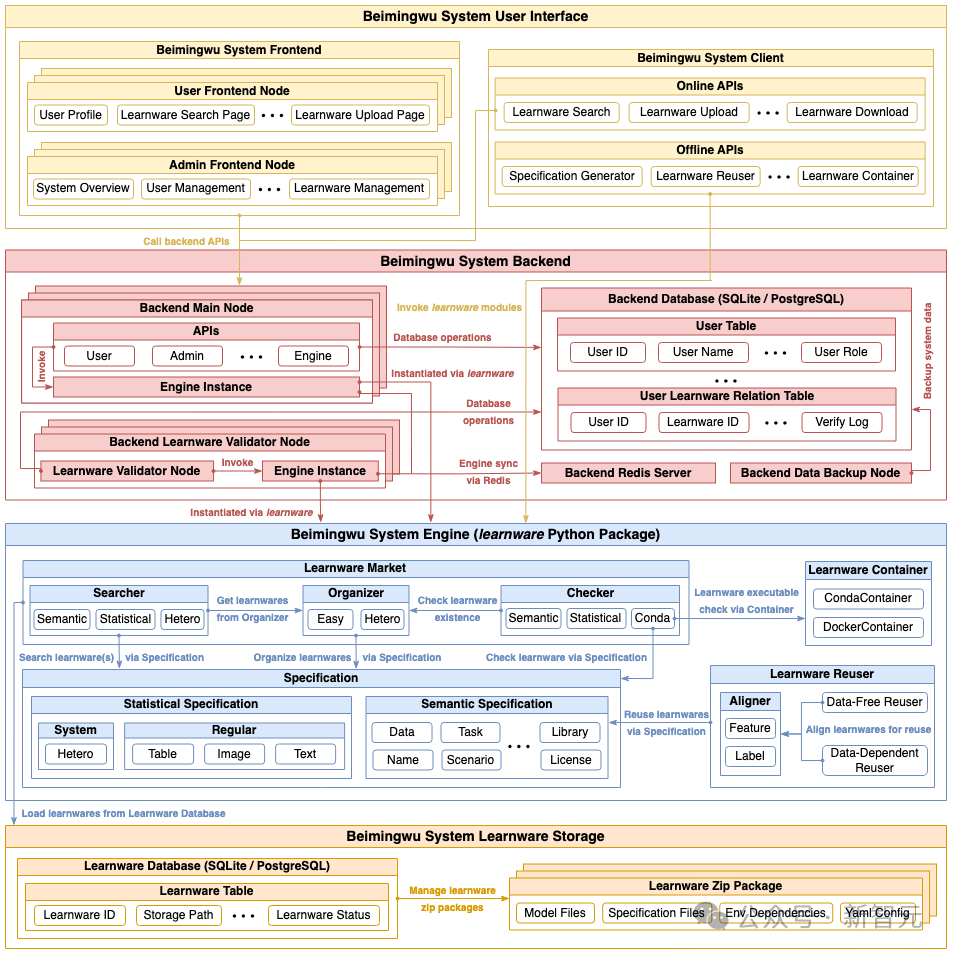

The architecture of Beimingwu

As shown in the figure below, the system architecture of Beimingwu contains four levels, from the learning software storage layer As for the user interaction layer, the learningware paradigm is systematically implemented from the bottom up for the first time. The specific functions of the four levels are as follows:

- Learningware storage layer: manages learningware stored in zip package format, and provides access to relevant information through the learningware database;

- System engine layer: includes the learningware paradigm All processes, including learningware uploading, detection, organization, search, deployment and reuse, are run independently of the backend and frontend in the form of a learnware Python package, providing a rich algorithm interface for learningware-related tasks and scientific research exploration;

- System back-end layer: realizes the industrial-grade deployment of Beimingwu, provides stable system online services, and supports user interaction between the front-end and the client by providing a rich back-end API;

- User interaction layer: Implements web-based front-end and command-line-based client, providing rich and convenient ways for user interaction.

Experimental Evaluation

In the paper, the research team also constructed various types of basic experimental scenarios to evaluate tables, images and text data A benchmark algorithm for specification generation, learning artifact identification and reuse.

Tabular Data Experiment

On various tabular data sets, the team first evaluated the learning software system Performance in identifying and reusing learning artifacts that share the same feature space as user tasks.

Furthermore, since form tasks usually come from different feature spaces, the research team also evaluated the recognition and reuse of learning pieces from different feature spaces.

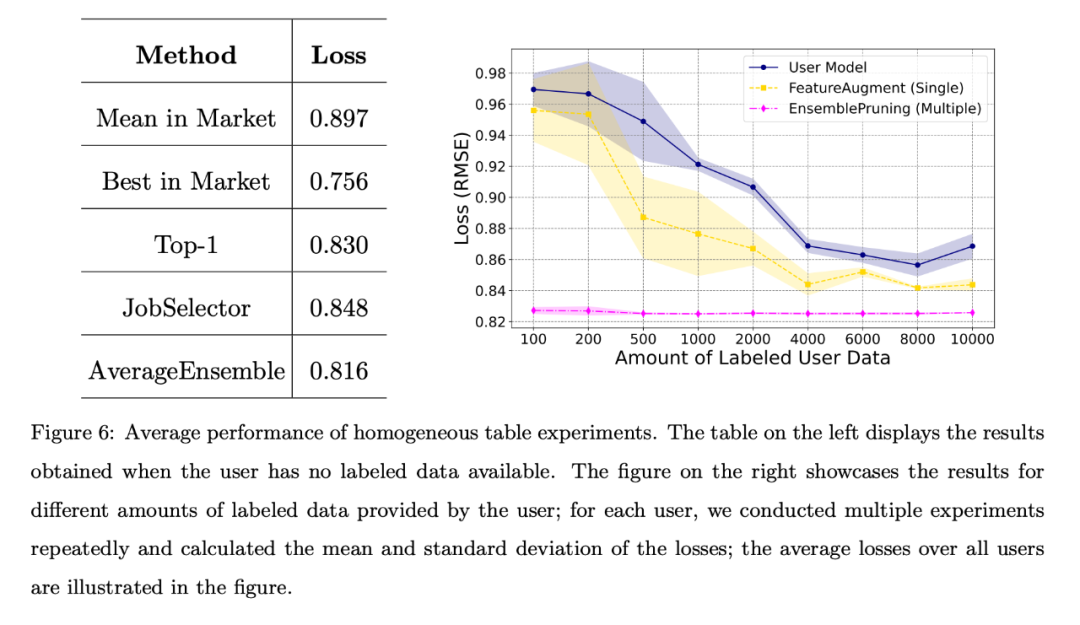

Homogeneous case

In the homogeneous case, the 53 stores in the PFS dataset act as 53 independent user.

Each store utilizes its own test data as user task data and adopts a unified feature engineering approach. These users can then search the base system for homogeneous learning items that share the same feature space as their tasks.

When the user has no labeled data or the amount of labeled data is limited, the team compared different benchmark algorithms, and the average loss for all users is shown in the figure below. The left table shows that the data-free approach is much better than randomly selecting and deploying a learnware from the market; the right chart shows that when the user has limited training data, identifying and reusing single or multiple learnware is better than user-trained models. Better performance.

#The left table shows that the data-free approach is much better than randomly selecting and deploying a piece of learning from the market; the right table shows that when the user When training data is limited, identifying and reusing single or multiple learning pieces has better performance than user-trained models.

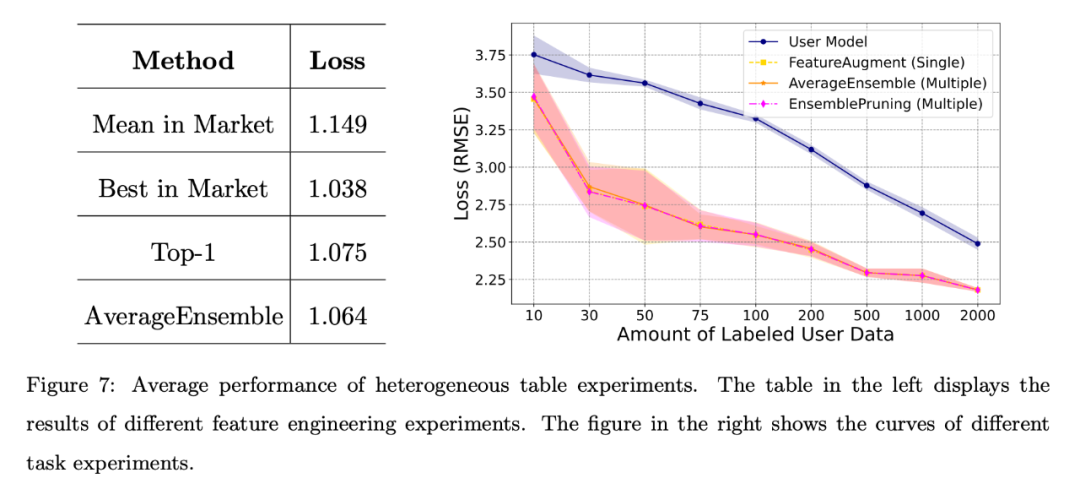

Heterogeneous cases

Heterogeneous cases can be further divided into for different feature engineering and different task scenarios.

Different feature engineering scenarios:

The results shown on the left in the figure below show that even if the user lacks annotation data, the learning software in the system It can show strong performance, especially the AverageEnsemble method that reuses multiple learning pieces.

Different task scenarios:

The right picture above shows the user self-training model and several Loss curves for learningware reuse methods.

Obviously, experimental verification of heterogeneous learning pieces is beneficial when the amount of user annotated data is limited, and helps to better align with the user's feature space.

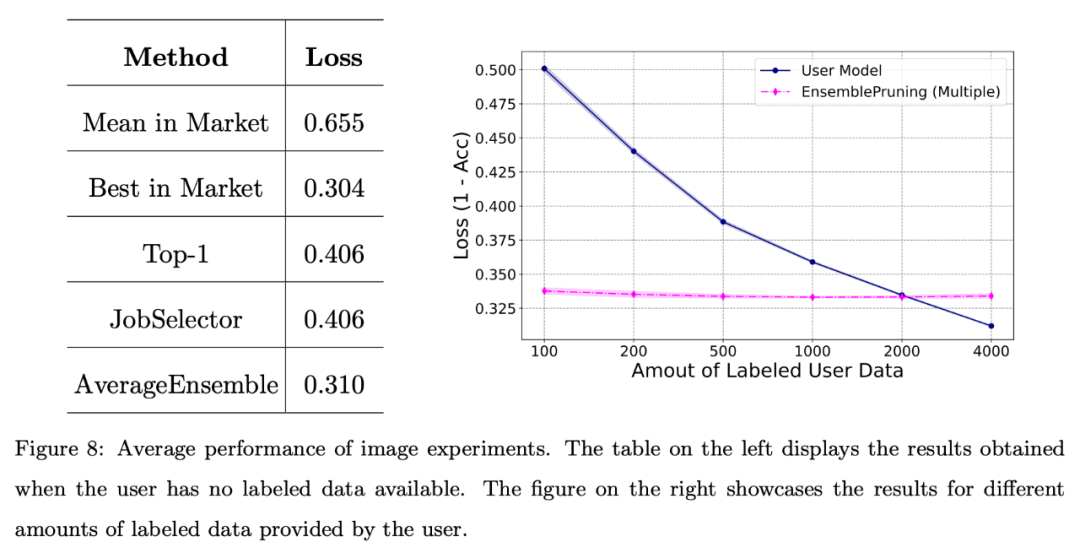

Image and text data experiments

In addition, the research team conducted basic testing of the system on image data sets Evaluate.

The figure below shows that leveraging a learning base system can yield good performance when users face scarcity of annotated data or only have a limited amount of data (fewer than 2000 instances).

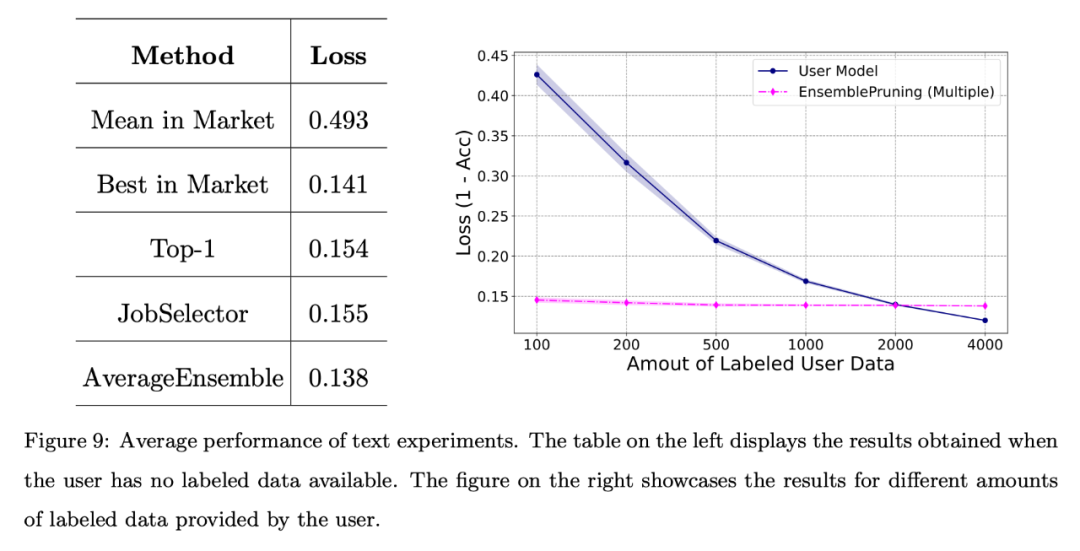

The team also conducted a basic evaluation of the system on a benchmark text dataset. Feature space alignment via a unified feature extractor.

As shown in the figure below, even when no annotation data is provided, the performance obtained through learningware identification and reuse is comparable to the best learningware in the system.

In addition, using the learning base system can reduce approximately 2,000 samples compared to training the model from scratch.

The above is the detailed content of An 8-year masterpiece of NTU Zhou Zhihua's team! The 'learningware' system solves the problem of machine learning reuse, and 'model fusion' emerges a new paradigm of scientific research. For more information, please follow other related articles on the PHP Chinese website!

Related articles

See more- Detailed introduction to python machine learning decision tree

- What does windows boot manager boot failed mean?

- What should I do if the win10 blue screen appears with the error code kernel security check failure?

- Use Tsinghua Source to accelerate Python package downloads, Pip settings for Windows operating systems

- Learn more about how pip works: interpret the download and installation process of Python packages