Attention-free large model Eagle 7B: based on RWKV, inference cost reduced by 10-100x

Small models have recently attracted considerable attention in the AI field as alternatives to models with hundreds of billions of parameters. For example, the Mistral-7B model released by the French AI startup Mistral AI outperformed Llama 2 13B on every benchmark, and outperformed Llama 1 34B in code, math, and reasoning.

Compared with large models, small models have several advantages, such as lower compute requirements and the ability to run on-device.

Recently a new language model has emerged: Eagle 7B, a 7.52B-parameter model from the open-source non-profit organization RWKV. It has the following characteristics:

- Built on the RWKV-v5 architecture, which has a lower inference cost (RWKV is a linear transformer whose inference cost is 10-100 times lower);

- Trained on 1.1 trillion tokens across more than 100 languages;

- Outperforms all 7B-class models on multilingual benchmarks;

- In English evaluations, approaches the performance of Falcon (1.5T), LLaMA2 (2T), and Mistral;

- Comparable to MPT-7B (1T) in English evaluations;

- An "attention-free transformer".
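One reason an attention-free design can be cheaper at inference time is memory: standard attention keeps a key and a value vector for every previous token in every layer, while a recurrent model carries a fixed-size state. The sketch below is a rough illustration of that difference only. The layer count, model width, and per-layer state size are hypothetical values chosen for illustration, not Eagle 7B's real configuration, and the sketch does not attempt to reproduce the 10-100x figure.

```python
def kv_cache_bytes(num_layers: int, d_model: int, context_len: int,
                   bytes_per_value: int = 2) -> int:
    """Memory for cached keys and values in standard multi-head attention.

    Every layer stores one key vector and one value vector (d_model values
    each) for every token already in the context.
    """
    return 2 * num_layers * d_model * context_len * bytes_per_value


def recurrent_state_bytes(num_layers: int, state_values_per_layer: int,
                          bytes_per_value: int = 2) -> int:
    """Memory for a fixed-size recurrent state; independent of context length."""
    return num_layers * state_values_per_layer * bytes_per_value


# Hypothetical 7B-like dimensions, chosen for illustration only.
NUM_LAYERS, D_MODEL = 32, 4096
# Hypothetical state size per layer; NOT Eagle 7B's real figure.
STATE_VALUES_PER_LAYER = 64 * D_MODEL

for context_len in (1_024, 8_192, 65_536):
    cache = kv_cache_bytes(NUM_LAYERS, D_MODEL, context_len)
    state = recurrent_state_bytes(NUM_LAYERS, STATE_VALUES_PER_LAYER)
    print(f"{context_len:>6} tokens: KV cache {cache / 2**20:8.0f} MiB, "
          f"recurrent state {state / 2**20:4.0f} MiB")
```

Under these assumptions the cache doubles whenever the context doubles, while the recurrent state stays the same size however long the context grows.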

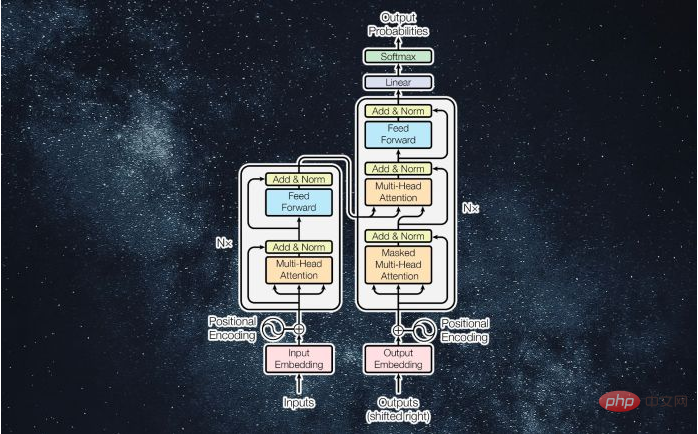

Eagle 7B is built on the RWKV-v5 architecture. RWKV (Receptance Weighted Key Value) is a novel architecture that combines the advantages of RNNs and Transformers while avoiding their shortcomings. Its design alleviates the memory and scaling bottlenecks of the Transformer and achieves more efficient linear scaling, while retaining some of the properties that made the Transformer dominant in the field.

RWKV has since been iterated to its sixth generation, RWKV-6, with performance and size similar to Transformer models. Researchers can use this architecture to build more efficient models in the future.
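To make the "RNN plus Transformer" idea concrete, here is a minimal sketch of a weighted key-value recurrence in the style of earlier RWKV versions, reduced to a single scalar channel. It is an assumption-laden simplification: RWKV-v5 as used in Eagle 7B differs in detail, real implementations work on vectors with numerical stabilization, and the names `w` (decay) and `u` (bonus for the current token) are used here for illustration. The point is that the same outputs can be computed either by re-reading all earlier tokens or by carrying two running sums forward.

```python
import math


def wkv_parallel(keys, values, w, u):
    """Direct form: each output re-reads every earlier token (attention-like)."""
    out = []
    for t in range(len(keys)):
        # The current token gets an extra bonus u instead of a decay.
        num = math.exp(u + keys[t]) * values[t]
        den = math.exp(u + keys[t])
        for i in range(t):
            # Older tokens are down-weighted exponentially with distance.
            weight = math.exp(-(t - 1 - i) * w + keys[i])
            num += weight * values[i]
            den += weight
        out.append(num / den)
    return out


def wkv_recurrent(keys, values, w, u):
    """RNN form: same outputs, but only two running sums are kept as state."""
    a = b = 0.0  # decayed sums of exp(k) * v and exp(k) over past tokens
    decay = math.exp(-w)
    out = []
    for k, v in zip(keys, values):
        bonus = math.exp(u + k)
        out.append((a + bonus * v) / (b + bonus))
        a = decay * a + math.exp(k) * v
        b = decay * b + math.exp(k)
    return out


keys = [0.1, -0.3, 0.5, 0.2]
values = [1.0, 2.0, -1.0, 0.5]
parallel = wkv_parallel(keys, values, w=0.5, u=0.3)
recurrent = wkv_recurrent(keys, values, w=0.5, u=0.3)
assert all(math.isclose(p, r, rel_tol=1e-9, abs_tol=1e-12)
           for p, r in zip(parallel, recurrent))
```

The direct form does work proportional to the number of earlier tokens for each output, whereas the recurrent form does a constant amount of work per token, which is where the linear scaling comes from.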

For more information about RWKV, you can refer to "Reshaping RNN in the Transformer era, RWKV expands the non-Transformer architecture to tens of billions of parameters."

It is worth mentioning that RWKV-v5 Eagle 7B can be used for personal or commercial use without restrictions.

Test results on 23 languages

The multilingual performance of the different models was measured on the xLAMBADA, xStoryCloze, xWinograd, and xCopa benchmarks.

These benchmarks mostly cover common sense reasoning and show a large leap in the multilingual performance of the RWKV architecture from v4 to v5. However, because multilingual benchmarks are scarce, the team could only test the model in 23 of the more commonly used languages; its ability in the remaining 75 or more languages is still unknown.

Performance in English

English performance was measured across 12 benchmarks covering common sense reasoning and world knowledge.

In addition, v5 performance is starting to match what would be expected of a Transformer trained on a comparable number of tokens.

Mistral-7B was reportedly trained on 2-7 trillion tokens, which helps it keep its lead among 7B-scale models. The team hopes to close this gap so that RWKV-v5 Eagle 7B surpasses LLaMA2 and reaches the level of Mistral.

The results show that RWKV-v5 Eagle 7B checkpoints near the 300-billion-token mark perform similarly to pythia-6.9b. This is consistent with earlier Pile-based experiments on the RWKV-v4 architecture, in which linear transformers like RWKV performed at a similar level to transformers trained on the same number of tokens.

Predictably, the emergence of this model marks the arrival of the strongest linear transformer (in terms of evaluation benchmarks) to date.

The above is the detailed content of Attention-free large model Eagle7B: Based on RWKV, inference cost is reduced by 10-100 times. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

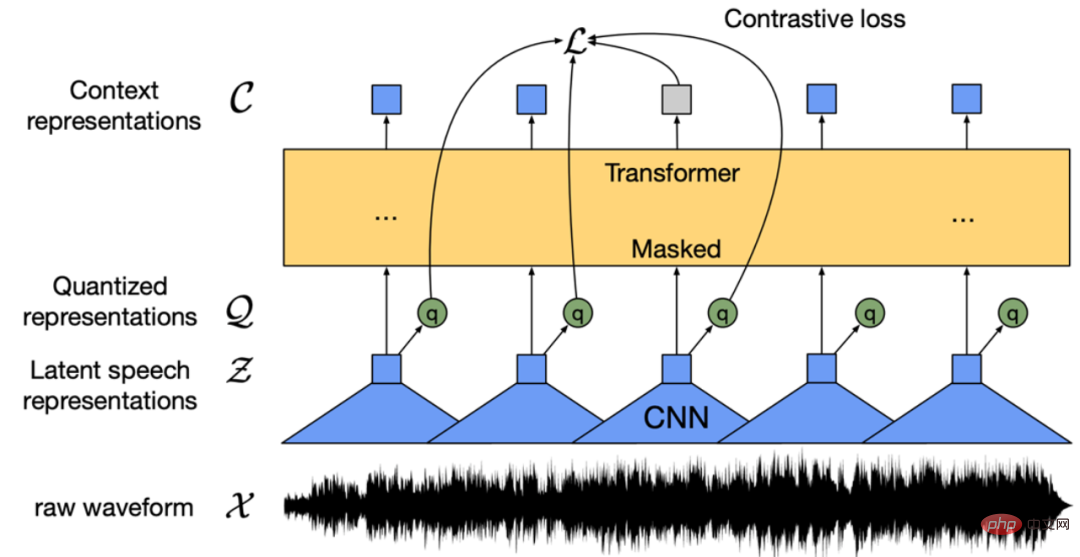

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Linux new version

SublimeText3 Linux latest version

WebStorm Mac version

Useful JavaScript development tools

Dreamweaver CS6

Visual web development tools

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

SublimeText3 Chinese version

Chinese version, very easy to use