Knowledge compression: model distillation and model pruning

Model distillation and model pruning are neural network compression techniques that reduce a model's parameter count and computational cost, making it faster and cheaper to run. Model distillation trains a smaller model to reproduce the behavior of a larger one, transferring the larger model's knowledge to it. Pruning shrinks a model by removing redundant connections and parameters. Both techniques are widely used for model compression and optimization.

Model Distillation

Model distillation is a technique that reproduces the predictive power of a large model in a smaller one. The large model is called the "teacher model" and the small model is called the "student model". Teacher models typically have more parameters and greater capacity, so they can fit the training data more closely and usually perform better on test data. During distillation, the student model is trained to imitate the teacher's predictions so that it reaches similar performance at a smaller model size. In this way, distillation reduces model size while retaining most of the teacher's predictive power.

Specifically, model distillation is achieved through the following steps:

1. Train the teacher model: Use conventional methods such as backpropagation and stochastic gradient descent to train a large deep neural network and make sure it performs well on the training data.

2. Generate soft labels: Run the teacher model on the training data and use its outputs as soft labels. Unlike traditional hard labels (one-hot encoding), a soft label is a probability distribution over all categories, so it carries more continuous information and better describes the relationships between categories.

3. Train the student model: Use the soft labels as training targets for a small deep neural network so that it performs well on the training data. The student takes the same inputs and produces the same kind of outputs as the teacher, but its structure is simpler and it has fewer parameters.
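Steps 2 and 3 can be illustrated with a minimal sketch in plain Python. It is not a full training loop: it only shows how soft labels might be produced from teacher outputs and how a student could be scored against them. The temperature parameter, the cross-entropy loss, and all logit values are assumptions added for illustration; the article does not prescribe them.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Cross-entropy between the teacher's soft labels and the student's predictions."""
    soft_labels = softmax(teacher_logits, temperature)    # step 2: soft labels
    student_probs = softmax(student_logits, temperature)  # student's prediction
    return -sum(t * math.log(s) for t, s in zip(soft_labels, student_probs))

# Hypothetical teacher logits for one example over three classes: cat, dog, truck.
teacher_logits = [4.0, 3.0, -2.0]
hard_label = [1, 0, 0]  # one-hot: says only "cat"
soft_labels = softmax(teacher_logits, temperature=2.0)  # also says "dog is far likelier than truck"

# Two hypothetical students: one close to the teacher, one far from it.
loss_close = distillation_loss([3.8, 3.1, -1.5], teacher_logits)
loss_far = distillation_loss([0.0, 0.0, 4.0], teacher_logits)

print("soft labels:", [round(p, 3) for p in soft_labels])
print("loss (close student):", round(loss_close, 3))
print("loss (far student):", round(loss_far, 3))
```

In an actual training run, this loss would be minimized over the student's parameters with backpropagation, and it is often combined with the ordinary hard-label loss. That combination is a common practice rather than something stated in this article.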

The advantage of model distillation is that the small model needs less computation and storage while retaining much of the teacher's performance. In addition, soft labels provide more continuous information than hard labels, allowing the student model to better learn the relationships between categories. Model distillation has been widely used in fields such as natural language processing, computer vision, and speech recognition.

Model Pruning

Model pruning is a technique that compresses neural network models by removing unnecessary neurons and connections. Neural network models usually have a large number of parameters and redundant connections. These parameters and connections may not have much impact on the performance of the model, but will greatly increase the computational complexity and storage space requirements of the model. Model pruning can reduce model size and computational complexity by removing these useless parameters and connections while maintaining model performance.

The specific steps of model pruning are as follows:

1. Train the original model: Use conventional training methods, such as backpropagation and stochastic gradient descent, to train a large deep neural network that performs well on the training data.

2. Evaluate neuron importance: Use a method such as L1 regularization, the Hessian matrix, or a Taylor expansion to estimate the importance of each neuron, that is, its contribution to the final output. Neurons with low importance can be treated as useless.

3. Remove useless neurons and connections: Remove useless neurons and connections based on the importance of the neurons. This can be achieved by setting their weights to zero or deleting the corresponding neurons and connections.
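Steps 2 and 3 can be sketched in plain Python for a single layer. As an assumption for illustration, importance is scored here by the L1 norm of each neuron's incoming weights, which is only one simple criterion among those listed above. The function names, the weight values, and the pruning fraction are hypothetical.

```python
def neuron_importance(weights):
    """Score each neuron (row) by the L1 norm of its incoming weights."""
    return [sum(abs(w) for w in row) for row in weights]

def prune_neurons(weights, fraction):
    """Prune the lowest-scoring `fraction` of neurons.

    Returns (masked, compact):
      masked  keeps the layer shape but sets pruned rows to zero,
      compact deletes the pruned rows entirely.
    """
    scores = neuron_importance(weights)
    n_prune = int(len(weights) * fraction)
    # Indices of the n_prune neurons with the smallest scores.
    order = sorted(range(len(weights)), key=lambda i: scores[i])
    pruned = set(order[:n_prune])
    masked = [[0.0] * len(row) if i in pruned else list(row)
              for i, row in enumerate(weights)]
    compact = [list(row) for i, row in enumerate(weights) if i not in pruned]
    return masked, compact

# Hypothetical layer: 4 neurons (rows), each with 3 incoming weights.
layer = [
    [0.9, -1.2, 0.4],
    [0.01, -0.02, 0.03],
    [-0.7, 0.5, 1.1],
    [0.05, 0.0, -0.04],
]
masked, compact = prune_neurons(layer, fraction=0.5)

print("importance:", [round(s, 2) for s in neuron_importance(layer)])
print("masked:", masked)
print("compact:", compact)
```

Zeroing weights keeps the layer's shape, so any saving depends on sparse storage or sparse kernels. Deleting rows shrinks the layer itself, but the next layer must then drop the matching input weights as well.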

The advantage of model pruning is that it can substantially reduce the size and computational complexity of the model, which makes inference faster and cheaper. In addition, pruning can help reduce overfitting and may improve the model's ability to generalize. Model pruning has also been widely used in fields such as natural language processing, computer vision, and speech recognition.

Finally, although model distillation and model pruning are both neural network compression techniques, they differ in method and emphasis. Model distillation uses the predictions of a teacher model to train a separate, smaller student model, while model pruning compresses an existing model by removing its unneeded parameters and connections.

The above is the detailed content of Knowledge compression: model distillation and model pruning. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

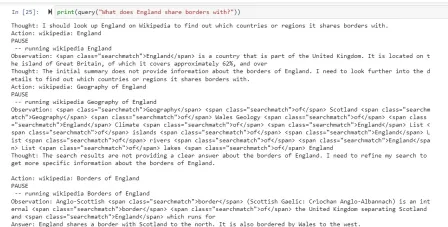

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

WebStorm Mac version

Useful JavaScript development tools

Atom editor mac version download

The most popular open source editor

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software