The value function in reinforcement learning and the importance of its Bellman equation

Reinforcement learning is a branch of machine learning in which an agent learns, through trial and error, which actions to take in an environment so as to maximize cumulative reward. Two of its central concepts are the value function and the Bellman equation, which together explain how an agent can evaluate states and actions.

A value function gives the expected long-term return (the discounted sum of future rewards) that can be obtained from a given state. In reinforcement learning, rewards measure how good an outcome is. They can be immediate or delayed, with the consequences of an action showing up only at later time steps. Value functions come in two kinds: the state value function V(s), which evaluates how good it is to be in state s when following a given policy, and the action value function Q(s,a), which evaluates how good it is to take a specific action a in state s and follow the policy afterwards. By computing and updating value functions, reinforcement learning algorithms can find optimal policies that maximize long-term return.
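Both kinds of value function are expectations of the same quantity, the discounted return of a trajectory. As a minimal sketch (the function name is illustrative, not from the original article), the return for one observed reward sequence can be computed backwards using G = r + γG:

```python
def discounted_return(rewards, gamma):
    """Return G = r_0 + gamma*r_1 + gamma^2*r_2 + ... for one trajectory's rewards."""
    g = 0.0
    # Accumulate from the last reward backwards: G_t = r_t + gamma * G_{t+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g
```

For example, `discounted_return([1.0, 0.0, 2.0], 0.9)` gives approximately 1 + 0.9 × 0 + 0.81 × 2 = 2.62. Averaging such returns over many trajectories that start in the same state is one way to estimate that state's value.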

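The state value function V(s) is the expected return obtained by starting in state s and following a policy π thereafter; when π is the optimal policy, this is the optimal state value function. We can estimate V(s) by averaging the returns observed when the policy is executed from that state. Monte Carlo methods and temporal-difference (TD) learning are the standard ways to estimate the state value function: Monte Carlo waits until the end of an episode and uses the actual return, while TD learning updates after every step using the immediate reward plus the current estimate of the next state's value.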
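To make the two estimators concrete, here is a minimal Python sketch on a hypothetical five-state random walk (the environment, its rewards, and all function names are assumptions for illustration, not from the original article). Monte Carlo averages complete observed returns, while TD(0) nudges V(s) toward R + γV(s') after each step. With γ = 1 the true values for this walk are (s + 1) / 6, which both estimates should approach.

```python
import random

# Hypothetical 5-state random walk (states 0..4), always starting in state 2.
# Each step moves left or right with probability 0.5 (the equiprobable policy).
# Stepping right from state 4 ends the episode with reward +1; stepping left
# from state 0 ends it with reward 0. With GAMMA = 1 the true values are (s + 1) / 6.
N_STATES = 5
GAMMA = 1.0


def run_episode(rng):
    """Generate one episode as a list of (state, reward, next_state or None)."""
    s = N_STATES // 2
    steps = []
    while True:
        s_next = s + (1 if rng.random() < 0.5 else -1)
        if s_next < 0:
            steps.append((s, 0.0, None))  # left terminal, reward 0
            return steps
        if s_next >= N_STATES:
            steps.append((s, 1.0, None))  # right terminal, reward +1
            return steps
        steps.append((s, 0.0, s_next))
        s = s_next


def monte_carlo_v(episodes, seed=0):
    """Every-visit Monte Carlo: V(s) is the average return observed after visits to s."""
    rng = random.Random(seed)
    totals = [0.0] * N_STATES
    counts = [0] * N_STATES
    for _ in range(episodes):
        g = 0.0
        # Walk backwards so the return accumulates as G = R + GAMMA * G.
        for s, r, _ in reversed(run_episode(rng)):
            g = r + GAMMA * g
            totals[s] += g
            counts[s] += 1
    return [t / c if c else 0.0 for t, c in zip(totals, counts)]


def td0_v(episodes, alpha=0.01, seed=0):
    """TD(0): after each step, move V(s) toward the target R + GAMMA * V(s')."""
    rng = random.Random(seed)
    v = [0.0] * N_STATES
    for _ in range(episodes):
        # The random policy ignores V, so applying the updates in order after
        # generating the episode matches updating online during it.
        for s, r, s_next in run_episode(rng):
            target = r + (GAMMA * v[s_next] if s_next is not None else 0.0)
            v[s] += alpha * (target - v[s])
    return v
```

Calling `monte_carlo_v(20000)` and `td0_v(20000)` should give lists close to [0.167, 0.333, 0.5, 0.667, 0.833]. The constant step size α in TD(0) keeps some residual noise, which is why a small value is used here.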

The action value function Q(s,a) is the expected return obtained after taking action a in state s and then following the policy. Q-learning and SARSA are two common algorithms for estimating it. Both move Q(s,a) toward a target made of the immediate reward plus the discounted value of the next state-action pair: SARSA uses the action the agent actually takes next (on-policy), while Q-learning uses the best action available in the next state (off-policy).
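The difference between the two update rules can be sketched in a few lines of Python. This is a minimal tabular version, assuming a dictionary keyed by (state, action) pairs; the function names, the defaults α = 0.1 and γ = 0.9, and the `done` flag for terminal transitions are illustrative choices rather than anything specified in the article.

```python
from collections import defaultdict


def q_learning_update(q, s, a, r, s_next, actions, alpha=0.1, gamma=0.9, done=False):
    """Off-policy update: bootstrap from the best action value available in s_next."""
    best_next = 0.0 if done else max(q[(s_next, b)] for b in actions)
    q[(s, a)] += alpha * (r + gamma * best_next - q[(s, a)])


def sarsa_update(q, s, a, r, s_next, a_next, alpha=0.1, gamma=0.9, done=False):
    """On-policy update: bootstrap from the action a_next the agent actually takes in s_next."""
    next_value = 0.0 if done else q[(s_next, a_next)]
    q[(s, a)] += alpha * (r + gamma * next_value - q[(s, a)])


# Unseen (state, action) pairs default to 0.0.
q_ql = defaultdict(float, {("s1", "left"): 2.0, ("s1", "right"): 5.0})
q_sarsa = defaultdict(float, {("s1", "left"): 2.0, ("s1", "right"): 5.0})
q_learning_update(q_ql, "s0", "right", 1.0, "s1", ["left", "right"], alpha=0.5)
sarsa_update(q_sarsa, "s0", "right", 1.0, "s1", "left", alpha=0.5)
```

Here the same transition (from s0 via right, reward 1) moves Q(s0, right) from 0 to 0.5 × (1 + 0.9 × 5) = 2.75 under Q-learning, but only to 0.5 × (1 + 0.9 × 2) = 1.4 under SARSA, because the agent's next action was left rather than the greedy right.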

The Bellman equation is a central concept in reinforcement learning: it expresses the value of a state recursively in terms of the values of its successor states. It comes in two forms, one for the state value function and one for the action value function. The former combines the immediate reward with the value of the next state, while the latter additionally conditions on the action taken. These equations underlie most value-based reinforcement learning algorithms and help agents learn to make optimal decisions.

The Bellman equation for the state value function states that the value of a state can be computed recursively from the immediate reward and the value of the next state:

$$V(s) = \mathbb{E}\left[R + \gamma V(s')\right]$$

where V(s) is the value of state s; R is the immediate reward received after taking an action in state s; γ is the discount factor (0 ≤ γ ≤ 1), which weighs future returns against immediate ones; E denotes the expected value, taken over the actions chosen by the policy and the resulting transitions; and s' is the next state.
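The state-value equation can be applied directly as an update rule. The sketch below performs iterative policy evaluation on a hypothetical two-state MDP (the states, actions, rewards, and the uniform random policy are all assumptions for illustration): each sweep replaces V(s) with the expected value of R + γV(s') under the policy and the transition probabilities, until the values stop changing.

```python
# Hypothetical two-state MDP: P[state][action] -> list of (probability, next_state, reward).
P = {
    "A": {"stay": [(1.0, "A", 0.0)], "go": [(1.0, "B", 1.0)]},
    "B": {"stay": [(1.0, "B", 0.0)], "go": [(0.5, "A", 0.0), (0.5, "B", 0.0)]},
}
# Hypothetical policy: pick each action with probability 0.5 in every state.
PI = {"A": {"stay": 0.5, "go": 0.5}, "B": {"stay": 0.5, "go": 0.5}}


def policy_evaluation(P, pi, gamma=0.9, theta=1e-10):
    """Sweep V(s) <- E[R + gamma * V(s')] until the largest change is below theta."""
    v = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            # Expectation over the policy's actions, then over transition outcomes.
            new_v = sum(
                pi[s][a] * sum(p * (r + gamma * v[s2]) for p, s2, r in P[s][a])
                for a in P[s]
            )
            delta = max(delta, abs(new_v - v[s]))
            v[s] = new_v  # in-place update: later states in the same sweep see it
        if delta < theta:
            return v


v = policy_evaluation(P, PI)
```

For this MDP and γ = 0.9, solving the two Bellman equations by hand gives V(A) = 6.5/3.1 ≈ 2.097 and V(B) = 4.5/3.1 ≈ 1.452, which is where the iteration settles.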

The Bellman equation for the action value function states that the value of taking an action in a state can be computed recursively from the immediate reward and the value of the next state-action pair:

$$Q(s,a) = \mathbb{E}\left[R + \gamma Q(s',a')\right]$$

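where Q(s,a) is the value of taking action a in state s; R is the immediate reward received after taking action a in state s; γ is the discount factor; E denotes the expected value; s' is the next state reached after taking action a; and a' is the optimal action to take in the next state s'. Because a' is the optimal action, Q(s',a') is the largest action value available in s', which makes this the Bellman optimality equation; if a' is instead the action chosen by the policy being evaluated, the same form describes that policy's action values.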
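When a' is the optimal action, Q(s',a') equals the maximum action value in s', so the equation can be iterated as Q-value iteration. A minimal sketch, on a hypothetical two-state MDP whose states, actions, and rewards are illustrative assumptions:

```python
GAMMA = 0.9

# Hypothetical two-state MDP: P[state][action] -> list of (probability, next_state, reward).
P = {
    "A": {"stay": [(1.0, "A", 0.0)], "go": [(1.0, "B", 1.0)]},
    "B": {"stay": [(1.0, "B", 0.0)], "go": [(0.5, "A", 0.0), (0.5, "B", 0.0)]},
}


def q_value_iteration(P, gamma=GAMMA, theta=1e-10):
    """Iterate Q(s,a) <- sum over outcomes of p * (r + gamma * max_a' Q(s',a'))."""
    q = {(s, a): 0.0 for s in P for a in P[s]}
    while True:
        delta = 0.0
        for s in P:
            for a in P[s]:
                new_q = sum(
                    p * (r + gamma * max(q[(s2, a2)] for a2 in P[s2]))
                    for p, s2, r in P[s][a]
                )
                delta = max(delta, abs(new_q - q[(s, a)]))
                q[(s, a)] = new_q
        if delta < theta:
            return q


q = q_value_iteration(P)
# The optimal action in each state is the one with the largest Q value.
greedy = {s: max(P[s], key=lambda a: q[(s, a)]) for s in P}
```

For γ = 0.9 the fixed point gives Q(A, go) = 11/2.9 ≈ 3.793 versus Q(A, stay) ≈ 3.414, and Q(B, go) = 9/2.9 ≈ 3.103 versus Q(B, stay) ≈ 2.793, so the greedy policy chooses "go" in both states.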

The Bellman equation is central to reinforcement learning because it turns value estimation into a recursive computation: the value of each state or state-action pair is defined in terms of the values of its successors. Algorithms built on value functions, such as value iteration, policy iteration, and Q-learning, apply the Bellman equation repeatedly as an update rule until the value estimates converge.
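As one example of such an algorithm, the sketch below implements policy iteration on a hypothetical two-state MDP (states, actions, and rewards are illustrative assumptions). It alternates policy evaluation, which applies the state-value Bellman equation until convergence, with greedy policy improvement, and stops once no state's action can be strictly improved.

```python
GAMMA = 0.9

# Hypothetical two-state MDP: P[state][action] -> list of (probability, next_state, reward).
P = {
    "A": {"stay": [(1.0, "A", 0.0)], "go": [(1.0, "B", 1.0)]},
    "B": {"stay": [(1.0, "B", 0.0)], "go": [(0.5, "A", 0.0), (0.5, "B", 0.0)]},
}


def lookahead(s, a, v, gamma=GAMMA):
    """One-step Bellman backup: expected R + gamma * V(s') for action a in state s."""
    return sum(p * (r + gamma * v[s2]) for p, s2, r in P[s][a])


def evaluate(policy, gamma=GAMMA, theta=1e-10):
    """Iterative policy evaluation for a deterministic policy (state -> action)."""
    v = {s: 0.0 for s in P}
    while True:
        delta = 0.0
        for s in P:
            new_v = lookahead(s, policy[s], v, gamma)
            delta = max(delta, abs(new_v - v[s]))
            v[s] = new_v
        if delta < theta:
            return v


def policy_iteration(gamma=GAMMA):
    policy = {s: next(iter(P[s])) for s in P}  # start with the first listed action
    while True:
        v = evaluate(policy, gamma)
        stable = True
        for s in P:
            best = max(P[s], key=lambda a: lookahead(s, a, v, gamma))
            # Switch only on a strict improvement, so ties cannot cause cycling.
            if lookahead(s, best, v, gamma) > lookahead(s, policy[s], v, gamma) + 1e-12:
                policy[s] = best
                stable = False
        if stable:
            return policy, v


policy, v = policy_iteration()
```

Starting from the policy that always chooses "stay", the first improvement step switches state A to "go", the second switches state B to "go", and the third finds no strict improvement, so the loop stops with V(A) = 11/2.9 ≈ 3.793.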

In short, the value function and the Bellman equation are two foundational concepts in reinforcement learning and the basis for understanding the field. By estimating value functions and solving the Bellman equation recursively, an agent can find an optimal policy, one that chooses the best action in each state of a given environment and so maximizes long-term return.

The above is the detailed content of The value function in reinforcement learning and the importance of its Bellman equation. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

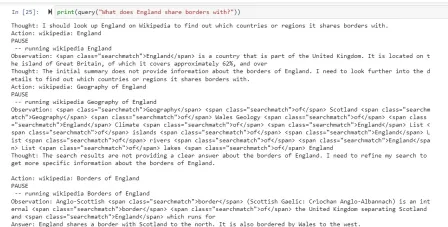

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

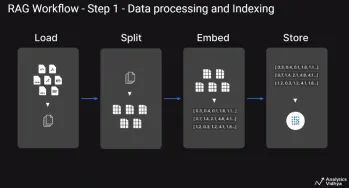

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools