
ICCV 2023 awards announced: popular papers such as ControlNet and SAM win


The International Conference on Computer Vision (ICCV) opened in Paris, France, this week.

As one of the top academic conferences in computer vision, ICCV is held every two years.

ICCV's popularity has always rivaled that of CVPR, and it has repeatedly set new records.

At today's opening ceremony, ICCV officially announced this year's paper statistics: a total of 8,068 papers were submitted, of which 2,160 were accepted, for an acceptance rate of 26.8%. This is slightly higher than the 25.9% acceptance rate of the previous conference, ICCV 2021.
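As a quick sanity check on the figures above, the acceptance rate is simply accepted papers divided by submissions. The helper name `acceptance_rate` is illustrative only.

```python
def acceptance_rate(accepted: int, submitted: int) -> float:
    """Acceptance rate as a percentage of submitted papers."""
    return 100 * accepted / submitted


iccv_2023 = acceptance_rate(accepted=2160, submitted=8068)
print(f"ICCV 2023 acceptance rate: {iccv_2023:.1f}%")  # prints "ICCV 2023 acceptance rate: 26.8%"
```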

The organizers also released statistics on paper topics: "3D from multi-view and sensors" was the most popular topic.

The highlight of the opening ceremony was undoubtedly the award presentation. Below, we introduce the winners of the Best Paper, Best Paper nomination, and Best Student Paper awards one by one.

Best Paper: Marr Prize

Two papers won this year's Best Paper Award (Marr Prize).

The first paper, "Passive Ultra-Wideband Single-Photon Imaging," comes from researchers at the University of Toronto.

Paper address: https://openaccess.thecvf.com/content/ICCV2023/papers/Wei_Passive_Ultra-Wideband_Single-Photon_Imaging_ICCV_2023_paper.pdf

Authors: Mian Wei, Sotiris Nousias, Rahul Gulve, David B. Lindell, Kiriakos N. Kutulakos

Institution: University of Toronto

Abstract: This paper considers the problem of imaging a dynamic scene over an extreme range of time scales simultaneously (from seconds to picoseconds), and doing so passively, with little light and without any timing signal from the light sources illuminating it. Because existing flux estimation techniques for single-photon cameras break down in this regime, the authors develop a flux probing theory that draws on insights from stochastic calculus to reconstruct a pixel's time-varying flux from its stream of photon detection timestamps.

The paper uses this theory to (1) show that passive, free-running SPAD cameras have an attainable frequency bandwidth spanning the entire DC-to-31 GHz range in low-flux conditions, (2) derive a novel Fourier-domain flux reconstruction algorithm, and (3) ensure that the algorithm's noise model remains valid even for very low photon counts or non-negligible dead times.

The paper experimentally demonstrates the potential of this asynchronous imaging regime: (1) imaging scenes illuminated simultaneously by light sources (bulbs, projectors, multiple pulsed lasers) operating at very different speeds, without synchronization; (2) passive non-line-of-sight video acquisition; and (3) recording ultra-wideband video that can later be played back at 30 Hz to show everyday motion, or a billion times slower to show the propagation of light itself.
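The paper's own reconstruction algorithm is not reproduced here. As a rough illustration of the idea behind Fourier-domain flux reconstruction from timestamps, the sketch below uses a textbook estimator instead: for an ideal Poisson photon stream, summing exp(-2πi f t_k) over the detection timestamps t_k and dividing by the exposure time gives an unbiased estimate of the flux's Fourier coefficient at frequency f. It assumes an ideal detector with no dead time and a hypothetical light source flickering at 50 Hz; all function names and parameters are illustrative.

```python
import cmath
import math
import random
from typing import Callable, Iterable


def simulate_photon_timestamps(flux: Callable[[float], float], flux_max: float,
                               duration: float, rng: random.Random) -> list[float]:
    """Sample photon arrival times from an inhomogeneous Poisson process.

    Uses Lewis-Shedler thinning: candidates arrive at the constant rate
    `flux_max` (which must bound `flux`) and each is kept with probability
    flux(t) / flux_max. Detector dead time is ignored in this sketch.
    """
    timestamps = []
    t = 0.0
    while True:
        t += rng.expovariate(flux_max)
        if t >= duration:
            return timestamps
        if rng.random() < flux(t) / flux_max:
            timestamps.append(t)


def flux_fourier_coefficient(timestamps: list[float], freq_hz: float,
                             duration: float) -> complex:
    """Estimate the flux's Fourier coefficient at `freq_hz` from timestamps alone.

    For a Poisson process with intensity flux(t), the expected value of
    sum_k exp(-2j*pi*f*t_k) equals the integral of flux(t)*exp(-2j*pi*f*t)
    over the exposure, so dividing by the duration gives an unbiased estimate.
    """
    total = sum(cmath.exp(-2j * math.pi * freq_hz * t) for t in timestamps)
    return total / duration


def strongest_frequency(timestamps: list[float], candidates: Iterable[float],
                        duration: float) -> float:
    """Scan candidate frequencies and return the one with the largest magnitude."""
    return max(candidates,
               key=lambda f: abs(flux_fourier_coefficient(timestamps, f, duration)))


if __name__ == "__main__":
    base_flux, depth, flicker_hz, duration = 2000.0, 0.8, 50.0, 1.0

    def flickering_flux(t: float) -> float:
        # Hypothetical source: mean 2000 photons/s, modulated at 50 Hz.
        return base_flux * (1 + depth * math.cos(2 * math.pi * flicker_hz * t))

    stamps = simulate_photon_timestamps(flickering_flux, base_flux * (1 + depth),
                                        duration, random.Random(0))
    print(len(stamps), "photons; peak at",
          strongest_frequency(stamps, range(1, 201), duration), "Hz")
```

Working directly on timestamps, rather than on photon counts binned into frames, is what lets such an estimator probe frequencies far above any frame rate; the real method additionally has to cope with dead time and very sparse detections.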

The second winning paper is the well-known ControlNet ("Adding Conditional Control to Text-to-Image Diffusion Models").

Paper address: https://arxiv.org/pdf/2302.05543.pdf

Authors: Lvmin Zhang, Anyi Rao, Maneesh Agrawala

Institution: Stanford University

Abstract: This paper proposes ControlNet, an end-to-end neural network architecture that controls diffusion models (such as Stable Diffusion) by adding extra conditions, thereby improving image generation. It can generate full-color images from line drawings, generate images with the same depth structure, and use hand keypoints to improve the generation of hands.

The core idea of ControlNet is to add extra conditions on top of the text prompt to control a diffusion model (such as Stable Diffusion), giving finer control over the pose of people, the depth, the composition, and other properties of the generated image.

The additional conditions are supplied as images, for example the outputs of Canny edge detection, depth estimation, semantic segmentation, Hough-transform line detection, holistically-nested edge detection (HED), or human pose estimation, and the model preserves this information in the generated image. With this model, line drawings or doodles can be turned directly into full-color images, images with the same depth structure can be generated, and hand keypoints can be used to improve how hands are rendered.
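The article does not go into the architecture itself. In the ControlNet paper, each pretrained block F(x; Θ) is kept locked, a trainable copy of it receives the condition c, and the two are joined by "zero convolutions" Z (1×1 convolutions whose weights and bias are initialized to zero), giving y_c = F(x; Θ) + Z(F(x + Z(c; Θ_z1); Θ_c); Θ_z2). The toy sketch below works on a single pixel's feature vector, where a 1×1 convolution reduces to a matrix-vector product, and a linear layer plus ReLU stands in for a real U-Net block; the class names and weights are hypothetical.

```python
import copy

Vector = list[float]
Matrix = list[list[float]]


def matvec(weights: Matrix, x: Vector) -> Vector:
    """Matrix-vector product; for one pixel, a 1x1 convolution is exactly this."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def add(a: Vector, b: Vector) -> Vector:
    return [ai + bi for ai, bi in zip(a, b)]


class Block:
    """Stand-in for a pretrained block F(x; theta): a linear layer followed by ReLU."""

    def __init__(self, weights: Matrix):
        self.weights = weights

    def __call__(self, x: Vector) -> Vector:
        return [max(0.0, v) for v in matvec(self.weights, x)]


class ZeroConv:
    """1x1 convolution Z(.) whose weights and bias are initialized to zero."""

    def __init__(self, channels: int):
        self.weights = [[0.0] * channels for _ in range(channels)]
        self.bias = [0.0] * channels

    def __call__(self, x: Vector) -> Vector:
        return add(matvec(self.weights, x), self.bias)


class ControlledBlock:
    """Computes y_c = F(x; theta) + Z(F(x + Z(c; theta_z1); theta_c); theta_z2)."""

    def __init__(self, pretrained: Block, channels: int):
        self.locked = pretrained                     # theta: frozen during training
        self.trainable = copy.deepcopy(pretrained)   # theta_c: trainable copy
        self.zero_in = ZeroConv(channels)            # theta_z1: injects condition c
        self.zero_out = ZeroConv(channels)           # theta_z2: feeds the result back

    def __call__(self, x: Vector, condition: Vector) -> Vector:
        control = self.trainable(add(x, self.zero_in(condition)))
        return add(self.locked(x), self.zero_out(control))


if __name__ == "__main__":
    pretrained = Block([[0.5, -0.2], [0.1, 0.3]])
    block = ControlledBlock(pretrained, channels=2)
    x, edge_features = [1.0, 2.0], [0.0, 1.0]  # edge_features: hypothetical encoded condition
    print(block(x, edge_features) == pretrained(x))  # True: identical at initialization
    block.zero_out.weights[0][0] = 0.5  # pretend training moved one weight
    print(block(x, edge_features) == pretrained(x))  # False: the control branch now contributes
```

Because both zero convolutions output zeros at initialization, adding the control branch cannot disturb the pretrained model at the start of training; the condition only begins to steer the output as training moves the zero-convolution weights away from zero.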

For a detailed introduction, see this site's earlier report: "AI outclasses human painters: ControlNet brought to text-to-image generation, fully reusing depth and edge information".

Best Paper nomination: SAM

In April this year, Meta released an AI model called "Segment Anything" (SAM), which can generate masks for any object in an image or video. The model stunned computer vision researchers, and some even declared that "CV no longer exists".

Now, this high-profile paper has been nominated for the best paper.

Paper address: https://arxiv.org/abs/2304.02643

Institution: Meta AI

There are currently two approaches to the segmentation problem. The first, interactive segmentation, can segment objects of any class but requires a person to guide the method by iteratively refining the mask. The second, automatic segmentation, can segment specific predefined object categories (such as cats or chairs) but requires large numbers of manually annotated objects for training (for example, thousands or even tens of thousands of segmented cat examples). Neither approach provides a general-purpose, fully automatic segmentation method.

The SAM proposed by Meta unifies these two approaches: it is a single model that can easily perform both interactive and automatic segmentation. Its promptable interface makes it flexible to use; a wide range of segmentation tasks can be completed simply by designing the right prompt for the model (clicks, boxes, text, and so on).

In short, these capabilities let SAM adapt to new tasks and domains, a flexibility that is unique in the field of image segmentation.
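SAM's neural image encoder and mask decoder cannot be reproduced in a few lines. The toy sketch below only illustrates the interface idea described above: one `segment` entry point accepts different prompt types, and "automatic" segmentation is obtained by prompting with a regular grid of points and keeping the distinct masks. Simple flood fill on pixel intensity stands in for the learned model, text prompts are omitted, and every name is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Union

Image = list[list[int]]
Mask = frozenset[tuple[int, int]]  # set of (row, col) pixels


@dataclass(frozen=True)
class PointPrompt:
    row: int
    col: int


@dataclass(frozen=True)
class BoxPrompt:
    top: int
    left: int
    bottom: int  # inclusive
    right: int  # inclusive


Prompt = Union[PointPrompt, BoxPrompt]


def _grow_region(image: Image, seed: tuple[int, int],
                 allowed: Callable[[int, int], bool], tolerance: int) -> Mask:
    """Flood-fill 4-connected pixels whose value is within `tolerance` of the seed."""
    seed_value = image[seed[0]][seed[1]]
    stack, region = [seed], set()
    while stack:
        r, c = stack.pop()
        if (r, c) in region or not allowed(r, c):
            continue
        if abs(image[r][c] - seed_value) > tolerance:
            continue
        region.add((r, c))
        stack.extend([(r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)])
    return frozenset(region)


def segment(image: Image, prompt: Prompt, tolerance: int = 10) -> Mask:
    """Toy promptable segmenter: one entry point handles every prompt type."""
    rows, cols = len(image), len(image[0])

    def in_image(r: int, c: int) -> bool:
        return 0 <= r < rows and 0 <= c < cols

    if isinstance(prompt, PointPrompt):
        return _grow_region(image, (prompt.row, prompt.col), in_image, tolerance)
    if isinstance(prompt, BoxPrompt):
        def in_box(r: int, c: int) -> bool:
            return (in_image(r, c) and prompt.top <= r <= prompt.bottom
                    and prompt.left <= c <= prompt.right)

        center = ((prompt.top + prompt.bottom) // 2, (prompt.left + prompt.right) // 2)
        return _grow_region(image, center, in_box, tolerance)
    raise TypeError(f"unsupported prompt: {prompt!r}")


def segment_everything(image: Image, step: int = 2, tolerance: int = 10) -> set[Mask]:
    """'Automatic' mode: prompt with a regular grid of points, keep distinct masks."""
    masks = set()
    for r in range(0, len(image), step):
        for c in range(0, len(image[0]), step):
            masks.add(segment(image, PointPrompt(r, c), tolerance))
    return masks


if __name__ == "__main__":
    image = [
        [0, 0, 0, 0, 0, 0],
        [0, 200, 200, 0, 0, 0],
        [0, 200, 200, 0, 90, 90],
        [0, 0, 0, 0, 90, 90],
    ]
    print(sorted(segment(image, PointPrompt(1, 1))))    # click on the bright square
    print(sorted(segment(image, BoxPrompt(2, 4, 3, 5))))  # box around the grey square
    print(len(segment_everything(image)), "distinct masks")
```

The point of the design is that interactive and automatic use share one function; only the way prompts are produced (a user's click versus a generated grid) differs.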

For a detailed introduction, see this site's earlier report: "CV no longer exists? Meta releases the 'Segment Anything' AI model, and CV may be ushering in its GPT-3 moment".

Best Student Paper

This work, "Tracking Everything Everywhere All at Once," was completed jointly by researchers from Cornell University, Google Research, and UC Berkeley; the first author is Qianqian Wang, a PhD student at Cornell Tech. The authors propose OmniMotion, a complete and globally consistent motion representation, together with a new test-time optimization method that performs accurate, complete motion estimation for every pixel in a video.

- Paper address: https://arxiv.org/abs/2306.05422

- Project homepage: https://omnimotion.github.io/

In computer vision, two motion estimation approaches are commonly used: sparse feature tracking and dense optical flow. Both have drawbacks: sparse feature tracking cannot model the motion of every pixel, while dense optical flow cannot capture motion trajectories over long time spans.

OmniMotion, the new representation proposed in this work, characterizes a video with a quasi-3D canonical volume. It tracks every pixel through bijections between each frame's local space and the canonical space. This representation guarantees global consistency, keeps tracking points even while they are occluded, and can model any combination of camera and object motion. Experiments show that OmniMotion significantly outperforms existing state-of-the-art methods.
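Because each frame's map to the canonical space is a bijection, a pixel in frame i can be tracked to frame j by mapping it into canonical space and back out with frame j's inverse map. The toy 2D sketch below illustrates only that composition: a similarity transform (scale, rotation, translation) stands in for OmniMotion's learned invertible network, and the quasi-3D volume, depth sampling, and occlusion handling are all omitted. `FrameMapping` and `track` are hypothetical names.

```python
import math
from dataclasses import dataclass

Point = tuple[float, float]


@dataclass(frozen=True)
class FrameMapping:
    """Hypothetical invertible map between one frame's local space and canonical space.

    A 2D similarity transform stands in for OmniMotion's learned invertible
    network; `scale` must be non-zero so the inverse exists.
    """

    scale: float
    angle: float  # rotation in radians
    tx: float
    ty: float

    def to_canonical(self, p: Point) -> Point:
        c, s = math.cos(self.angle), math.sin(self.angle)
        x, y = p
        return (self.scale * (c * x - s * y) + self.tx,
                self.scale * (s * x + c * y) + self.ty)

    def from_canonical(self, q: Point) -> Point:
        # Exact inverse: undo the translation, rotate by -angle, divide by scale.
        c, s = math.cos(self.angle), math.sin(self.angle)
        x, y = q[0] - self.tx, q[1] - self.ty
        return ((c * x + s * y) / self.scale, (-s * x + c * y) / self.scale)


def track(point: Point, source: int, mappings: list[FrameMapping]) -> list[Point]:
    """Track a pixel from frame `source` into every frame via the canonical space."""
    canonical = mappings[source].to_canonical(point)
    return [m.from_canonical(canonical) for m in mappings]


if __name__ == "__main__":
    frames = [
        FrameMapping(1.0, 0.0, 0.0, 0.0),           # reference frame
        FrameMapping(1.0, 0.0, -5.0, 0.0),          # content shifted 5 px along +x
        FrameMapping(2.0, math.pi / 2, 1.0, -1.0),  # scaled, rotated, shifted view
    ]
    print(track((2.0, 3.0), 0, frames))
```

Since every correspondence passes through the same canonical point, the tracks are cycle-consistent by construction: tracking the frame-2 position back from frame 2 recovers the same trajectory, something chained pairwise optical flow does not guarantee.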

For a detailed introduction, see this site's earlier report: "Track every pixel anytime, anywhere: the occlusion-robust 'track everything' video algorithm is here".

Beyond these award-winning papers, many other outstanding papers at this year's ICCV deserve attention. Finally, here is the preliminary list of the 17 award-winning papers.

The above is the detailed content of ICCV 2023 announced: Popular papers such as ControlNet and SAM won awards. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

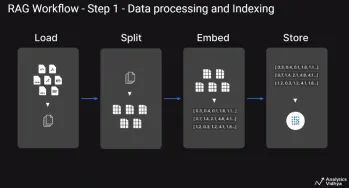

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools