This 'mistake' is not really a mistake: start with four classic papers to understand what is 'wrong' with the Transformer architecture diagram


Some time ago, a tweet pointing out the inconsistency between the Transformer architecture diagram and the code in the Google Brain team's paper "Attention Is All You Need" triggered a lot of discussion.

Some people regarded the inconsistency that Sebastian Raschka found as an honest oversight, but they also found it strange: given the popularity of the Transformer paper, it should already have been pointed out a thousand times over.

Sebastian Raschka said in response to comments that the "most original" code was indeed consistent with the architecture diagram, but the code version submitted in 2017 was later modified and the architecture diagram was not updated at the same time. This is the root cause of the "inconsistency" discussion.

Subsequently, Sebastian published an article on Ahead of AI specifically describing why the original Transformer architecture diagram was inconsistent with the code, and cited multiple papers to briefly explain the development and changes of Transformer.

The following is the original text of the article:

A few months ago I shared "Understanding Large Language Models: A Cross-Section of the Most Relevant Literature To Get Up to Speed", and the positive feedback was very encouraging! Therefore, I've added a few papers to keep the list fresh and relevant.

At the same time, it is crucial to keep the list concise so that everyone can get up to speed in a reasonable amount of time. That said, a few papers are informative enough that they should probably be included.

I would like to share four papers that are useful for understanding the Transformer from a historical perspective. While I'm adding them directly to the "Understanding Large Language Models" article, I'm also sharing them separately here so that those who have already read that article can find them more easily.

## On Layer Normalization in the Transformer Architecture (2020)

Although the original Transformer figure below (left) (https://arxiv.org/abs/1706.03762) is a useful summary of the original encoder-decoder architecture, there is a small discrepancy in the diagram. For example, it places layer normalization between the residual blocks, which does not match the official (updated) code implementation that accompanies the original Transformer paper. The variant shown below (middle) is called the Post-LN Transformer.

The paper "On Layer Normalization in the Transformer Architecture" shows that Pre-LN, which places layer normalization inside the residual blocks, works better and addresses gradient problems, as shown below. Many architectures adopt this approach in practice, but it can lead to representation collapse.

So, while there is still discussion about whether to use Post-LN or Pre-LN, there is also a new paper that proposes combining the two: "ResiDual: Transformer with Dual Residual Connections" (https://arxiv.org/abs/2304.14802). Whether it will be useful in practice remains to be seen.

Illustration: Source https://arxiv.org/abs/1706.03762 (Left & Center) and https://arxiv.org/abs/2002.04745 (Right)
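To make the Post-LN versus Pre-LN distinction discussed above more concrete, here is a minimal NumPy sketch of a single residual block in each style. It is an illustration under simplifying assumptions, not the code from either paper: the function names `post_ln_block` and `pre_ln_block` are hypothetical, the sublayer is a stand-in linear map rather than real attention, and the learnable gain and bias of layer normalization are omitted.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize each row (token) to zero mean and unit variance.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def post_ln_block(x, sublayer):
    # Post-LN: normalization is applied after the residual addition,
    # so it sits between consecutive residual blocks.
    return layer_norm(x + sublayer(x))

def pre_ln_block(x, sublayer):
    # Pre-LN: normalization is applied to the sublayer input only,
    # so the residual (skip) path stays an identity mapping.
    return x + sublayer(layer_norm(x))

rng = np.random.default_rng(0)
W = rng.normal(scale=0.1, size=(8, 8))   # hypothetical stand-in for attention / feed-forward
sublayer = lambda h: h @ W

x = rng.normal(size=(4, 8))              # 4 tokens, model width 8
post_out = post_ln_block(x, sublayer)
pre_out = pre_ln_block(x, sublayer)
```

In the Pre-LN version the input `x` passes through the block unchanged on the skip path, which is one intuition for why gradients tend to behave better in deep stacks; in the Post-LN version every block output is re-normalized.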

## Learning to Control Fast-Weight Memories: An Alternative to Dynamic Recurrent Neural Networks (1991)

This paper is recommended for those interested in historical tidbits and early approaches that are fundamentally similar to the modern Transformer.

For example, in 1991, roughly two and a half decades before the Transformer paper, Juergen Schmidhuber proposed an alternative to recurrent neural networks (https://www.semanticscholar.org/paper/Learning-to-Control-Fast-Weight-Memories:-An-to-Schmidhuber/bc22e87a26d020215afe91c751e5bdaddd8e4922) called Fast Weight Programmers (FWP). The FWP approach involves a feedforward neural network that learns slowly by gradient descent to program the fast weight changes of another neural network.

This blog post (https://people.idsia.ch//~juergen/fast-weight-programmer-1991-transformer.html#sec2) draws the analogy to the modern Transformer as follows:

In today's Transformer terminology, FROM and TO are called key and value, respectively. The input to which the fast network is applied is called the query. Essentially, the query is processed by the fast weight matrix, which is a sum of outer products of keys and values (ignoring normalizations and projections). Because all operations of both networks are differentiable, additive outer products or second-order tensor products give end-to-end differentiable active control of fast weight changes. Hence, the slow network can learn by gradient descent to rapidly modify the fast network during sequence processing. This is mathematically equivalent (apart from normalization) to what later became known as Transformers with linearized self-attention (or linear Transformers).

As mentioned in the excerpt above, this approach is now known as the linear Transformer or Transformer with linearized self-attention. These names come from the papers "Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention" (https://arxiv.org/abs/2006.16236) and "Rethinking Attention with Performers" (https://arxiv.org/abs/2009.14794).
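The equivalence described above can be checked numerically on a toy example. The sketch below is an illustration only, assuming unnormalized, causal attention with no projections and no feature map; the variable names and sizes are hypothetical. It builds the fast weight matrix as a running sum of outer products of values and keys, applies it to each query, and compares the result with the attention-style computation.

```python
import numpy as np

rng = np.random.default_rng(0)
T, d_k, d_v = 5, 4, 3                 # sequence length, key size, value size
K = rng.normal(size=(T, d_k))         # keys   ("FROM")
V = rng.normal(size=(T, d_v))         # values ("TO")
Q = rng.normal(size=(T, d_k))         # queries (inputs to the fast network)

# Fast-weight view: the fast weight matrix accumulates outer products
# of values and keys, and each query is processed by the current matrix.
W_fast = np.zeros((d_v, d_k))
fast_rows = []
for t in range(T):
    W_fast = W_fast + np.outer(V[t], K[t])
    fast_rows.append(W_fast @ Q[t])
fast_out = np.stack(fast_rows)

# Attention view: causal query-key scores without softmax, applied to values.
scores = np.tril(Q @ K.T)             # scores[t, i] = q_t . k_i for i <= t
attn_out = scores @ V

equivalent = np.allclose(fast_out, attn_out)
print("fast-weight view matches linear attention view:", equivalent)
```

Both computations yield, for each position, the sum over earlier positions of the value vector weighted by the query-key dot product, which is why the two views agree.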
In 2021, the paper "Linear Transformers Are Secretly Fast Weight Programmers" (https://arxiv.org/abs/2102.11174) explicitly showed the equivalence between linearized self-attention and the fast weight programmers of the 1990s.

Photo source: https://people.idsia.ch//~juergen/fast-weight-programmer-1991-transformer.html#sec2

## Universal Language Model Fine-tuning for Text Classification (2018)

The language model fine-tuning process proposed by ULMFiT is divided into three stages:

1. Training a language model on a large text corpus;
2. Fine-tuning this pretrained language model on task-specific data;
3. Fine-tuning a classifier on the task-specific data, with gradual unfreezing of layers.

This recipe of training a language model on a large corpus and then fine-tuning it on downstream tasks is the central approach used in Transformer-based models and foundation models (such as BERT, GPT-2/3/4, RoBERTa, etc.).

However, gradual unfreezing, a key part of ULMFiT, is usually not performed in practice with Transformer architectures, where all layers are typically fine-tuned at once.

## Scaling Language Models: Methods, Analysis & Insights from Training Gopher (2021)

Gopher is a particularly good paper (https://arxiv.org/abs/2112.11446) that includes extensive analysis for understanding LLM training. The researchers trained an 80-layer, 280-billion-parameter model on 300 billion tokens. The paper includes some interesting architectural modifications, such as using RMSNorm (root mean square normalization) instead of LayerNorm (layer normalization). Both LayerNorm and RMSNorm are preferred over BatchNorm because they do not depend on the batch size and do not require synchronization, which is an advantage in distributed settings with smaller batch sizes. RMSNorm is generally considered to stabilize training in deeper architectures.

Besides the interesting tidbits above, the main focus of this paper is the analysis of task performance at different scales. An evaluation on 152 different tasks shows that increasing model size is most beneficial for tasks such as comprehension, fact-checking, and identifying toxic language, while scaling is less beneficial for tasks related to logical and mathematical reasoning.

Illustration: Source https://arxiv.org/abs/2112.11446
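As a rough illustration of the LayerNorm versus RMSNorm difference mentioned in the Gopher discussion above, the following NumPy sketch implements both. It is a simplified sketch rather than Gopher's implementation: the function names, the `eps` value, and the fixed gain and bias arrays are assumptions made for the example.

```python
import numpy as np

def layer_norm(x, gain, bias, eps=1e-6):
    # LayerNorm: subtract the per-token mean, divide by the standard
    # deviation, then apply a learnable gain and bias.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return gain * (x - mean) / np.sqrt(var + eps) + bias

def rms_norm(x, gain, eps=1e-6):
    # RMSNorm: skip the mean subtraction and the bias, and rescale
    # by the root mean square of the features only.
    rms = np.sqrt(np.mean(x ** 2, axis=-1, keepdims=True) + eps)
    return gain * x / rms

rng = np.random.default_rng(0)
x = rng.normal(size=(2, 6))            # 2 tokens, 6 features
gain = np.ones(6)                      # stand-in for a learned gain
bias = np.zeros(6)                     # stand-in for a learned bias

ln_out = layer_norm(x, gain, bias)
rms_out = rms_norm(x, gain)
```

Neither function uses statistics across the batch dimension, which is the property that makes both independent of batch size. For inputs whose features already have zero mean, the two functions give the same result when the bias is zero.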

The above is the detailed content of This 'mistake' is not really a mistake: start with four classic papers to understand what is 'wrong' with the Transformer architecture diagram. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

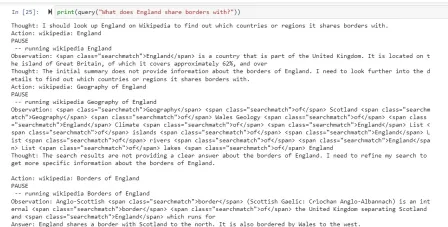

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

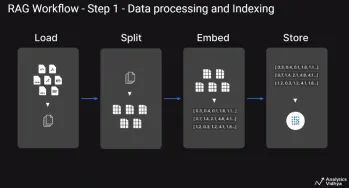

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools