
Tsinghua University and others open-source the 'tool learning benchmark' ToolBench; the fine-tuned model ToolLLaMA outperforms ChatGPT

Human beings have the ability to create and utilize tools, allowing us to break through the limitations of the body and explore a wider world.

Foundation models in artificial intelligence are similar. If a model relies only on the weights obtained during training, its usage scenarios are very limited. The recently proposed tool learning paradigm, which combines specialized tools for specific domains with large-scale foundation models, can achieve higher efficiency and performance.

However, the current research on tool learning is not in-depth enough, and there is a lack of relevant open source data and code.

Recently, OpenBMB (Open Lab for Big Model Base), an open source community supported by the Tsinghua University Natural Language Processing Laboratory and others, released the ToolBench project. It helps developers build open source, large-scale, high-quality instruction tuning data, which in turn facilitates the construction of large language models that can use general-purpose tools.

The ToolBench repository provides the relevant datasets, training and evaluation scripts, and ToolLLaMA, a model fine-tuned on ToolBench. Its specific features are:

1. Supports both single-tool and multi-tool settings. The single-tool setting follows the LangChain prompt style, and the multi-tool setting follows the AutoGPT prompt style.
2. Model responses include not only the final answer but also the model's chain-of-thought process, the tool executions, and the tool execution results.
3. Supports real-world complexity, including multi-step tool calls.
4. Rich APIs that can be used for real-world scenarios such as weather information, search, stock updates, and PowerPoint automation.
5. All data is automatically generated by the OpenAI API and filtered by the development team, and the data creation process is easily scalable.

Note, however, that the data released so far is not final. The researchers are still post-processing it to improve data quality and increase the coverage of real-world tools.

ToolBench

The general idea of ToolBench is to train large language models on supervised data, building on BMTools.
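The multi-step tool calls mentioned in the feature list can be pictured as a loop in which a model proposes an action, a tool runs, and the observation is fed back. The following is only a minimal sketch of that idea, not ToolBench or BMTools code: `run_agent`, `scripted_policy`, and the stub `get_translation` function are hypothetical names, and the policy is hard-coded rather than backed by a language model. The limit of 7 steps mirrors the "max 7 times" wording in the sample prompt.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple


def get_translation(text: str, tgt_lang: str) -> str:
    """Hypothetical stub tool; a real tool would call a translation API."""
    return f"[{tgt_lang}] {text}"


def scripted_policy(history: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Stand-in for a language model: call the tool once, then answer."""
    if not history:
        return {
            "type": "action",
            "thought": "I need to use the get_translation API.",
            "action": "get_translation",
            "action_input": {"text": "hello", "tgt_lang": "ara"},
        }
    return {"type": "final", "answer": history[-1]["observation"]}


def run_agent(
    policy: Callable[[List[Dict[str, Any]]], Dict[str, Any]],
    tools: Dict[str, Callable[..., str]],
    max_steps: int = 7,
) -> Tuple[Optional[str], List[Dict[str, Any]]]:
    """Alternate between policy decisions and tool executions."""
    history: List[Dict[str, Any]] = []
    for _ in range(max_steps):
        decision = policy(history)
        if decision["type"] == "final":
            return decision["answer"], history
        tool = tools[decision["action"]]
        observation = tool(**decision["action_input"])
        # Record the step so the next decision can see the observation.
        history.append({**decision, "observation": observation})
    return None, history  # step budget exhausted without a final answer


answer, history = run_agent(scripted_policy, {"get_translation": get_translation})
print(answer)
print(len(history), "tool call(s)")
```

Keeping the policy as a plain callable makes it possible to swap the scripted stand-in for a real model later without touching the loop itself.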

The repository contains 9,800 data entries obtained from 312,000 real API calls, covering both single-tool and multi-tool scenarios. The following are the statistics for the single-tool setting.

Each row of data is a JSON dict containing the prompt template used for data creation, the human instruction (query) for tool usage, the intermediate thought/tool-execution loops, and the final answer.
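As a rough illustration of that row structure, the sketch below parses one JSON line into typed steps. The field names (`prompt`, `query`, `chains`, `answer`, and the per-step `thought`, `action`, `action_input`, `observation`) are taken from the sample record in this article; the `ChainStep` class, the `parse_record` helper, and the abbreviated record are hypothetical and not part of the ToolBench code.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass
class ChainStep:
    thought: str
    action: str
    action_input: Dict[str, Any]
    observation: str


def parse_record(line: str) -> Tuple[str, List[ChainStep], str]:
    """Parse one JSON line into (query, chain steps, final answer)."""
    row = json.loads(line)
    steps = []
    for step in row.get("chains", []):
        raw_input = step["action_input"]
        # In the sample record, action_input is itself a JSON-encoded string.
        parsed = json.loads(raw_input) if isinstance(raw_input, str) else raw_input
        steps.append(
            ChainStep(step["thought"], step["action"], parsed, step["observation"])
        )
    return row["query"], steps, row["answer"]


# Abbreviated, illustrative record (made up for this sketch, not dataset content).
sample_line = json.dumps({
    "prompt": "...",
    "query": "Translate 'hello' into French(fra).",
    "chains": [{
        "thought": "I need to use the get_translation API.",
        "action": "get_translation",
        "action_input": json.dumps({"text": "hello", "tgt_lang": "fra"}),
        "observation": "\"bonjour\"",
    }],
    "answer": "The translation of 'hello' into French is 'bonjour'.",
})

query, steps, answer = parse_record(sample_line)
print(query)
for s in steps:
    print(s.action, s.action_input)
print(answer)
```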

Tool Description: BMTools
Tool_name: translation
Tool action: get_translation
action_input: {"text": target texts, "tgt_lang": target language}

Generated Data:
{"prompt": "Answer the following questions as best you can. Specifically, you have access to the following APIs:\n\nget_translation: . Your input should be a json (args json schema): {{\"text\" : string, \"tgt_lang\" : string, }} The Action to trigger this API should be get_translation and the input parameters should be a json dict string. Pay attention to the type of parameters.\n\nUse the following format:\n\nQuestion: the input question you must answer\nThought: you should always think about what to do\nAction: the action to take, should be one of [get_translation]\nAction Input: the input to the action\nObservation: the result of the action\n... (this Thought/Action/Action Input/Observation can repeat N times, max 7 times)\nThought: I now know the final answer\nFinal Answer: the final answer to the original input question\n\nBegin! Remember: (1) Follow the format, i.e,\nThought:\nAction:\nAction Input:\nObservation:\nFinal Answer:\n (2) Provide as much as useful information in your Final Answer. (3) Do not make up anything, and if your Observation has no link, DO NOT hallucinate one. (4) If you have enough information and want to stop the process, please use \nThought: I have got enough information\nFinal Answer: **your response. \n The Action: MUST be one of the following:get_translation\nQuestion: {input}\n Agent scratchpad (history actions):\n {agent_scratchpad}",
"query": "My intention is to convert the data provided in ما هي الأقسام الثلاثة للقوات المسلحة؟ into Arabic(ara).\n",
"chains": [{"thought": "I need to use the get_translation API to convert the text into Arabic.","action": "get_translation","action_input": "{\"text\": \"What are the three branches of the military?\", \"tgt_lang\": \"ara\"}","observation": "\"ما هي الفروع الثلاثة للجيش ؟\""}],
"answer": "The translation of \"What are the three branches of the military?\" into Arabic is \"ما هي الفروع الثلاثة للجيش ؟\"."}
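The prompt in the sample record asks the model to reply in a fixed Thought / Action / Action Input layout, or with a Final Answer. One way to turn such a reply into structured data is a small regex-based parser. This is a sketch under assumptions: `parse_model_output` and its return shape are hypothetical, and it assumes the reply contains exactly one step whose Action Input is valid JSON.

```python
import json
import re
from typing import Any, Dict, List

STEP_PATTERN = re.compile(
    r"Thought:\s*(?P<thought>.*?)\s*"
    r"Action:\s*(?P<action>.*?)\s*"
    r"Action Input:\s*(?P<action_input>.*)",
    re.DOTALL,
)
FINAL_PATTERN = re.compile(r"Final Answer:\s*(?P<answer>.*)", re.DOTALL)


def parse_model_output(text: str, allowed_actions: List[str]) -> Dict[str, Any]:
    """Return either a final answer or a single validated tool call."""
    final = FINAL_PATTERN.search(text)
    if final:
        return {"type": "final", "answer": final.group("answer").strip()}

    match = STEP_PATTERN.search(text)
    if match is None:
        raise ValueError("output does not follow the Thought/Action format")

    action = match.group("action").strip()
    # The prompt requires the action to be one of the listed APIs.
    if action not in allowed_actions:
        raise ValueError(f"unknown action: {action!r}")

    return {
        "type": "action",
        "thought": match.group("thought").strip(),
        "action": action,
        "action_input": json.loads(match.group("action_input").strip()),
    }


reply = (
    "Thought: I need to use the get_translation API.\n"
    "Action: get_translation\n"
    'Action Input: {"text": "hello", "tgt_lang": "ara"}'
)
print(parse_model_output(reply, ["get_translation"]))
```

Rejecting actions outside the allowed list mirrors the prompt's instruction that the Action must be one of the listed APIs. A production parser would also need to handle malformed JSON and trailing text.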

Model experiment

Machine evaluation: The researchers randomly selected 100 chain steps for each tool to build a machine evaluation testbed, which on average contains 27 final steps and 73 intermediate tool-call steps. Final steps are evaluated with the Rouge-L metric, and intermediate steps are evaluated with the ExactMatch metric.
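To make the two metrics concrete, here is a minimal sketch of how they are commonly computed. It is not the ToolBench evaluation script. It assumes whitespace tokenization, the F1 variant of Rouge-L, and string-level comparison for ExactMatch; the function names are hypothetical.

```python
from typing import List


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the two strings are identical after trimming whitespace, else 0.0."""
    return float(prediction.strip() == reference.strip())


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for tok_a in a:
        curr = [0]
        for j, tok_b in enumerate(b, start=1):
            if tok_a == tok_b:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(prediction: str, reference: str) -> float:
    """Rouge-L as the F1 of LCS-based precision and recall (assumed variant)."""
    pred_tokens = prediction.split()
    ref_tokens = reference.split()
    if not pred_tokens or not ref_tokens:
        return 0.0
    lcs = lcs_length(pred_tokens, ref_tokens)
    if lcs == 0:
        return 0.0
    precision = lcs / len(pred_tokens)
    recall = lcs / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Intermediate step: compare the predicted action with the reference action.
print(exact_match("get_translation", "get_translation"))
# Final step: compare the predicted answer text with the reference answer.
print(rouge_l_f1("the cat sat on mat", "the cat mat"))
```

ExactMatch suits intermediate steps because a tool call is either the right one or not, while Rouge-L tolerates paraphrase in free-text final answers.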

Paper link: https://arxiv.org/pdf/2304.08354.pdf

The paper also reviews existing tool learning research, including tool-augmented and tool-oriented learning, and formulates a general tool learning framework: starting from understanding the user instruction, the model should learn to decompose a complex task into several subtasks, dynamically adjust its plan through reasoning, and solve each subtask efficiently by choosing the right tool.

The article also discusses how to train models to improve tool usage and promote the popularization of tool learning.

Considering the lack of systematic tool learning evaluation in previous work, the researchers conducted experiments with 17 representative tools and demonstrated the potential of current foundation models to use tools skillfully.

The paper ends by discussing several open problems in tool learning that require further research, such as ensuring safe and trustworthy tool use, enabling tool creation with foundation models, and addressing the challenge of personalization.

Reference materials:

https://github.com/OpenBMB/ToolBench

The above is the detailed content of Tsinghua University and other open source 'tool learning benchmark' ToolBench, fine-tuning model ToolLLaMA performance surpasses ChatGPT. For more information, please follow other related articles on the PHP Chinese website!

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

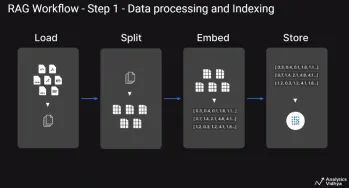

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

What are Integrity Constraints in SQL? - Analytics VidhyaApr 21, 2025 am 10:58 AM

What are Integrity Constraints in SQL? - Analytics VidhyaApr 21, 2025 am 10:58 AMSQL Integrity Constraints: Ensuring Database Accuracy and Consistency Imagine you're a city planner, responsible for ensuring every building adheres to regulations. In the world of databases, these regulations are known as integrity constraints. Jus

Top 30 PySpark Interview Questions and Answers (2025)Apr 21, 2025 am 10:51 AM

Top 30 PySpark Interview Questions and Answers (2025)Apr 21, 2025 am 10:51 AMPySpark, the Python API for Apache Spark, empowers Python developers to harness Spark's distributed processing power for big data tasks. It leverages Spark's core strengths, including in-memory computation and machine learning capabilities, offering

Self-Consistency in Prompt EngineeringApr 21, 2025 am 10:50 AM

Self-Consistency in Prompt EngineeringApr 21, 2025 am 10:50 AMHarnessing the Power of Self-Consistency in Prompt Engineering: A Comprehensive Guide Have you ever wondered how to effectively communicate with today's advanced AI models? As Large Language Models (LLMs) like Claude, GPT-3, and GPT-4 become increas

A Comprehensive Guide on Building AI Agents with AutoGPTApr 21, 2025 am 10:48 AM

A Comprehensive Guide on Building AI Agents with AutoGPTApr 21, 2025 am 10:48 AMIntroduction Imagine an AI assistant like R2-D2, always ready to lend a hand, or WALL-E, diligently tackling complex tasks. While creating sentient AI remains a future aspiration, AI agents are already reshaping our world. Leveraging advanced machi

Top 10 Platforms to Practice Data Science SkillsApr 21, 2025 am 10:47 AM

Top 10 Platforms to Practice Data Science SkillsApr 21, 2025 am 10:47 AMData Science Skill Enhancement: A Guide to Top Platforms The increasing reliance on big data analysis has made data science a highly sought-after profession. Success in this field demands a blend of technical and non-technical skills. This article

How to Use Aliases in SQL? - Analytics VidhyaApr 21, 2025 am 10:30 AM

How to Use Aliases in SQL? - Analytics VidhyaApr 21, 2025 am 10:30 AMSQL alias: A tool to improve the readability of SQL queries Do you think there is still room for improvement in the readability of your SQL queries? Then try the SQL alias! Alias This convenient tool allows you to give temporary nicknames to tables and columns, making your queries clearer and easier to process. This article discusses all use cases for aliases clauses, such as renaming columns and tables, and combining multiple columns or subqueries. Overview SQL alias provides temporary nicknames for tables and columns to enhance the readability and manageability of queries. SQL aliases created with AS keywords simplify complex queries by allowing more intuitive table and column references. Examples include renaming columns in the result set, simplifying table names in the join, and combining multiple columns into one

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft