Reinforcement learning guru Sergey Levine's new work: Three large models teach robots to find their way


A robot equipped with large pre-trained models has learned to follow language instructions to its destination without consulting a map. The result comes from new work by reinforcement learning expert Sergey Levine.

How hard is it to reach a given destination without a navigation route to follow?

The task is challenging even for humans with a poor sense of direction. In a recent study, however, researchers "taught" a robot to do it using only three pre-trained models.

One of the core challenges of robot learning is enabling robots to perform a variety of tasks from high-level human instructions. This requires robots that can understand human instructions and that have a large repertoire of actions for carrying them out in the real world.

For instruction following in navigation, previous work has mainly focused on learning from trajectories annotated with textual instructions. This can enable understanding of textual instructions, but the cost of data annotation has hindered widespread use of the technique. On the other hand, recent work has shown that self-supervised training of goal-conditioned policies can yield robust navigation. These methods train vision-based controllers on large, unlabeled datasets with post hoc relabeling. They are scalable, general, and robust, but they often require cumbersome location- or image-based mechanisms for specifying the goal.

In a recent paper, researchers from UC Berkeley, Google and other institutions aim to combine the advantages of both approaches: a self-supervised robot navigation system that is trained on navigation data without any user annotations, yet can execute natural language instructions by leveraging pre-trained models. The researchers use these models to build an "interface" that communicates tasks to the robot. The system relies on the generalization capabilities of pre-trained language and vision-language models to let the robot accept complex high-level instructions.

- Paper link: https://arxiv.org/pdf/2207.04429.pdf

- Code link: https://github.com/blazejosinski/lm_nav

The researchers observed that off-the-shelf models pre-trained on large corpora of visual and language data, which are widely available and show zero-shot generalization, can be used to build an interface for following specific instructions. To achieve this, they combined the strengths of robot-agnostic pre-trained vision and language models with a pre-trained navigation model.

Specifically, they used a visual navigation model (VNM: ViNG) to turn the robot's visual observations into a topological "mental map" of the environment. Given a free-form text instruction, a pre-trained large language model (LLM: GPT-3) decodes the instruction into a sequence of textual landmarks. A vision-language model (VLM: CLIP) then grounds these textual landmarks in the topological map by inferring the joint likelihood over landmarks and nodes. Finally, a new search algorithm maximizes a probabilistic objective to find a plan for the robot, which the VNM then executes.

The main contribution of the research is LM-Nav (Large Model Navigation), a system for following instructions. It combines three large, independently pre-trained models to enable long-horizon instruction following in complex real-world environments:

- a self-supervised robot control model that uses visual observations and physical actions (VNM);
- a vision-language model that grounds images in text but has no embodiment (VLM);
- a large language model that parses and translates text but has no visual grounding or embodiment (LLM).

According to the researchers, this is the first instantiation of the idea of combining pre-trained vision and language models with a goal-conditioned controller to derive actionable instruction paths in the target environment without any fine-tuning. Notably, all three models are trained on large-scale datasets, have self-supervised objectives, and are used off the shelf without fine-tuning: training LM-Nav requires no human annotation of robot navigation data.
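To make the "mental map" idea more concrete, here is a minimal Python sketch of how a topological graph could be assembled from a pairwise distance estimate. It is an illustration under stated assumptions, not the authors' code: `build_topological_graph`, `predict_distance`, and the `max_edge` threshold are hypothetical names, and the stand-in distance function uses 2-D positions, whereas the real VNM estimates distances from images.

```python
import math

def build_topological_graph(observations, predict_distance, max_edge):
    """Connect pairs of observations whose predicted distance is small.

    Returns a list of undirected edges (i, j, distance).
    `predict_distance` is a hypothetical stand-in for the learned,
    goal-conditioned distance function of the navigation model.
    """
    edges = []
    for i in range(len(observations)):
        for j in range(i + 1, len(observations)):
            d = predict_distance(observations[i], observations[j])
            if d <= max_edge:
                edges.append((i, j, d))
    return edges

# Toy stand-in: observations are 2-D positions, distance is Euclidean.
def toy_distance(a, b):
    return math.hypot(a[0] - b[0], a[1] - b[1])

observations = [(0, 0), (3, 0), (6, 0), (6, 4)]
graph_edges = build_topological_graph(observations, toy_distance, max_edge=4.5)
print(graph_edges)
```

In this toy setting only neighbouring observations end up connected, which is the property a topological map needs: edges mark pairs the robot can plausibly travel between directly.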

Experiments show that LM-Nav can successfully follow natural language instructions in a new environment, using fine-grained commands to resolve path ambiguity, during complex suburban navigation over distances of up to 100 meters.

LM-Nav model overview

So, how do researchers use pre-trained image and language models to provide text interfaces for visual navigation models?

1. Given a set of observations in the target environment, the goal-conditioned distance function, which is part of the visual navigation model (VNM), is used to infer the connectivity between them and build a topological graph of the environment.

2. The large language model (LLM) parses the natural language instruction into a sequence of landmarks, which serve as intermediate sub-goals for navigation.

3. The vision-language model (VLM) grounds the landmark phrases in visual observations. It infers a joint probability distribution over the landmark descriptions and the images that form the nodes of the graph above.

4. Using the probability distribution from the VLM and the graph connectivity inferred by the VNM, a novel search algorithm retrieves an optimal instruction path in the environment, one that (i) satisfies the original instruction and (ii) is the shortest path in the graph that does so.

5. The instruction path is then executed by the goal-conditioned policy, which is part of the VNM.
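The search in step 4 can be sketched as a small dynamic program. The code below is a simplified illustration rather than the algorithm from the paper: it assumes the objective is the sum of per-landmark log-likelihoods minus `alpha` times the distance travelled, and it precomputes all-pairs shortest paths with Floyd-Warshall. The function names, the `alpha` weight, and the toy probabilities are all hypothetical.

```python
import math

def all_pairs_distances(n, edges):
    """Floyd-Warshall shortest distances on an undirected weighted graph."""
    dist = [[math.inf] * n for _ in range(n)]
    for v in range(n):
        dist[v][v] = 0.0
    for u, v, w in edges:
        dist[u][v] = dist[v][u] = min(dist[u][v], w)
    for k in range(n):
        for i in range(n):
            for j in range(n):
                if dist[i][k] + dist[k][j] < dist[i][j]:
                    dist[i][j] = dist[i][k] + dist[k][j]
    return dist

def plan_landmark_nodes(n, edges, start, log_probs, alpha):
    """Choose one graph node per landmark, in instruction order.

    Maximizes sum(log-likelihood) - alpha * travelled distance (alpha > 0).
    log_probs[i][v] is the log-likelihood that node v shows landmark i.
    Returns (chosen nodes, objective value).
    """
    dist = all_pairs_distances(n, edges)
    score = [-math.inf] * n   # best objective for a walk ending at each node
    score[start] = 0.0
    back = []                 # back[i][v]: node visited just before v
    for lp in log_probs:
        new_score, prev = [], []
        for v in range(n):
            u = max(range(n), key=lambda c: score[c] - alpha * dist[c][v])
            new_score.append(score[u] - alpha * dist[u][v] + lp[v])
            prev.append(u)
        score = new_score
        back.append(prev)
    # Backtrack from the best final node to recover one node per landmark.
    v = max(range(n), key=lambda c: score[c])
    best = score[v]
    nodes = [v]
    for prev in reversed(back[1:]):
        v = prev[v]
        nodes.append(v)
    nodes.reverse()
    return nodes, best

# Toy example: five nodes in a line; landmark 0 is likely at node 2,
# landmark 1 is likely at node 4.
edges = [(0, 1, 1.0), (1, 2, 1.0), (2, 3, 1.0), (3, 4, 1.0)]
probs = [[0.05, 0.05, 0.8, 0.05, 0.05],
         [0.05, 0.05, 0.05, 0.05, 0.8]]
log_probs = [[math.log(p) for p in row] for row in probs]
nodes, score = plan_landmark_nodes(5, edges, start=0, log_probs=log_probs, alpha=0.1)
print(nodes, score)
```

The `alpha` term is what trades off the two requirements in step 4: a larger value favours shorter routes, while a smaller value favours nodes that match the landmark descriptions more confidently.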

When independently evaluating how well the VLM retrieves landmarks, the researchers found that, although it is the best off-the-shelf model for this type of task, CLIP is unable to retrieve a small number of "hard" landmarks, including fire hydrants and cement mixers. In many real-world situations, however, the robot can still find a path that visits the remaining landmarks.
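One plausible way to turn image-text similarity into a distribution over graph nodes is a softmax over cosine similarities. This is shown below as a hedged Python sketch. The 3-D vectors are toy stand-ins for real CLIP embeddings, and the choice to normalize across nodes, the `temperature` value, and the function names are assumptions made for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def landmark_distribution(text_emb, node_embs, temperature=0.1):
    """Softmax over text-image similarities, one probability per node."""
    logits = [cosine(text_emb, e) / temperature for e in node_embs]
    top = max(logits)                      # subtract max for numerical stability
    exps = [math.exp(l - top) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Toy embeddings: the text is closest to the image at node 0.
text_emb = [1.0, 0.0, 0.0]
node_embs = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0], [0.1, 0.0, 1.0]]
probs = landmark_distribution(text_emb, node_embs)
best_node = max(range(len(probs)), key=lambda i: probs[i])
print(best_node, probs)
```

Under this view, a "hard" landmark would be one whose distribution puts little mass on the correct node, leaving the planner with nothing reliable to anchor on.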

Quantitative Evaluation

Table 1 summarizes the quantitative performance of the system on 20 instructions. In 85% of the experiments, LM-Nav was able to consistently follow instructions without collisions or disengagements (an average of one intervention every 6.4 kilometers of travel). Compared to the baseline without the navigation model, LM-Nav consistently performs better at executing efficient, collision-free paths to the goal. In all unsuccessful experiments, the failure can be attributed to a shortcoming in the planning phase: the search algorithm could not visually localize certain "hard" landmarks in the graph, which resulted in incomplete execution of the instructions. An investigation of these failure modes revealed that the most critical part of the system is the VLM's ability to detect unfamiliar landmarks, such as fire hydrants, and to handle scenes under challenging lighting conditions, such as underexposed images.

The above is the detailed content of Reinforcement learning guru Sergey Levine's new work: Three large models teach robots to recognize their way. For more information, please follow other related articles on the PHP Chinese website!

What is Few-Shot Prompting? - Analytics VidhyaApr 22, 2025 am 09:13 AM

What is Few-Shot Prompting? - Analytics VidhyaApr 22, 2025 am 09:13 AMFew-Shot Prompting: A Powerful Technique in Machine Learning In the realm of machine learning, achieving accurate responses with minimal data is paramount. Few-shot prompting offers a highly effective solution, enabling AI models to perform specific

What is Temperature in prompt engineering? - Analytics VidhyaApr 22, 2025 am 09:11 AM

What is Temperature in prompt engineering? - Analytics VidhyaApr 22, 2025 am 09:11 AMPrompt Engineering: Mastering the "Temperature" Parameter for AI Text Generation Prompt engineering is crucial when working with large language models (LLMs) like GPT-4. A key parameter in prompt engineering is "temperature," whi

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

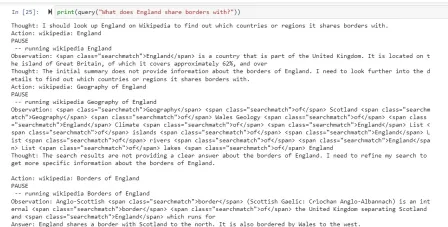

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools