Adding special effects takes just one sentence or one picture: the company behind Stable Diffusion plays new tricks with AIGC

Many people have come to appreciate the appeal of generative AI, especially after the AIGC boom of 2022. Text-to-image generation, represented by Stable Diffusion, became popular around the world, and countless users rushed in to express their artistic imagination with the help of AI...

Compared with image editing, video editing is a more challenging problem: it requires synthesizing new motion rather than just modifying visual appearance, while also maintaining temporal consistency.

Many companies are exploring this area. Not long ago, Google released Dreamix, which applies a text-conditional video diffusion model (VDM) to video editing.

Recently Runway, a company that took part in creating Stable Diffusion, launched a new AI model, "Gen-1", which converts existing videos into new ones by applying any style specified by a text prompt or a reference image.

Paper link: https://arxiv.org/pdf/2302.03011.pdf

Project homepage: https://research.runwayml.com/gen1

In 2021, Runway collaborated with researchers at the University of Munich to build the first version of Stable Diffusion. Stability AI, a UK startup, then stepped in to fund the compute needed to train the model on more data. In 2022, Stability AI brought Stable Diffusion into the mainstream, transforming it from a research project into a global phenomenon.

Runway said it hopes Gen-1 can do for video what Stable Diffusion has done for images.

“We’ve seen an explosion of image generation models,” said Cristóbal Valenzuela, CEO and co-founder of Runway. "I really believe that 2023 will be the year of video."

Specifically, Gen-1 supports several editing modes:

1. Stylization. Transfer the style of any image or prompt to every frame of your video.

2. Storyboard. Turn mockups into fully stylized and animated renders.

3. Mask. Isolate subjects in your video and modify them using simple text prompts.

4. Rendering. Turn untextured renders into photorealistic output by applying an input image or prompt.

5. Customization. Unleash the full power of Gen-1 by customizing your model for higher-fidelity results.

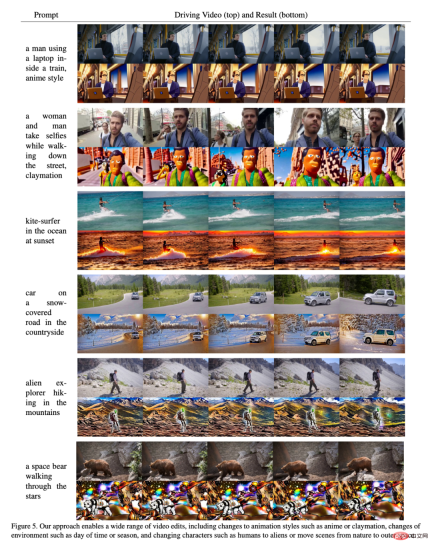

A demo on the company's official website shows how smoothly Gen-1 can change a video's style. Let's look at a few examples.

For example, turning "people on the street" into "clay puppets" takes only a one-line prompt:

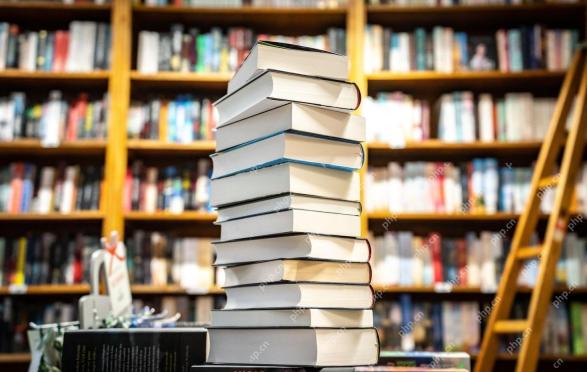

Or turn "books stacked on a table" into a "cityscape at night":

From "running on snow" to "walking on the moon":

A young girl becomes an ancient sage in seconds:

Paper Details

Visual effects and video editing are ubiquitous in the contemporary media landscape. As video-centric platforms gain popularity, the need for more intuitive and powerful video editing tools increases. However, due to the temporal nature of video data, editing in this format is still complex and time-consuming. State-of-the-art machine learning models show great promise in improving the editing process, but many methods have to strike a balance between temporal consistency and spatial detail.

Generative methods for image synthesis have recently seen rapid growth in quality and popularity, driven by diffusion models trained on large-scale datasets. Some text-conditional models, such as DALL-E 2 and Stable Diffusion, let novice users generate detailed images from just a text prompt. Latent diffusion models provide an efficient way to generate images by synthesizing them in a perceptually compressed space.

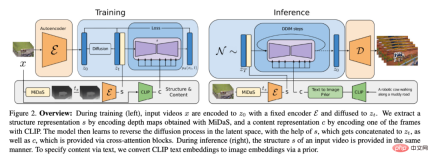

In this paper, the researchers propose a controllable structure- and content-aware video diffusion model trained on a large-scale dataset of uncaptioned videos and paired text-image data. They chose monocular depth estimates to represent structure and embeddings predicted by a pre-trained neural network to represent content.

The method offers several powerful modes of control over the generation process. First, similar to image synthesis models, the researchers train the model so that the content of the inferred video, such as its appearance or style, matches a user-supplied image or text prompt (Figure 1). Second, inspired by the diffusion process, they apply an information-obscuring process to the structure representation, which makes it possible to choose how strongly the model adheres to the given structure. Finally, they adjust the inference process with a custom guidance method, inspired by classifier-free guidance, to control the temporal consistency of generated clips.
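The guidance idea in the last point can be sketched in a few lines. The first function below is standard classifier-free guidance. The second is only an illustrative assumption about how a second scale for temporal consistency might be combined with it: the names `image_uncond`, `video_uncond`, `video_cond`, `temporal_scale`, and `content_scale` are hypothetical, and the article does not give the exact formula used in the paper.

```python
from typing import List

Vector = List[float]


def classifier_free_guidance(uncond: Vector, cond: Vector, scale: float) -> Vector:
    """Standard classifier-free guidance: start from the unconditional
    prediction and extrapolate toward the conditional one by `scale`."""
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


def guided_prediction(
    image_uncond: Vector,   # assumed: per-frame prediction without temporal layers
    video_uncond: Vector,   # assumed: video-model prediction without the content prompt
    video_cond: Vector,     # assumed: video-model prediction with the content prompt
    temporal_scale: float,  # hypothetical knob for temporal consistency
    content_scale: float,   # hypothetical knob for prompt adherence
) -> Vector:
    """Illustrative two-scale guidance: one term pushes from the per-frame
    prediction toward the video prediction, the other pushes from the
    unconditional toward the content-conditioned prediction."""
    return [
        i + temporal_scale * (vu - i) + content_scale * (vc - vu)
        for i, vu, vc in zip(image_uncond, video_uncond, video_cond)
    ]


if __name__ == "__main__":
    print(classifier_free_guidance([0.0, 1.0], [1.0, 3.0], scale=2.0))
    print(guided_prediction([0.5], [1.0], [2.0], temporal_scale=1.0, content_scale=1.0))
```

With both scales set to 1.0 the sketch simply returns the conditional video prediction; larger values extrapolate further along each direction.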

Overall, the highlights of this study are as follows:

- Extends latent diffusion models to video generation by introducing temporal layers into a pre-trained image model and training jointly on images and videos;

- Proposes a structure- and content-aware model that modifies videos under the guidance of example images or text. Editing happens entirely at inference time, with no additional per-video training or preprocessing;

- Demonstrates full control over temporal, content, and structure consistency. The study shows for the first time that joint training on image and video data enables inference-time control over temporal consistency. For structure consistency, training on varying levels of detail in the representation allows the desired setting to be chosen during inference;

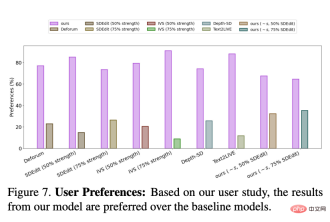

- In a user study, the method was preferred over several other approaches;

- The trained model can be further customized by fine-tuning on a small set of images to produce more accurate videos of a specific subject.
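The point about training on structure representations at different levels of detail can be illustrated with a toy sketch. It assumes, purely for illustration, that detail is removed by repeatedly blurring a depth map. Here a single row of depth values stands in for the map, and `t_s` is the number of blur passes. The helper names are hypothetical and this is not the paper's implementation.

```python
from typing import List


def box_blur(row: List[float]) -> List[float]:
    """One pass of a 3-tap box blur, clamping indices at the edges."""
    n = len(row)
    return [
        (row[max(i - 1, 0)] + row[i] + row[min(i + 1, n - 1)]) / 3.0
        for i in range(n)
    ]


def degrade_structure(depth_row: List[float], t_s: int) -> List[float]:
    """Apply t_s blur passes; larger t_s keeps less structural detail."""
    out = list(depth_row)
    for _ in range(t_s):
        out = box_blur(out)
    return out


def max_step(row: List[float]) -> float:
    """Largest jump between neighbours, used here as a crude sharpness measure."""
    return max(abs(b - a) for a, b in zip(row, row[1:]))


if __name__ == "__main__":
    depth_row = [0.0, 0.0, 0.0, 9.0, 0.0, 0.0, 0.0]  # made-up depth values
    for t_s in range(3):
        blurred = degrade_structure(depth_row, t_s)
        print(t_s, blurred, max_step(blurred))
```

A model that has seen all of these levels during training can, in principle, be given a sharp representation when the edit should follow the input closely and a heavily blurred one when the prompt should dominate.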

Method

For this study, it helps to consider a video in terms of both content and structure. Structure here means the features that describe geometry and dynamics, such as the shape and position of subjects and how they change over time. Content means the features that describe the appearance and semantics of a video, such as the colors and styles of objects and the lighting of the scene. The goal of the Gen-1 model is to edit the content of a video while preserving its structure.

To achieve this goal, the researchers learn a generative model p(x|s, c) of videos x, conditioned on a structure representation (denoted s) and a content representation (denoted c). They infer the structure representation s from the input video and modify the video based on a text prompt c describing the edit. The paper first describes the implementation of the generative model as a conditional latent video diffusion model, then the choice of structure and content representations, and finally the optimization process of the model.

The model structure is shown in Figure 2.

Experiment

To evaluate the method, the researchers used DAVIS videos and a variety of stock footage. To create editing prompts automatically, they first ran a captioning model to obtain a description of the original video content and then used GPT-3 to generate edited prompts.

Qualitative results

As shown in Figure 5, the method performs well on a variety of inputs.

User study

The researchers also conducted a user study using Amazon Mechanical Turk (AMT) on an evaluation set of 35 representative video editing prompts. For each sample, 5 annotators were asked to compare how faithfully the baseline method and the proposed method followed the video editing prompt ("Which video better represents the provided edited caption?"). The two videos were presented in random order, and a majority vote determined the final result.
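The majority-vote aggregation described above is simple to sketch. The vote data and the labels "ours" and "baseline" below are made up for illustration; only the procedure (several annotators per prompt, majority decides) comes from the text.

```python
from collections import Counter
from typing import Dict, List


def majority_vote(votes: List[str]) -> str:
    """Return the label chosen by most annotators.
    With an odd number of annotators and two options there are no ties."""
    winner, _ = Counter(votes).most_common(1)[0]
    return winner


def preference_rate(all_votes: Dict[str, List[str]], method: str) -> float:
    """Fraction of prompts on which `method` wins the majority vote."""
    wins = sum(1 for votes in all_votes.values() if majority_vote(votes) == method)
    return wins / len(all_votes)


if __name__ == "__main__":
    # Hypothetical annotations: 5 annotators per editing prompt.
    votes = {
        "prompt_a": ["ours", "ours", "baseline", "ours", "baseline"],
        "prompt_b": ["baseline", "baseline", "baseline", "ours", "ours"],
    }
    print(preference_rate(votes, "ours"))
```

Using an odd number of annotators, as in the study, guarantees that a two-way comparison always has a clear winner.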

The results are shown in Figure 7:

Quantitative evaluation

Figure 6 shows the results of each model on the frame consistency and prompt consistency metrics. The model in this paper tends to outperform the baseline models on both (i.e., it sits higher toward the upper right of the figure). The researchers also noticed a slight tradeoff when increasing the strength parameter in the baseline models: a larger strength scale means higher prompt consistency at the cost of lower frame consistency. They also observed that increasing the structure scale leads to higher prompt consistency, because the content becomes less determined by the input structure.
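The article does not spell out how the two metrics are computed. A minimal sketch, assuming both are average cosine similarities over embedding vectors (for example from a CLIP-style encoder): frame consistency compares consecutive frames, and prompt consistency compares each frame with the edit prompt. The embeddings below are placeholder numbers, not real model outputs.

```python
import math
from typing import List

Vector = List[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def frame_consistency(frame_embeddings: List[Vector]) -> float:
    """Mean similarity between each pair of consecutive frame embeddings."""
    pairs = list(zip(frame_embeddings, frame_embeddings[1:]))
    return sum(cosine_similarity(a, b) for a, b in pairs) / len(pairs)


def prompt_consistency(prompt_embedding: Vector, frame_embeddings: List[Vector]) -> float:
    """Mean similarity between the prompt embedding and every frame embedding."""
    sims = [cosine_similarity(prompt_embedding, f) for f in frame_embeddings]
    return sum(sims) / len(sims)


if __name__ == "__main__":
    frames = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # placeholder embeddings
    prompt = [1.0, 0.0]                            # placeholder embedding
    print(frame_consistency(frames))
    print(prompt_consistency(prompt, frames))
```

Under these assumptions, a video that flickers between frames scores low on the first metric, while a video that ignores the prompt scores low on the second, which is why the two can trade off against each other.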

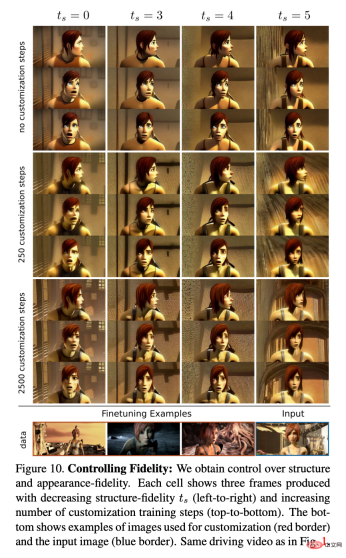

Customization

Figure 10 shows an example with different numbers of customization steps and different levels of structure adherence ts. The researchers observed that customization improves fidelity to the character's style and appearance, so that, combined with higher ts values, accurate animations are possible even when the driving video shows a person with different characteristics.

The above is the detailed content of Adding special effects only requires one sentence or a picture. The company Stable Diffusion has used AIGC to play new tricks.. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

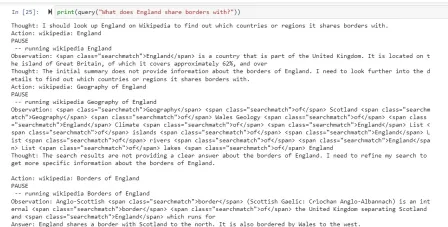

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

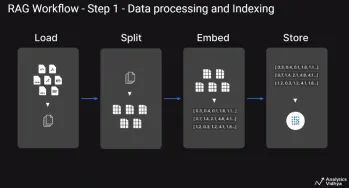

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools