Deploying a domestic ChatGPT needs only 6 GB of video memory! ChatYuan is open for download: the industry's first open-source Chinese large model for functional dialogue

Some time ago, the Yuanyu Intelligence development team trained ChatYuan, a large functional dialogue model similar to ChatGPT, and opened a trial interface on the web.

Now you can also deploy ChatYuan on your own machine!

The model can be used in question-answering scenarios. It can hold context-aware dialogues and perform various generation tasks, including creative writing, and it can also answer questions in fields such as law and COVID-19.

It also supports zero-shot learning on Chinese tasks: users only need to provide a prompt. It covers nearly 30 kinds of Chinese tasks in the categories of text generation, information extraction, and understanding.

ChatYuan is PromptCLUE-large further trained on hundreds of millions of functional question-answer and multi-turn dialogue samples. The model has 770 million parameters and needs about 6 GB of video memory, so it can be loaded on a consumer graphics card. The model is now open for download.
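The 6 GB figure is easier to interpret with a little arithmetic. The sketch below is an illustration, not code from the ChatYuan project: it counts only the bytes needed to hold the weights at a given precision. That the remainder of the ~6 GB goes to activations, generation buffers, and framework overhead is an assumption, not a breakdown given by the authors.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption: memory = parameters x bytes per parameter; activations,
# KV/generation buffers and framework overhead are NOT included.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str = "fp32") -> float:
    """Return weight memory in decimal gigabytes (1 GB = 10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 10**9


if __name__ == "__main__":
    n = 770_000_000  # ChatYuan's parameter count as stated in the article
    for dtype in ("fp32", "fp16", "int8"):
        print(f"{dtype}: {weight_memory_gb(n, dtype):.2f} GB")
```

For 770 million parameters this gives 3.08 GB at fp32 and 1.54 GB at fp16, which suggests why a consumer card can hold the model with room left for inference.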

PromptCLUE was pre-trained on a 100-billion-token Chinese corpus, has learned from a cumulative 1.5 trillion Chinese tokens, and received prompt-based task training on hundreds of tasks.

For understanding tasks such as classification, sentiment analysis, and extraction, the label set can be customized; for generation tasks, outputs can be sampled freely.
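To make the custom-label idea concrete, here is a sketch of a zero-shot classification prompt whose label set is supplied by the caller. The template (task name, text, an options line `选项` "options", and an answer cue `答案` "answer") is an assumed PromptCLUE-style layout, not a format confirmed by this article, and `classification_prompt` is a hypothetical helper.

```python
def classification_prompt(task: str, text: str, labels: list) -> str:
    """Build a zero-shot classification prompt with a caller-defined label set.

    Assumed PromptCLUE-style layout (hypothetical, check the project docs):
        <task>：
        <text>
        选项：<label1>，<label2>，...
        答案：
    """
    if not labels:
        raise ValueError("labels must be non-empty")
    # Full-width comma and colon, as commonly used in Chinese prompts.
    options = "，".join(labels)
    return f"{task}：\n{text}\n选项：{options}\n答案："
```

For example, `classification_prompt("情感分析", "这个产品非常好用", ["积极", "消极"])` asks for sentiment analysis with the custom labels "positive"/"negative"; swapping in a different label list changes the label system without retraining.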

How to use

1. Github

Project address: https://github.com/clue-ai/ChatYuan

2. Huggingface

Project address: https://huggingface.co/ClueAI/ChatYuan-large-v1
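A sketch of loading the Hugging Face checkpoint with the `transformers` library and asking it one question. It follows the pattern of the model card as best recalled (standard T5 classes, a `用户：...\n小元：` prompt where 用户 is "User" and 小元 is "Xiao Yuan", and newlines escaped as a literal `\n`); treat the model id, prompt layout, and generation settings as assumptions to verify against the project README.

```python
# Assumptions: "ClueAI/ChatYuan-large-v1" loads with the standard T5 classes,
# and prompts use the "用户：...\n小元：" (User / Xiao Yuan) layout.

def preprocess(text: str) -> str:
    # Escape real newlines/tabs into literal "\n"/"\t" before tokenizing.
    return text.replace("\n", "\\n").replace("\t", "\\t")


def postprocess(text: str) -> str:
    # Undo the escaping applied by preprocess().
    return text.replace("\\n", "\n").replace("\\t", "\t")


def build_prompt(user_text: str) -> str:
    # One user turn followed by the cue for the assistant's reply.
    return f"用户：{user_text}\n小元："


def load(model_id: str = "ClueAI/ChatYuan-large-v1"):
    # Imported lazily so the string helpers work without transformers installed.
    from transformers import T5ForConditionalGeneration, T5Tokenizer

    tokenizer = T5Tokenizer.from_pretrained(model_id)
    model = T5ForConditionalGeneration.from_pretrained(model_id)
    return tokenizer, model


def answer(tokenizer, model, user_text: str, sample: bool = True,
           top_p: float = 1.0, temperature: float = 0.7) -> str:
    text = preprocess(build_prompt(user_text))
    enc = tokenizer([text], truncation=True, max_length=768,
                    return_tensors="pt").to(model.device)
    if sample:
        # Sampling gives more varied output, suited to creative generation.
        out = model.generate(**enc, max_new_tokens=512, do_sample=True,
                             top_p=top_p, temperature=temperature)
    else:
        # Greedy decoding for more deterministic answers.
        out = model.generate(**enc, max_new_tokens=512, num_beams=1)
    return postprocess(tokenizer.decode(out[0], skip_special_tokens=True))
```

Usage: `tok, mdl = load()` followed by `print(answer(tok, mdl, "写一首关于冬天的诗"))` ("Write a poem about winter"). Calling `mdl.to("cuda")` first runs generation on the GPU.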

3. ModelScope

Load model:

Project address (PaddlePaddle version on Baidu AI Studio): https://aistudio.baidu.com/aistudio/projectdetail/5404182

Model address: https://huggingface.co/ClueAI/ChatYuan-large-v1-paddle

Training the model on your own data

1. Organize the data

Organize the data into a unified format consisting of two parts: input (Input) and output (Output).

For single-turn question answering or generation:

Input: task description + input text

Output: the text the system needs to predict, i.e. the content after "Xiaoyuan" in the figure below.

For multi-turn dialogue:

Input: task description + preceding context + input text

Output: the text the system needs to predict, i.e. the content after "Xiaoyuan" in the figure below.

Single-turn dialogue data format:

Multi-turn dialogue data format:
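A sketch of single-turn and multi-turn samples written as JSON Lines with `input`/`output` fields, following the description above: the input holds the task text (plus earlier turns for multi-turn dialogue) and the output holds the reply to predict. The field names, the `用户：`/`小元：` (User/Xiao Yuan) turn prefixes, and the helper names are assumptions for illustration; check the project README for the exact format.

```python
import json


def single_turn_record(task_input: str, target: str) -> dict:
    """One single-turn sample: prompt in "input", expected reply in "output"."""
    return {"input": f"用户：{task_input}\n小元：", "output": target}


def multi_turn_record(history, question: str, target: str) -> dict:
    """history: list of (user, assistant) pairs from earlier turns.

    Earlier turns become context inside "input"; only the final reply
    is the prediction target.
    """
    lines = []
    for user, bot in history:
        lines.append(f"用户：{user}")
        lines.append(f"小元：{bot}")
    lines.append(f"用户：{question}")
    lines.append("小元：")
    return {"input": "\n".join(lines), "output": target}


def to_jsonl(records) -> str:
    # One JSON object per line; ensure_ascii=False keeps Chinese readable.
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)
```

Keeping earlier turns in the input and only the last reply in the output means the model learns to condition on context without being trained to reproduce it.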

2. Training the model

For training code, please refer to:

Training, prediction, and evaluation on the pCLUE dataset, PyTorch implementation (online Colab):

Colab notebook: https://colab.research.google.com/drive/1lEyFhEfoc-5Z5xqpEKkZt_iMaojH1MP_?usp=sharing
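For orientation before opening the notebook, here is a minimal sketch of one seq2seq fine-tuning step for a T5-style model such as ChatYuan. It is not the notebook's code: it assumes `model` is a `transformers` `T5ForConditionalGeneration`, `tokenizer` its tokenizer, and `optimizer` a PyTorch optimizer, and `mask_padding`/`train_step` are hypothetical names.

```python
# Minimal seq2seq fine-tuning step (illustrative sketch, not the project's code).

def mask_padding(label_ids, pad_token_id):
    """Replace pad ids with -100 so the cross-entropy loss ignores them."""
    return [[-100 if t == pad_token_id else t for t in seq] for seq in label_ids]


def train_step(model, tokenizer, optimizer, inputs, outputs, max_len=512):
    import torch  # lazy import: mask_padding() stays usable without PyTorch

    device = next(model.parameters()).device
    enc = tokenizer(inputs, truncation=True, padding=True,
                    max_length=max_len, return_tensors="pt").to(device)
    # Targets are padded to a common length, then pad ids are masked out.
    tgt = tokenizer(outputs, truncation=True, padding=True, max_length=max_len)
    labels = torch.tensor(mask_padding(tgt["input_ids"], tokenizer.pad_token_id),
                          device=device)

    model.train()
    # T5 builds decoder inputs internally by shifting `labels` right.
    loss = model(input_ids=enc["input_ids"],
                 attention_mask=enc["attention_mask"],
                 labels=labels).loss
    loss.backward()
    optimizer.step()
    optimizer.zero_grad()
    return loss.item()
```

Usage: create an optimizer such as `torch.optim.AdamW(model.parameters(), lr=1e-4)` (the learning rate is a placeholder, not a project value) and call `train_step` on batches of input/output strings. If newlines are escaped as a literal `\n` at inference time, training inputs should be escaped the same way.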

Examples

==========Example 1==========

User:

What can you do?

Xiao Yuan:

Hello! I am Yuanyu AI. I can answer your questions, write articles and assignments, and translate, and I can also provide information on legal and other issues.

==========Example 2==========

User:

Write a poem about winter

Xiao Yuan:

The winter night is quiet and cold,

Clouds are floating in the sky,

On the frozen white snow,

Cold like snow.

Snowflakes melt into ice,

Like those snowflakes falling,

In the cold winter,

Feel the joy of spring.

In the winter,

The wind and snow gradually subsided,

There was silence,

Bring happiness and warmth home.

==========Example 3==========

User:

Students majoring in preschool education run into problems during their internships. Please propose measures for improvement. 800 words

Xiao Yuan:

During preschool education internships, students run into some problems, such as a lack of professional knowledge, weak awareness of cooperation, and a lack of basic responsibility. We need to take measures to solve these problems.

First of all, during the internship we need to cultivate students' teamwork awareness, strengthen cooperation between students and teachers, strengthen communication with parents, and improve their communication skills. We also need to strengthen students' independence, letting them learn to think for themselves and to communicate with teachers and parents.

In addition, in daily work we should give students suitable opportunities to have more contact with society and take part in more social activities, deepening their understanding of society and school and cultivating their initiative, positivity, and spirit of innovation.

Finally, we need to pay attention to the problems students show during internships, especially a lack of cooperation, dependence, not knowing how to share, and procrastination. We should pay attention to these students and formulate reasonable measures to address these problems.

Technology and training process

ChatYuan was obtained by further training PromptCLUE. It drops the text-understanding and information-extraction tasks and strengthens training on question answering, dialogue, and various generation tasks. Anti-interference data was added so that the model can ignore irrelevant context when necessary, and learning from user-feedback data was added so that the model not only has general language understanding and task-specific generation abilities but also responds better to user intent.

The learning process of PromptCLUE

- Three unifications: a unified model framework (text-to-text), a unified task form (prompt), and a unified application method (zero-shot/few-shot) (T0)

- Large-scale pre-training: based on the t5-large version, trained for 1 million steps on hundreds of GB of Chinese corpus, for a cumulative 1.5 trillion Chinese character-level tokens

- Large-scale task data: 16 task types, hundreds of tasks, and task data on the order of 100 million samples

- Hybrid pre-training: downstream tasks are used as pre-training corpus, and downstream tasks are also trained together with the pre-training corpus. This reduces catastrophic forgetting, shortens the distance between pre-training and downstream tasks, and helps the model adapt to downstream tasks (ExT5)

- Hybrid sampling: for many tasks whose data volumes differ greatly, every training batch samples all tasks in proportion, with smoothing according to each task's data volume and a cap on the size of each task's sampling pool. Smooth sampling reduces the harm of training that is biased toward some tasks, and training all tasks within each batch reduces negative transfer between heterogeneous tasks (T5)

- Phased training: in pre-training, the training sequence length is staged (128, then 512) to speed up pre-training (BERT); in downstream training, the learning rate and sequence length change across stages and the cap on downstream task data decreases, so the model adapts better to different downstream tasks

- Additional language-model training: following t5.1.1, in addition to unsupervised training with span corruption, the model is also trained with the prefix-LM objective to strengthen its generation ability (LM-adapted)

- Additional encoder and decoder training: Data_text and Data_target pre-training corpora are built from the downstream task data and added to pre-training, strengthening the encoder's understanding ability and the decoder's generation ability respectively (see UIE)

- Rebuilt Chinese vocabulary: the model vocabulary was learned with sentencepiece on 100 billion tokens, so it better fits Chinese usage
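The hybrid-sampling bullet can be illustrated with capped, temperature-smoothed mixing in the spirit of T5's examples-proportional mixing: each task's size is clipped at a cap K before its share is computed, and a temperature T > 1 flattens the shares further toward small tasks. The sketch then splits one batch across tasks in those proportions. K, T, and the function names are assumptions; the article does not give PromptCLUE's actual values or code.

```python
# Capped, temperature-smoothed task mixing (illustrative sketch).

def mixing_rates(task_sizes: dict, cap: int, temperature: float = 1.0) -> dict:
    """Return each task's sampling probability.

    Sizes are clipped at `cap` so huge tasks cannot dominate, then raised to
    1/temperature (temperature > 1 shifts probability toward small tasks).
    """
    if cap <= 0 or temperature <= 0:
        raise ValueError("cap and temperature must be positive")
    weights = {t: min(n, cap) ** (1.0 / temperature) for t, n in task_sizes.items()}
    total = sum(weights.values())
    return {t: w / total for t, w in weights.items()}


def batch_composition(task_sizes: dict, batch_size: int, cap: int,
                      temperature: float = 1.0) -> dict:
    """Split one batch across tasks in proportion to their mixing rates.

    Uses largest-remainder rounding so the counts always sum to batch_size;
    a very small task may still get 0 slots in a given batch.
    """
    rates = mixing_rates(task_sizes, cap, temperature)
    exact = {t: r * batch_size for t, r in rates.items()}
    counts = {t: int(x) for t, x in exact.items()}
    leftover = batch_size - sum(counts.values())
    # Give the remaining slots to tasks with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda t: exact[t] - counts[t], reverse=True)
    for t in by_remainder[:leftover]:
        counts[t] += 1
    return counts
```

With sizes `{"a": 1_000_000, "b": 10_000, "c": 1_000}` and `cap=100_000`, task "a" is treated as if it had only 100,000 examples, so its share drops from about 99% to about 90%; raising the temperature to 2 shifts more of the batch to "b" and "c".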

Follow-up work

The current version can handle question answering, dialogue, and various kinds of creative writing and text generation. Compared with the online version, its intent understanding and generation still have considerable room for improvement in some situations, and it does not yet handle reasoning or complex tasks well. The current version will be improved further based on feedback.


Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

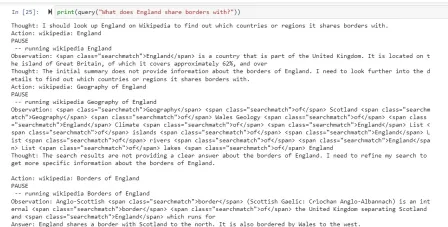

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

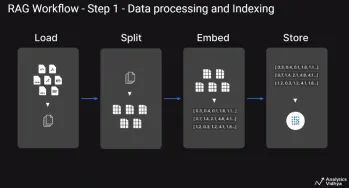

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.