NVIDIA uses AI to design GPU arithmetic circuits that are 25% smaller than those from state-of-the-art EDA tools, as well as faster and more efficient

NVIDIA GPUs are powered by vast arrays of arithmetic circuits, which enable unprecedented acceleration of AI, high-performance computing, and computer graphics. Improving the design of these arithmetic circuits is therefore critical to improving GPU performance and efficiency. What if AI learned to design these circuits? In a recent NVIDIA paper, "PrefixRL: Optimization of Parallel Prefix Circuits using Deep Reinforcement Learning," researchers demonstrated that AI can not only design these circuits from scratch, but that the AI-designed circuits are also smaller and faster than those designed by state-of-the-art electronic design automation (EDA) tools.

Paper: https://arxiv.org/pdf/2205.07000.pdf

The latest NVIDIA Hopper GPU architecture contains nearly 13,000 instances of AI-designed circuits. Figure 1: The 64b adder circuit designed by PrefixRL (left) is 25% smaller than the circuit designed by a state-of-the-art EDA tool (right).

Arithmetic circuits in computer chips are built from networks of logic gates (such as NAND, NOR, and XOR) and wires. An ideal circuit should have the following attributes:

- Small: a smaller area, so that more circuits fit on the chip;

- Fast: a lower delay, which improves chip performance;

- Low power: lower power consumption.

In this NVIDIA study, the researchers focused on circuit area and delay. They found that power consumption correlates closely with area for the circuits of interest. Circuit area and delay are often competing properties, so the goal is to find the Pareto frontier of designs that trade these properties off effectively. In short, the researchers want the circuit with the minimum area at each delay.
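To make the Pareto frontier idea concrete, here is a minimal sketch that filters a set of candidate designs down to the non-dominated ones. The `(delay, area)` values are made-up illustration data, not results from the paper, and `pareto_frontier` is a hypothetical helper name.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (delay, area); lower is better for both


def pareto_frontier(points: List[Point]) -> List[Point]:
    """Return the points that no other point dominates, sorted by delay."""
    frontier: List[Point] = []
    best_area = float("inf")
    # After sorting by delay (then area), a point is on the frontier only if
    # it has strictly less area than every faster-or-equal design seen so far.
    for delay, area in sorted(set(points)):
        if area < best_area:
            frontier.append((delay, area))
            best_area = area
    return frontier


# Hypothetical candidate designs (illustration only).
designs = [(1.0, 50.0), (1.2, 40.0), (1.1, 55.0), (1.5, 40.0), (2.0, 30.0)]
print(pareto_frontier(designs))
```

Each point that survives is the smallest known circuit at its delay, which is the "minimum area at each delay" objective described above.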

In PrefixRL, the researchers focus on a popular class of arithmetic circuits: parallel prefix circuits. Many important circuits in the GPU, such as adders, incrementers, and encoders, are prefix circuits, and they can be specified at a higher level as prefix graphs.
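As background on why an adder is a prefix circuit, the sketch below uses the standard textbook generate/propagate formulation (it is not taken from the paper). Carries are computed by scanning an associative operator over per-bit `(generate, propagate)` pairs. The scan here is serial; a parallel prefix circuit computes the same values with a different grouping of the operator. The names `combine` and `prefix_add` are illustrative.

```python
from typing import List, Tuple

GP = Tuple[int, int]  # (generate, propagate) bits for a range of bit positions


def combine(hi: GP, lo: GP) -> GP:
    """Associative prefix operator: merge a higher bit range with the range below it."""
    g_hi, p_hi = hi
    g_lo, p_lo = lo
    return (g_hi | (p_hi & g_lo), p_hi & p_lo)


def prefix_add(a: int, b: int, n: int) -> int:
    """Add two n-bit integers by computing carries as a prefix scan."""
    bits = [((a >> i) & 1, (b >> i) & 1) for i in range(n)]
    gp: List[GP] = [(x & y, x ^ y) for x, y in bits]
    # prefix[i] summarizes bit range [i:0]; its generate bit is the carry out of bit i.
    prefix: List[GP] = [gp[0]]
    for i in range(1, n):
        prefix.append(combine(gp[i], prefix[i - 1]))
    total = 0
    for i in range(n):
        carry_in = prefix[i - 1][0] if i > 0 else 0
        total |= (gp[i][1] ^ carry_in) << i
    total |= prefix[n - 1][0] << n  # final carry-out
    return total


print(prefix_add(5, 3, 4))
```

Because `combine` is associative, the order in which ranges are merged can be rearranged freely, and each valid arrangement corresponds to a different prefix graph.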

The question then is: can AI agents design good prefix graphs? The state space of all prefix graphs is very large, O(2^n^n), and cannot be explored by brute force. Figure 2 below shows one iteration of PrefixRL on a 4b circuit instance.

The researchers used a circuit generator to convert each prefix graph into a circuit with wires and logic gates. The generated circuits are then optimized by a physical synthesis tool, which applies physical synthesis optimizations such as gate sizing, duplication, and buffer insertion.

Because of these physical synthesis optimizations, the final circuit properties (delay, area, and power) do not translate directly from the properties of the original prefix graph (such as level and node count). This is why the AI agent learns to design prefix graphs but optimizes the properties of the final circuit generated from them.

The researchers treat arithmetic circuit design as a reinforcement learning (RL) task, in which an agent is trained to optimize the area and delay of arithmetic circuits. For prefix circuits, they designed an environment in which the RL agent can add or remove a node in the prefix graph, after which the following steps are performed:

- The prefix graph is normalized so that it always maintains a correct prefix sum computation;

- A circuit is generated from the normalized prefix graph;

- The circuit goes through physical synthesis optimization with a physical synthesis tool;

- The area and delay of the circuit are measured.
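The steps above can be sketched as a toy environment. Everything here is an assumption made for illustration: nodes are `(msb, lsb)` bit ranges, the upper parent of a node is taken to be the node with the same `msb` and the next-highest `lsb`, normalization adds any missing lower parent, and node count and graph depth stand in for the synthesized area and delay, which in the real flow come from circuit generation and physical synthesis.

```python
from typing import Dict, Set, Tuple

Node = Tuple[int, int]  # (msb, lsb): the node covers bit range [msb:lsb]


def upper_lsb(nodes: Set[Node], node: Node) -> int:
    """lsb of the assumed upper parent: same msb, next-highest lsb."""
    msb, lsb = node
    return min(l for (m, l) in nodes if m == msb and l > lsb)


def legalize(nodes: Set[Node], n: int) -> Set[Node]:
    """Add inputs (i, i), outputs (i, 0), and any missing lower parents."""
    nodes = set(nodes)
    nodes |= {(i, i) for i in range(n)} | {(i, 0) for i in range(n)}
    changed = True
    while changed:
        changed = False
        for msb, lsb in sorted(nodes):
            if msb == lsb:
                continue
            lower = (upper_lsb(nodes, (msb, lsb)) - 1, lsb)
            if lower not in nodes:
                nodes.add(lower)
                changed = True
    return nodes


def levels(nodes: Set[Node]) -> Dict[Node, int]:
    """Logic level of each node; parents always span fewer bits, so sort by span."""
    level: Dict[Node, int] = {}
    for msb, lsb in sorted(nodes, key=lambda nd: nd[0] - nd[1]):
        if msb == lsb:
            level[(msb, lsb)] = 0
            continue
        k = upper_lsb(nodes, (msb, lsb))
        level[(msb, lsb)] = 1 + max(level[(msb, k)], level[(k - 1, lsb)])
    return level


def step(nodes: Set[Node], action: str, node: Node, n: int):
    """Apply 'add' or 'delete', normalize, and return (state, proxy metrics)."""
    new_nodes = set(nodes)
    if action == "add":
        new_nodes.add(node)
    elif action == "delete":
        new_nodes.discard(node)
    else:
        raise ValueError(f"unknown action: {action}")
    new_nodes = legalize(new_nodes, n)
    metrics = {
        "node_count": sum(1 for m, l in new_nodes if m != l),  # proxy, not real area
        "depth": max(levels(new_nodes).values()),               # proxy, not real delay
    }
    return new_nodes, metrics


ripple = legalize(set(), 4)          # serial (ripple-style) 4b prefix graph
state, metrics = step(ripple, "add", (3, 2), 4)
print(metrics)
```

In this simplified version, deleting a node that another node still needs as a lower parent is undone by normalization. The sketch also makes the cost trade-off visible: adding a node can reduce depth while increasing node count.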

In the animation that accompanies the original article (an interactive version), the RL agent builds the prefix graph step by step by adding or removing nodes. At each step, the agent is rewarded for improvements in circuit area and delay.

Fully convolutional Q-learning agent

The researchers use the Q-learning algorithm to train the circuit-design agent. As shown in Figure 3 below, they decompose the prefix graph into a grid representation, where each element in the grid maps uniquely to a prefix node. This grid representation is used for both the input and the output of the Q-network. Each element of the input grid indicates whether the node is present. Each element of the output grid gives the Q-value of adding or removing that node.

The researchers use a fully convolutional neural network architecture because both the input and the output of the Q-learning agent are grid representations. The agent predicts Q-values for area and delay separately, because the rewards for area and delay are separately observable during training.

Figure 3: 4b prefix graph representation (left) and fully convolutional Q-learning agent architecture (right).
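The grid representation can be illustrated with a small sketch. It assumes nodes are `(msb, lsb)` pairs stored at `grid[msb][lsb]`, and that input cells (`msb == lsb`) and output cells (`lsb == 0`) are not valid actions. The Q-value grids below are hand-written placeholders. In the real agent they would be produced by the fully convolutional network.

```python
from typing import List, Set, Tuple

Node = Tuple[int, int]  # (msb, lsb)


def to_grid(nodes: Set[Node], n: int) -> List[List[int]]:
    """n x n occupancy grid: grid[msb][lsb] is 1 if the node exists, else 0."""
    grid = [[0] * n for _ in range(n)]
    for msb, lsb in nodes:
        grid[msb][lsb] = 1
    return grid


def best_action(grid, q_add, q_delete):
    """Pick the highest-Q action: 'add' for empty cells, 'delete' for occupied ones."""
    best = None
    for msb in range(len(grid)):
        for lsb in range(1, msb):  # skip inputs (msb == lsb) and outputs (lsb == 0)
            if grid[msb][lsb]:
                cand = (q_delete[msb][lsb], "delete", (msb, lsb))
            else:
                cand = (q_add[msb][lsb], "add", (msb, lsb))
            if best is None or cand[0] > best[0]:
                best = cand
    return best


# 4b serial prefix graph: inputs on the diagonal, outputs in column 0.
nodes = {(0, 0), (1, 1), (2, 2), (3, 3), (1, 0), (2, 0), (3, 0)}
grid = to_grid(nodes, 4)

# Placeholder Q-values (illustration only).
q_add = [[0.0] * 4 for _ in range(4)]
q_delete = [[0.0] * 4 for _ in range(4)]
q_add[3][2] = 0.7
q_add[2][1] = 0.2

print(best_action(grid, q_add, q_delete))
```

Because the input and the output share the same grid shape, a network that maps grids to grids is a natural fit, which matches the motivation given for the fully convolutional architecture.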

Raptor for distributed training

PrefixRL is computationally demanding. Physical simulation required 256 CPUs for each GPU, and training the 64b case took over 32,000 GPU hours. For this kind of industrial-scale reinforcement learning, NVIDIA developed Raptor, an in-house distributed reinforcement learning platform that takes advantage of NVIDIA hardware (Figure 4 below).

Raptor has features that improve the scalability and speed of model training, such as job scheduling, custom networking, and GPU-aware data structures. In the context of PrefixRL, Raptor enables hybrid allocation of work across CPUs, GPUs, and spot instances. Networking in this reinforcement learning application is diverse and benefits from the following:

- Raptor can switch to NCCL for point-to-point transfers, sending model parameters directly from the learner GPU to an inference GPU;

- Redis is used for asynchronous and smaller messages such as rewards or statistics;

- A JIT-compiled RPC handles high-volume, low-latency requests such as uploading experience data.

Finally, Raptor provides GPU-aware data structures, such as a replay buffer with a multi-threaded service that receives experience from multiple workers, batches the data in parallel, and preloads it onto the GPU.

Figure 4 below shows that the PrefixRL framework supports concurrent training and data collection, and uses NCCL to efficiently send the latest parameters to the actors.

Figure 4: Researchers use Raptor for decoupled parallel training and reward calculation to overcome circuit synthesis delays.

Reward Calculation

The researchers use a trade-off weight w (in the range [0,1]) to combine the area and delay objectives. They train multiple agents with different weights to obtain a Pareto frontier that balances the trade-off between area and delay.
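One simple way to combine two objectives with a weight is a linear scalarization, sketched below. The exact formula and any normalization used in the paper are not given in this article, so the form `w * area_gain + (1 - w) * delay_gain`, the choice of which term `w` multiplies, and the metric values are all assumptions for illustration.

```python
from typing import Dict


def scalarized_reward(prev: Dict[str, float], curr: Dict[str, float], w: float) -> float:
    """Weighted sum of area and delay improvements (positive means the circuit got better)."""
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must be in [0, 1]")
    area_gain = prev["area"] - curr["area"]
    delay_gain = prev["delay"] - curr["delay"]
    # Assumption: w weights area and (1 - w) weights delay. In practice the two
    # terms would need to be scaled to comparable units first.
    return w * area_gain + (1.0 - w) * delay_gain


# Hypothetical before/after metrics for one agent step.
before = {"area": 100.0, "delay": 2.0}
after = {"area": 90.0, "delay": 2.5}
for w in (0.0, 0.5, 1.0):
    print(w, scalarized_reward(before, after, w))
```

Sweeping `w` from 0 to 1 shifts the emphasis from delay to area, which is how training several agents with different weights can trace out different points of the trade-off curve.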

Physical synthesis optimization in the RL environment can generate a variety of solutions that trade off area and delay. The researchers drive the physical synthesis tool with the same trade-off weight that a particular agent is trained with.

Performing physical synthesis optimization inside the reward computation loop has the following advantages:

- The RL agent learns to directly optimize the final circuit properties for the target technology node and library;

- The RL agent can include the peripheral logic of the target arithmetic circuit during physical synthesis, thereby jointly optimizing the performance of the target arithmetic circuit and its peripheral logic.

However, physical synthesis is slow (about 35 seconds for a 64b adder), which can significantly slow down RL training and exploration.

The researchers decouple reward calculation from state updates, because the agent only needs the current prefix graph state to take an action, not the circuit synthesis results or previous rewards. Thanks to Raptor, they can offload the lengthy reward calculation to a pool of CPU workers that run physical synthesis in parallel, while the actor agents keep stepping through the environment without waiting.

When the CPU workers return the rewards, the transitions can be inserted into the replay buffer. Synthesis rewards are cached to avoid redundant computation when a state is encountered again.
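The decoupling and caching described above can be sketched with Python's standard `concurrent.futures`. This is not Raptor's API: `RewardService`, `collect`, and `fake_synthesis` are hypothetical names, the synthesis function is a trivial stand-in, and a thread pool is used only to keep the sketch self-contained where a real system would use a pool of CPU worker processes or machines.

```python
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Dict, FrozenSet, List, Tuple

State = FrozenSet[Tuple[int, int]]  # hashable prefix graph state


def fake_synthesis(state: State) -> Tuple[float, float]:
    """Hypothetical stand-in for physical synthesis; returns (area, delay)."""
    area = float(len(state))
    delay = float(max(msb - lsb for msb, lsb in state))
    return area, delay


class RewardService:
    """Submits synthesis jobs to a worker pool and caches one result per state."""

    def __init__(self, workers: int = 4) -> None:
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._cache: Dict[State, Future] = {}
        self.submitted = 0  # number of synthesis jobs actually launched

    def request(self, state: State) -> Future:
        future = self._cache.get(state)
        if future is None:  # only synthesize states not seen before
            future = self._pool.submit(fake_synthesis, state)
            self._cache[state] = future
            self.submitted += 1
        return future

    def close(self) -> None:
        self._pool.shutdown(wait=True)


def collect(service: RewardService, trajectory) -> List[tuple]:
    """Step through transitions without blocking, then fill the replay buffer."""
    pending = []
    for state, action, next_state in trajectory:
        # The actor moves on immediately; rewards arrive later via futures.
        pending.append((state, action, next_state,
                        service.request(state), service.request(next_state)))
    replay = []
    for state, action, next_state, f_prev, f_next in pending:
        (a0, d0), (a1, d1) = f_prev.result(), f_next.result()
        replay.append((state, action, next_state, a0 - a1, d0 - d1))
    return replay


s0: State = frozenset({(0, 0), (1, 1), (1, 0)})
s1: State = frozenset(s0 | {(2, 2), (2, 0)})
service = RewardService()
replay = collect(service, [(s0, "add", s1), (s1, "delete", s0)])
service.close()
print(service.submitted, [t[3:] for t in replay])
```

Each stored transition carries separate area and delay improvements, and revisiting a state reuses the cached future instead of launching another synthesis job.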

Results and Outlook

Figure 5 below shows the area and delay of 64b adder circuits designed with PrefixRL, together with the Pareto-dominated adder circuits from a state-of-the-art EDA tool.

The best PrefixRL adders achieve 25% less area than the EDA tool's adders at the same delay. The prefix graphs that map to these Pareto-optimal adder circuits after physical synthesis optimization have irregular structures.

Figure 5: Arithmetic circuits designed by PrefixRL are smaller and faster than circuits designed by a state-of-the-art EDA tool. (Left) Circuit architectures; (right) the corresponding 64b adder circuit characteristics.

To the best of our knowledge, this is the first method to use a deep reinforcement learning agent to design arithmetic circuits. NVIDIA hopes the approach can serve as a blueprint for applying AI to real-world circuit design problems: constructing action spaces, state representations, and RL agent models, optimizing for multiple competing objectives, and overcoming slow reward computation.

The above is the detailed content of NVIDIA uses AI to design GPU arithmetic circuits, which reduce the area by 25% compared to the most advanced EDA, making it faster and more efficient. For more information, please follow other related articles on the PHP Chinese website!

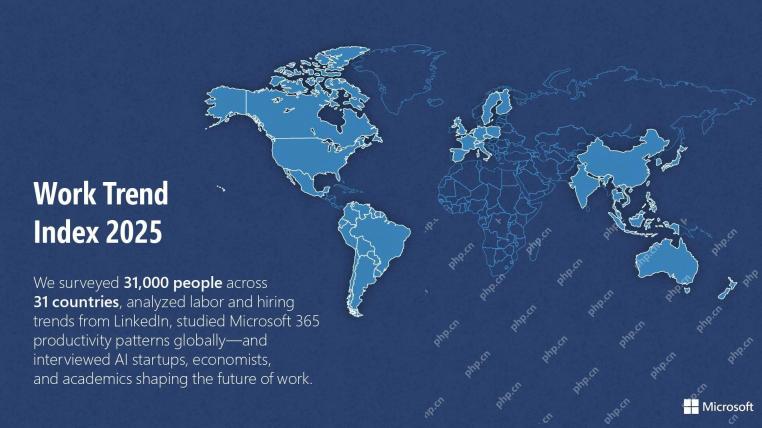

Microsoft Work Trend Index 2025 Shows Workplace Capacity StrainApr 24, 2025 am 11:19 AM

Microsoft Work Trend Index 2025 Shows Workplace Capacity StrainApr 24, 2025 am 11:19 AMThe burgeoning capacity crisis in the workplace, exacerbated by the rapid integration of AI, demands a strategic shift beyond incremental adjustments. This is underscored by the WTI's findings: 68% of employees struggle with workload, leading to bur

Can AI Understand? The Chinese Room Argument Says No, But Is It Right?Apr 24, 2025 am 11:18 AM

Can AI Understand? The Chinese Room Argument Says No, But Is It Right?Apr 24, 2025 am 11:18 AMJohn Searle's Chinese Room Argument: A Challenge to AI Understanding Searle's thought experiment directly questions whether artificial intelligence can genuinely comprehend language or possess true consciousness. Imagine a person, ignorant of Chines

China's 'Smart' AI Assistants Echo Microsoft Recall's Privacy FlawsApr 24, 2025 am 11:17 AM

China's 'Smart' AI Assistants Echo Microsoft Recall's Privacy FlawsApr 24, 2025 am 11:17 AMChina's tech giants are charting a different course in AI development compared to their Western counterparts. Instead of focusing solely on technical benchmarks and API integrations, they're prioritizing "screen-aware" AI assistants – AI t

Docker Brings Familiar Container Workflow To AI Models And MCP ToolsApr 24, 2025 am 11:16 AM

Docker Brings Familiar Container Workflow To AI Models And MCP ToolsApr 24, 2025 am 11:16 AMMCP: Empower AI systems to access external tools Model Context Protocol (MCP) enables AI applications to interact with external tools and data sources through standardized interfaces. Developed by Anthropic and supported by major AI providers, MCP allows language models and agents to discover available tools and call them with appropriate parameters. However, there are some challenges in implementing MCP servers, including environmental conflicts, security vulnerabilities, and inconsistent cross-platform behavior. Forbes article "Anthropic's model context protocol is a big step in the development of AI agents" Author: Janakiram MSVDocker solves these problems through containerization. Doc built on Docker Hub infrastructure

Using 6 AI Street-Smart Strategies To Build A Billion-Dollar StartupApr 24, 2025 am 11:15 AM

Using 6 AI Street-Smart Strategies To Build A Billion-Dollar StartupApr 24, 2025 am 11:15 AMSix strategies employed by visionary entrepreneurs who leveraged cutting-edge technology and shrewd business acumen to create highly profitable, scalable companies while maintaining control. This guide is for aspiring entrepreneurs aiming to build a

Google Photos Update Unlocks Stunning Ultra HDR For All Your PicturesApr 24, 2025 am 11:14 AM

Google Photos Update Unlocks Stunning Ultra HDR For All Your PicturesApr 24, 2025 am 11:14 AMGoogle Photos' New Ultra HDR Tool: A Game Changer for Image Enhancement Google Photos has introduced a powerful Ultra HDR conversion tool, transforming standard photos into vibrant, high-dynamic-range images. This enhancement benefits photographers a

Descope Builds Authentication Framework For AI Agent IntegrationApr 24, 2025 am 11:13 AM

Descope Builds Authentication Framework For AI Agent IntegrationApr 24, 2025 am 11:13 AMTechnical Architecture Solves Emerging Authentication Challenges The Agentic Identity Hub tackles a problem many organizations only discover after beginning AI agent implementation that traditional authentication methods aren’t designed for machine-

Google Cloud Next 2025 And The Connected Future Of Modern WorkApr 24, 2025 am 11:12 AM

Google Cloud Next 2025 And The Connected Future Of Modern WorkApr 24, 2025 am 11:12 AM(Note: Google is an advisory client of my firm, Moor Insights & Strategy.) AI: From Experiment to Enterprise Foundation Google Cloud Next 2025 showcased AI's evolution from experimental feature to a core component of enterprise technology, stream

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 English version

Recommended: Win version, supports code prompts!

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

Atom editor mac version download

The most popular open source editor