Home >Technology peripherals >AI >Machine learning is a blessing from heaven! Data scientist and Kaggle master releases 'ML pitfall avoidance guide'

Machine learning is a blessing from heaven! Data scientist and Kaggle master releases 'ML pitfall avoidance guide'

- PHPzforward

- 2023-04-12 20:40:011409browse

Data science and machine learning are becoming increasingly popular.

The number of people entering this field is growing every day.

This means that many data scientists do not have extensive experience when building their first machine learning models, so they are prone to mistakes.

Here are some of the most common beginner mistakes in machine learning solutions.

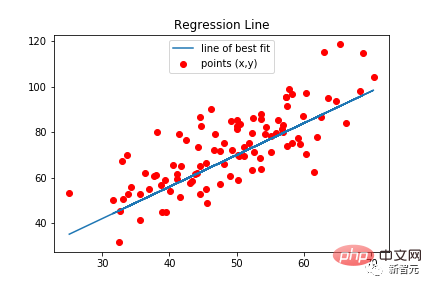

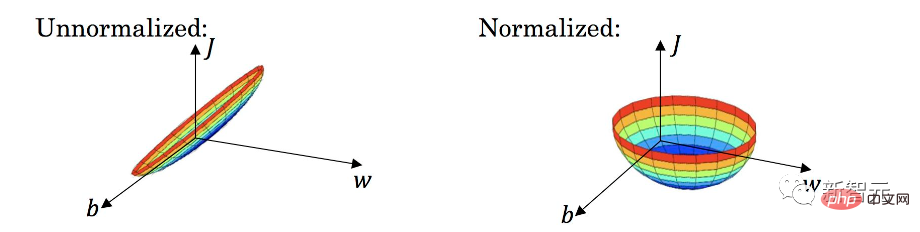

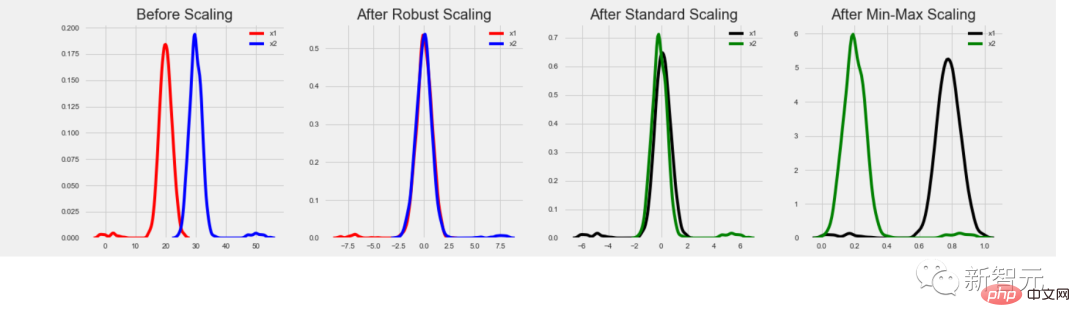

Data normalization is not used where needed

Yes For beginners, it may seem like a no-brainer to put features into a model and wait for it to give predictions.

But in some cases, the results may be disappointing because you missed a very important step.

Certain types of models require data normalization, including linear regression, classic neural networks, etc. These types of models use feature values multiplied by trained weights. If features are not normalized, it can happen that the range of possible values for one feature is very different from the range of possible values for another feature.

Assume that the value of one feature is in the range [0, 0.001] and the value of the other feature is in the range [100000, 200000]. For a model where two features are equally important, the weight of the first feature will be 100'000'000 times the weight of the second feature. Huge weights can cause serious problems for the model. For example, there are some outliers.

Furthermore, estimating the importance of various features can become very difficult, since a large weight may mean that the feature is important, or it may simply mean that it has a small value.

After normalization, all features are within the same value range, usually [0, 1] or [-1, 1]. In this case, the weights will be in a similar range and will closely correspond to the true importance of each feature.

Overall, using data normalization where needed will produce better, more accurate predictions.

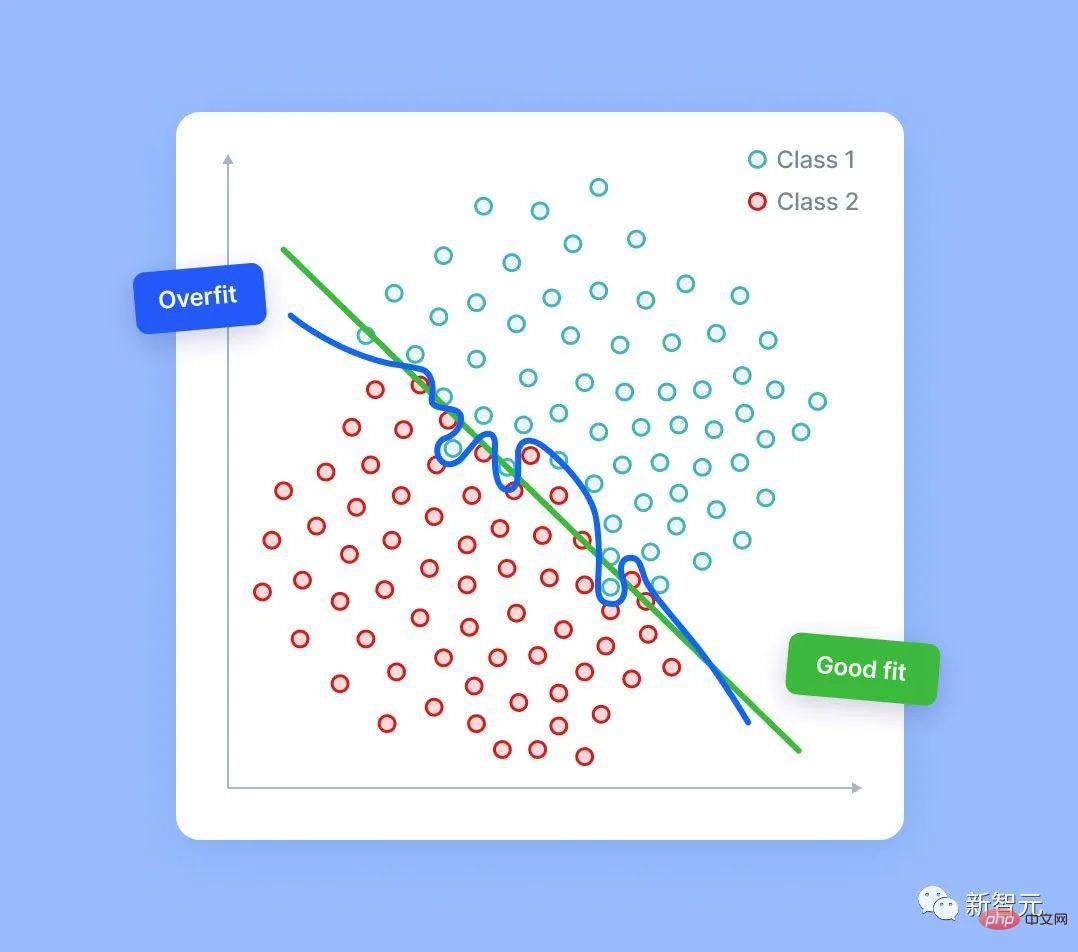

Think that the more features, the better

Some people may think that the more features, the better, so that the model will automatically select and use the best features.

In practice, this is not the case. In most cases, a model with carefully designed and selected features will significantly outperform a similar model with 10x more features.

The more features a model has, the greater the risk of overfitting. Even in completely random data, the model is able to find some signals—sometimes weaker, sometimes stronger.

Of course, there is no real signal in random noise. However, if we have enough noise columns, it is possible for the model to use some of them based on the detected error signal. When this happens, the quality of model predictions decreases because they will be based in part on random noise.

There are indeed various techniques for feature selection that can help in this situation. But this article does not discuss them.

Remember, the most important thing is - you should be able to explain every feature you have and understand why this feature will help your model.

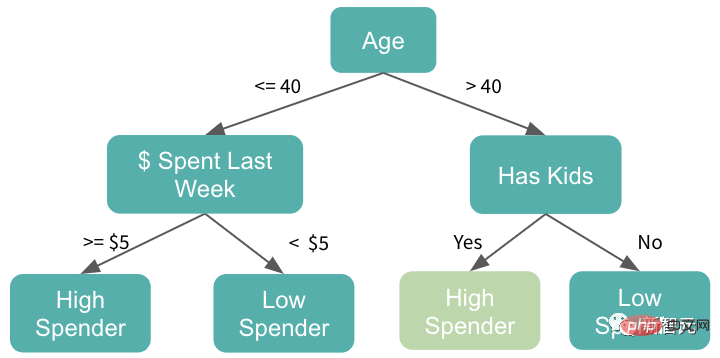

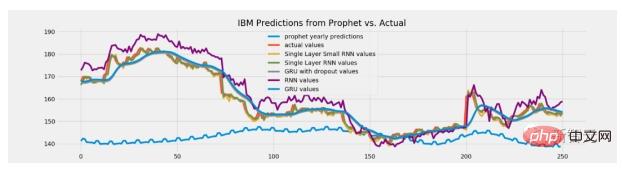

Use tree-based models when extrapolation is required

The main reason why tree models are popular is not only because of its strength, but also because it is very good use.

#However, it is not always tried and true. In some cases, using a tree-based model may well be a mistake.

Tree models have no inference capabilities. These models never give predicted values greater than the maximum value seen in the training data. They also never output predictions smaller than the minimum in training.

But in some tasks, the ability to extrapolate may play a major role. For example, if this model is used to predict stock prices, it is possible that future stock prices will be higher than ever before. So in this case, tree-based models will no longer be suitable as their predictions will be limited to levels close to all-time high prices.

So how to solve this problem?

In fact, all roads lead to Rome!

One option is to predict changes or differences instead of directly predicting values.

Another solution is to use a different model type for such tasks, such as linear regression or neural networks capable of extrapolation.

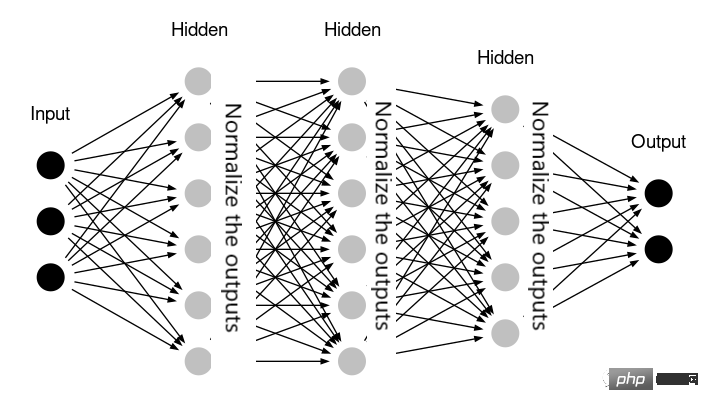

Superfluous normalization

Everyone must be familiar with the importance of data normalization. However, different tasks require different normalization methods. If you press the wrong type, you will lose more than you gain!

Tree-based models do not require data normalization because the feature raw values are not used as multipliers, and Outliers don't affect them either.

Neural networks may not require normalization either - for example, if the network already contains layers that handle normalization internally (e.g. the Keras library's BatchNormalization).

In some cases, linear regression may not require data normalization either. This means that all features are within a similar range of values and have the same meaning. For example, if the model is applied to time series data and all features are historical values of the same parameter.

In practice, applying unnecessary data normalization does not necessarily harm the model. Most of the time, the results in these cases will be very similar to skipped normalization. However, doing additional unnecessary data transformations complicates the solution and increases the risk of introducing some errors.

So, whether to use it or not, practice will bring out the true knowledge!

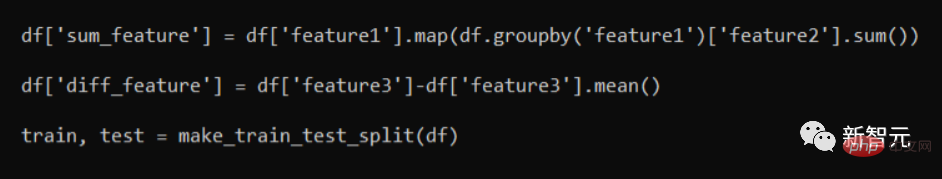

Data leakage

Data leakage is easier than we think.

Please look at the following code snippet:

They are "leaking" information because after splitting into train/test sets, the part with the training data will contain some of the information from the test rows. Although this will result in better validation results, when applied to real data models, performance will plummet.

The correct approach is to do a train/test split first. Only then the feature generation function is applied. Generally speaking, it is a good feature engineering pattern to process the training set and test set separately.

In some cases, some information must be passed between the two - for example, we may want the test set to use the same StandardScaler that was used for the training set and the Training was conducted on. But this is just an individual case, so we still need to analyze specific issues in detail!

It’s good to learn from your mistakes. But it’s best to learn from other people’s mistakes – I hope the examples of mistakes provided in this article will help you.

The above is the detailed content of Machine learning is a blessing from heaven! Data scientist and Kaggle master releases 'ML pitfall avoidance guide'. For more information, please follow other related articles on the PHP Chinese website!

Related articles

See more- Technology trends to watch in 2023

- How Artificial Intelligence is Bringing New Everyday Work to Data Center Teams

- Can artificial intelligence or automation solve the problem of low energy efficiency in buildings?

- OpenAI co-founder interviewed by Huang Renxun: GPT-4's reasoning capabilities have not yet reached expectations

- Microsoft's Bing surpasses Google in search traffic thanks to OpenAI technology