OPT-IML, the 'upgraded version' of Meta's OPT large model with hundreds of billions of parameters, is here, and the complete model and code are released!

In May of this year, Meta AI officially announced the release of OPT-175B, an ultra-large model with 175 billion parameters, which is freely available to the whole community.

On December 22, an updated version of the model, OPT-IML (Open Pre-trained Transformer with Instruction Meta-Learning), was officially launched. Meta said the model, which has 175 billion parameters and was fine-tuned on 2,000 language tasks, will also be freely available for non-commercial research purposes.

How does this updated OPT-IML perform? Let's take a look at the two figures.

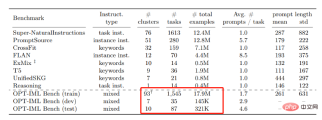

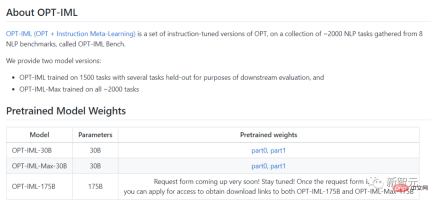

This time, OPT-IML comes in two model sizes, 30B and 175B.

Compared with the old OPT model, OPT-IML outperformed OPT on average in 14 standard NLP evaluation tasks.

Both model sizes are about 7% better in the zero-shot setting. In the 32-shot setting, the 30B and 175B models are about 4% and 0.4% better, respectively.

In this study, researchers describe how increasing model and benchmark size affects the impact of instruction tuning decisions on downstream task performance.

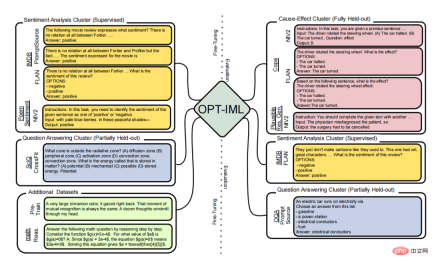

To this end, they developed OPT-IML Bench, a large instruction meta-learning (IML) benchmark containing 2,000 NLP tasks, drawn from eight existing benchmarks and grouped into task categories.

Through the lens of this framework, the researchers first present insights about instruction-tuning decisions as applied to OPT-30B, and then use these insights to train OPT-IML 30B and 175B.

On four evaluation benchmarks (PromptSource, FLAN, Super-NaturalInstructions and UnifiedSKG) with different tasks and input formats, OPT-IML demonstrates all three generalization abilities.

Not only does it significantly outperform OPT across all benchmarks, it is also highly competitive with existing models fine-tuned on each specific benchmark.

In addition, OPT-IML has been open-sourced, and the GitHub link is given below.

GitHub link: https://github.com/facebookresearch/metaseq/tree/main/projects/OPT-IML

Let's take a closer look through the OPT-IML paper.

Paper link: https://github.com/facebookresearch/metaseq/blob/main/projects/OPT-IML/optimal_paper_v1.pdf

Research Methods

Instruction fine-tuning of large language models has become an effective way to improve their zero-shot and few-shot generalization. In this study, Meta researchers made three important contributions to instruction fine-tuning.

First, they compiled a large-scale instruction fine-tuning benchmark containing 2,000 NLP tasks from eight dataset collections, categorized by task type.

Researchers selectively constructed evaluation splits on this benchmark to test three different types of model generalization capabilities:

These are tasks from fully held-out categories, held-out tasks from seen categories, and held-out instances from seen tasks.
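The three evaluation splits can be illustrated with a minimal sketch. The function name, the dictionary layout, and the `eval_fraction` parameter are all assumptions made for illustration; this is not the code used to build OPT-IML Bench.

```python
def build_generalization_splits(tasks, held_out_categories, held_out_tasks,
                                eval_fraction=0.2):
    """Partition task instances into a training set and three evaluation sets.

    tasks: dict mapping task name -> {"category": str, "instances": list}
    (hypothetical layout, chosen only for this sketch).
    """
    splits = {
        "train": [],
        "held_out_category": [],   # whole category never seen in training
        "held_out_task": [],       # category seen, this task never seen
        "held_out_instance": [],   # task seen, these instances never seen
    }
    for name in sorted(tasks):
        category = tasks[name]["category"]
        instances = tasks[name]["instances"]
        if category in held_out_categories:
            splits["held_out_category"] += [(name, x) for x in instances]
        elif name in held_out_tasks:
            splits["held_out_task"] += [(name, x) for x in instances]
        else:
            # Reserve the last few instances of a seen task for evaluation.
            n_eval = max(1, int(len(instances) * eval_fraction))
            splits["train"] += [(name, x) for x in instances[:-n_eval]]
            splits["held_out_instance"] += [(name, x) for x in instances[-n_eval:]]
    return splits


# Toy data, invented for the example.
toy_tasks = {
    "sentiment_a": {"category": "sentiment", "instances": ["s1", "s2", "s3", "s4", "s5"]},
    "sentiment_b": {"category": "sentiment", "instances": ["t1", "t2"]},
    "qa_a": {"category": "qa", "instances": ["q1", "q2", "q3", "q4", "q5"]},
    "summ_a": {"category": "summarization", "instances": ["u1", "u2"]},
}
toy_splits = build_generalization_splits(
    toy_tasks, held_out_categories={"summarization"}, held_out_tasks={"sentiment_b"}
)
for split_name, items in toy_splits.items():
    print(split_name, len(items))
```

Keeping the three evaluation sets separate makes it possible to report how well a model generalizes at each level instead of collapsing everything into one score.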

Instruction fine-tuning

Fine-tuning models so that they follow instructions is one of the current research directions in machine learning.

There are two approaches to instruction fine-tuning. One focuses on fine-tuning models on a variety of tasks using human-annotated instructions and feedback; the other focuses on adding instructions, either through annotation or automatically, to publicly accessible benchmarks and datasets.

In this study, Meta AI researchers focused on the second approach and compiled a number of publicly accessible datasets for improving OPT.
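As a rough illustration of the second approach, the sketch below attaches an instruction to an existing dataset example by filling in a template. The template text, the field names, and the `apply_instruction` helper are hypothetical and are not taken from OPT-IML Bench.

```python
def apply_instruction(template, example):
    """Render one raw dataset example into an instruction-style text pair."""
    return {
        "input": template["prompt"].format(**example),
        "target": template["target"].format(**example),
    }


# Hypothetical template for a sentiment classification dataset.
SENTIMENT_TEMPLATE = {
    "prompt": (
        "Instruction: Classify the sentiment of the review as positive or negative.\n"
        "Review: {text}\n"
        "Answer:"
    ),
    "target": "{label}",
}

# Invented example record.
raw_example = {"text": "The battery lasts all day.", "label": "positive"}
rendered = apply_instruction(SENTIMENT_TEMPLATE, raw_example)
print(rendered["input"])
print(rendered["target"])
```

Because the instruction lives in the template rather than in the data, the same dataset can be paired with several differently worded templates.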

Concurrently with this work, Chung et al. (Flan-PaLM) proposed a similar scaling approach using 1,836 tasks from four benchmarks. Whereas that work tunes on the entire benchmarks to push performance on challenging external benchmarks such as MMLU and Big-Bench Hard (BBH), the Meta researchers focus on characterizing the trade-offs of the various instruction-tuning decisions that may affect downstream performance.

Multi-task learning

Instruction-based fine-tuning can be regarded as a form of multi-task learning (MTL).

MTL is a popular paradigm that improves the generalization performance of a task by training it together with related tasks that share common parameters or representations.

In recent years, MTL has been applied to numerous NLP scenarios, mainly focusing on improving performance on the training tasks or on new domains by leveraging signals from related tasks.

In contrast, instruction-based fine-tuning helps improve generalization to never-before-seen problems. It does this by using instructions to unify all tasks into a common format and training them together, sharing all of the model's weights across all tasks.
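To make the idea of training all tasks together more concrete, here is a minimal sketch of mixing examples from several tasks into one batch for a single shared model. The size-proportional sampling with a cap is one common mixing heuristic, used here as an assumption; the article does not say which mixing scheme OPT-IML uses, and all names and numbers below are invented.

```python
import random


def mixing_weights(task_sizes, cap):
    """Sampling probability per task, proportional to its size capped at `cap`."""
    capped = {name: min(size, cap) for name, size in task_sizes.items()}
    total = sum(capped.values())
    return {name: size / total for name, size in capped.items()}


def sample_mixed_batch(task_examples, weights, batch_size, seed=0):
    """Draw one batch whose examples come from many tasks at once."""
    rng = random.Random(seed)
    names = sorted(weights)
    chosen = rng.choices(names, weights=[weights[n] for n in names], k=batch_size)
    # Every example is already plain text, so one model can consume them all.
    return [(name, rng.choice(task_examples[name])) for name in chosen]


# Invented task sizes and already-templated examples.
toy_sizes = {"sentiment": 100, "qa": 900, "summarization": 5000}
toy_examples = {
    "sentiment": ["Instruction: classify ... Answer:"],
    "qa": ["Instruction: answer the question ... Answer:"],
    "summarization": ["Instruction: summarize ... Summary:"],
}
toy_weights = mixing_weights(toy_sizes, cap=1000)
toy_batch = sample_mixed_batch(toy_examples, toy_weights, batch_size=8)
print(toy_weights)
print([name for name, _ in toy_batch])
```

The cap keeps very large datasets from dominating the mixture, so smaller tasks still contribute to the shared weights.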

What is OPT?

Large-scale language models, natural language processing systems with over 100 billion parameters, have transformed NLP and AI research over the past few years.

These models are trained on a vast array of diverse texts, demonstrating surprising new abilities to generate creative text, solve basic math problems, answer reading comprehension questions, and more.

While in some cases the public can interact with these models via paid APIs, full research access is still limited to a handful of well-resourced labs.

This restricted access limits researchers' ability to understand how and why these large language models work, hindering progress in improving their robustness and mitigating known issues such as bias.

Out of its commitment to open science, Meta AI released Open Pretrained Transformer (OPT-175B) in May this year, a model with 175 billion parameters trained on publicly available datasets. Meta AI shared the model in the hope that more of the community will take part in understanding the fundamental technology behind large models.

Simply put, Meta has opened access to the large language models used in artificial intelligence research to the public, thereby democratizing large-model research.

Comparison with the old version

The IML version currently released by Meta has been fine-tuned and performs better on natural language tasks than the old version of OPT.

Typical language tasks include answering questions, summarizing text, and translating.

To fine-tune the model, the researchers used approximately 2,000 natural language tasks. The tasks are drawn from eight NLP benchmarks and consolidated into OPT-IML Bench, which the researchers also provide.

On average, for both the 30B and 175B models, OPT-IML improves zero-shot accuracy by about 6-7% compared to OPT. In the 32-shot setting, the model with 30 billion parameters showed a significant improvement in accuracy, and the model with 175 billion parameters showed a slight improvement.

After comparison, the Meta team found that OPT-IML performed better than OPT on all benchmarks, and that its zero-shot and few-shot accuracy was competitive with other instruction-fine-tuned models.
