
Can't stop it! Diffusion model can be used to photoshop photos using only text

Clients and designers alike wish a picture could be improved with words alone, but usually only the designer knows the pain involved. Now AI has taken on this difficult problem.

In a paper uploaded to arXiv on October 17, researchers from Google Research, the Technion (Israel Institute of Technology), and the Weizmann Institute of Science introduced Imagic, a diffusion-model-based method for editing real images. It can retouch real photos using only text, such as making a person give a thumbs up or making two parrots kiss:

"Please make me give a thumbs up." Diffusion model: "No problem, leave it to me."

As the images in the paper show, the edited images still look natural, and content outside the requested change is not visibly damaged. Similar research includes Prompt-to-Prompt, completed earlier by Google Research and Tel Aviv University (reference [16] in the Imagic paper):

Project link (including paper and code): https://prompt-to-prompt.github.io/

No wonder one commenter remarked, "This field is changing so fast that it's almost absurd." From now on, clients really can request any change they want with words alone.

Imagic Paper Overview

Paper link: https://arxiv.org/pdf/2210.09276.pdf

Applying substantial semantic editing to real photos has always been an interesting task in image processing. This task has attracted considerable interest from the research community as deep learning-based systems have made considerable progress in recent years.

Describing the edit we want with a simple natural language text prompt (such as asking a dog to sit) closely matches the way humans communicate. Researchers have therefore developed many text-based image editing methods, and these methods work well.

However, the current mainstream methods all suffer from limitations to some degree, for example:

1. They are limited to a specific set of edits, such as painting over the image, adding objects, or transferring style [6, 28];

2. They can operate only on images from a specific domain or on synthetically generated images [16, 36];

3. They require auxiliary inputs in addition to the input image, such as an image mask indicating the desired edit location, multiple images of the same subject, or text describing the original image [6, 13, 40, 44].

This article proposes a semantic image editing method "Imagic" to alleviate the above problems. Given an input image to be edited and a single text prompt describing the target edit, this method enables complex non-rigid editing of real high-resolution images. The resulting image output aligns well with the target text, while preserving the overall context, structure, and composition of the original image.

As shown in Figure 1, Imagic can make two parrots kiss or make a person give a thumbs up. According to the paper, this is the first time text-based semantic editing has applied such complex manipulations, including edits to multiple objects, to a single real high-resolution image. In addition to these complex changes, Imagic allows for a wide variety of edits, including style changes, color changes, and object additions.

To achieve this feat, the researchers leveraged the recent success of text-to-image diffusion models. Diffusion models are powerful generative models capable of high-quality image synthesis. When conditioned on a natural language text prompt, they can generate images consistent with the requested text. In this work, the researchers used them to edit real images rather than synthesize new ones.

As shown in Figure 3, Imagic needs only three steps to complete this task. First, a text embedding is optimized so that it produces an image similar to the input image. Next, the pre-trained generative diffusion model is fine-tuned, conditioned on the optimized embedding, to better reconstruct the input image. Finally, linear interpolation is performed between the target text embedding and the optimized embedding, yielding a representation that combines the input image and the target text. This representation is then passed to the generative diffusion process with the fine-tuned model, which outputs the final edited image.

To demonstrate the power of Imagic, the researchers conducted several experiments, applying the method to numerous images from different domains, and report impressive results across all of them. The high-quality images output by Imagic closely resemble the input images and are consistent with the requested target text. These results demonstrate the generality, versatility, and quality of Imagic. The researchers also conducted an ablation study that highlights the effect of each component of the proposed method. Compared to a range of recent methods, Imagic exhibits significantly better editing quality and fidelity to the original image, especially on highly complex non-rigid editing tasks.

Method details

Given an input image x and a target text, the paper aims to edit the image so that it satisfies the given text while retaining as much detail of the image x as possible. To achieve this goal, the paper uses the text embedding layer of the diffusion model to perform semantic manipulations, in a manner somewhat similar to GAN-based methods. The researchers start by finding a meaningful representation that, when fed through the generative process, produces images similar to the input image. The generative model is then optimized to better reconstruct the input image, and the final step manipulates the latent representation to obtain the edit result.

As shown in Figure 3 above, the method consists of three stages: (1) optimize the text embedding to find the one that best matches the given image in the vicinity of the target text embedding; (2) fine-tune the diffusion model to better match the given image; (3) linearly interpolate between the optimized embedding and the target text embedding to find a point that achieves both image fidelity and target text alignment.

More specific details are as follows:

Text embedding optimization

First, the target text is passed through the text encoder, which outputs the corresponding text embedding $e_{tgt} \in \mathbb{R}^{T \times d}$, where T is the number of tokens in the given target text and d is the token embedding dimension. Then, the researchers freeze the parameters of the generative diffusion model f_θ and optimize the target text embedding e_tgt using the denoising diffusion objective:

$$\mathcal{L}(x, e, \theta) = \mathbb{E}_{t,\epsilon}\left[\left\lVert \epsilon - f_\theta(x_t, t, e) \right\rVert_2^2\right] \qquad (2)$$

where x is the input image, x_t is a noisy version of x obtained by adding Gaussian noise ε at diffusion timestep t, and θ denotes the pre-trained diffusion model weights. This makes the text embedding match the input image as closely as possible. The optimization runs for relatively few steps so that the result stays close to the original target text embedding, yielding the optimized embedding e_opt.
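The embedding-optimization stage can be illustrated with a deliberately tiny toy, sketched below under strong assumptions. The "image" and the embedding are short lists of floats, the noise schedule `alpha_bar` is invented, and `predict_noise` is a hypothetical stand-in for f_θ that simply treats `e + theta` as its guess of the clean image. None of this is the paper's architecture; the sketch only shows the mechanics of minimizing a squared noise-prediction error with respect to the embedding while the weights stay frozen.

```python
import math
import random

def alpha_bar(t):
    """Toy noise schedule (assumed): share of signal variance kept at step t in 1..10."""
    return 1.0 - 0.05 * t

def add_noise(x, t, eps):
    """Forward diffusion: x_t = sqrt(alpha_bar) * x + sqrt(1 - alpha_bar) * eps."""
    a, s = math.sqrt(alpha_bar(t)), math.sqrt(1.0 - alpha_bar(t))
    return [a * xi + s * ni for xi, ni in zip(x, eps)]

def predict_noise(theta, x_t, t, e):
    """Hypothetical stand-in for f_theta: it assumes the clean image is e + theta."""
    a, s = math.sqrt(alpha_bar(t)), math.sqrt(1.0 - alpha_bar(t))
    return [(xt - a * (ei + th)) / s for xt, ei, th in zip(x_t, e, theta)]

def optimize_embedding(x, e_tgt, theta, steps=20, lr=0.01, seed=0):
    """Stage 1: gradient descent on the embedding only; theta is never updated."""
    rng = random.Random(seed)
    e = list(e_tgt)  # start from the target text embedding
    for _ in range(steps):
        t = rng.randint(1, 10)                  # random diffusion timestep
        eps = [rng.gauss(0.0, 1.0) for _ in x]  # random Gaussian noise
        pred = predict_noise(theta, add_noise(x, t, eps), t, e)
        scale = math.sqrt(alpha_bar(t) / (1.0 - alpha_bar(t)))
        # Loss is sum_i (eps_i - pred_i)^2 and d pred_i / d e_i = -scale,
        # so d loss / d e_i = 2 * (eps_i - pred_i) * scale.
        grad = [2.0 * (ni - p) * scale for ni, p in zip(eps, pred)]
        e = [ei - lr * gi for ei, gi in zip(e, grad)]
    return e

def sq_dist(u, v):
    """Squared Euclidean distance between two equally long lists."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

x = [0.2, 0.8, 0.5]      # toy "image"
e_tgt = [1.0, 0.0, 0.5]  # toy target text embedding
theta = [0.0, 0.0, 0.0]  # frozen toy weights
e_opt = optimize_embedding(x, e_tgt, theta)
print(sq_dist(e_tgt, x), sq_dist(e_opt, x))
```

In this toy, the second printed distance is smaller than the first: a few gradient steps pull the embedding toward something that explains the image, while starting from e_tgt keeps it in that neighborhood.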

Model fine-tuning

Note that because the embedding optimization runs for only a small number of steps, passing the optimized embedding e_opt through the generative diffusion process does not necessarily produce an image exactly like the input image x (see the upper left image in Figure 5). Therefore, in the second stage, the authors close this gap by optimizing the model parameters θ using the same loss function given in Equation (2), while freezing the optimized embedding.
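The role swap in the second stage (weights trainable, embedding frozen) can be sketched with the same kind of toy. Everything here is an assumption made for illustration: the schedule `alpha_bar`, the stand-in noise predictor inside `noise_residual` (which treats `e + theta` as the clean image), and the example values. It is not the paper's fine-tuning code, only the same squared noise-prediction loss minimized over θ instead of over the embedding.

```python
import math
import random

def alpha_bar(t):
    """Toy noise schedule (assumed): share of signal variance kept at step t in 1..10."""
    return 1.0 - 0.05 * t

def noise_residual(theta, x, e, t, eps):
    """eps - f_theta(x_t, t, e) for a hypothetical f_theta that assumes the clean image is e + theta."""
    a, s = math.sqrt(alpha_bar(t)), math.sqrt(1.0 - alpha_bar(t))
    x_t = [a * xi + s * ni for xi, ni in zip(x, eps)]
    pred = [(xt - a * (ei + th)) / s for xt, ei, th in zip(x_t, e, theta)]
    return [ni - p for ni, p in zip(eps, pred)]

def fine_tune(x, e_opt, theta, steps=300, lr=0.01, seed=0):
    """Stage 2: gradient descent on theta only; e_opt is never updated."""
    rng = random.Random(seed)
    theta = list(theta)
    for _ in range(steps):
        t = rng.randint(1, 10)
        eps = [rng.gauss(0.0, 1.0) for _ in x]
        residual = noise_residual(theta, x, e_opt, t, eps)
        scale = math.sqrt(alpha_bar(t) / (1.0 - alpha_bar(t)))
        # d pred_i / d theta_i = -scale, so d loss / d theta_i = 2 * residual_i * scale.
        grad = [2.0 * r * scale for r in residual]
        theta = [th - lr * g for th, g in zip(theta, grad)]
    return theta

def reconstruction_error(x, e, theta):
    """Largest per-element gap between the image and the toy model's guess e + theta."""
    return max(abs(xi - (ei + th)) for xi, ei, th in zip(x, e, theta))

x = [0.2, 0.8, 0.5]       # toy "image"
e_opt = [0.5, 0.4, 0.5]   # pretend stage-1 output, still off from x
theta0 = [0.0, 0.0, 0.0]  # toy pre-trained weights
theta_ft = fine_tune(x, e_opt, theta0)
print(reconstruction_error(x, e_opt, theta0), reconstruction_error(x, e_opt, theta_ft))
```

In the toy, the weights absorb whatever mismatch the short embedding optimization left behind, so the frozen e_opt now reconstructs the input closely.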

Text embedding interpolation

The third stage of Imagic performs simple linear interpolation between e_tgt and e_opt. For a given hyperparameter $\eta \in [0, 1]$, we obtain

$$\bar{e} = \eta \cdot e_{tgt} + (1 - \eta) \cdot e_{opt}$$

Then, the authors use the fine-tuned model, conditioned on $\bar{e}$, to apply the base generative diffusion process. This produces a low-resolution edited image, which is then super-resolved using fine-tuned auxiliary models conditioned on the target text. This generative process outputs the final high-resolution edited image $\bar{x}$.
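The interpolation step itself is ordinary element-wise blending, which a few lines of plain Python can illustrate. The function name `interpolate_embeddings` and the tiny 2 x 2 embeddings are made up for the example; real embeddings would be T x d tensors produced by the text encoder.

```python
def interpolate_embeddings(e_tgt, e_opt, eta):
    """Return eta * e_tgt + (1 - eta) * e_opt for two T x d nested lists."""
    if len(e_tgt) != len(e_opt) or any(len(a) != len(b) for a, b in zip(e_tgt, e_opt)):
        raise ValueError("embeddings must have the same T x d shape")
    return [
        [eta * t + (1.0 - eta) * o for t, o in zip(row_t, row_o)]
        for row_t, row_o in zip(e_tgt, e_opt)
    ]

# Hypothetical toy embeddings with T = 2 tokens and d = 2 dimensions.
e_tgt = [[1.0, 0.0], [2.0, 4.0]]
e_opt = [[3.0, 2.0], [0.0, 0.0]]

for eta in (0.0, 0.5, 1.0):
    print(eta, interpolate_embeddings(e_tgt, e_opt, eta))
```

With eta = 0 the result equals e_opt (pure image fidelity), with eta = 1 it equals e_tgt (pure text alignment), and values in between trade one off against the other.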

Experimental results

To test the method, the researchers applied it to a large number of real images from different domains, using simple text prompts to describe different editing categories such as style, appearance, color, pose, and composition. They collected high-resolution, free-to-use images from Unsplash and Pixabay. After optimization, they generated each edit with 5 random seeds and selected the best result. Imagic shows impressive results, applying a range of editing categories to general input images and texts, as shown in Figures 1 and 7.

Figure 2 is an experiment with different text prompts on the same image, showing the versatility of Imagic.

Since the underlying generative diffusion model is probabilistic, the method can produce different results for a single image-text pair. Figure 4 shows several edit options obtained with different random seeds (with η slightly adjusted for each seed). This randomness lets users choose among the options, which is useful because natural language text prompts are generally ambiguous and imprecise.
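The seed-driven variation can be sketched as follows. This is only an illustration: `sample_edit` is a hypothetical stand-in for a stochastic diffusion sampler (it just adds seeded Gaussian noise to the conditioning vector), and the per-seed η values are invented. The point is that one image-text pair yields several reproducible candidates to pick from.

```python
import random

def sample_edit(e_bar, seed, noise_scale=0.1):
    """Hypothetical stochastic sampler: the output depends on the random seed."""
    rng = random.Random(seed)
    return [v + noise_scale * rng.gauss(0.0, 1.0) for v in e_bar]

def generate_candidates(e_tgt, e_opt, eta_by_seed):
    """Produce one candidate per seed, each with its own (slightly adjusted) eta."""
    results = {}
    for seed, eta in eta_by_seed.items():
        e_bar = [eta * t + (1.0 - eta) * o for t, o in zip(e_tgt, e_opt)]
        results[seed] = sample_edit(e_bar, seed)
    return results

e_tgt = [1.0, 0.0, 0.5]  # toy target text embedding
e_opt = [0.2, 0.8, 0.5]  # toy optimized embedding
# Five seeds with made-up, slightly different eta values.
eta_by_seed = {0: 0.70, 1: 0.72, 2: 0.68, 3: 0.75, 4: 0.70}

candidates = generate_candidates(e_tgt, e_opt, eta_by_seed)
for seed, output in candidates.items():
    print(seed, [round(v, 3) for v in output])
```

Re-running with the same seed and η reproduces the same candidate, so a user who likes one option can regenerate it exactly.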

The study compared Imagic with leading general-purpose methods that operate on a single real-world input image and edit it based on a text prompt. Figure 6 shows the editing results of different methods such as Text2LIVE [7] and SDEdit [32].

The comparison shows that Imagic maintains high fidelity to the input image while performing the requested edits appropriately. On complex non-rigid editing tasks, such as "making the dog sit", it significantly outperforms previous techniques. According to the authors, Imagic is the first demonstration of such sophisticated text-based editing on a single real-world image.

The above is the detailed content of Can't stop it! Diffusion model can be used to photoshop photos using only text. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

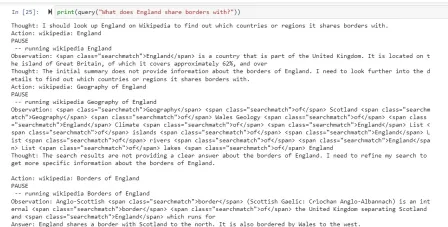

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

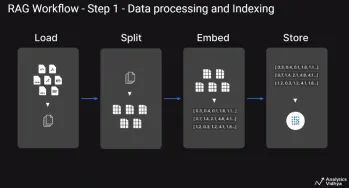

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Dreamweaver Mac version

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

WebStorm Mac version

Useful JavaScript development tools