Machine Learning Decision Tree Practical Exercise

Translator | Zhu Xianzhong

Reviewer | Sun Shujuan

Decision trees in machine learning

Modern machine learning algorithms are changing our daily lives. For example, large language models like BERT power Google search, and GPT-3 powers many advanced language applications.

At the same time, building complex machine learning algorithms is easier today than ever before. However complex a machine learning algorithm may be, it falls into one of the following learning categories:

- Supervised learning

- Unsupervised learning

- Semi-supervised learning

- Reinforcement learning

Decision trees are one of the oldest supervised machine learning algorithms and can solve a wide range of real-world problems. Research traces the earliest decision tree algorithm back to 1963.

Next, let’s delve into the details of this algorithm and see why this type of algorithm is still so popular today.

What is a decision tree?

The decision tree algorithm is a popular supervised machine learning algorithm because it offers a relatively simple way of processing complex data sets. Decision trees get their name from their resemblance to a tree: the structure consists of roots, branches, and leaves, represented as nodes and edges. They are used for decision analysis, much like an if-else based decision flow chart in which a sequence of decisions produces the desired prediction. A decision tree learns these if-else decision rules to split the data set and finally produces a tree-shaped model.
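To make the "learned if-else rules" idea concrete, here is a minimal sketch that fits a deliberately shallow tree on the iris data set (the same data this article works with later) and prints its rules with scikit-learn's `export_text`. The `max_depth=2` and `random_state=0` settings are illustrative assumptions, chosen only to keep the printed tree short and repeatable.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier, export_text

iris = load_iris()

# max_depth=2 is an illustrative choice that keeps the printed tree small.
shallow_tree = DecisionTreeClassifier(max_depth=2, random_state=0)
shallow_tree.fit(iris.data, iris.target)

# export_text renders the fitted tree as nested threshold rules,
# one line per decision node and one "class:" line per leaf.
rules = export_text(shallow_tree, feature_names=list(iris.feature_names))
print(rules)
```

Each indented line in the output is one question of the form "feature <= threshold", and each `class:` line is a leaf holding the prediction.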

Decision trees are used to predict discrete outcomes in classification problems and continuous numerical outcomes in regression problems. Over the years, researchers have developed many variants, such as CART and C4.5, as well as ensemble algorithms such as random forests and gradient boosted trees.

The goal of the decision tree algorithm is to predict the outcome for the input data. The data set for a tree has three parts: attributes, attribute values, and the class to be predicted. As with any supervised learning algorithm, the data set is split into a training set and a test set. The algorithm learns its decision rules from the training set and then applies them to the test set.

Before walking through the steps of the decision tree algorithm, let us first look at the components of a decision tree:

- Root Node: It is the starting node at the top of the decision tree and contains all attribute values. The root node is divided into decision nodes based on the decision rules learned by the algorithm.

- Branches: Branches are connectors between nodes that correspond to attribute values. In binary splitting, the branches represent true and false paths.

- Decision Node/Internal Node: An internal node is a decision node between the root node and a leaf node. It corresponds to a decision rule and its answer paths: the node represents a question, and its branches show the paths to the possible answers.

- Leaf nodes: Leaf nodes are terminal nodes that represent target predictions. These nodes will not be split further.

The following is a visual representation of a decision tree and the components described above. The decision tree algorithm goes through the following steps to arrive at the desired prediction:

- The algorithm starts from the root node with all attribute values.

- The root node is divided into decision nodes based on the decision rules learned by the algorithm from the training set.

- Move through the internal decision nodes along the branches/edges, following the answer to each node's question.

- Continue the previous steps until you reach a leaf node or all attributes are used.
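The traversal described in these steps can be sketched in plain Python. The `Leaf` and `DecisionNode` classes, the `predict` helper, and the thresholds in `toy_tree` are all hypothetical names and values made up for illustration; a real tree would learn its rules from the training set.

```python
from dataclasses import dataclass
from typing import Dict, Union


@dataclass
class Leaf:
    prediction: str  # terminal node: holds the predicted class


@dataclass
class DecisionNode:
    feature: str      # attribute the question is asked about
    threshold: float  # question: is sample[feature] <= threshold?
    if_true: Union["DecisionNode", Leaf]   # branch taken when the answer is true
    if_false: Union["DecisionNode", Leaf]  # branch taken when the answer is false


def predict(node: Union[DecisionNode, Leaf], sample: Dict[str, float]) -> str:
    # Start at the root and follow one branch per question until a leaf is reached.
    while isinstance(node, DecisionNode):
        answer_is_true = sample[node.feature] <= node.threshold
        node = node.if_true if answer_is_true else node.if_false
    return node.prediction


# Hand-written toy tree; the thresholds are invented, not learned from data.
toy_tree = DecisionNode(
    "petal length", 2.5,
    Leaf("setosa"),
    DecisionNode("petal width", 1.7, Leaf("versicolor"), Leaf("virginica")),
)

print(predict(toy_tree, {"petal length": 1.4, "petal width": 0.2}))  # setosa
print(predict(toy_tree, {"petal length": 5.0, "petal width": 1.5}))  # versicolor
```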

In order to select the best attribute on each node, one of the following two attribute selection metrics will be used for splitting:

- Gini coefficient (Gini index): Measures Gini impurity, which indicates the likelihood that a randomly chosen sample would be misclassified if it were labeled at random according to the class distribution at the node.

- Information gain: Measures the reduction in entropy after a split, which steers the tree away from partitions whose classes are mixed close to 50/50. Entropy is a mathematical measure of the impurity in a given data sample. In a decision tree, the most disordered state is a partition close to 50/50.

Flower classification case using decision tree algorithm
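Before moving on to the data, the two splitting metrics described above can be made concrete with a few lines of plain Python. This sketch uses the standard definitions: Gini impurity is 1 minus the sum of squared class proportions, entropy is the negative sum of p * log2(p) over the class proportions, and information gain is the parent's entropy minus the size-weighted entropy of the child partitions. The function names and the toy label lists are assumptions made for illustration.

```python
from collections import Counter
from math import log2


def gini_impurity(labels):
    # 1 - sum of squared class proportions; 0 means the node is pure.
    n = len(labels)
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def entropy(labels):
    # -sum(p * log2(p)); peaks at 1 bit for a 50/50 two-class mix.
    n = len(labels)
    return -sum((count / n) * log2(count / n) for count in Counter(labels).values())


def information_gain(parent, left, right):
    # Entropy of the parent minus the size-weighted entropy of the two children.
    n = len(parent)
    weighted_children = (len(left) / n) * entropy(left) + (len(right) / n) * entropy(right)
    return entropy(parent) - weighted_children


mixed = ["a", "a", "b", "b"]  # a 50/50 partition, the most disordered case

print(gini_impurity(mixed))                               # 0.5
print(entropy(mixed))                                     # 1.0
print(information_gain(mixed, ["a", "a"], ["b", "b"]))    # 1.0
```

A split that separates the two classes perfectly recovers the full 1 bit of entropy, which is why it would be preferred over any split that leaves the children mixed.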

A brief explanation about the data set

The data set for this tutorial is the iris data set. It is built into the scikit-learn open source library, so developers do not need to load it from an external source. The data set includes four iris attributes and their values, which are fed to the model to predict one of three types of iris flower.

- Attributes/Features in the dataset: sepal length, sepal width, petal length, petal width.

- Predicted labels/flower types in the dataset: Setosa, Versicolor, Virginica.

Import libraries

First, import the libraries required to implement the decision tree with the following code:

import pandas as pd

import numpy as np

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier

from sklearn.metrics import accuracy_score

Loading the iris data set

data_set = load_iris()

print('Iris plant classes to predict: ', data_set.target_names)

print('Four features of iris plant: ', data_set.feature_names)

Separating attributes and labels

# Extract the flower features and the species labels

X_att = data_set.data

y_label = data_set.target

print('Total number of samples in the data set:', X_att.shape[0])

data_view=pd.DataFrame({

'sepal length':X_att[:,0],

'sepal width':X_att[:,1],

'petal length':X_att[:,2],

'petal width':X_att[:,3],

'species':y_label

})

data_view.head()

Split the data set

# Split the data set into a training set and a test set

X_att_train, X_att_test, y_label_train, y_label_test = train_test_split(X_att, y_label, random_state = 42, test_size = 0.25)
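As a quick sanity check on the split, the sketch below repeats the same `train_test_split` call and prints the resulting shapes. With 150 samples and `test_size = 0.25`, scikit-learn rounds the test set up to 38 samples and leaves 112 for training. The per-class count is printed without an expected value because it depends on the shuffle.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

data_set = load_iris()

# Same arguments as the split above: 25% held out, fixed seed for repeatability.
X_att_train, X_att_test, y_label_train, y_label_test = train_test_split(
    data_set.data, data_set.target, random_state=42, test_size=0.25
)

print('Training set shape:', X_att_train.shape)  # (112, 4)
print('Test set shape:', X_att_test.shape)       # (38, 4)

# How many test samples fall into each of the three classes.
print('Test samples per class:', np.bincount(y_label_test))
```

If equal class proportions in both sets matter, `train_test_split` also accepts a `stratify` argument; the article's split does not use it.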

Applying the decision tree classification function

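The DecisionTreeClassifier class is used to implement the decision tree, with the splitting criterion set to "entropy". This criterion sets the attribute selection metric to information gain. The code then fits the model to our training set of attributes and labels.

# Apply the decision tree classifier

clf_dt = DecisionTreeClassifier(criterion = 'entropy')

clf_dt.fit(X_att_train, y_label_train)

Calculating model accuracy

The code below computes and prints the accuracy of the decision tree classification model on the training set and the test set. To compute the accuracy scores, we use the predict function. The result: the accuracy on the training set and the test set is 100% and 94.7%, respectively.

print('Training data accuracy: ', accuracy_score(y_true=y_label_train, y_pred=clf_dt.predict(X_att_train)))

print('Test data accuracy: ', accuracy_score(y_true=y_label_test, y_pred=clf_dt.predict(X_att_test)))

Real-world decision tree applications

Today, machine learning decision trees are widely used in decision-making processes across many industries. The most common applications are in the finance and marketing sectors, where decision trees are used across a number of subareas.

How to improve decision trees?

To sum up this discussion of decision trees, it is safe to assume that they remain popular for their interpretability. Decision trees are easy to understand because people can visualize and explain them. They are therefore an intuitive approach to machine learning problems that also keeps the results interpretable. Interpretability in machine learning is a topic we have touched on in the past, and it is closely connected to the emerging topic of AI ethics.

Like any other machine learning algorithm, decision trees can be improved, in particular to avoid overfitting and to avoid being biased toward the dominant predicted class. Pruning and ensembling are the techniques most commonly used to overcome these shortcomings. Despite these shortcomings, decision trees remain the foundation of decision analysis algorithms and will keep an important place in machine learning.

About the translator

Zhu Xianzhong is a 51CTO community editor, 51CTO expert blogger and lecturer, a computer science teacher at a university in Weifang, and a veteran of freelance programming.

Original title: An Introduction to Decision Trees for Machine Learning, by Stylianos Kampakis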
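As a follow-up to the pruning and ensembling techniques mentioned in the improvement discussion, the sketch below compares an unpruned tree with two pruned variants and a random forest on the same iris split. The specific settings (`max_depth=3`, `ccp_alpha=0.02`, `n_estimators=100`, `random_state=0`) are illustrative assumptions rather than tuned or recommended values, and the `models` and `scores` names are made up for this example.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

data_set = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    data_set.data, data_set.target, random_state=42, test_size=0.25
)

models = {
    # Baseline: the tree grows until every leaf is pure.
    'unpruned tree': DecisionTreeClassifier(criterion='entropy', random_state=0),
    # Pre-pruning: stop growing at a fixed depth.
    'depth-limited tree': DecisionTreeClassifier(
        criterion='entropy', max_depth=3, random_state=0),
    # Post-pruning: cost-complexity pruning removes weak subtrees after growth.
    'cost-complexity pruned tree': DecisionTreeClassifier(
        criterion='entropy', ccp_alpha=0.02, random_state=0),
    # Ensembling: average many trees trained on bootstrap samples.
    'random forest': RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    train_acc = accuracy_score(y_train, model.predict(X_train))
    test_acc = accuracy_score(y_test, model.predict(X_test))
    scores[name] = (train_acc, test_acc)
    print(f'{name}: train={train_acc:.3f}, test={test_acc:.3f}')
```

On a data set as small and clean as iris the differences may be slight; the gap between training and test accuracy is the figure to watch, since a large gap suggests overfitting.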

The above is the detailed content of Machine Learning Decision Tree Practical Exercise. For more information, please follow other related articles on the PHP Chinese website!

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

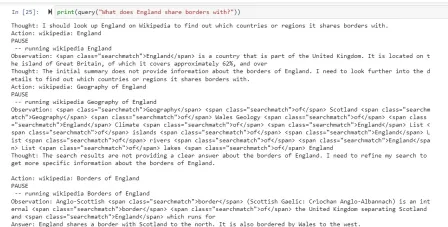

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

Dreamweaver CS6

Visual web development tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.