I recently completed a very satisfying paper. Not only was the whole process enjoyable and memorable, but it also genuinely achieved both "academic impact and industrial output." I believe this paper will change the paradigm of differentially private (DP) deep learning.

This experience was full of "coincidences": the process was full of them, and the conclusion turned out remarkably neat. So I would like to share the complete process with fellow students: observation -> conception -> evidence -> theory -> large-scale experiments. I will try to keep this article lightweight and avoid too many technical details.

Paper address: arxiv.org/abs/2206.07136

A paper does not follow the order in which the work happened. It sometimes places the conclusions at the beginning to attract readers, or presents a simplified theorem first and leaves the complete theorem to the appendix. In this article, I instead record my experience in chronological order, as a running account, including the detours I took and the sudden turns in my research. I write down how the work developed for the reference of students who have just embarked on the path of research.

1. Literature Reading

It all started with a paper from Stanford, which has since been accepted at ICLR:

Paper address: https://arxiv.org/abs/2110.05679

The paper is very well written. In summary, it makes three main contributions:

1. On NLP tasks, DP models achieve very high accuracy, which encourages the use of privacy in language models. (In contrast, DP in CV causes a very large drop in accuracy. For example, on CIFAR10 the best DP accuracy without pre-training is currently about 80%, while 95% is easily reached without DP. The best DP accuracy on ImageNet at the time was below 50%.)

2. For language models, the larger the model, the better the DP performance. For example, going from a GPT2 with 400 million parameters to one with 800 million parameters gives an obvious improvement and achieves many SOTAs. (But in CV and recommender systems, larger models often perform very poorly under DP, sometimes close to random guessing. For example, the previous best DP accuracy on CIFAR10 was obtained with a four-layer CNN, not a ResNet.)

In NLP tasks, the larger the DP model, the better the performance [Xuechen et al. 2021]

3. The hyperparameters that achieve SOTA are the same across multiple tasks: the clipping threshold must be set small enough, and the learning rate must be larger. (Every previous paper tuned a separate clipping threshold for each task, which was time-consuming and labor-intensive. No earlier work used a single clipping threshold of 0.1 across all tasks and still performed this well.)

I grasped the above summary immediately after reading the paper. The content in brackets does not come from this paper; it is an impression formed by much earlier reading. This relies on long-term reading and a strong ability to generalize, so that one can quickly associate and compare.

In fact, many students find it hard to start writing papers because they see only the content of a single paper. They cannot connect it with other knowledge across the whole field.

On the one hand, students who are just starting out have not yet read enough to master the key ideas. This is especially true for students who have long worked on projects assigned by their advisors rather than proposing their own. On the other hand, some students have read enough but do not summarize regularly. As a result, the information never condenses into knowledge, or the pieces of knowledge never connect.

Here is some background on DP deep learning. I will skip the formal definition of DP for now; this will not affect the reading.

From an algorithmic point of view, so-called DP deep learning amounts to two extra steps: per-sample gradient clipping and Gaussian noise addition. In other words, once the gradient has been processed by these two steps (the result is called the private gradient), you can use any optimizer you like, including SGD and Adam.

How private the final algorithm is belongs to another sub-field, called privacy accounting. That field is relatively mature and requires a strong theoretical foundation. Since this article focuses on optimization, I will not discuss it here.

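The private gradient is $\tilde{g} = \sum_i \mathrm{Clip}(g_i)\, g_i + \sigma R \cdot \mathcal{N}(0, I)$ (1.1). Here g_i is the gradient of a single data point (the per-sample gradient), R is the clipping threshold, and σ is the noise multiplier.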

Clip is called the clipping function, just as in regular gradient clipping. If a gradient is longer than R, it is cut down to length R; if it is shorter than R, it is left unchanged.

For example, every current paper on DP-SGD uses the clipping function from the pioneering work on private deep learning (Abadi, Martin, et al. "Deep learning with differential privacy."). It is known as Abadi's clipping: $\mathrm{Clip}(g_i) = \min\left(1, \frac{R}{\|g_i\|}\right)$.
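Here is a minimal pure-Python sketch of the two extra steps, using Abadi's clipping. It computes a private gradient in the form of (1.1) from a list of per-sample gradients, then hands it to plain SGD. The helper names (`abadi_clip_factor`, `private_gradient`, `sgd_step`) are hypothetical. Dividing by the batch size in the SGD step is my own assumption and is not stated above. A real implementation would use a DP library with vectorized per-sample gradients.

```python
import math
import random

def norm(v):
    """Euclidean norm of a list of floats."""
    return math.sqrt(sum(x * x for x in v))

def abadi_clip_factor(g, R):
    """Abadi's clipping factor min(1, R / ||g||); the clipped vector is factor * g."""
    n = norm(g)
    return 1.0 if n == 0.0 else min(1.0, R / n)

def private_gradient(per_sample_grads, R, sigma, rng):
    """Sum of clipped per-sample gradients plus Gaussian noise with std sigma * R, as in (1.1)."""
    dim = len(per_sample_grads[0])
    total = [0.0] * dim
    for g in per_sample_grads:
        c = abadi_clip_factor(g, R)
        for j in range(dim):
            total[j] += c * g[j]
    return [total[j] + sigma * R * rng.gauss(0.0, 1.0) for j in range(dim)]

def sgd_step(w, priv_grad, lr, batch_size):
    """Plain SGD on the batch-averaged private gradient; any optimizer could be used instead."""
    return [wj - lr * gj / batch_size for wj, gj in zip(w, priv_grad)]

# Two per-sample gradients with norms 5.0 and 0.5; sigma = 0 switches the noise off
grads = [[3.0, 4.0], [0.3, 0.4]]
priv = private_gradient(grads, R=1.0, sigma=0.0, rng=random.Random(0))
w = sgd_step([1.0, 1.0], priv, lr=0.1, batch_size=len(grads))
```

With σ = 0, the first gradient is cut down to [0.6, 0.8] and the second passes through unchanged, so the private gradient is approximately [0.9, 1.2].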

But this choice is entirely unnecessary. Reasoning from first principles, starting from privacy accounting theory, the clipping function only needs to satisfy $\|\mathrm{Clip}(g_i)\, g_i\| \le R$. In other words, Abadi's clipping is just one function satisfying this condition, and by no means the only one.
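The sketch below checks the condition $\|\mathrm{Clip}(g_i)\, g_i\| \le R$ for three clipping factors:

- Abadi's clipping;
- plain normalization $R/\|g_i\|$;
- $R/(\|g_i\| + \gamma)$ with an illustrative γ = 0.01.

"No clipping" is included as a counterexample that breaks the bound. The function names and test gradients are hypothetical. Since each factor is a non-negative scalar, the clipped norm is simply the factor times $\|g_i\|$.

```python
import math

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def abadi(g, R):
    """min(1, R / ||g||): shrink only gradients longer than R."""
    n = norm(g)
    return 1.0 if n == 0.0 else min(1.0, R / n)

def normalize(g, R):
    """R / ||g||: every nonzero gradient ends up with norm exactly R."""
    n = norm(g)
    return 0.0 if n == 0.0 else R / n

def soft_normalize(g, R, gamma=0.01):
    """R / (||g|| + gamma): clipped norm R * ||g|| / (||g|| + gamma) stays below R."""
    return R / (norm(g) + gamma)

def no_clip(g, R):
    """Counterexample: leaves gradients untouched, so long gradients exceed R."""
    return 1.0

def satisfies_bound(clip_fn, grads, R, tol=1e-12):
    """True if ||clip_fn(g, R) * g|| <= R for every gradient in grads."""
    return all(clip_fn(g, R) * norm(g) <= R + tol for g in grads)

grads = [[3.0, 4.0], [0.3, 0.4], [0.0, 0.0], [1e-3, 0.0]]
for fn in (abadi, normalize, soft_normalize, no_clip):
    print(fn.__name__, satisfies_bound(fn, grads, R=1.0))
```

Any factor that passes this check bounds each sample's contribution by R, which is the property the privacy accounting relies on.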

2. Entry point

A paper has many highlights, but not all of them are useful to me. I have to judge, based on my own needs and expertise, which contribution matters most.

The first two contributions of this paper are quite empirical and hard to dig into further. The last contribution is very interesting. I carefully examined the ablation study on the hyperparameters and found something the original authors had not noticed. When the clipping threshold is small enough, it has no effect at all. (In their paper it is called the clipping norm C; it is the same variable as R in the formula above.)

Reading vertically, C = 0.1, 0.4 and 1.6 make no difference for DP-Adam [Xuechen et al. 2021].

This aroused my interest; I felt there had to be some principle behind it. So I wrote out by hand the DP-Adam they used to see why. The reason turns out to be very simple:

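If R is small enough, clipping is effectively equivalent to normalization! When R < min_i ‖g_i‖, Abadi's clipping gives Clip(g_i) = R/‖g_i‖. Substituting this into the private gradient (1.1) lets R be factored out of both the clipping part and the noising part:

$$\tilde{g} = \sum_i \frac{R}{\|g_i\|}\, g_i + \sigma R \cdot \mathcal{N}(0, I) = R\left(\sum_i \frac{g_i}{\|g_i\|} + \sigma \cdot \mathcal{N}(0, I)\right)$$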

In Adam, R then appears both in the gradient (the numerator) and in the adaptive step size (the denominator). Once numerator and denominator cancel, R disappears, and the idea is born!
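Spelled out, the cancellation looks like this. The derivation makes the following assumptions:

- the standard Adam recursions, started from $m_0 = v_0 = 0$;
- squares taken coordinate-wise;
- bias correction omitted, because it does not involve R;
- $\hat g_t$ is the R-free part of the private gradient in the small-R regime.

$\bar m_t$ and $\bar v_t$ denote the moments Adam would compute from $\hat g_t$ alone.

```latex
\begin{aligned}
\tilde g_t &= R\,\hat g_t, \qquad \hat g_t = \sum_i \frac{g_{t,i}}{\|g_{t,i}\|} + \sigma\,\mathcal{N}(0, I),\\
m_t &= \beta_1 m_{t-1} + (1-\beta_1)\,\tilde g_t = R\,\bar m_t,\\
v_t &= \beta_2 v_{t-1} + (1-\beta_2)\,\tilde g_t^{\,2} = R^2\,\bar v_t,\\
\frac{\eta\, m_t}{\sqrt{v_t} + \epsilon} &= \frac{\eta\, R\,\bar m_t}{R\sqrt{\bar v_t} + \epsilon} = \frac{\eta\,\bar m_t}{\sqrt{\bar v_t} + \epsilon / R}.
\end{aligned}
```

The second and third lines follow by induction on t. R survives only through ε/R, which is negligible whenever ε is tiny compared with $R\sqrt{\bar v_t}$.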

Both m and v depend on the gradient; replacing the gradient with the private gradient gives DP-AdamW.

This simple substitution proves my first theorem: in DP-AdamW, all sufficiently small clipping thresholds are equivalent to one another, so R needs no tuning.
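Here is a small numerical check of the theorem under stated assumptions:

- The problem is a hypothetical two-parameter least-squares model.
- Its targets are kept far from any reachable prediction, so every per-sample gradient norm stays well above the thresholds used. This guarantees that Abadi's clipping acts as normalization.
- The noise seed is fixed, so two runs differ only in R.
- The update follows standard Adam with bias correction, a tiny ε and no weight decay.

With these settings, the final parameters for R = 1e-3 and R = 1e-4 should agree to many decimal places. Names such as `make_data` and `dp_adam` are illustrative, not from any library.

```python
import math
import random

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def make_data(n=8, seed=123):
    """Hypothetical toy data: inputs in [0.5, 1]^2, targets in [8, 10], far from
    what the model can reach in a few steps, so per-sample gradients stay large."""
    rng = random.Random(seed)
    return [([rng.uniform(0.5, 1.0), rng.uniform(0.5, 1.0)], rng.uniform(8.0, 10.0))
            for _ in range(n)]

def per_sample_grads(w, data):
    """Gradients of the per-sample squared loss (w . x - y)^2."""
    out = []
    for x, y in data:
        r = sum(wj * xj for wj, xj in zip(w, x)) - y
        out.append([2.0 * r * xj for xj in x])
    return out

def private_gradient(grads, R, sigma, rng):
    """Abadi-clipped per-sample gradients, summed, plus N(0, (sigma * R)^2) noise."""
    total = [0.0] * len(grads[0])
    for g in grads:
        n = norm(g)
        c = 1.0 if n == 0.0 else min(1.0, R / n)
        total = [t + c * gj for t, gj in zip(total, g)]
    return [t + sigma * R * rng.gauss(0.0, 1.0) for t in total]

def dp_adam(R, steps=20, lr=0.05, sigma=1.0, seed=0,
            beta1=0.9, beta2=0.999, eps=1e-12):
    """DP-Adam on the toy problem; the fixed noise seed makes runs differ only in R."""
    data = make_data()
    rng = random.Random(seed)
    w, m, v = [0.0, 0.0], [0.0, 0.0], [0.0, 0.0]
    for t in range(1, steps + 1):
        g = private_gradient(per_sample_grads(w, data), R, sigma, rng)
        for j in range(len(w)):
            m[j] = beta1 * m[j] + (1 - beta1) * g[j]
            v[j] = beta2 * v[j] + (1 - beta2) * g[j] ** 2
            m_hat = m[j] / (1 - beta1 ** t)
            v_hat = v[j] / (1 - beta2 ** t)
            w[j] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

print(dp_adam(R=1e-3))
print(dp_adam(R=1e-4))
```

If you raise R until it exceeds the per-sample gradient norms (say R = 100), clipping stops acting as normalization. The cancellation argument then no longer applies.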

This is undoubtedly a succinct and interesting observation, but on its own it is not significant enough. I needed to work out what practical use it has.

In practice, this means DP training needs far less hyperparameter tuning. Suppose the learning rate and R are each tuned over 5 values (as shown above). Then 25 combinations must be tested to find the best hyperparameters. Now only the 5 learning rates need to be tried, a five-fold improvement in tuning efficiency. This addresses a real pain point of great value to industry.

The motivation was strong enough and the mathematics concise enough; a good idea had begun to take shape.

3. A simple extension

If the result held only for Adam/AdamW, the work would be too limited. So I quickly extended it to other adaptive optimizers, such as AdaGrad. In fact, for every adaptive optimizer one can prove that the clipping threshold cancels out, so it needs no tuning, which greatly broadens the theorem.
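Here is a minimal illustration of why the argument carries over to other adaptive optimizers. AdaGrad and RMSprop (chosen here as examples) divide each coordinate by a root-mean-square of past gradients, so multiplying every gradient by R leaves their updates unchanged up to the ε term. Plain SGD is not invariant in this way.

The sketch feeds each optimizer a fixed, hypothetical gradient sequence. In real DP training the gradients depend on the parameters, but if the updates match at every step the parameters match too, so the same conclusion follows by induction.

```python
import math

def adagrad(grad_seq, lr=0.1, eps=1e-12):
    """AdaGrad: divide each coordinate by the root of its accumulated squared gradients."""
    acc = [0.0] * len(grad_seq[0])
    w = [0.0] * len(grad_seq[0])
    for g in grad_seq:
        for j, gj in enumerate(g):
            acc[j] += gj * gj
            w[j] -= lr * gj / (math.sqrt(acc[j]) + eps)
    return w

def rmsprop(grad_seq, lr=0.1, rho=0.9, eps=1e-12):
    """RMSprop: divide by the root of an exponential moving average of squared gradients."""
    s = [0.0] * len(grad_seq[0])
    w = [0.0] * len(grad_seq[0])
    for g in grad_seq:
        for j, gj in enumerate(g):
            s[j] = rho * s[j] + (1 - rho) * gj * gj
            w[j] -= lr * gj / (math.sqrt(s[j]) + eps)
    return w

def sgd(grad_seq, lr=0.1):
    """Non-adaptive baseline: the displacement is linear in the gradient scale."""
    w = [0.0] * len(grad_seq[0])
    for g in grad_seq:
        w = [wj - lr * gj for wj, gj in zip(w, g)]
    return w

def scaled(grad_seq, R):
    """Multiply every gradient by R, as small-R clipping does to the private gradient."""
    return [[R * x for x in g] for g in grad_seq]

def close(a, b, tol=1e-8):
    return all(abs(x - y) < tol for x, y in zip(a, b))

seq = [[1.0, -2.0], [0.5, 0.3], [-0.5, 1.0]]
print(close(adagrad(scaled(seq, 0.01)), adagrad(scaled(seq, 0.1))))
print(close(sgd(scaled(seq, 0.01)), sgd(scaled(seq, 0.1))))
```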

There is another interesting detail here. As is well known, Adam with weight decay is different from AdamW, which uses decoupled weight decay. There is an ICLR paper on this difference.

There are two ways to add weight decay to Adam.

The same difference exists in DP optimizers. For DP-Adam, if decoupled weight decay is used, scaling R does not change the strength of the weight decay. If ordinary weight decay is used, doubling R is equivalent to halving the weight decay.
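A sketch of both claims, under these assumptions:

- The R-free part of the private gradient is replaced by a hypothetical stand-in: a unit vector toward a fixed minimum plus a pre-drawn Gaussian vector.
- Adam uses a tiny ε, so its scale invariance is essentially exact.

With ordinary (coupled) weight decay, the optimizer sees $R\hat g + \lambda w = 2\left(\tfrac{R}{2}\hat g + \tfrac{\lambda}{2} w\right)$. So (2R, λ) behaves like (R, λ/2). With decoupled decay, the $\eta\lambda w$ term bypasses Adam's normalization and R drops out. The names `direction` and `run` are illustrative only.

```python
import math
import random

def direction(w, target, z):
    """Hypothetical R-free part of a private gradient: unit vector toward `target`
    plus a fixed noise draw z (stands in for sum_i g_i/||g_i|| + sigma * N(0, I))."""
    d = [wj - tj for wj, tj in zip(w, target)]
    n = math.sqrt(sum(x * x for x in d)) or 1.0
    return [x / n + zj for x, zj in zip(d, z)]

def run(R, wd, decoupled, steps=30, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-12):
    """Adam on private gradients R * direction(...), with coupled or decoupled weight decay."""
    rng = random.Random(0)
    noise = [[rng.gauss(0.0, 1.0) for _ in range(2)] for _ in range(steps)]
    target = [1.0, -2.0]
    w, m, v = [0.5, 0.5], [0.0, 0.0], [0.0, 0.0]
    for t in range(1, steps + 1):
        g = [R * d for d in direction(w, target, noise[t - 1])]  # private gradient
        if not decoupled:
            g = [gj + wd * wj for gj, wj in zip(g, w)]  # ordinary (L2) weight decay
        new_w = []
        for j in range(2):
            m[j] = beta1 * m[j] + (1 - beta1) * g[j]
            v[j] = beta2 * v[j] + (1 - beta2) * g[j] ** 2
            m_hat = m[j] / (1 - beta1 ** t)
            v_hat = v[j] / (1 - beta2 ** t)
            step = lr * m_hat / (math.sqrt(v_hat) + eps)
            if decoupled:
                step += lr * wd * w[j]  # decoupled weight decay, independent of R
            new_w.append(w[j] - step)
        w = new_w
    return w

print(run(R=0.2, wd=0.1, decoupled=False), run(R=0.1, wd=0.05, decoupled=False))
print(run(R=0.1, wd=0.1, decoupled=True), run(R=0.2, wd=0.1, decoupled=True))
```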

4. Another world opens up

Sharp-eyed readers may have noticed that I have so far emphasized only adaptive optimizers. Why not SGD? The answer is that right after I finished the theory of DP adaptive optimizers, Google published a paper applying DP-SGD to CV, which also included an ablation study. However, the pattern there was completely different from the one I had found for Adam. What stuck with me was its diagonal structure.

For DP-SGD with R small enough, increasing lr tenfold is equivalent to increasing R tenfold [https://arxiv.org/abs/2201.12328].
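A minimal sketch of this diagonal rule, assuming the same kind of hypothetical toy least-squares data as in a small-R check (targets far from reachable predictions, so Abadi's clipping acts as normalization). With a fixed noise seed, DP-SGD run with (lr = 0.01, R = 1e-2) and with (lr = 0.1, R = 1e-3) should trace the same trajectory. In this regime each update is lr · R times an R-free direction, so only the product lr · R matters. All names are illustrative.

```python
import math
import random

def make_data(n=8, seed=123):
    """Hypothetical toy data: inputs in [0.5, 1]^2 and targets in [8, 10]."""
    rng = random.Random(seed)
    return [([rng.uniform(0.5, 1.0), rng.uniform(0.5, 1.0)], rng.uniform(8.0, 10.0))
            for _ in range(n)]

def private_gradient(w, data, R, sigma, rng):
    """Abadi-clipped per-sample gradients of (w . x - y)^2, summed, plus sigma*R noise."""
    total = [0.0, 0.0]
    for x, y in data:
        r = sum(wj * xj for wj, xj in zip(w, x)) - y
        g = [2.0 * r * xj for xj in x]
        n = math.sqrt(sum(gj * gj for gj in g))
        c = 1.0 if n == 0.0 else min(1.0, R / n)
        total = [t + c * gj for t, gj in zip(total, g)]
    return [t + sigma * R * rng.gauss(0.0, 1.0) for t in total]

def dp_sgd(lr, R, steps=50, sigma=1.0, seed=0):
    """DP-SGD with a fixed noise seed, so runs differ only in lr and R."""
    data = make_data()
    rng = random.Random(seed)
    w = [0.0, 0.0]
    for _ in range(steps):
        g = private_gradient(w, data, R, sigma, rng)
        w = [wj - lr * gj for wj, gj in zip(w, g)]
    return w

print(dp_sgd(lr=0.01, R=1e-2))
print(dp_sgd(lr=0.1, R=1e-3))
```

Once R exceeds the per-sample gradient norms, clipping no longer normalizes. The product rule then stops holding exactly.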

I was very excited when I saw this paper, because it was yet another piece of evidence for the effectiveness of small clipping thresholds.

In science, a string of coincidences often hides a pattern.

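Simply substituting shows that SGD is even easier to analyze than Adam. (1.3) can be approximated as $w_{t+1} \approx w_t - \eta R\left(\sum_i \frac{g_i}{\|g_i\|} + \sigma \cdot \mathcal{N}(0, I)\right)$, where η is the learning rate. So η and R affect training only through their product ηR.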

Based on this algorithm, changing just one line and rerunning the Stanford code yields SOTA on six NLP datasets.

On the E2E generation task, AUTO-S outperforms all other clipping functions, and it does the same on the SST2/MNLI/QNLI/QQP classification tasks.
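AUTO-S is not defined in the surviving text. This sketch assumes it takes the form $\mathrm{Clip}(g_i) = R/(\|g_i\| + \gamma)$, with a small stability constant γ; the value 0.01 below is also an assumption. The sketch compares the clipped norms AUTO-S produces with those of pure normalization:

- Normalization maps every nonzero gradient to norm exactly R, which erases magnitude information.
- The γ term keeps the clipped norm increasing in $\|g_i\|$ while still bounding it by R.

Compared with Abadi's clipping $\min(1, R/\|g_i\|)$, only the scaling factor changes, which fits the "change one line" remark above. Function names are hypothetical.

```python
import math

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def normalize_clip(g, R=1.0):
    """Pure normalization: every nonzero gradient is rescaled to norm exactly R."""
    n = norm(g)
    return [0.0 for _ in g] if n == 0.0 else [R * x / n for x in g]

def auto_s(g, R=1.0, gamma=0.01):
    """Assumed AUTO-S form: scale by R / (||g|| + gamma); the clipped norm is
    R * ||g|| / (||g|| + gamma), which stays below R and still grows with ||g||."""
    c = R / (norm(g) + gamma)
    return [c * x for x in g]

grads = [[1e-3, 0.0], [1e-2, 0.0], [1.0, 0.0], [10.0, 0.0]]
norms_v = [norm(normalize_clip(g)) for g in grads]
norms_s = [norm(auto_s(g)) for g in grads]
print(norms_v)
print(norms_s)
```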

7. Make a general algorithm

One limitation of the Stanford paper is that it focuses only on NLP. And what a coincidence: just two months after Google set the DP SOTA on ImageNet, Google's subsidiary DeepMind released a paper showing how DP can shine in CV. It raised ImageNet accuracy directly from 48% to 84%!

Paper address: https://arxiv.org/abs/2204.13650

Reading this paper, I immediately looked for their choice of optimizer and clipping threshold, until I reached this figure in the appendix:

DP-SGD's SOTA on ImageNet also requires a small enough clipping threshold.

Once again, the small clipping threshold works best! With three high-quality papers supporting automatic clipping, I had strong motivation. I became more and more confident that my work would be outstanding.

Coincidentally, this DeepMind paper is also purely experimental, with no theory. That is why they only almost realized that, in theory, R is unnecessary. They came really close to my idea: they even noticed that R can be factored out and merged with the learning rate (interested readers can look at their formulas (2) and (3)). But the inertia of Abadi's clipping was too strong... Even though they had found the pattern, they went no further.

DeepMind also found that small clipping threshold works best, but did not understand why.

Inspired by this new work, I started experimenting on CV so that my algorithm could be used by all DP researchers. The goal was to avoid one set of methods for NLP and another for CV.

A good algorithm should be universal and easy to use. And indeed, automatic clipping also achieves SOTA on CV datasets.

8. Theory as bones and experiments as wings

Across all the papers above, SOTA improved significantly and the engineering results are plentiful, but the theory is completely blank.

By the time I finished all the experiments, the contributions of this work already exceeded the bar for a top conference:

- I explained, and greatly simplified, the hyperparameter effects that small clipping thresholds produce in DP-SGD and DP-Adam.
- I proposed a new clipping function that sacrifices neither computational efficiency nor privacy and needs no tuning.
- Its small γ repairs the damage that Abadi's clipping and normalization do to gradient magnitude information.
- Extensive NLP and CV experiments achieved SOTA accuracy.

I was not satisfied yet. An optimizer without theoretical support still cannot make a substantial contribution to deep learning. Dozens of new optimizers are proposed every year and abandoned the next. Only a handful are officially supported by PyTorch and actually used in industry.

For this reason, my collaborators and I spent an extra two months on a convergence analysis of automatic DP-SGD. The process was difficult, but the final proof was simplified to the extreme. The conclusion is also simple. It quantifies how batch size, learning rate, model size, sample size and other variables affect convergence, and it is consistent with all known DP training behavior.

In particular, we proved that DP-SGD converges more slowly than standard SGD, but as the number of iterations tends to infinity its convergence rate is of the same order. This gives confidence in private computation: the DP model does converge, albeit later.

9. A collision...

Finally, the paper I had worked on for 7 months was finished. Unexpectedly, the coincidences had not stopped. We submitted to NeurIPS in May, completed internal revisions on June 14, and released the paper on arXiv. Then, on June 27, I saw that Microsoft Research Asia (MSRA) had published a paper that collided with ours. The clipping it proposed was exactly the same as our automatic clipping:

Exactly the same as our AUTO-S.

Looking closely, even the convergence proofs are almost the same, yet our two groups had no contact with each other. One could say a coincidence was born across the Pacific Ocean.

Briefly, here are the differences between the two papers:

- Theirs is more theoretical. For example, it additionally analyzes the convergence of Abadi's DP-SGD. I only proved convergence for automatic clipping, which is DP-NSGD in their paper; perhaps I simply did not know how to handle DP-SGD.
- The assumptions used also differ somewhat.
- Our experiments are more numerous and larger in scale (more than a dozen datasets).
- We establish the equivalence between Abadi's clipping and normalization more explicitly. For example, Theorems 1 and 2 explain why R can be left untuned.

Since we worked at the same time, I am very happy that others reached the same conclusions. Our papers complement each other and jointly promote this algorithm, so the whole community can trust this result and benefit from it sooner. Of course, selfishly, I also remind myself to move faster on my next paper!

10. Summary

Looking back on how this paper came about, solid fundamentals are the first prerequisite. Another important prerequisite is that I always kept the pain point of hyperparameter tuning in mind. After a long drought, reading the right paper is like finding nectar. As for the process, the core lies in the habit of turning observations into mathematical theory. In this work, coding ability was not the most important factor; I will write another column on a different, coding-heavy project. The final convergence analysis relied on my collaborators and on our own persistence. Fortunately, a good meal is worth waiting for, so keep going!

About the author

Bu Zhiqi received his bachelor's degree from the University of Cambridge and his Ph.D. from the University of Pennsylvania. He is currently a senior research scientist at Amazon AWS AI. His research focuses on differential privacy and deep learning, with an emphasis on optimization algorithms and large-scale computation.


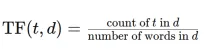

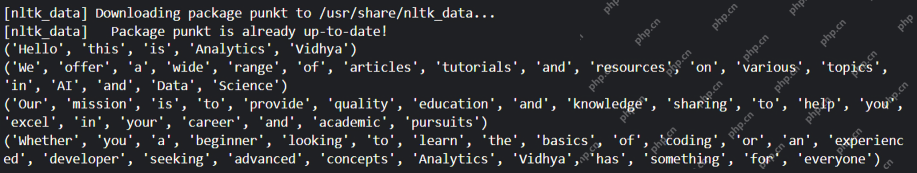

Convert Text Documents to a TF-IDF Matrix with tfidfvectorizerApr 18, 2025 am 10:26 AM

Convert Text Documents to a TF-IDF Matrix with tfidfvectorizerApr 18, 2025 am 10:26 AMThis article explains the Term Frequency-Inverse Document Frequency (TF-IDF) technique, a crucial tool in Natural Language Processing (NLP) for analyzing textual data. TF-IDF surpasses the limitations of basic bag-of-words approaches by weighting te

Building Smart AI Agents with LangChain: A Practical GuideApr 18, 2025 am 10:18 AM

Building Smart AI Agents with LangChain: A Practical GuideApr 18, 2025 am 10:18 AMUnleash the Power of AI Agents with LangChain: A Beginner's Guide Imagine showing your grandmother the wonders of artificial intelligence by letting her chat with ChatGPT – the excitement on her face as the AI effortlessly engages in conversation! Th

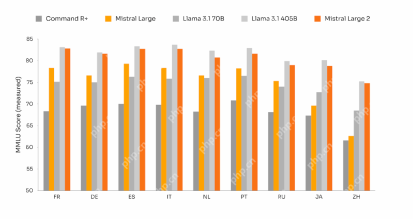

Mistral Large 2: Powerful Enough to Challenge Llama 3.1 405B?Apr 18, 2025 am 10:16 AM

Mistral Large 2: Powerful Enough to Challenge Llama 3.1 405B?Apr 18, 2025 am 10:16 AMMistral Large 2: A Deep Dive into Mistral AI's Powerful Open-Source LLM Meta AI's recent release of the Llama 3.1 family of models was quickly followed by Mistral AI's unveiling of its largest model to date: Mistral Large 2. This 123-billion paramet

What is Noise Schedules in Stable Diffusion? - Analytics VidhyaApr 18, 2025 am 10:15 AM

What is Noise Schedules in Stable Diffusion? - Analytics VidhyaApr 18, 2025 am 10:15 AMUnderstanding Noise Schedules in Diffusion Models: A Comprehensive Guide Have you ever been captivated by the stunning visuals of digital art generated by AI and wondered about the underlying mechanics? A key element is the "noise schedule,&quo

How to Build a Conversational Chatbot with GPT-4o? - Analytics VidhyaApr 18, 2025 am 10:06 AM

How to Build a Conversational Chatbot with GPT-4o? - Analytics VidhyaApr 18, 2025 am 10:06 AMBuilding a Contextual Chatbot with GPT-4o: A Comprehensive Guide In the rapidly evolving landscape of AI and NLP, chatbots have become indispensable tools for developers and organizations. A key aspect of creating truly engaging and intelligent chat

Top 7 Frameworks for Building AI Agents in 2025Apr 18, 2025 am 10:00 AM

Top 7 Frameworks for Building AI Agents in 2025Apr 18, 2025 am 10:00 AMThis article explores seven leading frameworks for building AI agents – autonomous software entities that perceive, decide, and act to achieve goals. These agents, surpassing traditional reinforcement learning, leverage advanced planning and reasoni

What's the Difference Between Type I and Type II Errors ? - Analytics VidhyaApr 18, 2025 am 09:48 AM

What's the Difference Between Type I and Type II Errors ? - Analytics VidhyaApr 18, 2025 am 09:48 AMUnderstanding Type I and Type II Errors in Statistical Hypothesis Testing Imagine a clinical trial testing a new blood pressure medication. The trial concludes the drug significantly lowers blood pressure, but in reality, it doesn't. This is a Type

Automated Text Summarization with Sumy LibraryApr 18, 2025 am 09:37 AM

Automated Text Summarization with Sumy LibraryApr 18, 2025 am 09:37 AMSumy: Your AI-Powered Summarization Assistant Tired of sifting through endless documents? Sumy, a powerful Python library, offers a streamlined solution for automatic text summarization. This article explores Sumy's capabilities, guiding you throug

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

SublimeText3 English version

Recommended: Win version, supports code prompts!

SublimeText3 Chinese version

Chinese version, very easy to use

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool