OpenAI posts on how to ensure AI safety: Government regulation is necessary

News on April 6th: On Wednesday, local time in the United States, OpenAI published a post detailing its approach to ensuring AI safety, including conducting safety assessments, improving post-launch safeguards, protecting children, and respecting privacy. The company said that ensuring AI systems are built, deployed and used safely is critical to achieving its mission.

The following is the full text of OpenAI’s post:

OpenAI is committed to keeping powerful AI safe and beneficial to as many people as possible. We know that our AI tools provide a lot of help to people today. Users around the world have told us that ChatGPT helps improve their productivity, enhance their creativity, and offer a tailored learning experience. But we also recognize that, as with any technology, there are real risks associated with these tools. Therefore, we are working hard to ensure safety at every level of our systems.

Create safer artificial intelligence systems

Before launching any new artificial intelligence system, we conduct rigorous testing, solicit feedback from external experts, and improve the model's behavior through techniques such as reinforcement learning from human feedback. At the same time, we have also established extensive safety and monitoring systems.

Take our latest model, GPT-4, as an example. After training was complete, we spent more than 6 months on company-wide testing to make it safer and more reliable before releasing it to the public.

We believe that powerful artificial intelligence systems should undergo rigorous safety assessments. Regulation is necessary to ensure that this practice is widely adopted. Therefore, we actively engage with governments to discuss the best form of regulation.

Learn from actual use and improve safeguards

We try our best to prevent foreseeable risks before deploying a system, but what can be learned in the laboratory is always limited. We research and test extensively, but cannot predict every way people will use, or misuse, our technology. Therefore, we believe that learning from real-world use is a critical component in creating and releasing increasingly safe AI systems.

We release new artificial intelligence systems to the public cautiously and gradually, with substantial safeguards in place, and continue to improve them based on the lessons we learn.

We make our most capable models available through our own services and through an API so that developers can integrate the technology directly into their applications. This allows us to monitor for abuse, take action against it, and develop mitigations. In this way, we can respond to how misuse actually happens instead of only theorizing about what it might look like.

Experience from real-world use has also led us to develop increasingly granular policies to address behavior that poses real risks to people, while still allowing our technology to be used in more beneficial ways.

We believe that society needs more time to adapt to increasingly powerful artificial intelligence, and that everyone affected by it should have a say in the further development of artificial intelligence. Iterative deployment helps different stakeholders more effectively engage in conversations about AI technologies, and having first-hand experience using these tools is critical.

Protect Children

One focus of our safety work is the protection of children. We require that people using our artificial intelligence tools be 18 years of age or older, or 13 years of age or older with parental consent. We are currently working on verification functionality.

We do not allow our technology to be used to generate hateful, harassing, violent or adult content. Our latest model, GPT-4, is 82% less likely than GPT-3.5 to respond to requests for restricted content. We have robust systems in place to monitor for abuse. GPT-4 is now available to ChatGPT Plus subscribers, and we hope to make it available to more people over time.

We have taken significant steps to minimize the potential for our models to produce content that is harmful to children. For example, when a user attempts to upload child sexual abuse material to our image generation tool, we block it and report the matter to the National Center for Missing and Exploited Children.

In addition to our default safety protections, we work with developers such as the non-profit Khan Academy to tailor safety measures to their use cases. Khan Academy has developed an artificial intelligence assistant that can serve as a virtual tutor for students and a classroom assistant for teachers. We are also working on features that allow developers to set stricter standards for model output, to better support developers and users who require such capabilities.

Respect Privacy

Our large language models are trained on an extensive corpus of text, including publicly available content, licensed content, and content generated by human reviewers. We do not use this data to sell our services or advertising, nor do we use it to build profiles of people. We use this data only to make our models more helpful to people; ChatGPT, for example, improves through further training on the conversations people have with it.

Although much of our training data includes personal information that is available on the public web, we want our models to learn about the world as a whole, not about individuals. Therefore, we are committed to removing personal information from training data sets where feasible, fine-tuning models to decline requests for personal information, and responding to individuals' requests to delete their personal information from our systems. These measures minimize the likelihood that our models will generate responses that contain personal information.

Improve factual accuracy

Today's large language models predict the next most likely words based on patterns they have previously seen and on the text the user enters. But in some cases, the most likely next word may be factually incorrect.
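To make the point concrete, here is a minimal sketch of next-word prediction. It is a toy word-pair (bigram) counter, not how GPT-4 or any OpenAI model works, and the corpus, `train_bigrams`, and `predict_next` are all invented for illustration. The only assumption it demonstrates is that a predictor which picks the most frequent continuation will repeat a common error if that error dominates its training text.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: the incorrect statement appears more often
# than the correct one (Canberra is the capital of Australia).
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]


def train_bigrams(sentences):
    """Count how often each word follows each preceding word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, prev_word):
    """Return the most frequent follower of prev_word, or None if unseen."""
    followers = counts.get(prev_word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigrams(corpus)
prediction = predict_next(model, "is")
print(prediction)  # sydney: the most likely word, but factually wrong
```

The predictor is behaving exactly as designed: "sydney" follows "is" twice and "canberra" only once, so the statistically likely answer wins over the correct one.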

Improving factual accuracy is a focus for OpenAI and many other AI research organizations, and we are making progress. We improved the factual accuracy of GPT-4 by using user feedback on ChatGPT outputs that were flagged as incorrect as a primary data source. GPT-4 is 40% more likely than GPT-3.5 to produce factual content.

When users sign up to use the tool, we strive to be as transparent as possible that ChatGPT's responses may not always be accurate. However, we recognize that there is more work to be done to further reduce the potential for misunderstanding and to educate the public about the current limitations of these AI tools.

Continuous Research and Engagement

We believe that a practical way to address AI safety issues is to invest more time and resources in researching effective mitigation and alignment techniques and testing them against real-world abuse.

Importantly, we believe that improving the safety and the capabilities of AI should go hand in hand. Our best safety work to date has come from working with our most capable models, because they are better at following users' instructions and easier to steer or "guide".

We will create and deploy more capable models with increasing caution and will continue to strengthen safety precautions as AI systems evolve.

While we waited more than 6 months to deploy GPT-4 in order to better understand its capabilities, benefits, and risks, it can sometimes take longer than that to improve the safety of AI systems. Therefore, policymakers and AI developers need to ensure that the development and deployment of AI are effectively governed at a global scale so that no one cuts corners to get ahead. This is a difficult challenge that requires both technical and institutional innovation, but we are eager to contribute.

Addressing AI safety issues will also require extensive debate, experimentation and engagement, including on setting boundaries for how AI systems can behave. We have promoted, and will continue to promote, collaboration and open dialogue among stakeholders to create a safer AI ecosystem.


Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AM

Are You At Risk Of AI Agency Decay? Take The Test To Find OutApr 21, 2025 am 11:31 AMThis article explores the growing concern of "AI agency decay"—the gradual decline in our ability to think and decide independently. This is especially crucial for business leaders navigating the increasingly automated world while retainin

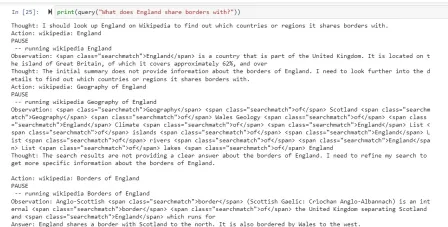

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AM

How to Build an AI Agent from Scratch? - Analytics VidhyaApr 21, 2025 am 11:30 AMEver wondered how AI agents like Siri and Alexa work? These intelligent systems are becoming more important in our daily lives. This article introduces the ReAct pattern, a method that enhances AI agents by combining reasoning an

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM

Revisiting The Humanities In The Age Of AIApr 21, 2025 am 11:28 AM"I think AI tools are changing the learning opportunities for college students. We believe in developing students in core courses, but more and more people also want to get a perspective of computational and statistical thinking," said University of Chicago President Paul Alivisatos in an interview with Deloitte Nitin Mittal at the Davos Forum in January. He believes that people will have to become creators and co-creators of AI, which means that learning and other aspects need to adapt to some major changes. Digital intelligence and critical thinking Professor Alexa Joubin of George Washington University described artificial intelligence as a “heuristic tool” in the humanities and explores how it changes

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AM

Understanding LangChain Agent FrameworkApr 21, 2025 am 11:25 AMLangChain is a powerful toolkit for building sophisticated AI applications. Its agent architecture is particularly noteworthy, allowing developers to create intelligent systems capable of independent reasoning, decision-making, and action. This expl

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AM

What are the Radial Basis Functions Neural Networks?Apr 21, 2025 am 11:13 AMRadial Basis Function Neural Networks (RBFNNs): A Comprehensive Guide Radial Basis Function Neural Networks (RBFNNs) are a powerful type of neural network architecture that leverages radial basis functions for activation. Their unique structure make

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AM

The Meshing Of Minds And Machines Has ArrivedApr 21, 2025 am 11:11 AMBrain-computer interfaces (BCIs) directly link the brain to external devices, translating brain impulses into actions without physical movement. This technology utilizes implanted sensors to capture brain signals, converting them into digital comman

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AM

Insights on spaCy, Prodigy and Generative AI from Ines MontaniApr 21, 2025 am 11:01 AMThis "Leading with Data" episode features Ines Montani, co-founder and CEO of Explosion AI, and co-developer of spaCy and Prodigy. Ines offers expert insights into the evolution of these tools, Explosion's unique business model, and the tr

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AM

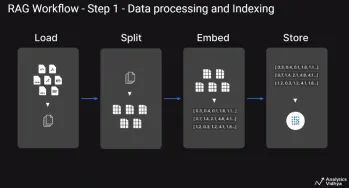

A Guide to Building Agentic RAG Systems with LangGraphApr 21, 2025 am 11:00 AMThis article explores Retrieval Augmented Generation (RAG) systems and how AI agents can enhance their capabilities. Traditional RAG systems, while useful for leveraging custom enterprise data, suffer from limitations such as a lack of real-time dat

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

WebStorm Mac version

Useful JavaScript development tools

Atom editor mac version download

The most popular open source editor

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software