Meta's Llama 4: A Trio of Open-Source AI Powerhouses

Meta AI has introduced three large language models (LLMs) under the Llama 4 banner: Scout, Maverick, and Behemoth. Scout and Maverick ship with openly available weights, while Behemoth remains internal. This move contrasts sharply with the trend of closed, increasingly large models from competitors. Llama 4 prioritizes accessibility, offering powerful AI tools to a wider audience. This article explores the capabilities and performance of each model.

Llama 4 Scout: Efficiency Redefined

Scout is the lightweight champion of the Llama 4 family. Designed for resource-constrained environments, it's perfect for developers and researchers lacking access to extensive GPU resources.

- Key Features: Scout uses a Mixture of Experts (MoE) architecture, activating only 17B of its 109B parameters at any given time. It offers a 10-million-token context window and runs on a single H100 GPU with Int4 quantization. It was pre-trained on 200 languages (100 of them with over a billion tokens each) and on diverse image and video data, and it supports up to 8 images per prompt.

- Performance: Benchmarks show Scout outperforming comparable models such as Gemma 3 and Mistral 3.1. Its image region grounding enables precise visual reasoning.

- Ideal Applications: Long-context chatbots, code summarization tools, educational Q&A systems, and mobile/embedded assistants.
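The single-GPU claim can be sanity-checked with back-of-the-envelope arithmetic. The sketch below counts weight storage only (no KV cache or activations) and assumes the 80 GB H100 variant; the function name and constants are illustrative, not part of any Meta tooling.

```python
def weight_memory_gb(total_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return total_params * bits_per_param / 8 / 1e9

SCOUT_TOTAL_PARAMS = 109e9   # total parameters, from the article
SCOUT_ACTIVE_PARAMS = 17e9   # active parameters, from the article
H100_MEMORY_GB = 80          # assumption: 80 GB H100 variant

for name, bits in [("BF16", 16), ("Int8", 8), ("Int4", 4)]:
    gb = weight_memory_gb(SCOUT_TOTAL_PARAMS, bits)
    verdict = "fits" if gb < H100_MEMORY_GB else "does not fit"
    print(f"{name}: {gb:.1f} GB of weights, {verdict} in {H100_MEMORY_GB} GB")

# Share of the parameters that is active at any given time.
active_fraction = SCOUT_ACTIVE_PARAMS / SCOUT_TOTAL_PARAMS
print(f"Active share: {active_fraction:.1%}")
```

Under these assumptions only the Int4 weights (about 54.5 GB) fit on one card, and roughly 15.6% of the parameters are active at a time. Real deployments also need room for the KV cache, which grows with context length.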

Llama 4 Maverick: The Versatile Workhorse

Maverick is the flagship open-weight model, built for advanced reasoning, coding, and multimodal applications. While more powerful than Scout, it maintains efficiency through its MoE architecture.

- Key Features: Maverick uses an MoE architecture with 128 routed experts and a shared expert, activating 17B of its 400B parameters during inference. Trained with techniques such as MetaP hyperparameter scaling and FP8 precision training, it draws on a 30-trillion-token dataset and supports up to 8 image inputs.

- Performance: An experimental chat version of Maverick achieved an Elo score of 1417 on the LMSYS Chatbot Arena, surpassing GPT-4o and Gemini 2.0 Flash. It demonstrates strong image understanding, multilingual reasoning, and cost-effective performance exceeding the Llama 3.3 70B model.

- Ideal Applications: AI pair programming, enterprise-level document understanding, and educational tutoring systems.
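Maverick's layout of routed experts plus a shared expert can be illustrated with a toy router. The sketch below assumes top-1 routing (one routed expert per token), tiny scalar "experts", random router weights, and a sigmoid gate. These are illustrative choices, not details taken from this article or from Meta's implementation.

```python
import math
import random

NUM_ROUTED_EXPERTS = 128  # from the article
DIM = 8                   # toy hidden size, chosen for illustration

rng = random.Random(0)

def make_expert():
    """A toy 'expert': an elementwise scale standing in for a feed-forward block."""
    scale = rng.uniform(0.5, 1.5)
    return lambda x: [scale * v for v in x]

routed_experts = [make_expert() for _ in range(NUM_ROUTED_EXPERTS)]
shared_expert = make_expert()
# One router weight vector per routed expert (hypothetical random values).
router = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_ROUTED_EXPERTS)]

def moe_forward(token):
    """Run the shared expert plus the single best-scoring routed expert."""
    scores = [sum(w * v for w, v in zip(weights, token)) for weights in router]
    best = max(range(NUM_ROUTED_EXPERTS), key=lambda i: scores[i])
    gate = 1 / (1 + math.exp(-scores[best]))  # sigmoid gate: an illustrative choice
    routed_out = routed_experts[best](token)
    shared_out = shared_expert(token)
    output = [s + gate * r for s, r in zip(shared_out, routed_out)]
    return output, best

token = [rng.gauss(0, 1) for _ in range(DIM)]
output, chosen = moe_forward(token)
print(f"routed expert {chosen} of {NUM_ROUTED_EXPERTS}; experts run for this token: 2")
```

Only two of the 129 experts do any work for a given token, which is how a model can hold 400B parameters while activating 17B per inference step.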

Llama 4 Behemoth: The Unsung Hero

Behemoth, Meta's largest model to date, is not publicly available. However, it plays a crucial role as a teacher model, guiding the training of Scout and Maverick through co-distillation.

- Key Features: Behemoth has roughly 2 trillion total parameters, 288B of them active, and is reported to perform strongly on challenging benchmarks.

- Role: Its primary function is to serve as a gold standard for evaluation and internal model improvement.
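The teacher role described above can be sketched as a distillation loss, in which a student model is trained to match both the teacher's output distribution (soft targets) and the ground-truth token (hard target). This article does not describe how Meta weights the two terms, so the fixed `alpha`, the `temperature`, and the function names below are placeholder assumptions.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, target_index,
                      alpha=0.5, temperature=2.0):
    """Weighted sum of a soft-target term (teacher) and a hard-target term (label)."""
    # Soft term: cross-entropy between teacher and student at a shared temperature.
    teacher_probs = softmax(teacher_logits, temperature)
    student_probs_t = softmax(student_logits, temperature)
    soft = -sum(t * math.log(s) for t, s in zip(teacher_probs, student_probs_t))
    # Hard term: ordinary cross-entropy against the ground-truth token.
    hard = -math.log(softmax(student_logits)[target_index])
    return alpha * soft + (1 - alpha) * hard

teacher = [4.0, 1.0, 0.5]          # hypothetical teacher logits over 3 tokens
close_student = [3.5, 1.2, 0.4]    # roughly agrees with the teacher
far_student = [0.5, 1.0, 4.0]      # disagrees with teacher and label

loss_close = distillation_loss(close_student, teacher, target_index=0)
loss_far = distillation_loss(far_student, teacher, target_index=0)
print(f"close student: {loss_close:.3f}, far student: {loss_far:.3f}")
```

A student that agrees with the teacher and the label gets the lower loss, which is the signal that pushes the smaller models toward the larger model's behavior.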

Accessing Llama 4 Models

Llama 4 Scout and Maverick are readily accessible through several platforms:

- llama.meta.com: Meta's official hub for Llama models, providing model cards, papers, documentation, and access to model weights.

- Hugging Face: Offers ready-to-use versions for testing and deployment.

- Meta Apps: Integrated into WhatsApp, Instagram, Messenger, and Facebook, allowing users to interact with the models directly within their apps.

- Web Interface: Direct access via a web interface is also available.

Llama 4 in Action: Examples

While the specific Llama 4 model used in Meta's apps and web interface isn't explicitly stated, testing reveals impressive capabilities:

- Creative Planning: Quickly generates detailed social media strategies.

- Coding: Produces code, though accuracy may require refinement.

- Image Generation: Generates multiple images with editing and animation options.

Training and Post-Training Innovations

Llama 4's success stems from a sophisticated two-step training process:

- Pre-training: Employs multimodal data (text, image, video), MoE architecture, early fusion, MetaP hyperparameter tuning, FP8 precision, and the iRoPE architecture for long-context handling.

- Post-training: Utilizes lightweight supervised fine-tuning (SFT), online reinforcement learning (RL), direct preference optimization (DPO), and Behemoth co-distillation for enhanced performance and safety.
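Of the post-training steps listed above, DPO has a compact closed-form loss that is easy to show. The sketch below computes the standard DPO objective for one preference pair, given summed log-probabilities of the chosen and rejected responses under the policy and under a frozen reference model. The `beta` value and the example numbers are assumptions for illustration, not Meta's settings.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one preference pair: -log(sigmoid(beta * margin difference))."""
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    x = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) == log(1 + exp(-x)), written in a numerically stable form.
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))

# Policy identical to the reference: no preference learned yet.
baseline = dpo_loss(-10.0, -12.0, -10.0, -12.0)
# Policy raises the chosen response and lowers the rejected one.
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)
print(f"baseline: {baseline:.4f}, improved: {improved:.4f}")
```

When the policy matches the reference the loss equals log 2, and it falls as the policy favors the chosen response more strongly than the reference does.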

Benchmark Performance Summary

Each model excels in specific areas: Scout in efficiency, Maverick in overall performance, and Behemoth in research-grade benchmarks. Detailed benchmark results highlight their superior performance compared to leading models.

Model Comparison Table

| Model | Total Params | Active Params | Experts | Context Length | Runs on | Public Access | Ideal For |
|---|---|---|---|---|---|---|---|
| Scout | 109B | 17B | 16 | 10M tokens | Single H100 | ✅ | Lightweight AI, long memory apps |
| Maverick | 400B | 17B | 128 | Unlisted | Single/Multi-GPU | ✅ | Research, coding, enterprise |
| Behemoth | ~2T | 288B | 16 | Unlisted | Internal infra | ❌ | Internal use, benchmarks |

Conclusion

Meta's Llama 4 models represent a significant leap forward in AI accessibility and performance. Their open-source nature democratizes access to cutting-edge AI technology, empowering developers and researchers worldwide. The focus on openness and efficiency sets a new standard for the future of AI development.

Source: "Llama 4 Models: Meta AI is Open Sourcing the Best!", Analytics Vidhya.

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AM

One Prompt Can Bypass Every Major LLM's SafeguardsApr 25, 2025 am 11:16 AMHiddenLayer's groundbreaking research exposes a critical vulnerability in leading Large Language Models (LLMs). Their findings reveal a universal bypass technique, dubbed "Policy Puppetry," capable of circumventing nearly all major LLMs' s

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AM

5 Mistakes Most Businesses Will Make This Year With SustainabilityApr 25, 2025 am 11:15 AMThe push for environmental responsibility and waste reduction is fundamentally altering how businesses operate. This transformation affects product development, manufacturing processes, customer relations, partner selection, and the adoption of new

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AM

H20 Chip Ban Jolts China AI Firms, But They've Long Braced For ImpactApr 25, 2025 am 11:12 AMThe recent restrictions on advanced AI hardware highlight the escalating geopolitical competition for AI dominance, exposing China's reliance on foreign semiconductor technology. In 2024, China imported a massive $385 billion worth of semiconductor

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AM

If OpenAI Buys Chrome, AI May Rule The Browser WarsApr 25, 2025 am 11:11 AMThe potential forced divestiture of Chrome from Google has ignited intense debate within the tech industry. The prospect of OpenAI acquiring the leading browser, boasting a 65% global market share, raises significant questions about the future of th

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AM

How AI Can Solve Retail Media's Growing PainsApr 25, 2025 am 11:10 AMRetail media's growth is slowing, despite outpacing overall advertising growth. This maturation phase presents challenges, including ecosystem fragmentation, rising costs, measurement issues, and integration complexities. However, artificial intell

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AM

'AI Is Us, And It's More Than Us'Apr 25, 2025 am 11:09 AMAn old radio crackles with static amidst a collection of flickering and inert screens. This precarious pile of electronics, easily destabilized, forms the core of "The E-Waste Land," one of six installations in the immersive exhibition, &qu

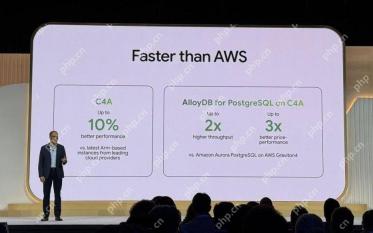

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AM

Google Cloud Gets More Serious About Infrastructure At Next 2025Apr 25, 2025 am 11:08 AMGoogle Cloud's Next 2025: A Focus on Infrastructure, Connectivity, and AI Google Cloud's Next 2025 conference showcased numerous advancements, too many to fully detail here. For in-depth analyses of specific announcements, refer to articles by my

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AM

Talking Baby AI Meme, Arcana's $5.5 Million AI Movie Pipeline, IR's Secret Backers RevealedApr 25, 2025 am 11:07 AMThis week in AI and XR: A wave of AI-powered creativity is sweeping through media and entertainment, from music generation to film production. Let's dive into the headlines. AI-Generated Content's Growing Impact: Technology consultant Shelly Palme

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Atom editor mac version download

The most popular open source editor

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

SublimeText3 Linux new version

SublimeText3 Linux latest version

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software