Small language models (SLMs) are making a significant impact in AI. They provide strong performance while being efficient and cost-effective. One standout example is the Llama 3.2 3B. It performs exceptionally well in Retrieval-Augmented Generation (RAG) tasks, cutting computational costs and memory usage while maintaining high accuracy. This article explores how to fine-tune the Llama 3.2 3B model. Learn how smaller models can excel in RAG tasks and push the boundaries of what compact AI solutions can achieve.

Table of contents

- What is Llama 3.2 3B?

- Finetuning Llama 3.2 3B

- LoRA

- Libraries Required

- Import the Libraries

- Initialize the Model and Tokenizers

- Initialize the Model for PEFT

- Data Processing

- Setting-up the Trainer Parameters

- Fine-tuning the Model

- Test and Save the Model

- Conclusion

- Frequently Asked Questions

What is Llama 3.2 3B?

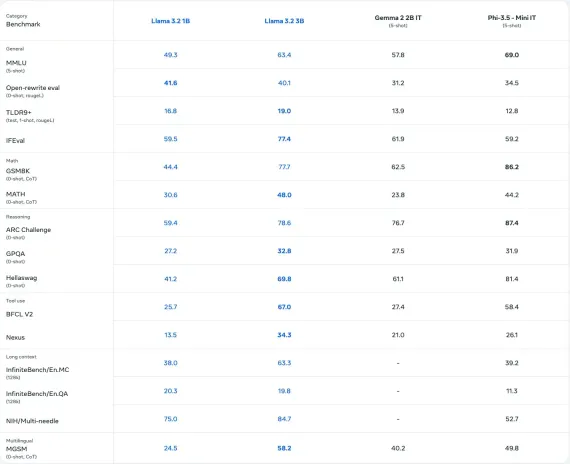

The Llama 3.2 3B model, developed by Meta, is a multilingual SLM with 3 billion parameters, designed for tasks like question answering, summarization, and dialogue systems. It outperforms many open-source models on industry benchmarks and supports diverse languages. Available in various sizes, Llama 3.2 offers efficient computational performance and includes quantized versions for faster, memory-efficient deployment in mobile and edge environments.

Also Read: Top 13 Small Language Models (SLMs)

Finetuning Llama 3.2 3B

Fine-tuning is essential for adapting SLMs or LLMs to specific domains or tasks, such as medical, legal, or RAG applications. While pre-training enables language models to generate text across diverse topics, fine-tuning re-trains the model on domain-specific or task-specific data to improve relevance and performance. To address the high computational cost of fine-tuning all parameters, techniques like Parameter Efficient Fine-Tuning (PEFT) focus on training only a subset of the model’s parameters, optimizing resource usage while maintaining performance.

LoRA

One such PEFT method is Low Rank Adaptation (LoRA).

In LoRA, the pretrained weight matrix W of the SLM or LLM is kept frozen, and the update to it is represented as a product of two low-rank matrices:

ΔW = WA * WB, so the adapted weight is W' = W + scale * (WA * WB)

If W has m rows and n columns, then WA has m rows and r columns, and WB has r rows and n columns. Here r is much smaller than m or n. So, rather than training m*n values, we train only r*(m + n) values. r is called the rank, and it is a hyperparameter we can choose.

def lora_linear(x):
    h = x @ W                      # regular linear layer with the frozen weight
    h += scale * (x @ W_A @ W_B)   # add the low-rank update
    return h
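To make the saving concrete, the short sketch below compares the number of trained values for a full m x n matrix with the number for its rank-r factors. The 3072 x 3072 shape is only an illustrative assumption (it is not read from the model config), and `lora_param_counts` is a hypothetical helper, not part of any library.

```python
def lora_param_counts(m: int, n: int, r: int) -> tuple[int, int]:
    """Return (full, lora) trainable-value counts for an m x n weight matrix."""
    full = m * n        # training every entry of W
    lora = r * (m + n)  # training only W_A (m x r) and W_B (r x n)
    return full, lora


# Illustrative shape (an assumption, not taken from the model config).
full, lora = lora_param_counts(m=3072, n=3072, r=16)
print(f"full: {full:,}  lora: {lora:,}  ratio: {full / lora:.0f}x")
# full: 9,437,184  lora: 98,304  ratio: 96x
```

Because the count grows linearly in r, doubling the rank doubles the number of trained values for the layer.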

Check out: Parameter-Efficient Fine-Tuning of Large Language Models with LoRA and QLoRA

Let’s implement LoRA on the Llama 3.2 3B model.

Libraries Required

- unsloth – 2024.12.9

- datasets – 3.1.0

Installing the above Unsloth version will also install compatible versions of PyTorch, transformers, and the NVIDIA GPU libraries. We can use Google Colab to access a GPU.

Let’s look at the implementation now!

Import the Libraries

from unsloth import FastLanguageModel, is_bfloat16_supported, train_on_responses_only
from datasets import load_dataset, Dataset
from trl import SFTTrainer, apply_chat_template
from transformers import TrainingArguments, DataCollatorForSeq2Seq, TextStreamer
import torch

Initialize the Model and Tokenizers

max_seq_length = 2048
dtype = None         # None for auto-detection.
load_in_4bit = True  # Use 4bit quantization to reduce memory usage. Can be False.

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "unsloth/Llama-3.2-3B-Instruct",
    max_seq_length = max_seq_length,
    dtype = dtype,
    load_in_4bit = load_in_4bit,
    # token = "hf_...", # use if using gated models like meta-llama/Llama-3.2-11b
)

For other models supported by Unsloth, we can refer to this document.

Initialize the Model for PEFT

model = FastLanguageModel.get_peft_model(
    model,
    r = 16,
    target_modules = ["q_proj", "k_proj", "v_proj", "o_proj",
                      "gate_proj", "up_proj", "down_proj",],
    lora_alpha = 16,
    lora_dropout = 0,
    bias = "none",
    use_gradient_checkpointing = "unsloth",
    random_state = 42,
    use_rslora = False,
    loftq_config = None,
)

Description for Each Parameter

- r: Rank of LoRA; higher values improve accuracy but use more memory (suggested: 8–128).

- target_modules: Modules to fine-tune; include all of them for better results.

- lora_alpha: Scaling factor; typically equal to or double the rank r.

- lora_dropout: Dropout rate; set to 0 for optimized and faster training.

- bias: Bias type; “none” is optimized for speed and minimal overfitting.

- use_gradient_checkpointing: Reduces memory for long-context training; “unsloth” is highly recommended.

- random_state: Seed for deterministic runs, ensuring reproducible results (e.g., 42).

- use_rslora: Enables rank-stabilized LoRA, which scales the update by lora_alpha / sqrt(r) instead of lora_alpha / r; useful at higher ranks.

- loftq_config: Initializes LoRA with top r singular vectors for better accuracy, though memory-intensive.
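The interaction between `r`, `lora_alpha`, and `use_rslora` is easier to see numerically. The sketch below assumes the scaling convention used by the Hugging Face PEFT library (alpha divided by the rank, or by its square root for rank-stabilized LoRA); `lora_scale` is a hypothetical helper written for illustration.

```python
import math


def lora_scale(lora_alpha: float, r: int, use_rslora: bool = False) -> float:
    """Scaling applied to the low-rank update (hypothetical helper)."""
    if use_rslora:
        return lora_alpha / math.sqrt(r)  # rank-stabilized LoRA
    return lora_alpha / r                 # standard LoRA


for r in (8, 16, 64):
    print(r, lora_scale(16, r), lora_scale(16, r, use_rslora=True))
```

With `lora_alpha = 16` and `r = 16` as configured above, the standard scale is 1.0. With standard scaling, raising `r` while keeping `lora_alpha` fixed shrinks the scale, which is one reason `lora_alpha` is often set equal to or double the rank.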

Data Processing

We will use a RAG dataset for fine-tuning. Download the data from Hugging Face.

dataset = load_dataset("neural-bridge/rag-dataset-1200", split = "train")

The dataset has three keys as follows:

Dataset({
    features: ['context', 'question', 'answer'],
    num_rows: 960
})

The data needs to be in a specific format depending on the language model. Read more details here.

So, let’s convert the data into the required format:

def convert_dataset_to_dict(dataset):
    dataset_dict = {
        "prompt": [],
        "completion": []
    }

    for row in dataset:
        user_content = f"Context: {row['context']}\nQuestion: {row['question']}"
        assistant_content = row['answer']

        dataset_dict["prompt"].append([
            {"role": "user", "content": user_content}
        ])
        dataset_dict["completion"].append([
            {"role": "assistant", "content": assistant_content}
        ])
    return dataset_dict

converted_data = convert_dataset_to_dict(dataset)
dataset = Dataset.from_dict(converted_data)
dataset = dataset.map(apply_chat_template, fn_kwargs={"tokenizer": tokenizer})
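To see the shape of the data before the chat template is applied, here is a minimal sketch of the same per-row conversion on a single made-up row. `to_prompt_completion` and `toy_row` are hypothetical names used only for illustration; the row is not taken from the dataset.

```python
def to_prompt_completion(row: dict) -> dict:
    """Turn one {context, question, answer} row into prompt/completion messages."""
    user_content = f"Context: {row['context']}\nQuestion: {row['question']}"
    return {
        "prompt": [{"role": "user", "content": user_content}],
        "completion": [{"role": "assistant", "content": row["answer"]}],
    }


# Made-up row for illustration only.
toy_row = {
    "context": "LoRA trains two small matrices per layer.",
    "question": "What does LoRA train?",
    "answer": "Two small low-rank matrices per layer.",
}

example = to_prompt_completion(toy_row)
print(example["prompt"][0]["content"])
print(example["completion"][0]["content"])
```

Each row therefore becomes a one-message user prompt holding the context and question, plus a one-message assistant completion holding the answer.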

The dataset message will be as follows:

Setting-up the Trainer Parameters

We can initialize the trainer for finetuning the SLM:

trainer = SFTTrainer(
    model = model,
    tokenizer = tokenizer,
    train_dataset = dataset,
    max_seq_length = max_seq_length,
    data_collator = DataCollatorForSeq2Seq(tokenizer = tokenizer),
    dataset_num_proc = 2,
    packing = False, # Can make training 5x faster for short sequences.
    args = TrainingArguments(
        per_device_train_batch_size = 2,
        gradient_accumulation_steps = 4,
        warmup_steps = 5,
        # num_train_epochs = 1, # Set this for 1 full training run.
        max_steps = 6, # using small number to test
        learning_rate = 2e-4,
        fp16 = not is_bfloat16_supported(),
        bf16 = is_bfloat16_supported(),
        logging_steps = 1,
        optim = "adamw_8bit",
        weight_decay = 0.01,
        lr_scheduler_type = "linear",
        seed = 3407,
        output_dir = "outputs",
        report_to = "none", # Use this for WandB etc
    ),
)

Description of some of the parameters:

- per_device_train_batch_size: Batch size per device; increase to utilize more GPU memory but watch for padding inefficiencies (suggested: 2).

- gradient_accumulation_steps: Simulates larger batch sizes without extra memory usage; increase for smoother loss curves (suggested: 4).

- max_steps: Total training steps; set for faster runs (e.g., 60), or use `num_train_epochs` for full dataset passes (e.g., 1–3).

- learning_rate: Controls training speed and convergence; lower rates tend to converge more stably but slow training (2e-4 is used here).
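The batch-related settings combine into an effective batch size, which in turn determines how much of the dataset a given `max_steps` covers. The arithmetic below is a small sketch using the values from this article; `num_devices = 1` is an assumption (a single GPU such as one Colab instance).

```python
import math

per_device_train_batch_size = 2
gradient_accumulation_steps = 4
num_devices = 1   # assumption: a single GPU
num_rows = 960    # size of the train split reported above
max_steps = 6

# Examples contributing to each optimizer update.
effective_batch = per_device_train_batch_size * gradient_accumulation_steps * num_devices
# Optimizer updates needed for one full pass over the data.
steps_per_epoch = math.ceil(num_rows / effective_batch)
# Examples actually seen when training stops at max_steps.
examples_seen = max_steps * effective_batch

print(effective_batch, steps_per_epoch, examples_seen)  # 8 120 48
```

So the test run with `max_steps = 6` sees only 48 of the 960 examples; a full pass would take about 120 steps under these assumptions.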

Make the model train on responses only by specifying the response template:

trainer = train_on_responses_only(
    trainer,
    instruction_part = "<|start_header_id|>user<|end_header_id|>\n\n",
    response_part = "<|start_header_id|>assistant<|end_header_id|>\n\n",
)
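Conceptually, training on responses only means the loss ignores every token up to the start of the assistant's answer. The following is a simplified single-turn sketch of that idea, not Unsloth's implementation; `mask_prompt_tokens` is a hypothetical helper and the token ids are made up.

```python
IGNORE_INDEX = -100  # label value that PyTorch's cross-entropy loss skips by default


def mask_prompt_tokens(input_ids, response_marker):
    """Copy input_ids to labels, ignoring everything up to the end of the marker."""
    labels = list(input_ids)
    k = len(response_marker)
    for start in range(len(input_ids) - k + 1):
        if input_ids[start:start + k] == response_marker:
            end = start + k
            labels[:end] = [IGNORE_INDEX] * end
            return labels
    # Marker not found: there is no response to learn from, so ignore everything.
    return [IGNORE_INDEX] * len(input_ids)


# Made-up ids: 1, 2, 3 stand for the user turn, [9, 9] for the response marker,
# and 4, 5, 6 for the assistant's answer.
input_ids = [1, 2, 3, 9, 9, 4, 5, 6]
print(mask_prompt_tokens(input_ids, response_marker=[9, 9]))
# [-100, -100, -100, -100, -100, 4, 5, 6]
```

Only the answer tokens keep their labels, so gradients come from the model's predictions of the response rather than from reproducing the context and question.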

Fine-tuning the Model

trainer_stats = trainer.train()

Here’s the training stats:

Test and Save the Model

Let’s use the model for inference:

FastLanguageModel.for_inference(model)

messages = [
    {"role": "user", "content": "Context: The sky is typically clear during the day. Question: What color is the water?"},
]

inputs = tokenizer.apply_chat_template(
    messages,
    tokenize = True,
    add_generation_prompt = True,
    return_tensors = "pt",
).to("cuda")

text_streamer = TextStreamer(tokenizer, skip_prompt = True)

_ = model.generate(input_ids = inputs, streamer = text_streamer, max_new_tokens = 128,
                   use_cache = True, temperature = 1.5, min_p = 0.1)
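The `temperature` and `min_p` arguments shape which tokens can be sampled. The sketch below illustrates min-p filtering on made-up logits, assuming temperature is applied before the filter; `min_p_filter` is a hypothetical helper, not the library's implementation.

```python
import math


def min_p_filter(logits, temperature=1.0, min_p=0.1):
    """Return {token_index: renormalized probability} after min-p filtering."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]  # numerically stable softmax
    total = sum(exps)
    probs = [e / total for e in exps]
    threshold = min_p * max(probs)               # cutoff relative to the top token
    kept = {i: p for i, p in enumerate(probs) if p >= threshold}
    norm = sum(kept.values())
    return {i: p / norm for i, p in kept.items()}


# Made-up logits for a four-token vocabulary.
kept = min_p_filter([4.0, 3.0, 0.0, -4.0], temperature=1.5, min_p=0.1)
print(sorted(kept))  # [0, 1]
```

A higher temperature flattens the distribution, while min-p removes tokens whose probability falls below a fraction of the most likely token's, which keeps sampling varied without admitting very unlikely tokens.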

To save the trained model with the LoRA weights merged in, use the code below:

model.save_pretrained_merged("model", tokenizer, save_method = "merged_16bit")

Check out: Guide to Fine-Tuning Large Language Models

Conclusion

Fine-tuning Llama 3.2 3B for RAG tasks showcases the efficiency of smaller models in delivering high performance with reduced computational costs. Techniques like LoRA optimize resource usage while maintaining accuracy. This approach empowers domain-specific applications, making advanced AI more accessible, scalable, and cost-effective, driving innovation in retrieval-augmented generation and democratizing AI for real-world challenges.

Also Read: Getting Started With Meta Llama 3.2

Frequently Asked Questions

Q1. What is RAG?
A. RAG combines retrieval systems with generative models to enhance responses by grounding them in external knowledge, making it ideal for tasks like question answering and summarization.

Q2. Why choose Llama 3.2 3B for fine-tuning?
A. Llama 3.2 3B offers a balance of performance, efficiency, and scalability, making it suitable for RAG tasks while reducing computational and memory requirements.

Q3. What is LoRA, and how does it improve fine-tuning?
A. Low-Rank Adaptation (LoRA) minimizes resource usage by training only low-rank matrices instead of all model parameters, enabling efficient fine-tuning on constrained hardware.

Q4. What dataset is used for fine-tuning in this article?
A. Hugging Face provides the RAG dataset, which contains context, questions, and answers, to fine-tune the Llama 3.2 3B model for better task performance.

Q5. Can the fine-tuned model be deployed on edge devices?
A. Yes, Llama 3.2 3B, especially in its quantized form, is optimized for memory-efficient deployment on edge and mobile environments.

The above is the detailed content of Fine-tuning Llama 3.2 3B for RAG - Analytics Vidhya. For more information, please follow other related articles on the PHP Chinese website!
