SmolAgents by Hugging Face: Build AI Agents in Under 30 Lines

- Jennifer Aniston

- 2025-03-11 11:19:09

Happy New Year! My exploration of AI Agents in 2025 led me to Hugging Face's SmolAgents framework. Let's dive in!

Hugging Face's SmolAgents library, announced at the very end of December 2024, lets you run capable agents with very little code. Its ease of use, Hub integrations, and broad LLM compatibility make it a good fit for agentic workflows.

Table of Contents

- What is SmolAgents?

- Understanding AI Agents

- Multi-step Agent Example

- SmolAgents Key Features

- SmolAgents Capabilities:

- Code Agents

- Local Python Interpreter

- E2B Code Executor

- SmolAgents in Action:

- Demo 1: Research Agent

- Demo 2: Stock Price Retrieval

- Conclusion

What is SmolAgents?

SmolAgents is a concise, powerful library for building and running agents. Its compact design (around 1,000 lines of code) prioritizes ease of use without sacrificing functionality. It excels at supporting "Code Agents," which generate and execute code, and offers enhanced security via sandboxed environments like E2B. It also supports traditional ToolCallingAgents using JSON or text-based actions. SmolAgents integrates with various LLMs (Hugging Face Inference API, OpenAI, Anthropic, etc. via LiteLLM) and a shared tool repository on the Hugging Face Hub.

Understanding AI Agents

AI Agents are autonomous systems performing tasks on behalf of users or other systems. They achieve this by orchestrating workflows and using external tools (web searches, code execution, etc.). LLMs power these agents, integrating tool usage for real-time information. Essentially, they bridge LLMs and the external world, enabling action and decision-making. Agency exists on a spectrum, with LLMs having varying degrees of control over system actions.

| Agency Level | Description | Name | Example |
|---|---|---|---|
| ☆☆☆ | LLM output has no impact on program flow | Simple Processor | process_llm_output(llm_response) |
| ⭐☆☆ | LLM output determines an if/else switch | Router | if llm_decision(): path_a() else: path_b() |
| ⭐⭐☆ | LLM output determines function execution | Tool Caller | run_function(llm_chosen_tool, llm_chosen_args) |
| ⭐⭐⭐ | LLM output controls iteration and program continuation | Multi-step Agent | while llm_should_continue(): execute_next_step() |
| ⭐⭐⭐ | One agentic workflow starts another | Multi-Agent | if llm_trigger(): execute_agent() |
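To make the middle rows of the table concrete, here is a minimal sketch of a Router and a Tool Caller in plain Python. Everything in it is hypothetical: `fake_llm` is a canned stand-in for a real model call, and `get_weather` and `say_hello` are toy tools invented for illustration. None of it is SmolAgents API.

```python
from typing import Callable, Dict


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: returns a canned tool name."""
    if "weather" in prompt.lower():
        return "get_weather"
    return "say_hello"


def get_weather(city: str) -> str:
    # Toy tool with a fixed answer so the example runs offline.
    return f"Sunny in {city}"


def say_hello(name: str) -> str:
    return f"Hello, {name}!"


TOOLS: Dict[str, Callable[[str], str]] = {
    "get_weather": get_weather,
    "say_hello": say_hello,
}


def router(prompt: str) -> str:
    """Router: the LLM output only selects one of two fixed branches."""
    if fake_llm(prompt) == "get_weather":
        return "weather branch"
    return "default branch"


def tool_caller(prompt: str, arg: str) -> str:
    """Tool Caller: the LLM output names the function that gets executed."""
    tool_name = fake_llm(prompt)
    return TOOLS[tool_name](arg)
```

In the router the program still owns every branch. In the tool caller the model's output decides which function runs, which is why that row sits one level higher on the agency scale.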

Multi-step Agent Example

Agents handle complex tasks by using multiple tools and adapting to different situations. Unlike traditional programs with rigid workflows, agents manage complexity and unpredictability more effectively.
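As a rough illustration of the multi-step pattern, the sketch below runs a loop in which the "model" picks the next action based on what has been observed so far. It assumes a scripted, hypothetical `scripted_llm` function and two toy tools (`search`, `summarize`), so it shows the control flow only, not how SmolAgents implements it.

```python
from typing import Callable, Dict, List, Tuple


def scripted_llm(task: str, memory: List[str]) -> Tuple[str, str]:
    """Hypothetical scripted 'LLM': chooses the next action from memory."""
    if not any(m.startswith("search:") for m in memory):
        return ("search", task)
    if not any(m.startswith("summarize:") for m in memory):
        return ("summarize", memory[-1])
    return ("final_answer", memory[-1])


def search(query: str) -> str:
    # Toy tool: a real agent would hit a search API here.
    return f"3 documents found for '{query}'"


def summarize(text: str) -> str:
    return f"summary of [{text}]"


TOOLS: Dict[str, Callable[[str], str]] = {"search": search, "summarize": summarize}


def run_agent(task: str, max_steps: int = 5) -> str:
    """Loop until the model returns a final answer or the step budget runs out."""
    memory: List[str] = []
    for _ in range(max_steps):
        action, arg = scripted_llm(task, memory)
        if action == "final_answer":
            return arg
        observation = TOOLS[action](arg)
        # Each observation is stored so the next decision can depend on it.
        memory.append(f"{action}: {observation}")
    return "max steps reached"
```

The step budget (`max_steps`) is a design choice worth keeping in any real loop: since the model controls continuation, the program needs its own stopping condition.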

SmolAgents Key Features

For simple tasks, custom code suffices. However, for complex behaviors (tool calling, multi-step agents), SmolAgents provides essential structure:

- Tool Calling: Agent output follows a specific format (e.g., "Thought: Use 'get_weather'. Action: get_weather(Paris).") processed by a parser. The system prompt guides the LLM on this format.

- Multi-step Agents: LLM prompts are tailored based on previous iterations, requiring memory for context.

SmolAgents integrates these components seamlessly: LLM, tools, parser, system prompt, memory, and error handling.
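To show what the parser component might look like, here is a small sketch that extracts the thought, tool name, and arguments from the illustrative "Thought: ... Action: ..." format quoted above. The regular expression and the `ParsedAction` type are assumptions made for this example; SmolAgents' own parsing may differ.

```python
import re
from typing import NamedTuple, Tuple


class ParsedAction(NamedTuple):
    thought: str
    tool: str
    args: Tuple[str, ...]


# Matches: Thought: <free text> Action: <tool>(<comma-separated args>)
ACTION_RE = re.compile(
    r"Thought:\s*(?P<thought>.*?)\s*Action:\s*(?P<tool>\w+)\((?P<args>.*?)\)",
    re.DOTALL,
)


def parse_action(llm_output: str) -> ParsedAction:
    """Parse one action; raise ValueError so the caller can handle bad output."""
    match = ACTION_RE.search(llm_output)
    if match is None:
        raise ValueError(f"Could not parse an action from: {llm_output!r}")
    args = tuple(a.strip() for a in match.group("args").split(",") if a.strip())
    return ParsedAction(match.group("thought"), match.group("tool"), args)
```

Raising an explicit error on malformed output is where error handling comes in: an agent loop can catch it and add the message to memory so the model can correct its format on the next step.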

SmolAgents Capabilities

Code Agents

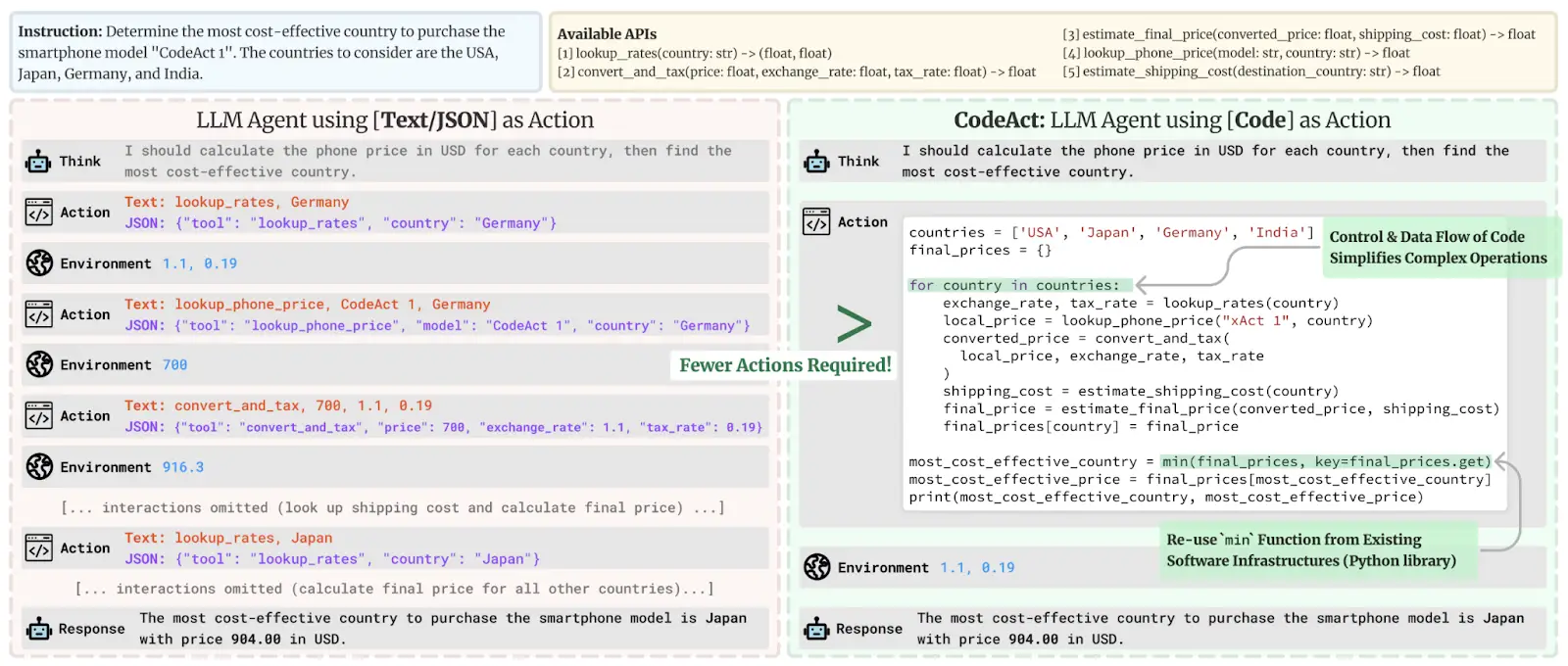

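Expressing tool actions as code rather than JSON tends to work better for several reasons: efficiency (one snippet can do the work of several separate calls), composability (actions can be nested and reused like functions), object management (outputs can be stored in variables), generality (code can express any computation), and compatibility with LLM training data, which already contains plenty of code.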
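The difference is easier to see side by side. The sketch below performs the same task twice, once as a list of JSON tool calls and once as a single code snippet. The `get_price` tool and its canned prices are hypothetical, and the bare `exec` is for illustration only; it is not how SmolAgents executes code.

```python
import json
from typing import Callable, Dict, List


def get_price(ticker: str) -> float:
    # Hypothetical tool with canned data so the example runs offline.
    return {"AAPL": 200.0, "MSFT": 400.0}[ticker]


TOOLS: Dict[str, Callable[..., float]] = {"get_price": get_price}

# JSON-style actions: one tool call per action; comparing the two results
# would need yet another step.
json_actions = [
    '{"tool": "get_price", "args": {"ticker": "AAPL"}}',
    '{"tool": "get_price", "args": {"ticker": "MSFT"}}',
]


def run_json_actions(actions: List[str]) -> List[float]:
    results = []
    for raw in actions:
        call = json.loads(raw)
        results.append(TOOLS[call["tool"]](**call["args"]))
    return results


# Code-style action: a single snippet calls the tool twice, keeps the
# results in a variable, and compares them.
code_action = """
prices = {t: get_price(t) for t in ["AAPL", "MSFT"]}
result = max(prices, key=prices.get)
"""


def run_code_action(source: str) -> str:
    namespace = dict(TOOLS)  # expose only the tools to the snippet
    exec(source, namespace)  # unsafe outside a sandbox; illustration only
    return namespace["result"]
```

The code action composes two calls and a comparison in one step, which is the efficiency and composability argument in miniature. It also shows why the execution environment matters, since running model-written code directly is risky.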

Local Python Interpreter

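By default, the CodeAgent runs generated code in a restricted LocalPythonInterpreter with controlled imports, operation limits, and predefined actions. These restrictions reduce risk but do not amount to a full sandbox.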
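One way to picture "controlled imports" is a static check against an allowlist before any code runs. The sketch below uses Python's standard `ast` module for that. It is an assumption-laden illustration of the idea, not the LocalPythonInterpreter's actual implementation, and the function name `find_disallowed_imports` is made up for this example.

```python
import ast
from typing import Iterable, List


def find_disallowed_imports(source: str, allowed: Iterable[str]) -> List[str]:
    """Return imported module names whose top-level package is not allowed."""
    allowed_set = set(allowed)
    disallowed: List[str] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            # Relative imports have module=None; treat them as disallowed.
            names = [node.module or ""]
        else:
            continue
        for name in names:
            top_level = name.split(".")[0]
            if top_level not in allowed_set:
                disallowed.append(name)
    return disallowed
```

A check like this only catches literal `import` statements. Dynamic imports (for example via `__import__`) slip past it, which is one reason a static allowlist alone should not be treated as a sandbox.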

E2B Code Executor

For enhanced security, SmolAgents integrates with E2B for sandboxed code execution.

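# API as of early 2025; newer smolagents releases may use different names for HfApiModel and use_e2b_executor.
from smolagents import CodeAgent, VisitWebpageTool, HfApiModel

# use_e2b_executor=True runs the generated code in a remote E2B sandbox instead of the local interpreter.
agent = CodeAgent(tools=[VisitWebpageTool()], model=HfApiModel(), additional_authorized_imports=["requests", "markdownify"], use_e2b_executor=True)

agent.run("What was Abraham Lincoln's preferred pet?")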

SmolAgents in Action

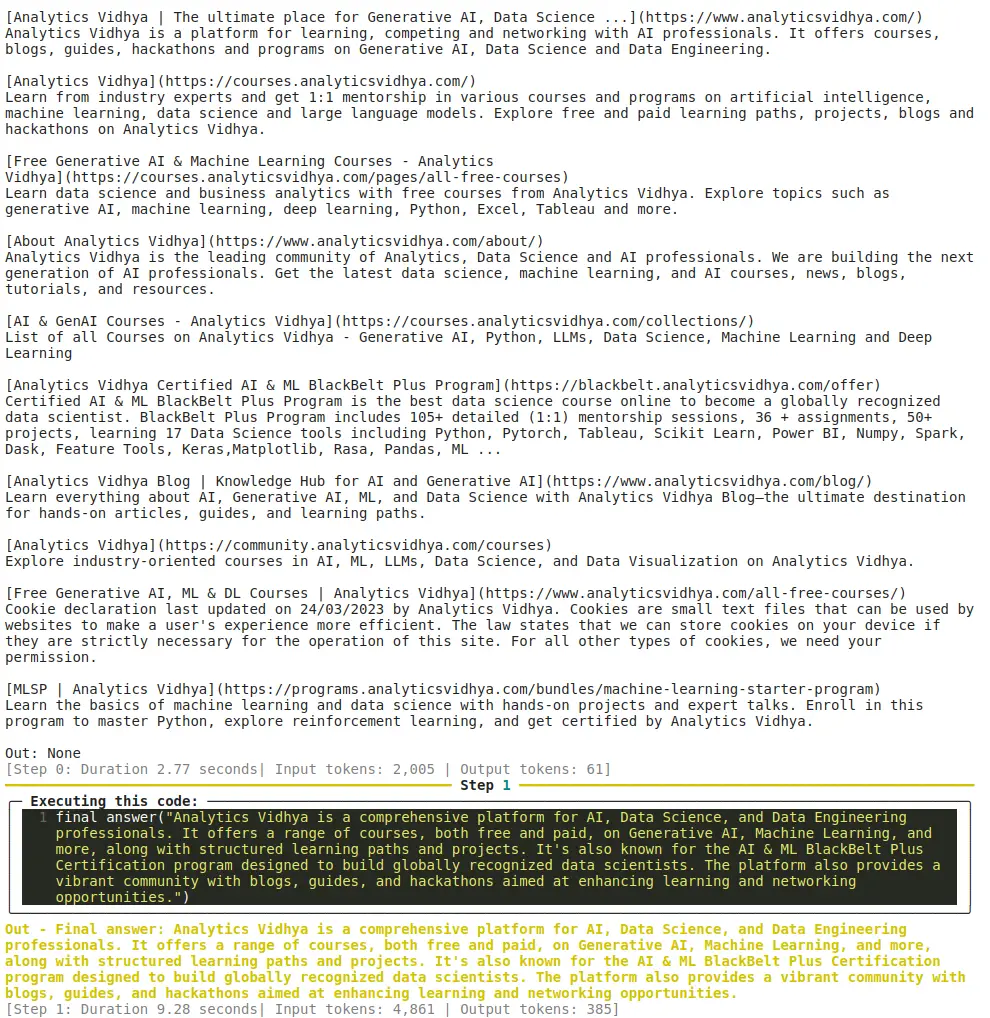

Demo 1: Research Agent

!pip install smolagents

from smolagents import CodeAgent, DuckDuckGoSearchTool, LiteLLMModel

model = LiteLLMModel(model_id="YOUR_MODEL_ID", api_key="YOUR_API_KEY") # Replace YOUR_MODEL_ID and YOUR_API_KEY

agent = CodeAgent(tools=[DuckDuckGoSearchTool()], model=model)

agent.run("Tell me about Analytics Vidhya")

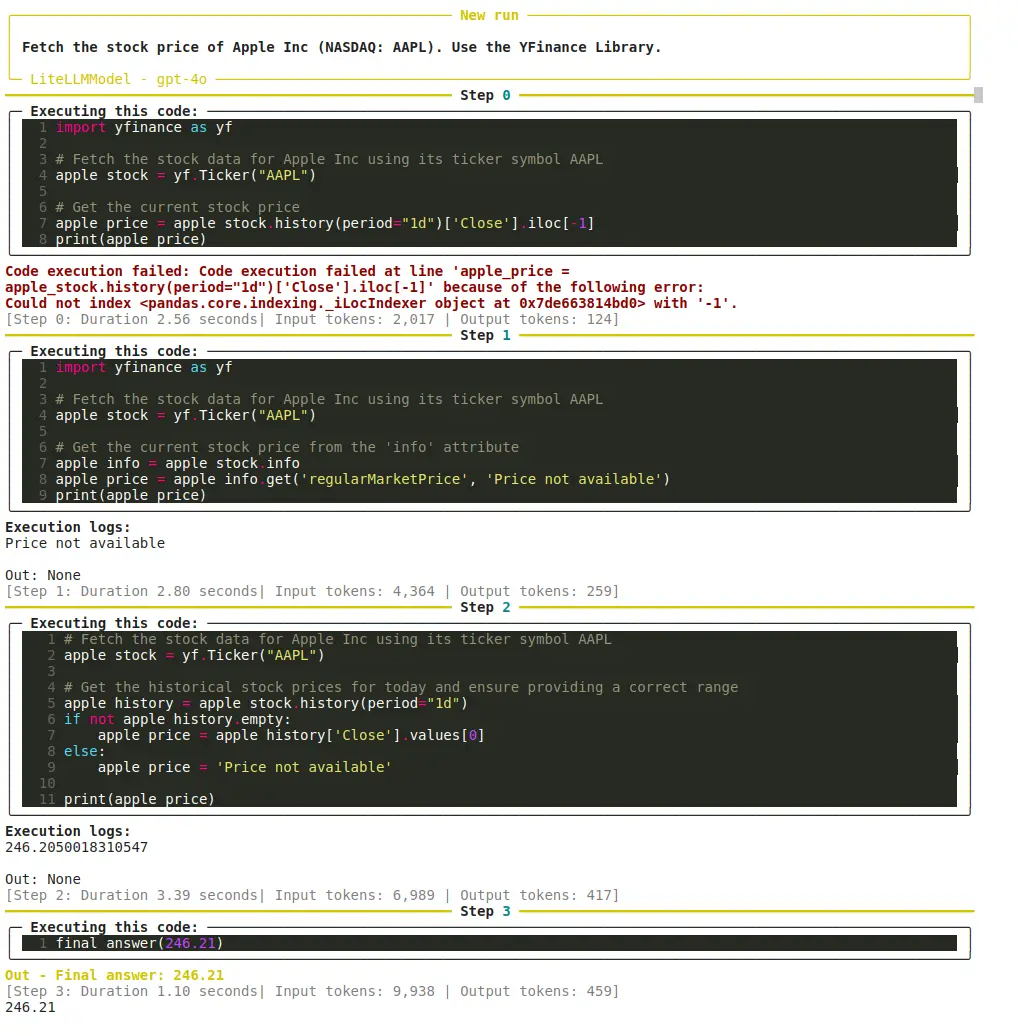

Demo 2: Stock Price Retrieval

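!pip install smolagents yfinance

import yfinance as yf

from smolagents import CodeAgent, DuckDuckGoSearchTool, LiteLLMModel

model = LiteLLMModel(model_id="YOUR_MODEL_ID", api_key="YOUR_API_KEY") # Replace YOUR_MODEL_ID and YOUR_API_KEY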

agent = CodeAgent(tools=[DuckDuckGoSearchTool()], additional_authorized_imports=["yfinance"], model=model)

response = agent.run("Fetch the stock price of Apple Inc (NASDAQ: AAPL). Use the YFinance Library.")

print(response)

Conclusion

SmolAgents simplifies AI agent development. Its key strengths are simplicity, versatility, security, the use of code for tool actions, and its integrated ecosystem. It's a valuable tool for building adaptable and scalable agentic systems.
