Introduction

The data lifecycle starts with generating data and storing it somewhere. Let's call this the early-stage data lifecycle. In this post we'll explore how to automate data ingestion into Airtable using a local workflow. We'll cover setting up a development environment, designing the ingestion process, creating a batch script, and scheduling the workflow, keeping things simple, local, reproducible, and accessible.

First, let's talk about Airtable. Airtable is a powerful and flexible tool that blends the simplicity of a spreadsheet with the structure of a database. I find it perfect for organizing information, managing projects, tracking tasks, and it has a free tier!

Preparing the Environment

Setting up the development environment

We'll develop this project in Python, so launch your favorite IDE and create a virtual environment:

    # from your terminal
    python -m venv <environment_name>
    <environment_name>\Scripts\activate

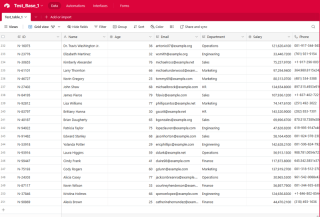

To get started with Airtable, head over to Airtable's website. Once you've signed up for a free account, you'll need to create a new Workspace. Think of a Workspace as a container for all your related tables and data.

Next, create a new Base within your Workspace and add a Table to it. A Table is essentially a spreadsheet where you'll store your data. Define the Fields (columns) in your Table to match the structure of your data.

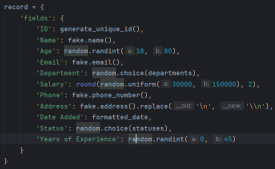

The fields used in this tutorial are a combination of texts, dates, and numbers: ID, Name, Age, Email, Department, Salary, Phone, Address, Date Added, Status, and Years of Experience.

To connect your script to Airtable, you'll need to generate an API Key or Personal Access Token. This key acts as a password, allowing your script to interact with your Airtable data. To generate a key, navigate to your Airtable account settings, find the API section, and follow the instructions to create a new key.

*Remember to keep your API key secure. Avoid sharing it publicly or committing it to public repositories.*

Installing necessary dependencies (Python, libraries, etc.)

Next, create a requirements.txt file and put the following packages in it:

    pyairtable
    schedule
    faker
    python-dotenv

Now run pip install -r requirements.txt to install the required packages.

Organizing the project structure

This step is where we create the project files: .env stores our credentials, autoRecords.py randomly generates data for the defined fields, and ingestData.py inserts the records into Airtable.

Designing the Ingestion Process: Environment Variables

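The .env file holds the Base ID, the table name, and the API key. The Base ID (app...) and the table ID (tbl...) can be read off the table's URL:

    # "https://airtable.com/app########/tbl######/viw####?blocks=show"
    BASE_ID = 'app########'
    TABLE_NAME = 'tbl######'
    API_KEY = '#######'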

Designing the Ingestion Process: Automated Records


Generating Realistic Employee Data for Your Projects

When working on projects that involve employee data, it's often helpful to have a reliable way to generate realistic sample data. Whether you're building an HR management system, an employee directory, or anything in between, having access to robust test data can streamline your development and make your application more resilient.

In this section, we'll explore a Python script that generates random employee records with a variety of relevant fields. This tool can be a valuable asset when you need to populate your application with realistic data quickly and easily.

Generating Unique IDs

The first step in our data generation process is to create unique identifiers for each employee record. This is an important consideration, as your application will likely need a way to uniquely reference each individual employee. Our script includes a simple function to generate these IDs:

    import random


    def generate_unique_id():
        """Generate a Unique ID in the format N-#####"""
        return f"N-{random.randint(10000, 99999)}"

This function returns an ID in the format "N-#####", where the number is a random 5-digit value. Because the value is random rather than tracked, two records can occasionally end up with the same ID. You can customize this format to suit your specific needs.
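If collisions matter for your use case, one option is to remember the IDs already handed out. The sketch below is an illustration rather than part of the original script: make_id_generator and its parameters are hypothetical names, and uniqueness only holds within a single run (it does not check what is already stored in Airtable).

```python
import random


def make_id_generator(prefix="N", digits=5, rng=random):
    """Return a function that yields IDs like N-12345 and never repeats one."""
    low, high = 10 ** (digits - 1), 10 ** digits - 1
    issued = set()

    def next_id():
        # Stop instead of looping forever once every possible ID is used
        if len(issued) > high - low:
            raise RuntimeError("ID space exhausted")
        while True:
            candidate = f"{prefix}-{rng.randint(low, high)}"
            if candidate not in issued:
                issued.add(candidate)
                return candidate

    return next_id


next_id = make_id_generator()
ids = [next_id() for _ in range(1000)]
print(len(ids), len(set(ids)))
```

Swapping generate_unique_id() for next_id() inside the record generator would keep the same "N-#####" shape while ruling out duplicates in a batch.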

Generating Random Employee Records

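Next, let's look at the core function that generates the employee records themselves. The generate_random_records() function takes the number of records to create as input and returns a list of dictionaries, where each dictionary represents an employee with various fields:

    from datetime import datetime, timedelta
    import random

    from faker import Faker

    fake = Faker()


    def generate_random_records(num_records=10):
        """
        Generate random records with reasonable constraints
        :param num_records: Number of records to generate
        :return: List of records formatted for Airtable
        """
        records = []

        # Constants
        departments = ['Sales', 'Engineering', 'Marketing', 'HR', 'Finance', 'Operations']
        statuses = ['Active', 'On Leave', 'Contract', 'Remote']

        for _ in range(num_records):
            # Generate date in the correct format
            random_date = datetime.now() - timedelta(days=random.randint(0, 365))
            formatted_date = random_date.strftime('%Y-%m-%dT%H:%M:%S.000Z')

            record = {
                'fields': {
                    'ID': generate_unique_id(),
                    'Name': fake.name(),
                    'Age': random.randint(18, 80),
                    'Email': fake.email(),
                    'Department': random.choice(departments),
                    'Salary': round(random.uniform(30000, 150000), 2),
                    'Phone': fake.phone_number(),
                    'Address': fake.address().replace('\n', '\\n'),  # Escape newlines
                    'Date Added': formatted_date,
                    'Status': random.choice(statuses),
                    'Years of Experience': random.randint(0, 45)
                }
            }
            records.append(record)

        return records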

This function uses the Faker library to generate realistic-looking data for various employee fields, such as name, email, phone number, and address. It also includes some basic constraints, such as limiting the age range and salary range to reasonable values.

The function returns a list of dictionaries, where each dictionary represents an employee record in a format that is compatible with Airtable.
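One thing to keep in mind is that Age and Years of Experience are drawn independently, so the generator can pair an 18-year-old with 45 years of experience. If you'd like the two to agree, a possible tweak is to draw the age first and cap the experience by it. This is only a sketch: random_age_and_experience is a hypothetical helper, and the assumption that nobody starts working before 18 is mine, not the original script's.

```python
import random

WORKING_AGE = 18      # assumption: nobody starts working before 18
MAX_EXPERIENCE = 45   # same upper bound the generator uses


def random_age_and_experience(rng=random, max_age=80):
    """Draw an age, then an experience value that the age allows."""
    age = rng.randint(WORKING_AGE, max_age)
    experience = rng.randint(0, min(MAX_EXPERIENCE, age - WORKING_AGE))
    return age, experience


age, experience = random_age_and_experience()
print(age, experience)
```

The two values could then be assigned to the 'Age' and 'Years of Experience' fields in place of the two independent random.randint() calls.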

Preparing Data for Airtable

Finally, let's look at the prepare_records_for_airtable() function, which takes the list of employee records and extracts the 'fields' portion of each record. This is the format that Airtable expects for importing data:

    def prepare_records_for_airtable(records):
        """Convert records from nested format to flat format for Airtable"""
        return [record['fields'] for record in records]

This function simplifies the data structure, making it easier to work with when integrating the generated data with Airtable or other systems.

Putting It All Together

To use this data generation tool, we can call the generate_random_records() function with the desired number of records, and then pass the resulting list to the prepare_records_for_airtable() function:

    if __name__ == "__main__":
        records = generate_random_records(2)
        print(records)

        prepared_records = prepare_records_for_airtable(records)
        print(prepared_records)

This will generate 2 random employee records, print them in their original format, and then print the records in the flat format suitable for Airtable.

Run:

    python autoRecords.py

Integrating Generated Data with Airtable

In addition to generating realistic employee data, our script also provides functionality to integrate that data with Airtable.

Setting up the Airtable Connection

Before we can start inserting our generated data into Airtable, we need to establish a connection to the platform. Our script uses the pyairtable library to interact with the Airtable API. We start by loading the necessary environment variables, including the Airtable API key and the Base ID and Table Name where we want to store the data.
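As a minimal sketch of this loading step, assuming the .env keys are named BASE_ID, TABLE_NAME, and API_KEY as in the .env file above: the helper names load_airtable_settings and load_from_dotenv are hypothetical, and load_dotenv() comes from the python-dotenv package installed earlier.

```python
import os

REQUIRED_KEYS = ("BASE_ID", "TABLE_NAME", "API_KEY")


def load_airtable_settings(env=None):
    """Return the Airtable settings, failing early if any are missing."""
    env = os.environ if env is None else env
    missing = [key for key in REQUIRED_KEYS if not env.get(key)]
    if missing:
        raise RuntimeError("Missing settings: " + ", ".join(missing))
    return {key: env[key] for key in REQUIRED_KEYS}


def load_from_dotenv():
    """Copy the .env values into the environment, then collect them."""
    from dotenv import load_dotenv  # third-party: python-dotenv

    load_dotenv()
    return load_airtable_settings()
```

Failing early with the names of the missing keys tends to be easier to debug than an authentication error from the API later on.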

With these credentials, we can then initialize the Airtable API client and get a reference to the specific table we want to work with.
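A sketch of what that initialization might look like with pyairtable, assuming a settings dictionary with the API_KEY, BASE_ID, and TABLE_NAME keys. The get_table name and its api_factory parameter are hypothetical; the parameter exists only so the function can be tried out without network access.

```python
def get_table(settings, api_factory=None):
    """Build a table handle from a dict with API_KEY, BASE_ID and TABLE_NAME."""
    if api_factory is None:
        from pyairtable import Api  # third-party: pyairtable

        api_factory = Api
    api = api_factory(settings["API_KEY"])
    return api.table(settings["BASE_ID"], settings["TABLE_NAME"])
```

In normal use you would call get_table(settings) and let it fall back to pyairtable's Api class.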

Inserting the Generated Data

Now that we have the connection set up, we can use the generate_random_records() function from the previous section to create a batch of employee records, and then insert them into Airtable.

The prep_for_insertion() function is responsible for converting the nested record format returned by generate_random_records() into the flat format expected by the Airtable API. Once the data is prepared, we use the table.batch_create() method to insert the records in a single bulk operation.
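The flatten-then-insert flow described here can be sketched as follows. This is an illustration under assumptions: insert_records is a hypothetical name, the flattening helper mirrors prepare_records_for_airtable() from earlier, and table is expected to be a pyairtable table object (anything with a batch_create() method works for a dry run).

```python
def prepare_records_for_airtable(records):
    """Keep only the 'fields' part of each nested record."""
    return [record["fields"] for record in records]


def insert_records(table, records):
    """Flatten the records and create them with one batch_create call."""
    rows = prepare_records_for_airtable(records)
    created = table.batch_create(rows)
    return len(created)
```

Returning the number of created records gives the caller something simple to log or compare against the number generated.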

Error Handling and Logging

To ensure our integration process is robust and easy to debug, we've also included some basic error handling and logging functionality. If any errors occur during the data insertion process, the script will log the error message to help with troubleshooting.
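Here is a minimal sketch of that kind of error handling, using Python's standard logging module. The function name safe_insert, the logger name, and the log format are all assumptions made for illustration.

```python
import logging

logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s %(levelname)s %(message)s",
)
logger = logging.getLogger("ingestData")


def safe_insert(table, rows):
    """Insert rows and log the outcome. Returns True on success."""
    try:
        created = table.batch_create(rows)
    except Exception:
        # logger.exception records the message together with the traceback
        logger.exception("Failed to insert %d records", len(rows))
        return False
    logger.info("Inserted %d records", len(created))
    return True
```

A caller can turn the return value into an exit status, for example sys.exit(0 if ok else 1), so that a wrapper script checking the exit code can tell when a run failed.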

By combining the powerful data generation capabilities of our earlier script with the integration features shown here, you can quickly and reliably populate your Airtable-based applications with realistic employee data.

Scheduling Automated Data Ingestion with a Batch Script

To make the data ingestion process fully automated, we can create a batch script (.bat file) that will run the Python script on a regular schedule. This allows you to set up the data ingestion to happen automatically without manual intervention.

Here's an example of a batch script that can be used to run the ingestData.py script:

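    @echo off
    echo Starting Airtable Automated Data Ingestion Service...
    cd /d C:\Users\buasc\PycharmProjects\scrapEngineering
    call C:\Users\buasc\PycharmProjects\scrapEngineering\venv_airtable\Scripts\activate.bat
    python ingestData.py
    if %ERRORLEVEL% NEQ 0 (
        rem illustrative wording for the error message
        echo An error occurred while running ingestData.py
        pause
    )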

Let's break down the key parts of this script:

- @echo off: This line suppresses the printing of each command to the console, making the output cleaner.

- echo Starting Airtable Automated Data Ingestion Service...: This line prints a message to the console, indicating that the script has started.

- cd /d C:\Users\buasc\PycharmProjects\scrapEngineering: This line changes the current working directory to the project directory where the ingestData.py script is located.

- call C:\Users\buasc\PycharmProjects\scrapEngineering\venv_airtable\Scripts\activate.bat: This line activates the virtual environment where the necessary Python dependencies are installed.

- python ingestData.py: This line runs the ingestData.py Python script.

- if %ERRORLEVEL% NEQ 0 (... ): This block checks if the Python script encountered an error (i.e., if the ERRORLEVEL is not zero). If an error occurred, it prints an error message and pauses the script, allowing you to investigate the issue.

To schedule this batch script to run automatically, you can use the Windows Task Scheduler. Here's a brief overview of the steps:

- Open the Start menu and search for "Task Scheduler", or press Windows + R and launch it from the Run dialog.

- In the Task Scheduler, create a new task and give it a descriptive name (e.g., "Airtable Data Ingestion").

- In the "Actions" tab, add a new action and specify the path to your batch script (e.g., C:\Users\buasc\PycharmProjects\scrapEngineering\ingestData.bat).

- Configure the schedule for when you want the script to run, such as daily, weekly, or monthly.

- Save the task and enable it.

Now, the Windows Task Scheduler will automatically run the batch script at the specified intervals, ensuring that your Airtable data is updated regularly without manual intervention.
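requirements.txt also installs the schedule package, which offers a pure-Python alternative to Task Scheduler. Here's a minimal sketch, assuming a job function named run_ingestion (a hypothetical stand-in for whatever ingestData.py does) and a five-minute interval picked only for illustration:

```python
import time


def run_ingestion():
    """Stand-in for the real job (generate records, then insert them)."""
    print("Ingesting a new batch of records...")


def run_scheduler(job, every_minutes=5, poll_seconds=1):
    """Run job every N minutes until the process is stopped."""
    import schedule  # third-party: schedule

    schedule.every(every_minutes).minutes.do(job)
    while True:
        schedule.run_pending()
        time.sleep(poll_seconds)
```

Calling run_scheduler(run_ingestion) blocks until the process is stopped, so unlike Task Scheduler it only keeps running while the script itself stays alive.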

Conclusion

An automated ingestion workflow like this can be an invaluable tool for testing, development, and even demonstration purposes.

Throughout this guide, you've learnt how to set up the necessary development environment, design an ingestion process, create a batch script to automate the task, and schedule the workflow for unattended execution. You now have a solid understanding of how to use local automation to streamline data ingestion into Airtable.

Now that you've set up the automated data ingestion process, there are many ways you can build upon this foundation and unlock even more value from your Airtable data. I encourage you to experiment with the code, explore new use cases, and share your experiences with the community.

Here are some ideas to get you started:

- Customize the Data Generation

- Leverage the Ingested Data [Markdown-based exploratory data analysis (EDA), Build interactive dashboards or visualizations using tools like Tableau, Power BI, or Plotly, Experiment with machine learning workflows (predicting employee turnover or identifying top performers)]

- Integrate with Other Systems [cloud functions, webhooks, or data warehouses]

The possibilities are endless! I'm excited to see how you build upon this automated data ingestion process and unlock new insights and value from your Airtable data. Don't hesitate to experiment, collaborate, and share your progress. I'm here to support you along the way.

See the full code at https://github.com/AkanimohOD19A/scheduling_airtable_insertion; a full video tutorial is on the way.
