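AI video generation is one of the hottest fields right now. University labs, the AI labs of major internet companies, and startups have all joined the race. The release of video generation models such as Pika, Gen-2, Show-1, VideoCrafter, ModelScope, SEINE, LaVie, and VideoLDM has been especially eye-catching.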

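Many readers are probably curious about the following questions:

- Which video generation model is actually the best?

- What is each model good at?

- Which open problems in AI video generation still deserve attention?

To answer these questions, we are releasing VBench, a comprehensive evaluation framework for video generation models. It is designed to show users the strengths, weaknesses, and characteristics of different video models.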

- Paper: https://arxiv.org/abs/2311.17982

- Code: https://github.com/Vchitect/VBench

- Project page: https://vchitect.github.io/VBench-project/

- Paper title: VBench: Comprehensive Benchmark Suite for Video Generative Models

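VBench evaluates video generation results comprehensively and in fine detail, and its evaluations are consistent with human perception, saving time and effort.

- VBench covers 16 hierarchical, disentangled evaluation dimensions

- VBench open-sources a Prompt List system for evaluating text-to-video generation

- The evaluation method for each VBench dimension is aligned with human perception and judgment

- VBench offers insights from multiple perspectives to support future work on AI video generation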

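AI video generation models: evaluation results

Open-source AI video generation models

The performance of the open-source AI video generation models on VBench is shown below.

Among the 6 models above, VideoCrafter-1.0 and Show-1 hold a relative advantage in most dimensions.

Video generation models from startups

VBench currently provides evaluation results for the models of two startups, Gen-2 and Pika.

Performance of Gen-2 and Pika on VBench. In the radar chart, we add VideoCrafter-1.0 and Show-1 as references for a clearer visual comparison, and normalize the results in each dimension to between 0.3 and 0.8.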
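The normalization mentioned above can be sketched as a per-dimension linear rescaling. This is a minimal sketch that assumes min-max scaling across the compared models; the article only states the target range of 0.3 to 0.8, not the exact formula. The function name `rescale`, the model names, and the sample scores are all hypothetical.

```python
def rescale(scores, low=0.3, high=0.8):
    """Linearly map the raw scores of one dimension into [low, high].

    Assumption: min-max scaling across the compared models. The article
    gives only the target range, so this formula is illustrative.
    """
    smallest, largest = min(scores.values()), max(scores.values())
    if largest == smallest:
        # All models tie on this dimension: place them at the midpoint.
        return {name: (low + high) / 2 for name in scores}
    span = high - low
    return {
        name: low + span * (score - smallest) / (largest - smallest)
        for name, score in scores.items()
    }


# Hypothetical raw scores for a single dimension (not VBench results).
raw = {"model_a": 0.20, "model_b": 0.50, "model_c": 0.80}
normalized = rescale(raw)
```

With this kind of rescaling the weakest model on a dimension sits at the inner ring of the radar chart and the strongest at the outer ring, so only the relative ordering within a dimension is readable from the chart, not the absolute score.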


Performance of Gen-2 and Pika on VBench. We include the numerical results of VideoCrafter-1.0 and Show-1 as reference.

Gen-2 and Pika have a clear advantage in Video Quality, specifically in the dimensions related to temporal consistency (Temporal Consistency) and single-frame quality (Aesthetic Quality and Imaging Quality). For semantic consistency with the user's input prompt (for example Human Action and Appearance Style), the open-source models do better in some dimensions.

Video generation models vs. image generation models

Video generation models vs. image generation models. SD1.4, SD2.1, and SDXL are image generation models.

Performance of video generation models across 8 scene categories

The evaluation results of different models on 8 content categories are shown below.

VBench is now open source and can be installed with one click

VBench is now fully open source and supports one-click installation. Everyone is welcome to try it out, test the models they are interested in, and help advance the video generation community.

Open-source repository: https://github.com/Vchitect/VBench

We have also open-sourced a series of Prompt Lists at https://github.com/Vchitect/VBench/tree/master/prompts, including benchmarks for evaluating different capability dimensions and benchmarks covering different scene content.

The word cloud on the left shows the distribution of high-frequency words in our Prompt Suites; the chart on the right shows the number of prompts per dimension and category.

Is VBench accurate?

For each dimension, we calculated the correlation between the VBench evaluation results and human evaluation results to verify that our method is consistent with human perception. In the figure below, the horizontal axis shows the human evaluation results in each dimension, and the vertical axis shows the results of VBench's automatic evaluation. Our method is highly aligned with human perception in all dimensions.
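A correlation check of this kind can be sketched as follows. The article does not say which coefficient is used, so this sketch assumes Spearman rank correlation, implemented with the standard library only. The helper names and the sample numbers are hypothetical and are not VBench results.

```python
def ranks(values):
    """Return 1-based average ranks, so tied values share the same rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; inputs must not be constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(ranks(x), ranks(y))


# Hypothetical per-model values for one dimension (not VBench results).
human_preference = [0.10, 0.35, 0.50, 0.90]
automatic_score = [0.42, 0.47, 0.61, 0.58]
rho = spearman(human_preference, automatic_score)
```

A rank-based coefficient is a reasonable choice here because human judgments and automatic scores live on different scales; what matters is whether both order the models the same way.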

Insights from VBench for AI video generation

VBench can do more than evaluate existing models. More importantly, it can reveal problems in different models, providing valuable insights for the future development of AI video generation.

"Temporal coherence" and "dynamic degree": improve both rather than choosing one

We found a trade-off between temporal coherence (for example Subject Consistency, Background Consistency, and Motion Smoothness) and the amplitude of motion in the video (Dynamic Degree). For example, Show-1 and VideoCrafter-1.0 perform very well on background consistency and motion smoothness but score lower on dynamic degree; this may be because a generated video that barely moves more easily appears temporally coherent. VideoCrafter-0.9, on the other hand, is weaker on the dimensions related to temporal consistency but scores high on Dynamic Degree.

This shows that achieving temporal coherence and a high dynamic degree at the same time is indeed difficult. Future work should not focus on improving only one of them; improving both together is what makes progress meaningful.

Evaluate by scene content to explore the potential of each model

Some models perform very differently across categories. For example, in aesthetic quality, CogVideo performs well in the "Food" category but scores lower in the "LifeStyle" category. If the training data were adjusted, could CogVideo's aesthetic quality in the "LifeStyle" category be improved, thereby raising the model's overall aesthetic quality?

This also tells us that when evaluating video generation models, we need to look at performance under different categories or topics, explore the upper limit of a model in a given capability dimension, and then specifically improve the scene categories that hold it back.
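The per-category analysis described above can be sketched as a small helper that flags the categories lagging furthest behind a model's best category. This is an illustrative sketch, not part of VBench: the function name, the `margin` threshold, and the scores are hypothetical, and only the category names come from the article.

```python
def lagging_categories(scores_by_category, margin=0.05):
    """Return categories scoring at least `margin` below the best category.

    The result is sorted from the weakest category upward, so the first
    entry is the most promising target for improvement.
    """
    best = max(scores_by_category.values())
    lagging = [
        category
        for category, score in scores_by_category.items()
        if best - score >= margin
    ]
    return sorted(lagging, key=lambda category: scores_by_category[category])


# Hypothetical aesthetic-quality scores for one model (not VBench results).
aesthetic = {"Food": 0.62, "Vehicle": 0.60, "LifeStyle": 0.48, "Human": 0.51}
targets = lagging_categories(aesthetic)
```

Treating the best category as an estimate of the model's upper limit in that dimension makes the gap to each other category a rough measure of how much headroom targeted data or training changes might recover.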

Categories with complex motion: poor spatiotemporal performance

Categories with high spatial complexity score relatively low in the aesthetic quality dimension. For example, the "LifeStyle" category places high demands on the layout of complex elements in space, and the "Human" category is challenging because it requires generating articulated structures.

Categories with complex temporal behavior, such as the "Human" category, which usually involves complex movements, and the "Vehicle" category, which often involves fast motion, score relatively low in all tested dimensions. This shows that current models still have deficiencies in temporal modeling. These limitations may lead to spatial blurring and distortion, resulting in unsatisfactory video quality in both time and space.

Hard-to-generate categories: little benefit from more data

We computed statistics on the commonly used video dataset WebVid-10M and found that about 26% of the data is related to "Human", the highest share among the eight categories we counted. However, in the evaluation results, "Human" is one of the worst-performing of the eight categories.

This suggests that for a complex category like "Human", simply increasing the amount of data may not bring significant improvements. One potential approach is to guide the model's learning by introducing "Human"-related prior knowledge or control signals, such as skeletons.

Million-scale datasets: data quality takes precedence over data quantity

Although the "Food" category makes up only 11% of WebVid-10M, it almost always has the highest aesthetic quality score in the evaluation. We therefore analyzed the aesthetic quality of different content categories in the WebVid-10M dataset and found that the "Food" category also has the highest aesthetic score in WebVid-10M itself.

This suggests that, once a dataset already contains millions of samples, filtering for or improving data quality helps more than adding data.
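As a rough illustration of quality filtering, the sketch below keeps only clips whose aesthetic score clears a threshold. The article does not describe a filtering procedure, so the record layout, the threshold value, and the scores are all hypothetical.

```python
def filter_by_quality(clips, min_aesthetic):
    """Keep only the clips whose aesthetic score meets the threshold."""
    return [clip for clip in clips if clip["aesthetic"] >= min_aesthetic]


# Hypothetical clip records with precomputed aesthetic scores.
clips = [
    {"id": "clip_001", "category": "Food", "aesthetic": 0.71},
    {"id": "clip_002", "category": "Human", "aesthetic": 0.38},
    {"id": "clip_003", "category": "Human", "aesthetic": 0.55},
]
kept = filter_by_quality(clips, min_aesthetic=0.5)
kept_ids = [clip["id"] for clip in kept]
```

A fixed threshold shrinks low-scoring categories more than high-scoring ones, so in practice the category balance of the filtered set would also need checking.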

Room for improvement: accurately generating multiple objects and the relationships between them

Current video generation models still cannot match image generation models (especially SDXL) on "Multiple Objects" and "Spatial Relationship", which highlights the importance of improving compositional ability. Compositional ability refers to whether a model can accurately show multiple objects in a generated video, along with the spatial and interactive relationships between them.

Potential solutions to this problem may include:

- Data labeling: construct video datasets that provide clear descriptions of multiple objects, as well as of the spatial relationships and interactions between them.

- Add intermediate modalities or modules to the video generation process to help control the composition and spatial position of objects.

- Use a better text encoder, which would also have a considerable effect on the model's compositional generation ability.

- Take an indirect route: hand the object composition problem that T2V cannot handle well to T2I, and generate videos through T2I followed by I2V. This approach may also be effective for many other video generation problems.

以上是AI視訊生成框架測試競爭:Pika、Gen-2、ModelScope、SEINE,誰能勝出?的詳細內容。更多資訊請關注PHP中文網其他相關文章!

AI內部部署的隱藏危險:治理差距和災難性風險Apr 28, 2025 am 11:12 AM

AI內部部署的隱藏危險:治理差距和災難性風險Apr 28, 2025 am 11:12 AMApollo Research的一份新報告顯示,先進的AI系統的不受檢查的內部部署構成了重大風險。 在大型人工智能公司中缺乏監督,普遍存在,允許潛在的災難性結果

構建AI測謊儀Apr 28, 2025 am 11:11 AM

構建AI測謊儀Apr 28, 2025 am 11:11 AM傳統測謊儀已經過時了。依靠腕帶連接的指針,打印出受試者生命體徵和身體反應的測謊儀,在識破謊言方面並不精確。這就是為什麼測謊結果通常不被法庭採納的原因,儘管它曾導致許多無辜者入獄。 相比之下,人工智能是一個強大的數據引擎,其工作原理是全方位觀察。這意味著科學家可以通過多種途徑將人工智能應用於尋求真相的應用中。 一種方法是像測謊儀一樣分析被審問者的生命體徵反應,但採用更詳細、更精確的比較分析。 另一種方法是利用語言標記來分析人們實際所說的話,並運用邏輯和推理。 俗話說,一個謊言會滋生另一個謊言,最終

AI是否已清除航空航天行業的起飛?Apr 28, 2025 am 11:10 AM

AI是否已清除航空航天行業的起飛?Apr 28, 2025 am 11:10 AM航空航天業是創新的先驅,它利用AI應對其最複雜的挑戰。 現代航空的越來越複雜性需要AI的自動化和實時智能功能,以提高安全性,降低操作

觀看北京的春季機器人比賽Apr 28, 2025 am 11:09 AM

觀看北京的春季機器人比賽Apr 28, 2025 am 11:09 AM機器人技術的飛速發展為我們帶來了一個引人入勝的案例研究。 來自Noetix的N2機器人重達40多磅,身高3英尺,據說可以後空翻。 Unitree公司推出的G1機器人重量約為N2的兩倍,身高約4英尺。比賽中還有許多體型更小的類人機器人參賽,甚至還有一款由風扇驅動前進的機器人。 數據解讀 這場半程馬拉松吸引了超過12,000名觀眾,但只有21台類人機器人參賽。儘管政府指出參賽機器人賽前進行了“強化訓練”,但並非所有機器人均完成了全程比賽。 冠軍——由北京類人機器人創新中心研發的Tiangong Ult

鏡子陷阱:人工智能倫理和人類想像力的崩潰Apr 28, 2025 am 11:08 AM

鏡子陷阱:人工智能倫理和人類想像力的崩潰Apr 28, 2025 am 11:08 AM人工智能以目前的形式並不是真正智能的。它擅長模仿和完善現有數據。 我們不是在創造人工智能,而是人工推斷 - 處理信息的機器,而人類則

新的Google洩漏揭示了方便的Google照片功能更新Apr 28, 2025 am 11:07 AM

新的Google洩漏揭示了方便的Google照片功能更新Apr 28, 2025 am 11:07 AM一份報告發現,在谷歌相冊Android版7.26版本的代碼中隱藏了一個更新的界面,每次查看照片時,都會在屏幕底部顯示一行新檢測到的面孔縮略圖。 新的面部縮略圖缺少姓名標籤,所以我懷疑您需要單獨點擊它們才能查看有關每個檢測到的人員的更多信息。就目前而言,此功能除了谷歌相冊已在您的圖像中找到這些人之外,不提供任何其他信息。 此功能尚未上線,因此我們不知道谷歌將如何準確地使用它。谷歌可以使用縮略圖來加快查找所選人員的更多照片的速度,或者可能用於其他目的,例如選擇要編輯的個人。我們拭目以待。 就目前而言

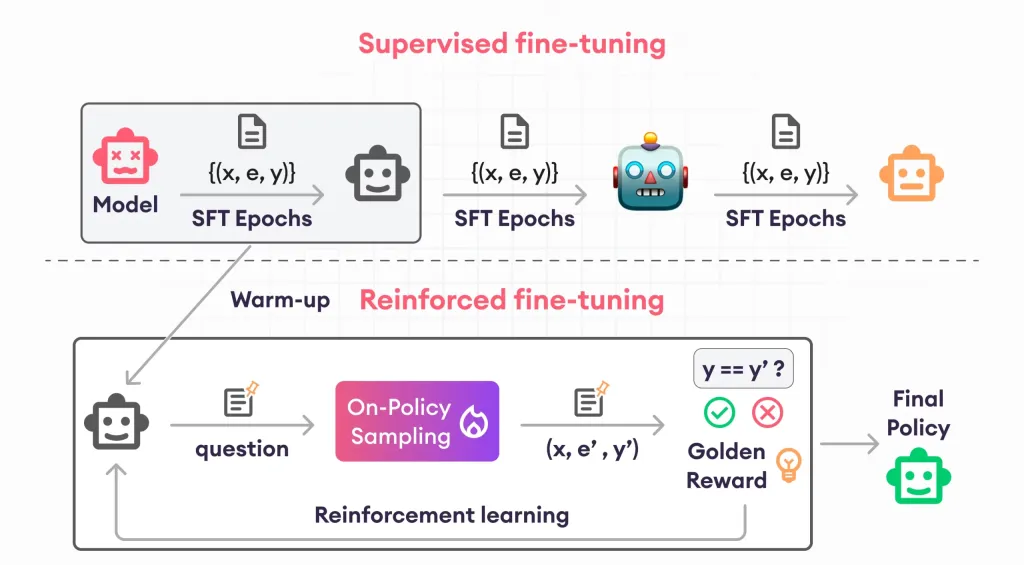

加固芬特的指南 - 分析VidhyaApr 28, 2025 am 09:30 AM

加固芬特的指南 - 分析VidhyaApr 28, 2025 am 09:30 AM增強者通過教授模型根據人類反饋進行調整來震撼AI的開發。它將監督的學習基金會與基於獎勵的更新融合在一起,使其更安全,更準確,真正地幫助

讓我們跳舞:結構化運動以微調我們的人類神經網Apr 27, 2025 am 11:09 AM

讓我們跳舞:結構化運動以微調我們的人類神經網Apr 27, 2025 am 11:09 AM科學家已經廣泛研究了人類和更簡單的神經網絡(如秀麗隱桿線蟲中的神經網絡),以了解其功能。 但是,出現了一個關鍵問題:我們如何使自己的神經網絡與新穎的AI一起有效地工作

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

Dreamweaver CS6

視覺化網頁開發工具

SublimeText3 Linux新版

SublimeText3 Linux最新版

DVWA

Damn Vulnerable Web App (DVWA) 是一個PHP/MySQL的Web應用程序,非常容易受到攻擊。它的主要目標是成為安全專業人員在合法環境中測試自己的技能和工具的輔助工具,幫助Web開發人員更好地理解保護網路應用程式的過程,並幫助教師/學生在課堂環境中教授/學習Web應用程式安全性。 DVWA的目標是透過簡單直接的介面練習一些最常見的Web漏洞,難度各不相同。請注意,該軟體中

MantisBT

Mantis是一個易於部署的基於Web的缺陷追蹤工具,用於幫助產品缺陷追蹤。它需要PHP、MySQL和一個Web伺服器。請查看我們的演示和託管服務。

Atom編輯器mac版下載

最受歡迎的的開源編輯器