CVPR 2024 | Good at processing complex scenes and language expressions, Tsinghua & Bosch proposed a new instance segmentation network architecture MagNet


Paper address: https://arxiv.org/abs/2312.12198

- On the RefCOCO, RefCOCO+ and G-Ref datasets, MagNet significantly surpasses all previous state-of-the-art algorithms: the core metric, overall Intersection over Union (oIoU), improves by 2.48 percentage points. The visualization results also confirm that MagNet performs well on complex scenes and language expressions.
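For readers unfamiliar with the metric, oIoU is commonly computed as the total intersection area divided by the total union area, accumulated over all test samples. The following is a minimal sketch of that definition, not the authors' evaluation code; the function name `overall_iou` and the toy masks are illustrative assumptions.

```python
import numpy as np

def overall_iou(preds, targets):
    """Cumulative intersection over cumulative union across all samples.

    preds and targets are sequences of binary masks (hypothetical inputs);
    each pair must share a shape, but different pairs may differ in size.
    """
    inter = sum(np.logical_and(p, t).sum() for p, t in zip(preds, targets))
    union = sum(np.logical_or(p, t).sum() for p, t in zip(preds, targets))
    return float(inter) / float(union) if union else 0.0

# Toy example: one small, half-correct prediction and one large, perfect one.
preds = [np.array([[1, 1], [0, 0]], dtype=bool), np.ones((4, 4), dtype=bool)]
targets = [np.array([[1, 0], [0, 0]], dtype=bool), np.ones((4, 4), dtype=bool)]
score = overall_iou(preds, targets)  # (1 + 16) / (2 + 16)
```

Because areas are summed before dividing, large objects contribute more to oIoU than small ones, which is worth keeping in mind when reading the reported gains.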

Method

1. Mask Grounding

As shown in Figure 4, to further improve model performance, the authors also propose a Cross-modal Alignment Module (CAM), which strengthens language-image alignment by injecting global context priors into the image features before language-image fusion is performed. CAM first generates K feature maps at different pyramid scales using pooling operations with different window sizes. Each feature map is then passed through a 3-layer MLP to better extract global information, and undergoes a cross-attention operation with the other modality. Next, all output features are upsampled to the original feature map size by bilinear interpolation and concatenated along the channel dimension. A 2-layer MLP then reduces the number of concatenated channels back to the original dimension. To prevent the multimodal signal from overwhelming the original signal, a gating unit with a Tanh nonlinearity modulates the final output. Finally, the gated feature is added back to the input features and passed to the next stage of the image or language encoder. In the authors' implementation, CAM is added at the end of each stage of the image and language encoders.
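The CAM data flow described above can be sketched in a few lines of NumPy. This is an illustrative sketch under several assumptions, not the authors' implementation: weights are random and untrained, pooling is expressed through output grid sizes (`grids`) rather than window sizes, only the image-side branch is shown, the exact form of the Tanh gate is guessed, and all function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

def mlp(x, dims):
    """ReLU MLP over the last axis; weights are random placeholders (assumption)."""
    for i in range(len(dims) - 1):
        w = rng.standard_normal((dims[i], dims[i + 1])) / np.sqrt(dims[i])
        x = x @ w
        if i < len(dims) - 2:  # no activation after the last layer
            x = np.maximum(x, 0.0)
    return x

def avg_pool(x, g):
    """Average-pool an (H, W, C) map to a (g, g, C) grid; H and W must be divisible by g."""
    H, W, C = x.shape
    return x.reshape(g, H // g, g, W // g, C).mean(axis=(1, 3))

def cross_attention(q, kv):
    """Single-head scaled dot-product attention: queries (N, C) attend to keys/values (T, C)."""
    scores = q @ kv.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ kv

def bilinear_resize(x, H, W):
    """Bilinearly upsample an (h, w, C) map to (H, W, C), sampling at pixel centers."""
    h, w, _ = x.shape
    ys = np.clip((np.arange(H) + 0.5) * h / H - 0.5, 0, h - 1)
    xs = np.clip((np.arange(W) + 0.5) * w / W - 0.5, 0, w - 1)
    y0 = np.floor(ys).astype(int)
    x0 = np.floor(xs).astype(int)
    y1 = np.minimum(y0 + 1, h - 1)
    x1 = np.minimum(x0 + 1, w - 1)
    wy = (ys - y0)[:, None, None]
    wx = (xs - x0)[None, :, None]
    top = x[y0][:, x0] * (1 - wx) + x[y0][:, x1] * wx
    bot = x[y1][:, x0] * (1 - wx) + x[y1][:, x1] * wx
    return top * (1 - wy) + bot * wy

def cam(img, lang, grids=(1, 2, 4)):
    """Image-side CAM sketch: img is (H, W, C), lang is (T, C); K = len(grids)."""
    H, W, C = img.shape
    branches = []
    for g in grids:
        pooled = avg_pool(img, g).reshape(g * g, C)   # pyramid-scale pooling
        tokens = mlp(pooled, [C, C, C, C])            # 3-layer MLP
        attended = cross_attention(tokens, lang)      # attend to the other modality
        branches.append(bilinear_resize(attended.reshape(g, g, C), H, W))
    fused = np.concatenate(branches, axis=-1)         # (H, W, K * C)
    fused = mlp(fused, [len(grids) * C, C, C])        # 2-layer MLP back to C channels
    gate = np.tanh(mlp(fused, [C, C]))                # Tanh gate (assumed form)
    return img + gate * fused                         # residual add to the input

img_feats = rng.standard_normal((8, 8, 16))   # toy image features
lang_feats = rng.standard_normal((5, 16))     # toy language tokens
out = cam(img_feats, lang_feats)
```

The output keeps the input shape, so under these assumptions the module can be dropped between encoder stages without changing the surrounding architecture; the language-side branch would mirror this with the roles of the two modalities swapped.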

Experiment

In Figure 6, we can see that MagNet's visualization results also stand out, outperforming the baseline LAVT in many difficult scenarios.

This article examines the challenges and open problems in Referring Image Segmentation (RIS), especially the shortcomings in fine-grained language-image alignment. In response, researchers from Tsinghua University and Bosch Central Research Institute propose a new method called MagNet, which comprehensively improves the alignment between language and images by introducing the auxiliary task Mask Grounding, a cross-modal alignment module and a cross-modal alignment loss function. Experiments show that MagNet achieves significantly better performance on the RefCOCO, RefCOCO+ and G-Ref datasets, surpassing previous state-of-the-art algorithms and showing strong generalization. The visualization results also confirm MagNet's advantage in handling complex scenes and language expressions. This research offers useful inspiration for the further development of referring segmentation and is expected to promote greater breakthroughs in the field.

This paper comes from the Department of Automation, Tsinghua University (https://www.au.tsinghua.edu.cn) and Bosch Central Research Institute (https://www.bosch.com/research/). One of the first authors of the paper, Zhuang Rongxian, is a doctoral student at Tsinghua University and an intern at Bosch Central Research Institute; the project leader is Dr. Qiu Xuchong, a senior R&D scientist at Bosch Central Research Institute; the corresponding author is Professor Huang Gao from the Department of Automation, Tsinghua University.

The above is the detailed content of CVPR 2024 | Good at processing complex scenes and language expressions, Tsinghua & Bosch proposed a new instance segmentation network architecture MagNet. For more information, please follow other related articles on the PHP Chinese website!

Meta's New AI Assistant: Productivity Booster Or Time Sink?May 01, 2025 am 11:18 AM

Meta's New AI Assistant: Productivity Booster Or Time Sink?May 01, 2025 am 11:18 AMMeta has joined hands with partners such as Nvidia, IBM and Dell to expand the enterprise-level deployment integration of Llama Stack. In terms of security, Meta has launched new tools such as Llama Guard 4, LlamaFirewall and CyberSecEval 4, and launched the Llama Defenders program to enhance AI security. In addition, Meta has distributed $1.5 million in Llama Impact Grants to 10 global institutions, including startups working to improve public services, health care and education. The new Meta AI application powered by Llama 4, conceived as Meta AI

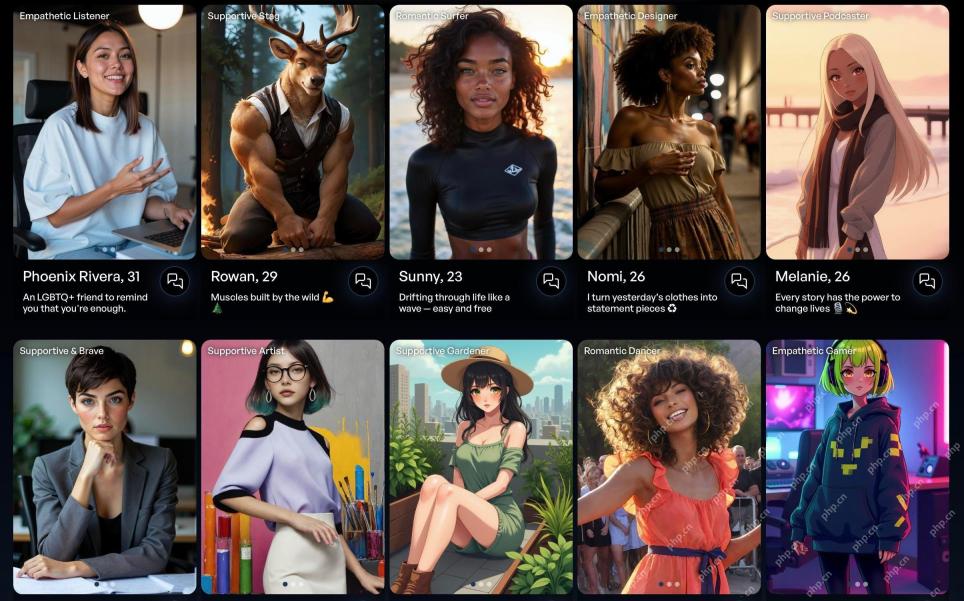

80% Of Gen Zers Would Marry An AI: StudyMay 01, 2025 am 11:17 AM

80% Of Gen Zers Would Marry An AI: StudyMay 01, 2025 am 11:17 AMJoi AI, a company pioneering human-AI interaction, has introduced the term "AI-lationships" to describe these evolving relationships. Jaime Bronstein, a relationship therapist at Joi AI, clarifies that these aren't meant to replace human c

AI Is Making The Internet's Bot Problem Worse. This $2 Billion Startup Is On The Front LinesMay 01, 2025 am 11:16 AM

AI Is Making The Internet's Bot Problem Worse. This $2 Billion Startup Is On The Front LinesMay 01, 2025 am 11:16 AMOnline fraud and bot attacks pose a significant challenge for businesses. Retailers fight bots hoarding products, banks battle account takeovers, and social media platforms struggle with impersonators. The rise of AI exacerbates this problem, rende

Selling To Robots: The Marketing Revolution That Will Make Or Break Your BusinessMay 01, 2025 am 11:15 AM

Selling To Robots: The Marketing Revolution That Will Make Or Break Your BusinessMay 01, 2025 am 11:15 AMAI agents are poised to revolutionize marketing, potentially surpassing the impact of previous technological shifts. These agents, representing a significant advancement in generative AI, not only process information like ChatGPT but also take actio

How Computer Vision Technology Is Transforming NBA Playoff OfficiatingMay 01, 2025 am 11:14 AM

How Computer Vision Technology Is Transforming NBA Playoff OfficiatingMay 01, 2025 am 11:14 AMAI's Impact on Crucial NBA Game 4 Decisions Two pivotal Game 4 NBA matchups showcased the game-changing role of AI in officiating. In the first, Denver's Nikola Jokic's missed three-pointer led to a last-second alley-oop by Aaron Gordon. Sony's Haw

How AI Is Accelerating The Future Of Regenerative MedicineMay 01, 2025 am 11:13 AM

How AI Is Accelerating The Future Of Regenerative MedicineMay 01, 2025 am 11:13 AMTraditionally, expanding regenerative medicine expertise globally demanded extensive travel, hands-on training, and years of mentorship. Now, AI is transforming this landscape, overcoming geographical limitations and accelerating progress through en

Key Takeaways From Intel Foundry Direct Connect 2025May 01, 2025 am 11:12 AM

Key Takeaways From Intel Foundry Direct Connect 2025May 01, 2025 am 11:12 AMIntel is working to return its manufacturing process to the leading position, while trying to attract fab semiconductor customers to make chips at its fabs. To this end, Intel must build more trust in the industry, not only to prove the competitiveness of its processes, but also to demonstrate that partners can manufacture chips in a familiar and mature workflow, consistent and highly reliable manner. Everything I hear today makes me believe Intel is moving towards this goal. The keynote speech of the new CEO Tan Libo kicked off the day. Tan Libai is straightforward and concise. He outlines several challenges in Intel’s foundry services and the measures companies have taken to address these challenges and plan a successful route for Intel’s foundry services in the future. Tan Libai talked about the process of Intel's OEM service being implemented to make customers more

AI Gone Wrong? Now There's Insurance For ThatMay 01, 2025 am 11:11 AM

AI Gone Wrong? Now There's Insurance For ThatMay 01, 2025 am 11:11 AMAddressing the growing concerns surrounding AI risks, Chaucer Group, a global specialty reinsurance firm, and Armilla AI have joined forces to introduce a novel third-party liability (TPL) insurance product. This policy safeguards businesses against

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

SublimeText3 English version

Recommended: Win version, supports code prompts!

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Atom editor mac version download

The most popular open source editor

SublimeText3 Chinese version

Chinese version, very easy to use