Ant Group and Zhejiang University jointly release OneKE, an open-source large-model knowledge extraction framework

Recently, OneKE, a large-model knowledge extraction framework jointly developed by Ant Group and Zhejiang University, was released as open source and donated to the OpenKG open knowledge graph community.

Knowledge graphs are one of the key technologies for making large models trustworthy and controllable, and knowledge extraction helps build domain knowledge graphs. OneKE aims to help researchers and developers better handle information extraction, text data structuring, and knowledge graph construction.

By extracting risk events, person entities, institutional entities, and so on, OneKE can clearly present an event's context, its development trends, and the correlations between entities. A well-constructed graph can help large models perform complex reasoning across entities and documents. OneKE supports both Chinese and English, works with the OpenSPG and DeepKE open-source frameworks, and can be used out of the box.
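To make the idea of cross-document reasoning over an extracted graph more concrete, here is a minimal Python sketch. It assumes an extractor has already turned two separate documents into (head, relation, tail) triples; the triples, entity names, and the helpers `build_graph` and `find_path` are hypothetical and are not part of OneKE's, OpenSPG's, or DeepKE's API. The sketch merges the triples into one graph and uses breadth-first search to find the chain of entities that links a person to a risk event, even though no single document states that link.

```python
from collections import defaultdict, deque

# Hypothetical extraction output: (head, relation, tail) triples.
# These are illustrative only, not real OneKE output.
doc1_triples = [
    ("Company A", "subsidiary_of", "Group B"),
    ("Company A", "involved_in", "Loan default event"),
]
doc2_triples = [
    ("Person C", "chairman_of", "Group B"),
]


def build_graph(triples):
    """Index triples as an undirected adjacency map: entity -> [(relation, neighbor)]."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
        graph[tail].append((relation, head))
    return graph


def find_path(graph, start, goal):
    """Breadth-first search for the shortest chain of entities from start to goal."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        # .get avoids inserting empty entries into the defaultdict
        for _relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no connection found


# Merge triples from both documents into one graph, then link a person to an event.
graph = build_graph(doc1_triples + doc2_triples)
path = find_path(graph, "Person C", "Loan default event")
print(" -> ".join(path))  # Person C -> Group B -> Company A -> Loan default event
```

Treating edges as undirected is a simplification chosen so that correlations surface in either direction; a production knowledge graph would normally keep relation direction and types so that reasoning can use them.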

Large language models have significantly improved the ability of artificial intelligence systems to process world knowledge. However, real-world information is highly fragmented and unstructured, and the structured content to be extracted differs greatly from natural-language expression, so large language models still perform poorly on information extraction tasks. In addition, natural-language text is full of ambiguity, polysemy, metaphor, and similar phenomena, which make knowledge extraction even more challenging. As a result, generative artificial intelligence, represented by large language models, still suffers from insufficient reasoning ability, a lack of factual knowledge, and unstable generation results, which greatly hinders the industrial adoption of large language models.

A unified knowledge extraction framework can significantly reduce the cost of building domain knowledge graphs and has a wide range of application scenarios. By extracting structured knowledge from massive amounts of data, building high-quality knowledge graphs, and establishing logical connections between knowledge elements, it enables explainable reasoning and decision-making. It can also be used to enhance large models, reducing hallucinations and improving stability, thereby accelerating the application of large models in vertical domains.

In the medical field, knowledge extraction enables knowledge management of doctors' experience and supports controllable assisted diagnosis and treatment as well as medical Q&A. In the financial field, knowledge extraction is applied to financial indicators, risk events, causal relationships, industrial chains, and so on, enabling automatic generation of financial research reports, risk prediction, and industrial chain analysis. In government affairs, extracting knowledge from government regulations can improve the efficiency of government services and the accuracy of decision-making.

To accelerate the industrial adoption of generative artificial intelligence, Ant Group and Zhejiang University have established a joint knowledge graph laboratory. The two parties have launched all-round cooperation on topics such as large-model-enhanced knowledge graph construction, knowledge-enhanced trustworthy and controllable generation, domain knowledge, and the World Graph, with the aim of establishing, through joint technical research, a controllable-generation paradigm in which large language models and knowledge graphs enhance each other.

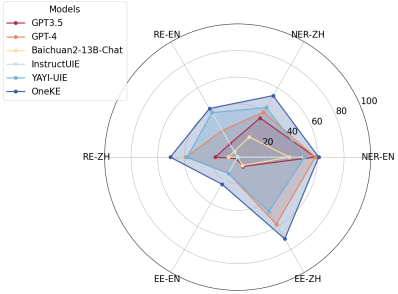

Ant Group and Zhejiang University jointly upgraded the knowledge extraction capabilities of Ant's Bailing large model and released OneKE, a Chinese-English bilingual large-model knowledge extraction framework. They also open-sourced a version built by full-parameter fine-tuning of LLaMA2. Test results show that OneKE achieves relatively good results on multiple fully supervised and zero-shot entity, relation, and event extraction tasks.

OneKE is a bilingual (Chinese and English) knowledge extraction tool that generalizes across tasks. It achieves relatively good results on Chinese named entity recognition (NER), relation extraction (RE), and event extraction (EE) tasks.

Liang Lei, head of knowledge graph at Ant Group, said that Ant will continue to optimize knowledge extraction performance to meet the need for controllable, trustworthy large models in different scenarios. In the future, Ant will work with industry partners to apply the related technical systems to vertical fields such as finance, medical care, and government affairs, and promote the industrial adoption of controllable generation technology driven jointly by knowledge graphs and large language models.

OneKE official homepage: http://oneke.openkg.cn/

OpenSPG GitHub: https://github.com/OpenSPG/openspg
