With only 200M parameters, zero-shot performance surpasses supervised methods! Google releases TimesFM, a foundation model for time series forecasting


Time series forecasting plays an important role in many fields, such as retail, finance, manufacturing, healthcare, and the natural sciences. In retail, improving the accuracy of demand forecasts can effectively reduce inventory costs and increase revenue. Businesses can better meet customer demand and reduce overstock and losses while increasing sales and profits. Time series forecasting is therefore of great value in retail and can bring substantial benefits to enterprises.

Deep learning (DL) models dominate multivariate time series forecasting, showing excellent performance in various competitions and practical applications.

At the same time, large foundation language models have made significant progress on natural language processing (NLP) tasks, effectively improving performance on tasks such as translation, retrieval-augmented generation, and code completion.

NLP models are trained on massive amounts of text from a variety of sources, including web crawls and open-source code. The trained models can recognize patterns in language and are capable of zero-shot learning: for example, when a large model is used for retrieval tasks, it can answer questions about current events and summarize them.

Although deep learning-based forecasters outperform traditional methods in many respects, and progress has been made in reducing training and inference costs, some challenges remain:

Many deep learning models require lengthy training and validation cycles before they can be tested on a new time series. By contrast, a foundation model for time series forecasting provides out-of-the-box forecasts and can be applied to unseen time series data without additional training. This lets users focus on refining forecasts for the actual downstream task, such as retail demand planning.

Researchers at Google Research recently proposed TimesFM, a foundation model for time series forecasting that was pre-trained on 100 billion real-world time points. Compared with current state-of-the-art large language models (LLMs), TimesFM is much smaller, containing only 200M parameters.

Paper link: https://arxiv.org/pdf/2310.10688.pdf

Experimental results show that, despite its small size, TimesFM exhibits surprising zero-shot performance on a variety of unseen datasets across different domains and time scales, approaching the performance of state-of-the-art supervised methods trained explicitly on those datasets.

The researchers plan to make the TimesFM model available to external customers in Google Cloud Vertex AI later this year.

The TimesFM foundation model

LLMs are usually trained in a decoder-only manner, which involves three steps:

1. Text is broken down into subwords called tokens.

2. The tokens are fed into stacked causal Transformer layers, which produce an output for each input token. Note that these layers cannot attend to tokens that have not yet been input, that is, future tokens.

3. The output corresponding to the i-th token summarizes all the information from the preceding tokens and predicts the (i+1)-th token.
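The causal constraint in step 2 can be illustrated with a small sketch. This is not code from the paper: the function names are hypothetical, and the example splits the prompt into whole words rather than real subword tokens to keep it readable.

```python
def causal_mask(n_tokens):
    """Return an n x n matrix; entry [i][j] is True if position i may attend to position j."""
    # Position i may look at itself and everything before it, never at later positions.
    return [[j <= i for j in range(n_tokens)] for i in range(n_tokens)]


def visible_tokens(tokens, i):
    """Tokens that the output at position i is allowed to summarize (positions 0..i)."""
    mask_row = causal_mask(len(tokens))[i]
    return [tok for tok, allowed in zip(tokens, mask_row) if allowed]


# Hypothetical word-level tokenization, used only for illustration.
tokens = ["What", "is", "the", "capital", "of", "France", "?"]

# The output at position 2 sees only the first three tokens and is trained
# to predict the token at position 3.
print(visible_tokens(tokens, 2))
```

In a real Transformer the same mask is applied to the attention scores, so every position is trained in parallel while still seeing only its own past.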

During inference, the LLM generates output one token at a time.

For example, given the prompt "What is the capital of France?", the model may generate the token "The", then generate the next token "capital" conditioned on the prompt plus "The", and so on, until it has produced the complete answer: "The capital of France is Paris".

A foundation model for time series forecasting should adapt to variable context lengths (what the model observes) and horizon lengths (what the model is asked to forecast), while having enough capacity to encode all the patterns in a large pre-training dataset.

Similar to LLMs, the researchers used stacked Transformer layers (self-attention and feed-forward layers) as the main building blocks of the TimesFM model. In the context of time series forecasting, a patch (a group of contiguous time points) is treated as a token, an idea that comes from recent long-horizon forecasting work. The task is then to forecast the (i+1)-th patch of time points given the i-th output at the end of the stacked Transformer layers.
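As a rough illustration of treating patches as tokens, the sketch below splits a series into consecutive, non-overlapping patches. The function name and the choice to drop a trailing partial patch are assumptions made for this example, not details taken from the paper.

```python
def to_patches(series, patch_len):
    """Split a series into consecutive, non-overlapping patches of patch_len points.

    Trailing points that do not fill a whole patch are dropped in this sketch.
    """
    n_full = len(series) // patch_len
    return [series[k * patch_len:(k + 1) * patch_len] for k in range(n_full)]


series = list(range(1, 129))            # 128 dummy time points: 1..128
patches = to_patches(series, patch_len=32)

print(len(patches))                     # 4 patches, each acting as one "token"
print(patches[1][0], patches[1][-1])    # second patch covers time points 33..64
```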

But TimesFM differs from language models in several key ways:

1. The model requires a multi-layer perceptron block with residual connections to convert the time series patches into tokens, which can be input to the Transformer layers along with the positional encoding (PE). For this, the researchers use a residual block similar to the one in their earlier work on long-horizon forecasting.

2. An output token from the stacked Transformer can be used to predict a longer run of subsequent time points than the input patch length; that is, the output patch length can be larger than the input patch length.

Assume that a time series with a length of 512 time points is used to train a TimesFM model with "input patch length 32" and "output patch length 128":

During training, the model is simultaneously trained to use the first 32 time points to predict the next 128 time points, the first 64 time points to predict time points 65 to 192, and the first 96 time points to predict time points 97 to 224 and so on.
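The training targets in this example can be enumerated with a short sketch. The function name is hypothetical, and the sketch keeps only targets that fit entirely inside the series; how targets near the end of the series are handled is not stated here, so that choice is an assumption.

```python
def training_pairs(series_len, input_patch_len, output_patch_len):
    """List (context_end, target_start, target_end) triples, 1-based and inclusive.

    Each triple means: use time points 1..context_end to predict
    time points target_start..target_end.
    """
    pairs = []
    context_end = input_patch_len
    # Assumption: keep only targets that lie fully inside the series.
    while context_end + output_patch_len <= series_len:
        pairs.append((context_end, context_end + 1, context_end + output_patch_len))
        context_end += input_patch_len
    return pairs


pairs = training_pairs(series_len=512, input_patch_len=32, output_patch_len=128)
for context_end, target_start, target_end in pairs[:3]:
    print(f"first {context_end} points -> points {target_start} to {target_end}")
```

All of these prediction tasks come from a single pass over one 512-point series, which is what makes decoder-only training efficient.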

Suppose the input is a time series of length 256 and the task is to forecast the next 256 time points. The model first generates forecasts for time points 257 to 384, then generates time points 385 to 512 conditioned on the initial 256-point input plus the generated output.

By contrast, if the output patch length were equal to the input patch length of 32, the same task would take eight generation steps instead of two, increasing the risk of error accumulation. Accordingly, the experimental results show that a longer output patch length yields better long-horizon forecasting performance.
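The step counts quoted above follow from simple arithmetic, sketched below. The helper names are hypothetical; the sketch only computes which time points each autoregressive step would cover and does not run any model.

```python
import math


def decoding_steps(horizon, output_patch_len):
    """Number of autoregressive generation steps needed to cover the horizon."""
    return math.ceil(horizon / output_patch_len)


def rollout_ranges(context_len, horizon, output_patch_len):
    """1-based inclusive (start, end) time-point ranges produced at each step."""
    ranges = []
    start = context_len + 1
    last_point = context_len + horizon
    while start <= last_point:
        end = min(start + output_patch_len - 1, last_point)
        ranges.append((start, end))
        start = end + 1  # the next step conditions on everything generated so far
    return ranges


print(rollout_ranges(256, 256, 128))   # two steps: 257..384, then 385..512
print(decoding_steps(256, 128))        # 2
print(decoding_steps(256, 32))         # 8
```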

Pre-training data

Just as LLMs get better with more tokens, TimesFM requires a large volume of legitimate time series data to learn and improve. The researchers spent a great deal of time creating and assessing training datasets and found two approaches that worked best:

Synthetic data helps with the basics

Meaningful synthetic time series data can be generated using statistical models or physical simulations. These basic temporal patterns can teach the model the grammar of time series forecasting.
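As a hedged illustration of what a simple statistical generator might look like, the sketch below combines a linear trend, a sinusoidal seasonal component, and Gaussian noise. The article does not say which statistical models the researchers used, so the components, parameter names, and default values here are all assumptions.

```python
import math
import random


def synthetic_series(n_points, period=24, trend_slope=0.05, noise_std=0.5, seed=0):
    """Generate a toy series: linear trend + sine seasonality + Gaussian noise.

    All components and defaults are illustrative assumptions, not the
    generators used to pre-train TimesFM.
    """
    rng = random.Random(seed)  # local generator so results are reproducible
    series = []
    for t in range(n_points):
        trend = trend_slope * t
        seasonal = math.sin(2 * math.pi * t / period)
        noise = rng.gauss(0.0, noise_std)
        series.append(trend + seasonal + noise)
    return series


sample = synthetic_series(n_points=96)
print(len(sample))
```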

Real-world data adds real-world flavor

The researchers combed through available public time series datasets and selectively put together a large corpus of 100 billion time points.

The dataset includes Google Trends and Wikipedia page views, which track what people are interested in and mirror the trends and patterns of many other real-world time series well. This helps TimesFM understand the bigger picture and generalize better when given domain-specific contexts not seen during training.

Zero-shot evaluation results

The researchers evaluated TimesFM zero-shot on data not seen during training using commonly used time series benchmarks. TimesFM performs better than most statistical methods, such as ARIMA and ETS, and can match or outperform powerful DL models, such as DeepAR and PatchTST, that have been explicitly trained on the target time series.

The researchers used the Monash Forecasting Archive to evaluate TimesFM's out-of-the-box performance. The archive contains tens of thousands of time series from domains such as traffic, weather, and demand forecasting, at frequencies ranging from a few minutes to yearly data.

Following existing literature, the researchers report the mean absolute error (MAE), appropriately scaled so that it can be averaged across datasets.
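The article does not spell out the scaling. One common convention, assumed here purely for illustration, is to divide each method's MAE by the MAE of a simple baseline on the same dataset and then aggregate the ratios with a geometric mean. The function names and the toy numbers below are hypothetical.

```python
import math


def mae(actual, predicted):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def scaled_mae(actual, predicted, baseline_predicted):
    """MAE of a method divided by the MAE of a baseline on the same data.

    Assumption: scaling by a baseline forecast; values below 1 beat the baseline.
    """
    return mae(actual, predicted) / mae(actual, baseline_predicted)


def geometric_mean(values):
    """Geometric mean, a natural way to average ratios across datasets."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Toy data for one dataset (made-up numbers).
actual = [10, 12, 14, 16]
model_forecast = [11, 12, 13, 16]
baseline_forecast = [10, 10, 10, 10]   # repeats the last observed value

print(scaled_mae(actual, model_forecast, baseline_forecast))
```

Because each ratio is dimensionless, datasets measured in very different units can be averaged without one of them dominating the result.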

As can be seen, zero-shot (ZS) TimesFM outperforms most supervised methods, including recent deep learning models. TimesFM was also compared with GPT-3.5 used for forecasting via the specific prompting technique proposed by llmtime (ZS), and TimesFM performed better than llmtime (ZS).

Scaled MAE of TimesFM (ZS) versus other supervised and zero-shot methods on the Monash datasets (lower is better)

Most Monash datasets are short or medium horizon, meaning the forecast length is not too long. The researchers therefore also tested TimesFM on commonly used long-horizon forecasting benchmarks against the state-of-the-art baseline PatchTST (and other long-horizon forecasting baselines).

The researchers plotted the MAE on the ETT dataset for the task of predicting 96 and 192 time points into the future, calculating the metric on the last test window of each dataset.

Last-window MAE (lower is better) of TimesFM (ZS) versus llmtime (ZS) and long-horizon forecasting baselines on the ETT datasets

It can be seen that TimesFM not only exceeds the performance of llmtime (ZS), but also matches the performance of the supervised PatchTST model explicitly trained on the corresponding dataset.

Conclusion

The researchers trained a decoder-only foundation model on a large pre-training corpus of 100 billion real-world time points, most of which are search interest time series from Google Trends and page views from Wikipedia.

The results show that even a relatively small 200M-parameter pre-trained model using the TimesFM architecture exhibits quite good zero-shot performance on a variety of public benchmarks spanning different domains and granularities.

The above is the detailed content of With only 200M parameters, zero-sample performance surpasses supervised! Google releases basic time series prediction model TimesFM. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

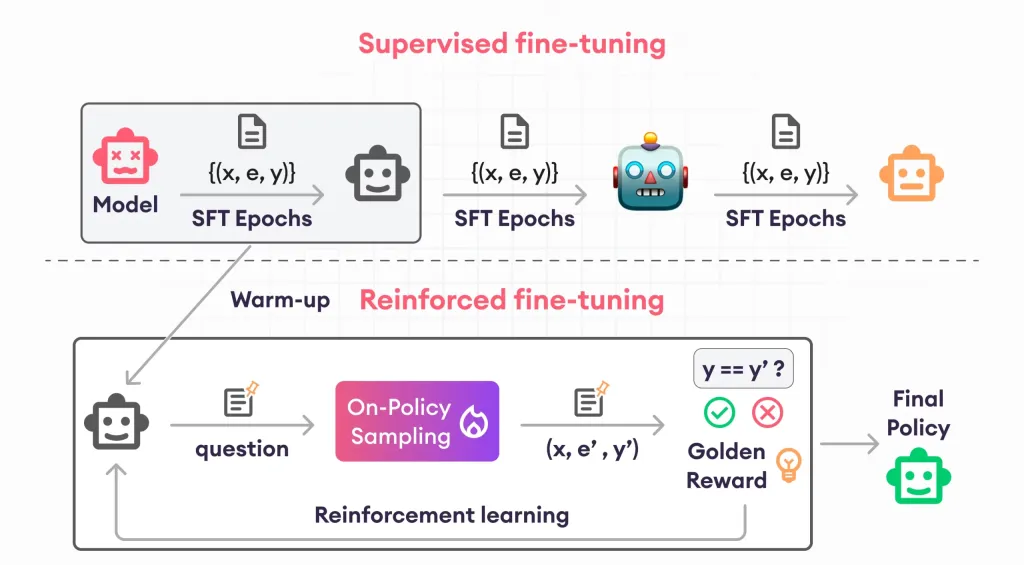

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Chinese version

Chinese version, very easy to use

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SublimeText3 Linux new version

SublimeText3 Linux latest version