What to do when end-to-end driving lacks data? ActiveAD: Planning-oriented active learning for end-to-end autonomous driving

End-to-end differentiable learning for autonomous driving (AD) has recently become a prominent paradigm. A major bottleneck is its huge demand for high-quality labeled data, such as 3D bounding boxes and semantic segmentation, which are notoriously expensive to annotate manually. This difficulty is compounded by the fact that driving behavior across samples in AD often follows a long-tailed distribution. In other words, most of the collected data may be trivial (e.g., driving forward on a straight road), with only a few situations being safety-critical. The paper explores a practically important but underexplored issue: how to achieve sample and label efficiency in end-to-end AD.

Specifically, the paper designs a planning-oriented active learning method that progressively annotates part of the collected raw data according to proposed diversity and usefulness criteria for planning routes. Empirically, the planning-oriented approach outperforms general active learning methods by a large margin. Notably, the method achieves performance comparable to state-of-the-art end-to-end AD methods using only 30% of the nuScenes data. The authors hope the work will inspire future research from a data-centric perspective, in addition to methodological efforts.

Paper link: https://arxiv.org/pdf/2403.02877.pdf

Main contributions of the paper:

- The first in-depth study of the data problem in E2E-AD. It also provides a simple yet effective solution for identifying and annotating the data most valuable for planning within a limited budget.

- Based on the planning-oriented philosophy of the end-to-end approach, new task-specific diversity and uncertainty metrics are designed for planning routes.

- Extensive experiments and ablation studies demonstrate the effectiveness of the method. ActiveAD outperforms general-purpose peer methods by a large margin and achieves performance comparable to SOTA methods trained with full labels while using only 30% of the nuScenes data.

Method introduction

This section describes ActiveAD in detail within the end-to-end AD framework. Its diversity and uncertainty metrics are designed around the data characteristics of AD.

1) Initial sample selection for labels

For active learning in computer vision, initial sample selection is usually based only on the raw images, without additional information or learned features, which has led to the common practice of random initialization. In AD, additional prior information is available. Specifically, when collecting data from sensors, conventional information such as the speed and trajectory of the ego vehicle can be recorded at the same time. In addition, weather and lighting conditions are usually continuous and easy to annotate at the clip level. This information supports an informed choice of the initial set. The paper therefore designs an ego-diversity metric for initial selection.

Ego diversity consists of three parts: 1) weather and lighting, 2) driving commands, and 3) average speed. First, the descriptions in nuScenes are used to divide the complete dataset into four mutually exclusive subsets: Day Sunny (DS), Day Rainy (DR), Night Sunny (NS), and Night Rainy (NR). Second, each subset is divided into four categories based on the number of left, right, and straight driving commands in a complete clip: left turn (L), right turn (R), overtaking (O), and going straight (S). The paper defines a threshold τc: if the numbers of left and right commands in a clip are both greater than or equal to τc, the clip is regarded as containing overtaking behavior. If only the number of left commands is greater than τc, the clip is a left turn. If only the number of right commands is greater than τc, the clip is a right turn. All other cases are considered going straight. Third, the average speed in each scene is calculated, and scenes are sorted in ascending order within the relevant subset.

Figure 2 illustrates the initial selection process in detail, which is based on a multi-way tree.
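The grouping rules above can be sketched in a few lines of Python. This is only an illustration under assumptions: the clip dictionary layout (`weather`, `commands`, `avg_speed`), the command strings, and the function names are hypothetical and do not come from the paper or the nuScenes API.

```python
from collections import Counter

def classify_command(commands, tau_c):
    """Map a clip's per-frame driving commands to L, R, O, or S.

    `commands` is a list of strings ("left", "right", "straight");
    this encoding is an assumption made for the sketch.
    """
    counts = Counter(commands)
    n_left, n_right = counts["left"], counts["right"]
    # Both turn directions reach the threshold: treated as overtaking.
    if n_left >= tau_c and n_right >= tau_c:
        return "O"
    # Only one direction exceeds the threshold: a turn in that direction.
    if n_left > tau_c:
        return "L"
    if n_right > tau_c:
        return "R"
    # Everything else counts as going straight.
    return "S"

def group_clips(clips, tau_c):
    """Bucket clips by (weather subset, command category), sorted by speed."""
    buckets = {}
    for clip in clips:
        key = (clip["weather"], classify_command(clip["commands"], tau_c))
        buckets.setdefault(key, []).append(clip)
    for members in buckets.values():
        # Ascending average speed inside each bucket.
        members.sort(key=lambda c: c["avg_speed"])
    return buckets

clips = [
    {"weather": "DS", "commands": ["left"] * 3 + ["straight"] * 5, "avg_speed": 7.0},
    {"weather": "DS", "commands": ["left"] * 4 + ["straight"] * 2, "avg_speed": 3.5},
    {"weather": "NR", "commands": ["straight"] * 8, "avg_speed": 5.0},
]
buckets = group_clips(clips, tau_c=2)
```

Each bucket corresponds to one leaf of the weather/command tree, with its clips ordered by speed so that an initial set can be drawn across the whole range.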

2) Criterion design for incremental selection

This section describes how to incrementally annotate new clips based on a model trained on the already-annotated clips. The intermediate model is used to run inference on the unlabeled clips, and subsequent selection is based on its outputs. A planning-oriented perspective is adopted throughout, and three criteria for subsequent data selection are introduced: displacement error, soft collision, and agent uncertainty.

Criterion I: Displacement error (DE). L_DE denotes the distance between the model's predicted planning route τ and the human trajectory τ* recorded in the dataset, computed over the T frames in a scene. Since the displacement error is itself a performance metric that requires no annotation, it naturally becomes the first and most critical criterion in active selection.
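As a rough illustration of the displacement error, the sketch below compares a predicted route with the recorded human trajectory frame by frame. Averaging the Euclidean distance over the T frames is an assumption made here (the exact aggregation is not reproduced in this article), and the function name and the 2D waypoint format are hypothetical.

```python
import math

def displacement_error(predicted, recorded):
    """Mean Euclidean distance between two trajectories of equal length.

    Each trajectory is a list of (x, y) waypoints, one per frame.
    Averaging over frames is an assumption of this sketch.
    """
    if not predicted or len(predicted) != len(recorded):
        raise ValueError("trajectories must be non-empty and of equal length")
    total = sum(math.dist(p, r) for p, r in zip(predicted, recorded))
    return total / len(predicted)

# Two frames: the routes agree at the first and differ by 3 m at the second.
de = displacement_error([(0.0, 0.0), (1.0, 0.0)], [(0.0, 0.0), (1.0, 3.0)])
```

Because the recorded ego trajectory comes with the raw driving log, this score can be computed on clips that have no perception labels at all.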

Criterion II: Soft collision (SC). L_SC is defined as the distance between the predicted ego-vehicle trajectory and the predicted agent trajectories. Low-confidence agent predictions are filtered out by a threshold ε. In each scene, the shortest distance is chosen as the measure of the danger coefficient, while a positive correlation is maintained between the term and the closest distance.

Soft collision is used as a criterion for two reasons. On the one hand, unlike displacement error, the calculation of the collision rate depends on annotations of the 3D boxes of objects, which are not available for unlabeled data. The criterion should therefore be computable from the model's inference results alone. On the other hand, consider a hard collision criterion: if the predicted ego-vehicle trajectory collides with the predicted trajectories of other agents, assign it 1, otherwise 0. This may yield too few samples with label 1, since the collision rate of state-of-the-art AD models is usually small (less than 1%). The closest distance to other agents is therefore used instead of the collision rate metric. The risk is considered much higher when the distance to other vehicles or pedestrians is too small. In short, soft collision is an effective measure of collision likelihood and can provide dense supervision.
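The core quantity behind soft collision, the closest distance between the predicted ego trajectory and confident agent predictions, might be computed as in the sketch below. The data layout (`confidence`, `traj`), the per-timestep comparison, and the function name are assumptions for illustration; how the paper maps this distance to the final L_SC term is not shown here.

```python
import math

def closest_agent_distance(ego_traj, agents, eps):
    """Smallest same-timestep distance between ego and confident agents.

    `agents` is a list of dicts with a detection `confidence` and a
    predicted `traj` aligned frame by frame with `ego_traj` (assumed layout).
    Returns math.inf when no agent passes the confidence threshold.
    """
    best = math.inf
    for agent in agents:
        # Drop low-confidence agent predictions, as described in the text.
        if agent["confidence"] < eps:
            continue
        for ego_pt, agent_pt in zip(ego_traj, agent["traj"]):
            best = min(best, math.dist(ego_pt, agent_pt))
    return best

ego = [(0.0, 0.0), (1.0, 0.0)]
agents = [
    {"confidence": 0.9, "traj": [(0.0, 5.0), (1.0, 2.0)]},
    {"confidence": 0.1, "traj": [(0.0, 0.0), (1.0, 0.0)]},  # filtered out
]
d_min = closest_agent_distance(ego, agents, eps=0.5)
```

Unlike a binary collision flag, this distance varies continuously, so every unlabeled scene receives an informative score.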

Criterion III: Agent uncertainty (AU). Predictions of the future trajectories of surrounding agents are inherently uncertain, so motion prediction modules typically generate multiple modes with corresponding confidence scores. The goal is to select data in which nearby agents have high uncertainty. Specifically, distant agents are filtered out by a distance threshold δ, and the weighted entropy of the predicted probabilities over the modes is calculated for the remaining agents. Let Nm be the number of modes and Pi(a) the confidence score of agent a in mode i, where i∈{1,…,Nm}. Agent uncertainty is then defined as the weighted entropy of these scores.
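A minimal sketch of the agent uncertainty idea follows. It sums the plain Shannon entropy of each nearby agent's mode probabilities; the paper speaks of a weighted entropy whose exact weighting is not given in this article, so the unweighted sum is an assumption, as are the field names `distance` and `mode_probs`.

```python
import math

def agent_uncertainty(agents, delta):
    """Sum of mode-probability entropies over agents within distance delta.

    Each agent is a dict with a `distance` to the ego vehicle and a list
    `mode_probs` of confidence scores over its predicted modes
    (assumed layout; the unweighted sum is also an assumption).
    """
    total = 0.0
    for agent in agents:
        # Distant agents are filtered out by the distance threshold.
        if agent["distance"] > delta:
            continue
        # Shannon entropy; zero-probability modes contribute nothing.
        total += -sum(p * math.log(p) for p in agent["mode_probs"] if p > 0)
    return total

agents = [
    {"distance": 5.0, "mode_probs": [0.5, 0.5]},    # ambiguous, nearby
    {"distance": 8.0, "mode_probs": [1.0, 0.0]},    # certain, nearby
    {"distance": 50.0, "mode_probs": [0.25] * 4},   # ambiguous but far away
]
au = agent_uncertainty(agents, delta=10.0)
```

Only the first agent contributes here: the second is fully certain and the third is beyond the distance threshold.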

3) Overall active learning paradigm

Algorithm 1 presents the entire workflow of the method. The inputs are an available budget B, an initial selection size n0, the number of active selections made at each step ni, and a total of M selection stages. Selection is first initialized using either the random or the ego-diversity method described above. The network is then trained on the currently annotated data. Based on the trained network, predictions are made on the unlabeled data and the overall loss is calculated. Finally, the samples are sorted by overall loss, and the top ni samples are selected for annotation in the current iteration. This process is repeated until the iteration count reaches the upper limit M and the number of selected samples reaches the budget B.
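The workflow described above can be outlined as a generic selection loop. This is a sketch, not the paper's Algorithm 1: `train_fn` and `score_fn` are hypothetical callbacks standing in for network training and for the overall loss on an unlabeled clip, and the way the overall loss combines the three criteria is left to `score_fn`.

```python
def active_learning_loop(pool, initial_ids, train_fn, score_fn,
                         n_per_step, num_stages, budget):
    """Iteratively pick the highest-scoring unlabeled samples for annotation.

    pool:        all sample ids
    initial_ids: ids chosen by random or ego-diversity initialization
    train_fn:    callable(labeled_ids) -> model   (hypothetical)
    score_fn:    callable(model, sample_id) -> overall loss   (hypothetical)
    """
    labeled = list(initial_ids)
    initial_set = set(initial_ids)
    unlabeled = [i for i in pool if i not in initial_set]
    for _ in range(num_stages):
        if len(labeled) >= budget or not unlabeled:
            break
        # Train on everything annotated so far.
        model = train_fn(labeled)
        # Rank unlabeled samples by overall loss, highest first.
        ranked = sorted(unlabeled, key=lambda i: score_fn(model, i), reverse=True)
        take = min(n_per_step, budget - len(labeled))
        chosen = ranked[:take]
        labeled.extend(chosen)
        chosen_set = set(chosen)
        unlabeled = [i for i in unlabeled if i not in chosen_set]
    return labeled

# Toy run: the "loss" of a sample is simply its id.
selected = active_learning_loop(
    pool=range(10), initial_ids=[0, 1],
    train_fn=lambda labeled: None,
    score_fn=lambda model, i: i,
    n_per_step=2, num_stages=3, budget=6,
)
```

In the toy run the loop stops after two stages because the budget of six samples has been reached, even though a third stage was allowed.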

Experimental results

Experiments were conducted on the widely used nuScenes dataset. All experiments are implemented using PyTorch and run on RTX 3090 and A100 GPUs.

Figure 3: Visualization of selected scenes. Front-camera images of scenes selected by the displacement error (col 1), soft collision (col 2), agent uncertainty (col 3), and mixed (col 4) criteria, using a model trained on 10% of the data. Mixed is the final selection strategy, ActiveAD, which takes the first three criteria into account.

Table 4: Performance in various scenarios. Average L2 (m) / average collision rate (%) of the active models using 30% of the data, under various weather/lighting and driving-command conditions. Smaller is better.

Figure 4: Similarity between multiple criteria. It shows the newly sampled scenes at 10% (left) and 20% (right) selected by the four criteria: Displacement Error (DE), Soft Collision (SC), Agent Uncertainty (AU), and Mixed (MX).

Some conclusions of this work

To address the high annotation cost and long-tail problems of end-to-end autonomous driving data, the paper develops a tailored active learning solution, ActiveAD, which the authors describe as the first of its kind. ActiveAD introduces new task-specific diversity and uncertainty metrics based on a planning-oriented philosophy. Extensive experiments demonstrate the effectiveness of the method. Using only 30% of the data, it significantly outperforms previous general active learning methods and achieves performance comparable to state-of-the-art models. This represents a meaningful exploration of end-to-end autonomous driving from a data-centric perspective, and the authors hope the work will inspire future research.

The above is the detailed content of What to do if there is no data end-to-end? ActiveAD: End-to-end active learning for autonomous driving for planning!. For more information, please follow other related articles on the PHP Chinese website!

Exploring the Capabilities of Google's Gemma 2 ModelsApr 22, 2025 am 11:26 AM

Exploring the Capabilities of Google's Gemma 2 ModelsApr 22, 2025 am 11:26 AMGoogle's Gemma 2: A Powerful, Efficient Language Model Google's Gemma family of language models, celebrated for efficiency and performance, has expanded with the arrival of Gemma 2. This latest release comprises two models: a 27-billion parameter ver

The Next Wave of GenAI: Perspectives with Dr. Kirk Borne - Analytics VidhyaApr 22, 2025 am 11:21 AM

The Next Wave of GenAI: Perspectives with Dr. Kirk Borne - Analytics VidhyaApr 22, 2025 am 11:21 AMThis Leading with Data episode features Dr. Kirk Borne, a leading data scientist, astrophysicist, and TEDx speaker. A renowned expert in big data, AI, and machine learning, Dr. Borne offers invaluable insights into the current state and future traje

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AM

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AMThere were some very insightful perspectives in this speech—background information about engineering that showed us why artificial intelligence is so good at supporting people’s physical exercise. I will outline a core idea from each contributor’s perspective to demonstrate three design aspects that are an important part of our exploration of the application of artificial intelligence in sports. Edge devices and raw personal data This idea about artificial intelligence actually contains two components—one related to where we place large language models and the other is related to the differences between our human language and the language that our vital signs “express” when measured in real time. Alexander Amini knows a lot about running and tennis, but he still

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AM

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AMCaterpillar's Chief Information Officer and Senior Vice President of IT, Jamie Engstrom, leads a global team of over 2,200 IT professionals across 28 countries. With 26 years at Caterpillar, including four and a half years in her current role, Engst

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AM

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AMGoogle Photos' New Ultra HDR Tool: A Quick Guide Enhance your photos with Google Photos' new Ultra HDR tool, transforming standard images into vibrant, high-dynamic-range masterpieces. Ideal for social media, this tool boosts the impact of any photo,

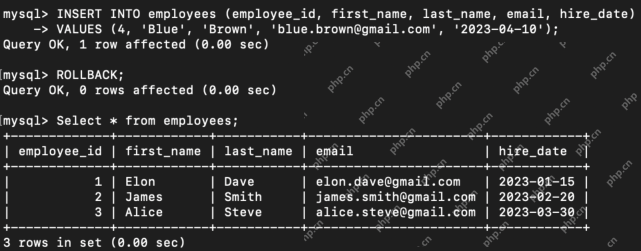

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AM

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AMIntroduction Transaction Control Language (TCL) commands are essential in SQL for managing changes made by Data Manipulation Language (DML) statements. These commands allow database administrators and users to control transaction processes, thereby

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AM

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AMHarness the power of ChatGPT to create personalized AI assistants! This tutorial shows you how to build your own custom GPTs in five simple steps, even without coding skills. Key Features of Custom GPTs: Create personalized AI models for specific t

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AM

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AMIntroduction Method overloading and overriding are core object-oriented programming (OOP) concepts crucial for writing flexible and efficient code, particularly in data-intensive fields like data science and AI. While similar in name, their mechanis

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 English version

Recommended: Win version, supports code prompts!

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Mac version

God-level code editing software (SublimeText3)

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Atom editor mac version download

The most popular open source editor