Large-model inference cost leaderboard released, with Jia Yangqing leading on efficiency

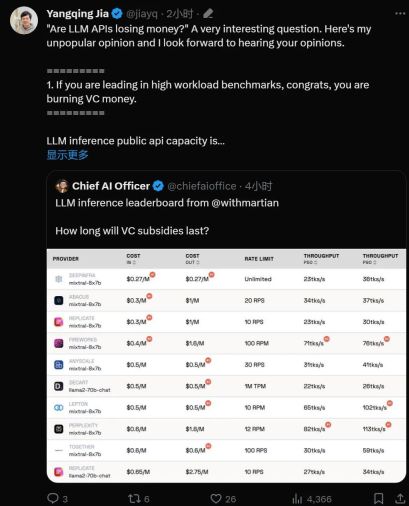

"Is the API of large models a loss-making business?"

As large language model technology moves into practical use, many technology companies have launched large-model APIs for developers. However, we can't help but wonder whether a business built on large models can be sustained, especially given that OpenAI is reportedly burning through $700,000 a day.

This Thursday, AI startup Martian ran the numbers for us.

Leaderboard link: https://leaderboard.withmartian.com/

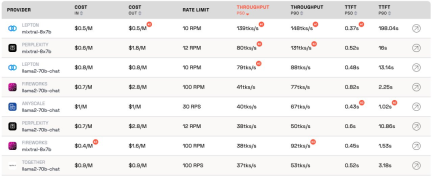

The LLM Inference Provider Leaderboard is an open-source ranking of API inference products for large models. For each vendor's public Mixtral-8x7B and Llama-2-70B-Chat endpoints, it benchmarks cost, rate limits, throughput, and P50 and P90 TTFT (time to first token).
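To make the P50 and P90 figures concrete, here is a minimal sketch of how such percentiles can be computed from a set of TTFT measurements. It assumes the nearest-rank method; the leaderboard's exact method is not stated in this article, and the sample values are hypothetical, not leaderboard data.

```python
import math

def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

# Hypothetical TTFT measurements in seconds (illustrative only).
ttft_seconds = [0.21, 0.25, 0.19, 0.30, 0.22, 0.95, 0.24, 0.27, 0.23, 0.26]

p50 = percentile(ttft_seconds, 50)
p90 = percentile(ttft_seconds, 90)
print(f"P50 TTFT: {p50:.2f}s, P90 TTFT: {p90:.2f}s")
```

P50 describes the typical request, while P90 shows how slow the slower requests get, which is why the two are usually reported together.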

Although these vendors compete with one another, Martian found significant differences in the cost, throughput and rate limits of their large-model services: more than 5x in cost, 6x in throughput, and even larger gaps in rate limits. Choosing the right API is critical to getting the best performance, even though it is only one part of doing business.
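One way to act on such differences is to filter providers by the throughput and rate limit an application needs, then pick the cheapest one that remains. The sketch below illustrates this. The `Offer` type, the `cheapest_meeting` helper, the provider names and all the numbers are hypothetical placeholders, not figures from the leaderboard.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Offer:
    provider: str
    usd_per_million_tokens: float
    tokens_per_second: float
    requests_per_minute: int

def cheapest_meeting(offers, min_tokens_per_second, min_requests_per_minute):
    """Return the cheapest offer that satisfies both minimums, or None."""
    eligible = [
        o for o in offers
        if o.tokens_per_second >= min_tokens_per_second
        and o.requests_per_minute >= min_requests_per_minute
    ]
    return min(eligible, key=lambda o: o.usd_per_million_tokens, default=None)

# Hypothetical offers (placeholder names and numbers).
offers = [
    Offer("provider_a", 0.50, 40.0, 60),
    Offer("provider_b", 1.00, 120.0, 600),
    Offer("provider_c", 2.50, 90.0, 6000),
]

choice = cheapest_meeting(offers, min_tokens_per_second=80.0, min_requests_per_minute=500)
print(choice.provider if choice else "no provider meets the requirements")
```

With these placeholder values, the cheapest provider overall is ruled out by its throughput and rate limit, so the choice depends on the workload rather than on price alone.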

According to the current rankings, Anyscale's service has the best throughput on Llama-2-70B under medium service load. Under large service loads, Together AI performed best on both P50 and P90 throughput for Llama-2-70B and Mixtral-8x7B.

In addition, Jia Yangqing's Lepton AI showed the best throughput on small workloads with short inputs and long outputs. Its P50 throughput of 130 tokens/s is the fastest among the models currently offered by all vendors on the market.

Well-known AI scholar and Lepton AI founder Jia Yangqing commented immediately after the rankings were released. Let’s see what he said.

Jia Yangqing first described the current state of the AI industry, then affirmed the value of benchmarking, and finally noted that Lepton AI will help users find the best basic AI strategy.

1. Large-model APIs are "burning money"

If a model leads the high-workload benchmark, then congratulations: it is "burning money."

Reasoning about the capacity of a public LLM inference API is like running a restaurant: you have chefs, and you need to estimate customer traffic. Hiring a chef costs money. Latency and throughput can be understood as "how fast you can cook for customers." For a reasonable business, you need a "reasonable" number of chefs. In other words, you want enough capacity to handle normal traffic, not sudden bursts that arrive within a matter of seconds. A traffic surge means customers have to wait; the alternative is chefs standing around with nothing to do.

In the world of artificial intelligence, the GPU plays the role of the "chef". Benchmark loads are bursty. Under low workloads, the benchmark load blends into normal traffic, and the measurements accurately represent how the service performs under its current workload.

The high service load scenario is interesting because it is disruptive. The benchmark runs only a few times per day or week, so it is not the regular traffic a service should expect. Imagine 100 people flocking to your local restaurant to check how quickly the chef cooks. The results would be great. To borrow a term from quantum physics, this is the "observer effect": the stronger the interference (that is, the larger the burst load), the lower the accuracy. In other words, if you put a sudden high load on a service and it still responds very quickly, you know the service has a good deal of idle capacity. As an investor, when you see this, you should ask: is this way of burning money responsible?
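The restaurant argument can be illustrated with a deliberately simple capacity model. It assumes each GPU serves one request at a time for a fixed duration, and that normal traffic keeps a fixed number of GPUs busy throughout. The function names and all the numbers are hypothetical and do not describe any real provider.

```python
import math

def burst_drain_seconds(gpus, busy, burst_size, service_seconds):
    """Time to finish a burst using only the GPUs left idle by normal traffic.

    Simplifying assumption: each GPU serves one request at a time, and every
    request takes exactly `service_seconds`.
    """
    idle = gpus - busy
    if idle <= 0:
        raise ValueError("no idle capacity: the burst never drains in this model")
    waves = math.ceil(burst_size / idle)  # rounds of requests served in parallel
    return waves * service_seconds

def utilization(gpus, busy):
    """Fraction of paid-for GPUs doing useful work under normal traffic."""
    return busy / gpus

# Two hypothetical providers with the same normal traffic (8 busy GPUs).
for name, gpus in [("lean", 10), ("over-provisioned", 100)]:
    seconds = burst_drain_seconds(gpus, busy=8, burst_size=100, service_seconds=2.0)
    print(f"{name}: burst drained in {seconds:.0f}s, "
          f"normal utilization {utilization(gpus, 8):.0%}")
```

In this toy model the over-provisioned service absorbs the burst far faster, but only because most of its GPUs sit idle the rest of the time, which is the "burning money" point above.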

2. Models will eventually reach similar performance

The field of artificial intelligence is very fond of competitions, which is indeed interesting. Everyone quickly converges on the same solution, and Nvidia always wins in the end because of the GPU. This is thanks to great open source projects, of which vLLM is a good example. It means that, as a provider, if your model performs much worse than others, you can easily catch up by studying open source solutions and applying good engineering.

3. "As a customer, I don't care about the provider's cost"

For developers building AI applications, we are lucky: there are always API providers willing to "burn money". The AI industry is burning money to gain traffic, and will worry about profits as the next step.

Benchmarking is a tedious and error-prone task. For better or worse, winners usually praise you and losers blame you. Such was the case with the last round of convolutional neural network benchmarks. It's not an easy task, but benchmarking will help us achieve the next 10x improvement in AI infrastructure.

Building on AI frameworks and cloud infrastructure, Lepton AI will help users find the best basic AI strategy.
