Implementation of a personalized recommendation system based on the Transformer model

Transformer-based personalized recommendation applies the Transformer model to the recommendation task. The Transformer is a neural network architecture built on the attention mechanism and is widely used in natural language processing tasks such as machine translation and text generation. In a recommendation setting, the model learns a user's interests and preferences from past behavior and uses them to recommend relevant content. The attention mechanism lets the model weigh how strongly each piece of content relates to the user's interests, which can improve the accuracy of recommendations.

Personalized recommendation starts from an interaction matrix between users and items. This matrix records user behavior toward items, such as ratings, clicks, or purchases. The interaction information is then converted into vector form and fed into the Transformer model for training, so that the model learns the relationship between users and items and can generate personalized recommendations.
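As a minimal sketch of the interaction matrix described above, the following builds one from a small log of rating records. The user names, item names, and ratings are made up for illustration, and using 0.0 to mark "no interaction" is an assumption rather than something the article specifies.

```python
import numpy as np

# Hypothetical interaction log: (user_id, item_id, rating) triples.
interactions = [
    ("alice", "book", 5.0),
    ("alice", "film", 3.0),
    ("bob", "film", 4.0),
    ("carol", "game", 2.0),
]

# Map raw ids to contiguous row and column indices.
users = sorted({u for u, _, _ in interactions})
items = sorted({i for _, i, _ in interactions})
user_index = {u: k for k, u in enumerate(users)}
item_index = {i: k for k, i in enumerate(items)}

# Rows are users, columns are items; 0.0 marks "no interaction observed".
matrix = np.zeros((len(users), len(items)))
for user, item, rating in interactions:
    matrix[user_index[user], item_index[item]] = rating

print(matrix)
```

In a real system the matrix is usually far too sparse to store densely, so a sparse format or a plain list of triples would likely be a better fit.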

A Transformer model for personalized recommendation usually consists of an encoder and a decoder. The encoder learns vector representations of users and items, and the decoder predicts the user's interest in other items. This architecture can capture complex relationships between users and items.

In the encoder, multiple self-attention layers first let the vector representations of users and items interact. Self-attention weights each position in the input sequence by its relevance to the other positions, so each output vector is a weighted combination of the inputs. A feedforward neural network then processes the attention output to produce the final vector representation. This helps the model capture the correlations between users and items.
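The encoder step described above can be sketched as one single-head self-attention layer followed by a feedforward network. This is a simplified illustration, not a full Transformer layer: it omits multi-head splitting, residual connections, and layer normalization, and the random weights, dimensions, and function names are assumptions chosen for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row maximum for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over x of shape (n, d)."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # pairwise relevance of positions
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights

def feed_forward(x, w1, b1, w2, b2):
    """Position-wise feedforward network with a ReLU activation."""
    return np.maximum(0.0, x @ w1 + b1) @ w2 + b2

rng = np.random.default_rng(0)
n, d, hidden = 4, 8, 16                       # e.g. 4 embedded items in a user's history
x = rng.normal(size=(n, d))
w_q, w_k, w_v = (rng.normal(size=(d, d)) * 0.1 for _ in range(3))
w1, b1 = rng.normal(size=(d, hidden)) * 0.1, np.zeros(hidden)
w2, b2 = rng.normal(size=(hidden, d)) * 0.1, np.zeros(d)

attended, weights = self_attention(x, w_q, w_k, w_v)
encoded = feed_forward(attended, w1, b1, w2, b2)
print(encoded.shape)
```

Each row of `weights` shows how much one position draws on every other position, which is the "importance of different positions" the paragraph refers to.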

In the decoder, the user vector and item vectors are used to predict the user's interest in other items. The similarity between a user and an item can be computed with dot-product attention: the attention score measures how relevant the item is to the user and serves as the predicted level of interest. Items are then ranked by predicted interest, and the top-ranked ones are recommended to the user.
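To make the scoring and ranking step concrete, here is a small sketch that scores items against one user vector with a scaled dot product and returns the highest-scoring items. The vectors are hand-picked toy values and `rank_items` is a hypothetical helper name, not part of any library.

```python
import numpy as np

def rank_items(user_vec, item_vecs, top_k=3):
    """Score each item by a scaled dot product with the user vector, then rank."""
    scores = item_vecs @ user_vec / np.sqrt(user_vec.shape[0])
    order = np.argsort(-scores)[:top_k]       # highest score first
    return order.tolist(), scores

user_vec = np.array([1.0, 0.0, 1.0, 0.0])
item_vecs = np.array([
    [1.0, 0.0, 1.0, 0.0],    # item 0: closely aligned with the user
    [0.0, 1.0, 0.0, 1.0],    # item 1: orthogonal to the user
    [1.0, 0.0, 0.0, 0.0],    # item 2: partially aligned
    [-1.0, 0.0, -1.0, 0.0],  # item 3: opposed to the user
])

top, scores = rank_items(user_vec, item_vecs, top_k=3)
print(top)     # [0, 2, 1]
print(scores)  # [ 1.   0.   0.5 -1. ]
```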

To implement personalized recommendations based on Transformer, you need to pay attention to the following points:

1. Data preparation: Collect interaction data between users and items, such as ratings, clicks, and purchases, and build an interaction matrix from it.

2. Feature representation: Convert users and items in the interaction matrix into vector representations. Embedding technology can be used to map users and items into a low-dimensional space and serve as input to the model.

3. Model construction: Build an encoder-decoder model based on Transformer. The encoder learns vector representations of users and items through a multi-layer self-attention mechanism, and the decoder uses user and item vectors to predict the user's interest in other items.

4. Model training: Use the interaction data between users and items as a training set to train the model by minimizing the gap between the predicted results and the real ratings. Optimization algorithms such as gradient descent can be used to update model parameters.

5. Recommendation generation: Based on the trained model, predict and rank items that the user has not interacted with, and recommend items with high interest to the user.
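The training and recommendation steps above can be sketched end to end on toy data. To stay short, this example swaps the Transformer for plain user and item embeddings scored by a dot product; that substitution is an assumption made for illustration, but the training loop (gradient descent on the gap between predicted and real ratings) and the final step (ranking only items the user has not interacted with) follow the same pattern. The rating values, learning rate, and function names are hypothetical.

```python
import numpy as np

def train_embeddings(ratings, mask, dim=2, lr=0.01, epochs=2000, seed=0):
    """Fit user and item embeddings by gradient descent on observed ratings."""
    rng = np.random.default_rng(seed)
    n_users, n_items = ratings.shape
    user_emb = rng.normal(scale=0.1, size=(n_users, dim))
    item_emb = rng.normal(scale=0.1, size=(n_items, dim))
    losses = []
    for _ in range(epochs):
        pred = user_emb @ item_emb.T           # predicted interest scores
        err = (pred - ratings) * mask          # ignore unobserved pairs
        losses.append(float((err ** 2).sum() / mask.sum()))
        # Gradients of the squared error, up to a constant factor.
        grad_user = err @ item_emb
        grad_item = err.T @ user_emb
        user_emb -= lr * grad_user
        item_emb -= lr * grad_item
    return user_emb, item_emb, losses

def recommend(user, user_emb, item_emb, mask, top_k=2):
    """Rank only the items the user has not interacted with."""
    scores = item_emb @ user_emb[user]
    unseen = np.flatnonzero(mask[user] == 0)
    order = unseen[np.argsort(-scores[unseen])]
    return order[:top_k].tolist()

# Toy rating matrix; 0 means "no interaction".
ratings = np.array([
    [5.0, 3.0, 0.0, 1.0],
    [4.0, 0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0, 5.0],
    [0.0, 1.0, 5.0, 4.0],
])
mask = (ratings > 0).astype(float)

user_emb, item_emb, losses = train_embeddings(ratings, mask)
recs = recommend(1, user_emb, item_emb, mask)
print(losses[0], losses[-1])
print(recs)
```

In practice the dot-product scorer would be replaced by the Transformer's output and the hand-written gradients by an automatic differentiation framework.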

In practical applications, personalized recommendations based on Transformer have the following advantages:

- The model can fully account for the interactions between users and items and capture richer semantic information.

- The Transformer model scales and parallelizes well, so it can handle large data sets and highly concurrent requests.

- The model can automatically learn feature representations, reducing the need for manual feature engineering.

However, Transformer-based personalized recommendations also face some challenges:

- Data sparsity: In real scenarios, the interaction data between users and items is often sparse. Since users have only interacted with a small number of items, there are a large number of missing values in the data, which makes model learning and prediction difficult.

- Cold start problem: When new users or new items join the system, their interests and preferences cannot be captured accurately because there is not enough interaction data. Recommendations for new users and new items therefore have to come from other methods that do not depend on interaction history, such as content-based recommendation.

- Diversity and long-tail problems: Personalized recommendation tends to favor popular items, which reduces the diversity of results and neglects long-tail items. During training, the Transformer model may capture correlations between popular items more readily, while recommending long-tail items poorly.

- Interpretability and explainability: The Transformer is a black-box model, so its predictions are often difficult to explain. In some application scenarios, users want to understand why they received a particular recommendation, and the model needs some ability to explain its results.

- Real-time performance and efficiency: Transformer-based models usually have large network structures and parameter counts and require substantial computing resources. In real-time recommendation scenarios, results must be generated quickly, and a standard Transformer may have high computational complexity and latency; the cost of self-attention, for example, grows quadratically with the input sequence length.
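Two of the challenges above are easy to illustrate on toy data: measuring how sparse an interaction matrix is, and scoring brand-new items with a content-based fallback. The sketch below assumes each item has a hand-made feature vector (here, two hypothetical genre flags) and builds a user profile as the rating-weighted sum of the features of items the user has rated; the names and values are illustrative only.

```python
import numpy as np

def sparsity(interactions):
    """Fraction of user-item pairs with no recorded interaction."""
    return 1.0 - np.count_nonzero(interactions) / interactions.size

def content_scores(user_ratings, item_features, new_item_features):
    """Cosine similarity between a user's content profile and new items.

    Assumes the user has rated at least one item, so the profile is non-zero.
    """
    profile = user_ratings @ item_features     # rating-weighted sum of features
    profile = profile / np.linalg.norm(profile)
    norms = np.linalg.norm(new_item_features, axis=1, keepdims=True)
    return (new_item_features / norms) @ profile

interactions = np.array([
    [5.0, 0.0, 0.0, 1.0],
    [4.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 5.0],
    [0.0, 1.0, 0.0, 4.0],
])
print(sparsity(interactions))  # 0.625

# Hypothetical features for the 4 known items: [is_fiction, is_documentary].
item_features = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
# Two new items with no interactions yet.
new_item_features = np.array([[1.0, 0.0], [0.0, 1.0]])

scores = content_scores(interactions[0], item_features, new_item_features)
print(scores)
```

User 0 mostly rated the first feature highly, so the first new item scores higher even though nobody has interacted with it yet.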

The above is the detailed content of Implementation of personalized recommendation system based on Transformer model. For more information, please follow other related articles on the PHP Chinese website!

Sam's Club Bets On AI To Eliminate Receipt Checks And Enhance RetailApr 22, 2025 am 11:29 AM

Sam's Club Bets On AI To Eliminate Receipt Checks And Enhance RetailApr 22, 2025 am 11:29 AMRevolutionizing the Checkout Experience Sam's Club's innovative "Just Go" system builds on its existing AI-powered "Scan & Go" technology, allowing members to scan purchases via the Sam's Club app during their shopping trip.

Nvidia's AI Omniverse Expands At GTC 2025Apr 22, 2025 am 11:28 AM

Nvidia's AI Omniverse Expands At GTC 2025Apr 22, 2025 am 11:28 AMNvidia's Enhanced Predictability and New Product Lineup at GTC 2025 Nvidia, a key player in AI infrastructure, is focusing on increased predictability for its clients. This involves consistent product delivery, meeting performance expectations, and

Exploring the Capabilities of Google's Gemma 2 ModelsApr 22, 2025 am 11:26 AM

Exploring the Capabilities of Google's Gemma 2 ModelsApr 22, 2025 am 11:26 AMGoogle's Gemma 2: A Powerful, Efficient Language Model Google's Gemma family of language models, celebrated for efficiency and performance, has expanded with the arrival of Gemma 2. This latest release comprises two models: a 27-billion parameter ver

The Next Wave of GenAI: Perspectives with Dr. Kirk Borne - Analytics VidhyaApr 22, 2025 am 11:21 AM

The Next Wave of GenAI: Perspectives with Dr. Kirk Borne - Analytics VidhyaApr 22, 2025 am 11:21 AMThis Leading with Data episode features Dr. Kirk Borne, a leading data scientist, astrophysicist, and TEDx speaker. A renowned expert in big data, AI, and machine learning, Dr. Borne offers invaluable insights into the current state and future traje

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AM

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AMThere were some very insightful perspectives in this speech—background information about engineering that showed us why artificial intelligence is so good at supporting people’s physical exercise. I will outline a core idea from each contributor’s perspective to demonstrate three design aspects that are an important part of our exploration of the application of artificial intelligence in sports. Edge devices and raw personal data This idea about artificial intelligence actually contains two components—one related to where we place large language models and the other is related to the differences between our human language and the language that our vital signs “express” when measured in real time. Alexander Amini knows a lot about running and tennis, but he still

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AM

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AMCaterpillar's Chief Information Officer and Senior Vice President of IT, Jamie Engstrom, leads a global team of over 2,200 IT professionals across 28 countries. With 26 years at Caterpillar, including four and a half years in her current role, Engst

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AM

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AMGoogle Photos' New Ultra HDR Tool: A Quick Guide Enhance your photos with Google Photos' new Ultra HDR tool, transforming standard images into vibrant, high-dynamic-range masterpieces. Ideal for social media, this tool boosts the impact of any photo,

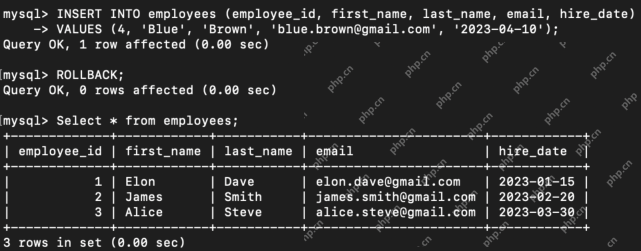

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AM

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AMIntroduction Transaction Control Language (TCL) commands are essential in SQL for managing changes made by Data Manipulation Language (DML) statements. These commands allow database administrators and users to control transaction processes, thereby

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 English version

Recommended: Win version, supports code prompts!

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Mac version

God-level code editing software (SublimeText3)

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Atom editor mac version download

The most popular open source editor