Privacy protection issues in artificial intelligence technology

As artificial intelligence (AI) technology develops, daily life depends more and more on intelligent systems and devices. From smartphones and smart homes to self-driving cars, AI is steadily working its way into everyday use. Yet alongside the convenience it brings, AI also raises privacy protection issues.

Privacy protection means that sensitive personal information should not be collected, used, or disclosed without authorization. However, AI systems often require large amounts of data to train models and implement features, which puts them in tension with privacy protection. This article discusses privacy protection issues in AI and uses code examples to illustrate possible solutions.

- Data collection and privacy protection

Data collection is an essential step in artificial intelligence technology. However, collecting sensitive personal data without the user's explicit authorization and informed consent may constitute an invasion of privacy. The following code example shows how to protect user privacy during data collection.

    # Import the privacy protection library
    # (privacylib is an illustrative name used throughout this article)
    import privacylib


    def collect_data(user_id, data):
        """Anonymize the collected data and store it (example only)."""
        # Anonymize the data
        anonymized_data = privacylib.anonymize(data)
        # Store the anonymized data in the database
        privacylib.store_data(user_id, anonymized_data)
        return "Data collected successfully"


    def grant_permission(user_id):
        """Obtain the user's authorization; return True if permission is on record."""
        # Check whether the user has already granted permission
        if privacylib.check_permission(user_id):
            return True
        # Show the user the privacy policy and the purposes of data collection
        privacylib.show_privacy_policy()
        # Record the user's consent, then confirm that it is on record
        privacylib.set_permission(user_id)
        return privacylib.check_permission(user_id)


    def main():
        user_id = privacylib.get_user_id()
        # Collect data only when permission is on record,
        # whether it was granted just now or earlier
        if grant_permission(user_id):
            data = privacylib.collect_data(user_id)
            print(collect_data(user_id, data))
        else:
            print("Data collection failed: permission not granted")


    if __name__ == "__main__":
        main()

The above code example uses a privacy protection library called privacylib, which should be read as an illustrative name rather than a specific published package. The library is assumed to provide privacy-preserving features such as data anonymization and data storage. In the data collection function collect_data, the user's data is anonymized and only the anonymized data is stored in the database. In the grant_permission function, the privacy policy and the purposes of data collection are shown to the user, and data collection proceeds only once the user's authorization is on record.
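To make the anonymization and consent steps more concrete, here is a minimal sketch of one possible approach using only the Python standard library. It is not the behavior of privacylib. The function names (`pseudonymize_id`, `strip_identifiers`, `collect_record`), the field names in `DIRECT_IDENTIFIERS`, and the in-memory `store` dictionary are all hypothetical, chosen for illustration. Note that a keyed hash provides pseudonymization rather than full anonymization, because anyone holding the key can recompute the mapping.

```python
import hashlib
import hmac

# Hypothetical field names treated as direct identifiers (illustration only).
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymize_id(user_id: str, secret_key: bytes) -> str:
    """Replace a user ID with a keyed hash (HMAC-SHA256, hex encoded)."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def strip_identifiers(record: dict) -> dict:
    """Return a copy of the record without direct identifier fields."""
    return {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}


def collect_record(user_id, record, consented_users, secret_key, store):
    """Store a pseudonymized record only if the user has consented.

    `consented_users` is a set of user IDs and `store` is a dict standing in
    for a database; both are assumptions made for this sketch.
    """
    if user_id not in consented_users:
        return False
    store[pseudonymize_id(user_id, secret_key)] = strip_identifiers(record)
    return True


if __name__ == "__main__":
    store = {}
    key = b"replace-with-a-secret-key"
    collect_record("alice", {"name": "Alice", "age": 30}, {"alice"}, key, store)
    print(store)
```

In this sketch the raw user ID never reaches storage, and a record from a user who has not consented is rejected before any processing happens. A real system would also need key management and a durable consent log, which are outside the scope of this example.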

- Model training and privacy protection

In artificial intelligence technology, model training is a key step in achieving intelligent functions. However, the large amounts of data required for model training may contain sensitive information about users, such as personally identifiable information. To protect user privacy, measures such as anonymizing the data before it reaches the training step are needed to keep the data secure during model training.

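    # Import the privacy protection library (illustrative name)
    import privacylib


    def load_train_data():
        """Load the training data and anonymize it before use."""
        # Fetch the training data from the database
        train_data = privacylib.load_data()
        # Anonymize the training data
        anonymized_data = privacylib.anonymize(train_data)
        return anonymized_data


    def train_model(data):
        """Train a model (example only)."""
        model = privacylib.train(data)
        return model


    def main():
        data = load_train_data()
        # Assumption: the anonymized data is a sequence. Hold out the last 20%
        # as test data so that it is anonymized the same way as the training data.
        split = int(len(data) * 0.8)
        train_data, test_data = data[:split], data[split:]
        model = train_model(train_data)
        # Use the trained model for prediction and similar tasks
        predict_result = privacylib.predict(model, test_data)
        print("Prediction result:", predict_result)


    if __name__ == "__main__":
        main()

In the above code example, load_train_data uses the load_data function of the privacylib library to fetch data from the database and anonymizes it before returning it as training data. This reduces the risk that sensitive information is exposed during model training. The anonymized data is then used both to train the model and to evaluate it, so raw user data never reaches the training step.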
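As a rough illustration of what anonymizing training data can involve, the sketch below drops direct identifiers, generalizes an age column into ranges, and reports the size of the smallest group of rows that share the same quasi-identifier values (the k in k-anonymity). This is one possible technique, not a description of what privacylib.anonymize does. The function names, the field names (`name`, `email`, `age`, `zip`), and the 10-year bucket width are assumptions made for the example.

```python
from collections import Counter


def generalize_age(age: int, width: int = 10) -> str:
    """Map an exact age to a range such as '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def prepare_training_rows(rows, identifier_fields=("name", "email")):
    """Drop direct identifiers and generalize the 'age' field in each row."""
    prepared = []
    for row in rows:
        clean = {k: v for k, v in row.items() if k not in identifier_fields}
        if "age" in clean:
            clean["age"] = generalize_age(clean["age"])
        prepared.append(clean)
    return prepared


def smallest_group_size(rows, quasi_identifiers):
    """Return the size of the smallest group of rows that share the same
    quasi-identifier values; returns 0 for an empty dataset."""
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(groups.values()) if groups else 0


if __name__ == "__main__":
    rows = [
        {"name": "A", "age": 34, "zip": "100", "label": 1},
        {"name": "B", "age": 37, "zip": "100", "label": 0},
        {"name": "C", "age": 52, "zip": "100", "label": 1},
    ]
    prepared = prepare_training_rows(rows)
    print(prepared)
    print("k =", smallest_group_size(prepared, ["age", "zip"]))
```

A small k means some individuals remain easy to single out even after identifiers are removed, which is a signal to generalize further or suppress those rows before training.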

Summary:

The development of artificial intelligence technology has brought convenience and intelligence, but it has also brought challenges for privacy protection. During data collection and model training, privacy protection measures are needed to keep user data secure. Methods such as obtaining user consent, using privacy protection libraries, and anonymizing data can mitigate privacy risks in artificial intelligence technology, although they do not eliminate them. Privacy protection remains a complex issue that requires continued research and improvement to meet the growing demand for both intelligence and privacy.


The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

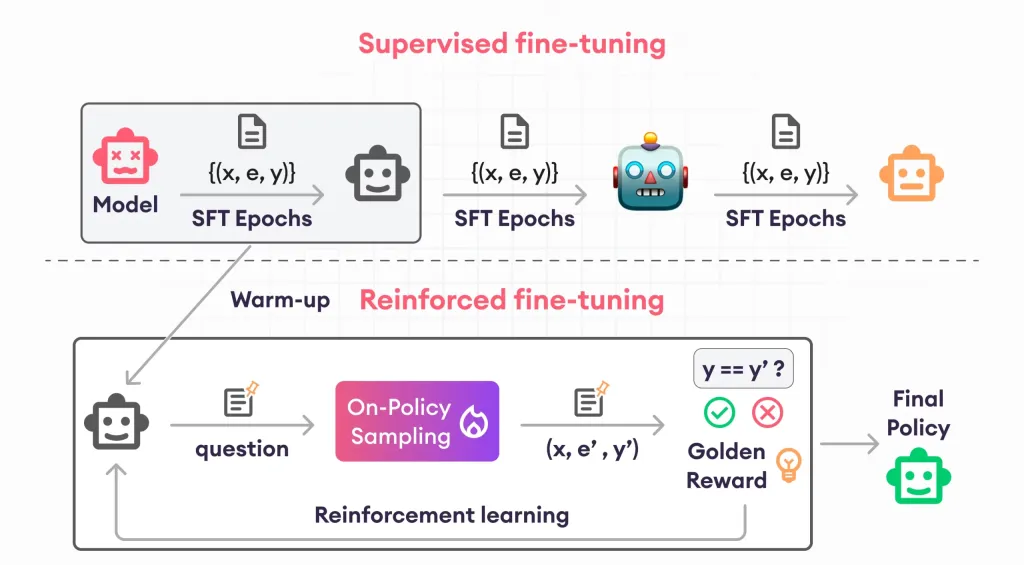

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

WebStorm Mac version

Useful JavaScript development tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Chinese version

Chinese version, very easy to use

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool