State-of-the-art self-supervised pre-training for lidar point clouds!

Paper idea:

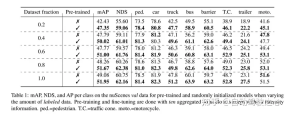

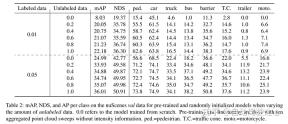

Masked autoencoding has become a successful pre-training paradigm for Transformer models of text, images, and, most recently, point clouds. Raw automotive datasets are well suited to self-supervised pre-training because they are generally cheaper to collect than annotations for tasks such as 3D object detection (OD). However, the development of masked autoencoders for point clouds has focused solely on synthetic and indoor data. As a result, existing methods have tailored their representations and models to small, dense point clouds with uniform point density. In this work, we investigate masked autoencoding for point clouds in automotive settings, which are sparse and whose density can vary significantly between objects in the same scene. To this end, we propose Voxel-MAE, a simple masked autoencoding pre-training scheme designed for voxel representations. We pre-train the backbone of a Transformer-based 3D object detector to reconstruct masked voxels and to distinguish empty voxels from non-empty ones. Our method improves 3D OD performance by 1.75 mAP points and 1.05 NDS on the challenging nuScenes dataset. Furthermore, we show that with Voxel-MAE pre-training, only 40% of the annotated data is needed to outperform a randomly initialized equivalent.

Main contributions:

This paper proposes Voxel-MAE, a method for deploying MAE-style self-supervised pre-training on voxelized point clouds, and evaluates it on the large-scale automotive point cloud dataset nuScenes. It is the first self-supervised pre-training scheme for automotive point cloud Transformer backbones.

We tailor our method for voxel representation and use a unique set of reconstruction tasks to capture the characteristics of voxelized point clouds.

We show that our method is data efficient and reduces the need for annotated data. With pre-training, the model outperforms its fully supervised counterpart while using only 40% of the annotated data.

Additionally, we find that Voxel-MAE improves the performance of a Transformer-based detector by 1.75 percentage points in mAP and 1.05 percentage points in NDS, which is a 2x performance boost compared with existing self-supervised methods.

Network Design:

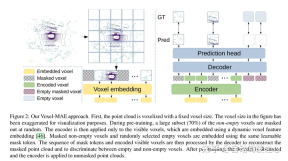

The purpose of this work is to extend MAE-style pre-training to voxelized point clouds. The core idea is still to use an encoder to create a rich latent representation from partial observations of the input, and then use a decoder to reconstruct the original input, as shown in Figure 2. After pre-training, the encoder is used as the backbone of the 3D object detector. However, due to fundamental differences between images and point clouds, some modifications are required for efficient training of Voxel-MAE.

Figure 2: Our Voxel-MAE method. First, the point cloud is voxelized with a fixed voxel size. Voxel sizes in the figure have been exaggerated for visualization purposes. During pre-training, a large portion (70%) of the non-empty voxels is randomly masked. The encoder is then applied only to the visible voxels, which are embedded using dynamic voxel feature embedding [46]. Masked non-empty voxels and randomly selected empty voxels are embedded using the same learnable mask token. The decoder then processes the sequence of mask tokens together with the encoded sequence of visible voxels to reconstruct the masked point cloud and to distinguish empty voxels from non-empty ones. After pre-training, the decoder is discarded and the encoder is applied to unmasked point clouds.
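The input preparation described in the Figure 2 caption (voxelization, masking 70% of the non-empty voxels, and drawing some empty voxels) can be sketched roughly as follows. This is a minimal NumPy illustration, not the authors' implementation: the function names, the voxel size, the synthetic point cloud, and the number of sampled empty voxels are all assumptions made for the example.

```python
import numpy as np

def voxelize(points, voxel_size):
    """Map each point to an integer voxel index and return the non-empty voxels."""
    coords = np.floor(points / voxel_size).astype(np.int64)
    # inverse[i] is the row of `voxels` that point i falls into
    voxels, inverse = np.unique(coords, axis=0, return_inverse=True)
    return voxels, inverse.reshape(-1)

def mask_voxels(num_voxels, mask_ratio, rng):
    """Randomly split the non-empty voxel indices into visible and masked sets."""
    num_masked = int(round(mask_ratio * num_voxels))
    perm = rng.permutation(num_voxels)
    return np.sort(perm[num_masked:]), np.sort(perm[:num_masked])

def sample_empty_voxels(voxels, num_samples, rng):
    """Draw distinct empty voxel indices inside the bounding box of the occupied ones."""
    lo, hi = voxels.min(axis=0), voxels.max(axis=0)
    occupied = {tuple(v) for v in voxels.tolist()}
    total = int(np.prod(hi - lo + 1))
    if num_samples > total - len(occupied):
        raise ValueError("not enough empty voxels in the bounding box")
    empty = set()
    while len(empty) < num_samples:
        cand = tuple(rng.integers(lo, hi + 1).tolist())  # upper bound is exclusive
        if cand not in occupied:
            empty.add(cand)
    return np.array(sorted(empty), dtype=np.int64)

# Hypothetical data: 2000 random points and an assumed voxel size.
rng = np.random.default_rng(0)
points = rng.uniform(-10.0, 10.0, size=(2000, 3))
voxels, point_to_voxel = voxelize(points, voxel_size=np.array([0.5, 0.5, 4.0]))
visible, masked = mask_voxels(len(voxels), mask_ratio=0.7, rng=rng)
empty = sample_empty_voxels(voxels, num_samples=50, rng=rng)
```

In this sketch only the `visible` voxels would be passed to the encoder, while the `masked` and `empty` voxels would both be represented by the shared mask token on the decoder side.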

Figure 1: MAE (left) divides the image into fixed-size non-overlapping patches. Existing masked point modeling methods (middle) create a fixed number of point cloud patches by using farthest point sampling and k-nearest neighbors. Our method (right) uses non-overlapping voxels and a dynamic number of points.
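The contrast drawn in Figure 1 can be illustrated with a small NumPy sketch: farthest point sampling plus k-nearest neighbors yields a fixed number of patches with a fixed number of points each, whereas voxel grouping yields non-overlapping cells whose point counts vary. The function names, the point cloud, and the values of `num_centers`, `k`, and `voxel_size` are assumptions chosen for illustration, not settings from the paper.

```python
import numpy as np

def farthest_point_sampling(points, num_centers):
    """Greedily pick points that are far from all previously picked points."""
    centers = [0]  # start from the first point
    dist = np.linalg.norm(points - points[0], axis=1)
    for _ in range(num_centers - 1):
        nxt = int(np.argmax(dist))
        centers.append(nxt)
        # keep, for every point, the distance to its nearest chosen center
        dist = np.minimum(dist, np.linalg.norm(points - points[nxt], axis=1))
    return np.array(centers)

def knn_patches(points, center_idx, k):
    """Return the indices of the k nearest points for every center (patches may overlap)."""
    d = np.linalg.norm(points[center_idx][:, None, :] - points[None, :, :], axis=2)
    return np.argsort(d, axis=1)[:, :k]

def voxel_point_counts(points, voxel_size):
    """Return the number of points in each non-empty, non-overlapping voxel."""
    coords = np.floor(points / voxel_size).astype(np.int64)
    _, counts = np.unique(coords, axis=0, return_counts=True)
    return counts

rng = np.random.default_rng(0)
points = rng.uniform(0.0, 10.0, size=(500, 3))

centers = farthest_point_sampling(points, num_centers=16)
patches = knn_patches(points, centers, k=8)         # always 16 patches of 8 points
counts = voxel_point_counts(points, voxel_size=2.0)  # one count per non-empty voxel
```

Here `patches` always has the same shape regardless of the scene, while the length of `counts` and its entries depend on how the points are distributed, which is the property the voxel representation is meant to accommodate.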

Experimental results:


Citation:

Hess G, Jaxing J, Svensson E, et al. Masked autoencoder for self-supervised pre-training on lidar point clouds[C]//Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision. 2023: 350-359.
