Everyone has a ChatGPT! Microsoft releases DeepSpeed Chat: one-click RLHF training for models with hundreds of billions of parameters


Is the dream of everyone having their own ChatGPT about to come true?

Microsoft has just open-sourced DeepSpeed Chat, a system framework that adds a complete RLHF pipeline to model training.

In other words, high-quality ChatGPT-like models of all sizes are now within easy reach!

Project address: https://github.com/microsoft/DeepSpeed

Unlock a hundred-billion-parameter ChatGPT with one click and easily cut costs 15-fold

As is well known, because OpenAI is not open, the open-source community has released LLaMA, Alpaca, Vicuna, Databricks-Dolly, and other models one after another so that more people can use ChatGPT-like models.

However, without an end-to-end RLHF system that scales, training ChatGPT-like models remains very difficult. DeepSpeed Chat fills exactly this gap.

Even better, DeepSpeed Chat greatly reduces cost.

Previously, expensive multi-GPU setups were beyond the reach of many researchers, and even with access to a multi-GPU cluster, existing methods could not handle training ChatGPT-like models with hundreds of billions of parameters.

Now, for only $1,620, you can train an OPT-66B model in 2.1 days through the hybrid engine DeepSpeed-HE.

On a multi-node, multi-GPU system, DeepSpeed-HE can train an OPT-13B model in 1.25 hours for $320, and an OPT-175B model in less than a day for $5,120.
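As a rough sanity check on these figures, the short Python sketch below derives the implied hourly price from the quoted numbers ($1,620 for 2.1 days, $320 for 1.25 hours, $5,120 for OPT-175B). The assumption that the OPT-175B run is billed at the same hourly rate as the OPT-13B run is mine, not the article's, so the derived duration is only indicative.

```python
# Figures quoted in the article (USD and wall-clock time).
OPT_66B_COST, OPT_66B_DAYS = 1620, 2.1
OPT_13B_COST, OPT_13B_HOURS = 320, 1.25
OPT_175B_COST = 5120


def hourly_rate(cost_usd: float, hours: float) -> float:
    """Implied price per wall-clock hour for the whole machine or cluster."""
    return cost_usd / hours


single_setup_rate = hourly_rate(OPT_66B_COST, OPT_66B_DAYS * 24)
cluster_rate = hourly_rate(OPT_13B_COST, OPT_13B_HOURS)

# ASSUMPTION (not stated in the article): the OPT-175B run is billed at the
# same hourly rate as the OPT-13B run.
opt_175b_hours = OPT_175B_COST / cluster_rate

print(f"OPT-66B setup: about ${single_setup_rate:.2f} per hour")
print(f"OPT-13B setup: ${cluster_rate:.2f} per hour")
print(f"OPT-175B implied duration: {opt_175b_hours:.1f} hours")
```

Under that assumption the OPT-175B run comes out at well under 24 hours, which is consistent with the "less than a day" claim.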

Elvis, formerly of Meta AI, excitedly shared the news, calling it a big deal and wondering how DeepSpeed Chat compares with ColossalChat.

Next, let's take a look at the results.

After training with DeepSpeed-Chat, the 1.3-billion-parameter "ChatGPT" performed very well in question answering. It not only picks up the context of the question but also gives relevant answers.

In multi-turn conversations, this 1.3-billion-parameter "ChatGPT" performed far better than one would expect from a model of this size.

Of course, before trying it out, you need to set up the environment:

git clone https://github.com/microsoft/DeepSpeed.git
cd DeepSpeed
pip install .
git clone https://github.com/microsoft/DeepSpeedExamples.git
cd DeepSpeedExamples/applications/DeepSpeed-Chat/
pip install -r requirements.txt

Train a 1.3-billion-parameter ChatGPT over a cup of coffee

If you only have a 1-2 hour coffee or lunch break, you can still use DeepSpeed-Chat to train a "small toy" model.

The team has prepared a training example for the 1.3B model that can be run on a consumer-grade GPU. Best of all, everything is ready by the time you come back from lunch.

python train.py --actor-model facebook/opt-1.3b --reward-model facebook/opt-350m --num-gpus 1

Reference hardware: a single consumer-grade NVIDIA A6000 GPU with 48 GB of memory.

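One GPU node, 13 billion parameters in half a day

If you have only half a day and a single server node, you can use a pretrained OPT-13B as the actor model and OPT-350M as the reward model to produce a 13-billion-parameter ChatGPT-like model: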

python train.py --actor-model facebook/opt-13b --reward-model facebook/opt-350m --num-gpus 8

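Reference hardware: a single DGX node with 8 NVIDIA A100-40G GPUs.

A budget-friendly cloud option: training a 66-billion-parameter model

If you have access to a multi-node cluster or cloud resources and want to train a larger, higher-quality model, simply enter the model size you want (e.g., 66B) and the number of GPUs (e.g., 64) in the following command: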

python train.py --actor-model facebook/opt-66b --reward-model facebook/opt-350m --num-gpus 64

Reference hardware: 8 DGX nodes, each with 8 NVIDIA A100-80G GPUs.
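The three invocations shown in this section differ only in the actor model and the GPU count. The following Python sketch assembles them from those two values. The helper `build_train_command` and the `CONFIGS` list are hypothetical names introduced for illustration; the flags are only the ones that appear in the commands above, and the sketch prints the commands rather than running them.

```python
import shlex
from typing import List


def build_train_command(actor_model: str, num_gpus: int,
                        reward_model: str = "facebook/opt-350m") -> List[str]:
    """Assemble a train.py invocation using only the flags shown in the article."""
    return [
        "python", "train.py",
        "--actor-model", actor_model,
        "--reward-model", reward_model,
        "--num-gpus", str(num_gpus),
    ]


# The three configurations described in the article: (actor model, GPU count).
CONFIGS = [
    ("facebook/opt-1.3b", 1),   # single consumer-grade GPU
    ("facebook/opt-13b", 8),    # single DGX node
    ("facebook/opt-66b", 64),   # 8 DGX nodes
]

for actor, gpus in CONFIGS:
    # shlex.join quotes arguments safely for copy-pasting into a shell.
    print(shlex.join(build_train_command(actor, gpus)))
```

The argument list could also be passed to `subprocess.run` from inside the DeepSpeed-Chat directory, assuming the installed version of `train.py` still accepts these flags.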

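Specifically, the time and cost the DeepSpeed-RLHF system requires for different model sizes and hardware configurations are as follows:

What is DeepSpeed Chat?

DeepSpeed Chat is a general system framework that enables end-to-end RLHF training of ChatGPT-like models, helping us produce our own high-quality ChatGPT-like models.

DeepSpeed Chat has three core capabilities:

1. A simplified training and enhanced inference experience for ChatGPT-style models

With a single script, developers can run multiple training steps, and afterwards use the inference API for conversational, interactive testing.

2. The DeepSpeed-RLHF module

DeepSpeed-RLHF replicates the training pipeline from the InstructGPT paper and provides data abstraction and blending capabilities, so developers can train on data from multiple sources.

3. The DeepSpeed-RLHF system

The team has integrated DeepSpeed's training engine and inference engine into a unified hybrid engine (DeepSpeed Hybrid Engine, or DeepSpeed-HE) for RLHF training. Because DeepSpeed-HE switches seamlessly between inference and training modes, it can take advantage of the various optimizations from DeepSpeed-Inference.

The DeepSpeed-RLHF system is unmatched in efficiency at large scale, making complex RLHF training fast, affordable, and easy to scale:

- Efficient and affordable:

DeepSpeed-HE is more than 15 times faster than existing systems, making RLHF training fast and affordable.

For example, on Azure, DeepSpeed-HE can train an OPT-13B model in just 9 hours and an OPT-30B model in just 18 hours, at a cost of under $300 and $600 respectively.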

- Excellent scalability:

DeepSpeed-HE supports training models with hundreds of billions of parameters and scales well on multi-node, multi-GPU systems.

As a result, a 13-billion-parameter model can be trained in just 1.25 hours, and a 175-billion-parameter model in less than a day.

- Democratizing RLHF training:

With just a single GPU, DeepSpeed-HE supports training models with more than 13 billion parameters. This lets data scientists and researchers without access to multi-GPU systems create not only lightweight RLHF models but also large, powerful ones for different use cases.

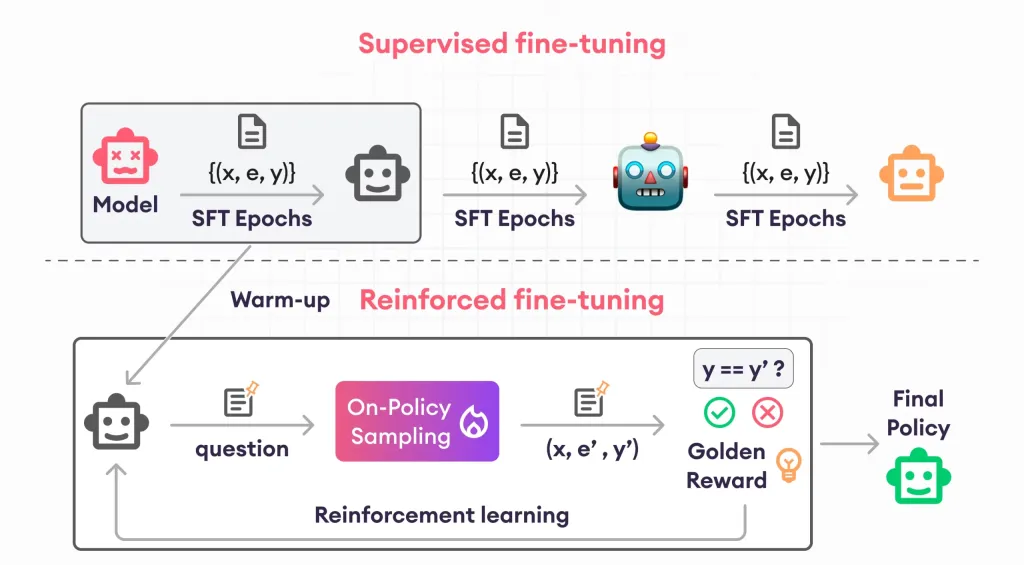

Complete RLHF training process

To provide a seamless training experience, the researchers followed InstructGPT and built a complete end-to-end training pipeline into DeepSpeed-Chat.

DeepSpeed-Chat's RLHF training pipeline diagram, including some optional features

The pipeline consists of three main steps:

- Step 1:

Supervised fine-tuning (SFT), in which curated human answers are used to fine-tune a pretrained language model to respond to a variety of queries.

- Step 2:

Reward model fine-tuning, in which a separate reward model (RW), usually smaller than the SFT model, is trained on a dataset containing human ratings of multiple answers to the same query.

- Step 3:

RLHF training. In this step, the SFT model is further fine-tuned with reward feedback from the RW model, using the Proximal Policy Optimization (PPO) algorithm.
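To make steps 2 and 3 more concrete, here is a minimal Python sketch of the two objectives commonly used in InstructGPT-style pipelines: a pairwise ranking loss for the reward model and the clipped PPO surrogate for the policy. This illustrates the general technique on scalar inputs only and is not taken from DeepSpeed-Chat's code; the function names and the default `clip_eps=0.2` are assumptions.

```python
import math


def pairwise_reward_loss(score_chosen: float, score_rejected: float) -> float:
    """Pairwise ranking loss: -log(sigmoid(chosen - rejected)).

    The loss is small when the human-preferred answer gets the higher score.
    """
    margin = score_chosen - score_rejected
    # -log(sigmoid(m)) is algebraically equal to log(1 + exp(-m)).
    return math.log1p(math.exp(-margin))


def ppo_clipped_objective(ratio: float, advantage: float,
                          clip_eps: float = 0.2) -> float:
    """Clipped PPO surrogate for one sample (to be maximized).

    `ratio` is pi_new(action) / pi_old(action); clipping it to
    [1 - clip_eps, 1 + clip_eps] limits how far one update can move the policy.
    """
    clipped_ratio = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
    return min(ratio * advantage, clipped_ratio * advantage)


# The reward model is penalized more when it ranks the pair the wrong way round.
print(pairwise_reward_loss(2.0, 0.0), pairwise_reward_loss(0.0, 2.0))
# A large ratio with positive advantage is capped by the clip.
print(ppo_clipped_objective(1.5, 1.0))
```

In a real training loop these quantities would be tensors averaged over a batch, and the advantage would come from a critic model.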

In step 3, the researchers also provide two additional features to help improve model quality:

- Exponential moving average (EMA) collection, which lets you choose an EMA-based checkpoint for the final evaluation.

- Hybrid training, which mixes the pre-training objective (i.e., next-word prediction) with the PPO objective to prevent performance regression on public benchmarks (such as SQuAD2.0).
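Both features reduce to short formulas. The sketch below shows a generic EMA update over named weights and a weighted sum of the PPO loss and the pre-training loss. It is a plain-Python illustration, not DeepSpeed-Chat's implementation; the function names, `decay=0.99`, and `pretrain_coef` are assumed for the example.

```python
from typing import Dict


def ema_update(ema: Dict[str, float], weights: Dict[str, float],
               decay: float = 0.99) -> Dict[str, float]:
    """One EMA step: ema <- decay * ema + (1 - decay) * current weights."""
    return {name: decay * ema[name] + (1.0 - decay) * weights[name]
            for name in weights}


def mixed_objective(ppo_loss: float, pretrain_loss: float,
                    pretrain_coef: float = 1.0) -> float:
    """Combine the PPO loss with a next-word-prediction loss."""
    return ppo_loss + pretrain_coef * pretrain_loss


# Toy run: the EMA copy trails the raw weights and smooths out their jumps.
ema = {"w": 0.0}
for step_weights in ({"w": 1.0}, {"w": 1.0}, {"w": 3.0}):
    ema = ema_update(ema, step_weights, decay=0.5)
print(ema)

print(mixed_objective(ppo_loss=0.5, pretrain_loss=2.0, pretrain_coef=0.25))
```

The EMA copy is what would be saved as the "EMA-based checkpoint"; keeping it means holding one more set of weights in memory.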

EMA and hybrid training are often omitted by other open-source frameworks because training can proceed without them.

However, according to InstructGPT, EMA checkpoints tend to give better response quality than the conventional final trained model, and hybrid training helps the model retain its pre-training benchmark performance.

The researchers therefore provide these features so that users can get the full training experience described in InstructGPT.

Besides staying closely aligned with the InstructGPT paper, the researchers also provide features that let developers train their own RLHF models on a variety of data sources:

- Data abstraction and blending capabilities:

DeepSpeed-Chat is equipped with (1) an abstract dataset layer that unifies the format of different datasets; and (2) data split/blend functionality, whereby multiple datasets are blended appropriately and then split across the three training stages.
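One way to picture these two pieces is sketched below: adapters convert differently shaped sources into a single record type, and a helper blends the records and splits them across three stages. Everything here is hypothetical (the `Example` type, the adapter names, the source schemas, and the 20/40/40 split); it illustrates the idea and does not mirror DeepSpeed-Chat's actual classes.

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple


@dataclass(frozen=True)
class Example:
    """Unified record: a prompt with a preferred and a rejected answer."""
    prompt: str
    chosen: str
    rejected: str


def from_qa_pairs(rows: Sequence[Dict[str, str]]) -> List[Example]:
    """Adapter for a hypothetical source with question/good/bad keys."""
    return [Example(r["question"], r["good"], r["bad"]) for r in rows]


def from_ranked(rows: Sequence[Tuple[str, List[str]]]) -> List[Example]:
    """Adapter for a hypothetical source of (prompt, answers ranked best-first)."""
    return [Example(prompt, answers[0], answers[-1]) for prompt, answers in rows]


def blend_and_split(datasets: Sequence[List[Example]],
                    fractions: Tuple[float, float, float] = (0.2, 0.4, 0.4),
                    seed: int = 0) -> Tuple[List[Example], ...]:
    """Blend all datasets, shuffle once, then cut into three disjoint stages."""
    blended = [ex for ds in datasets for ex in ds]
    random.Random(seed).shuffle(blended)
    cut1 = int(len(blended) * fractions[0])
    cut2 = cut1 + int(len(blended) * fractions[1])
    return blended[:cut1], blended[cut1:cut2], blended[cut2:]


qa = from_qa_pairs([{"question": f"q{i}", "good": "g", "bad": "b"} for i in range(6)])
ranked = from_ranked([(f"p{i}", ["best", "mid", "worst"]) for i in range(4)])
sft_data, reward_data, rlhf_data = blend_and_split([qa, ranked])
print(len(sft_data), len(reward_data), len(rlhf_data))
```

Splitting after blending keeps the three stages disjoint, so no example is reused across stages.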

DeepSpeed Hybrid Engine

Steps 1 and 2 of the instruction-guided RLHF pipeline resemble conventional fine-tuning of large models. They achieve scale and speed through ZeRO-based optimization combined with the flexible parallelism strategies in DeepSpeed training.

Step 3 of the pipeline, however, is the most complex part in terms of performance impact.

Each iteration has to handle two phases efficiently: a) the inference phase, which generates tokens/experience as training input; and b) the training phase, which updates the weights of the actor and reward models, along with the interaction and scheduling between the two.

This introduces two major difficulties: (1) memory cost, since several copies of the SFT and RW models must be kept running throughout stage 3; and (2) the answer generation phase is slow and, unless properly accelerated, significantly slows down the whole of stage 3.
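A back-of-the-envelope calculation gives a feel for the memory cost. The sketch below counts only fp16 weights (2 bytes per parameter) for the OPT-13B actor and OPT-350M reward configuration mentioned earlier in the article. It assumes that stage 3 holds two 13B-sized models (a trainable actor and a frozen reference copy) and two 350M-sized models (a critic and the reward model); that breakdown is my assumption, and optimizer states, gradients, activations, and the KV cache are deliberately ignored.

```python
BYTES_PER_PARAM_FP16 = 2


def weights_gb(num_params: float) -> float:
    """Memory for fp16 weights only, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM_FP16 / 1e9


# ASSUMPTION: which models are resident in stage 3 and their sizes.
stage3_models = {
    "actor (13B)": 13e9,
    "reference (13B)": 13e9,
    "critic (350M)": 350e6,
    "reward (350M)": 350e6,
}

total_gb = sum(weights_gb(n) for n in stage3_models.values())
for name, params in stage3_models.items():
    print(f"{name:>16}: {weights_gb(params):6.1f} GB")
print(f"{'total':>16}: {total_gb:6.1f} GB (weights only)")
```

Even this weights-only lower bound already exceeds a single 48 GB GPU, which is why memory optimization matters so much in this stage.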

In addition, the two features the researchers added in stage 3, exponential moving average (EMA) collection and hybrid training, incur additional memory and training costs.

To address these challenges, the researchers combined the full system capabilities of DeepSpeed training and inference into a unified infrastructure, the Hybrid Engine.

It uses the original DeepSpeed engine for fast training while effortlessly applying the DeepSpeed inference engine in generation/evaluation mode, providing a significantly faster training system for stage 3 of RLHF training.

As shown in the figure below, the transition between the DeepSpeed training and inference engines is seamless: with the usual eval and train modes enabled on the actor model, DeepSpeed selects different optimizations for the inference and training passes to run the model faster and improve overall system throughput.

DeepSpeed Hybrid Engine design for accelerating the most time-consuming part of the RLHF pipeline

During inference in the experience generation phase of RLHF training, the DeepSpeed Hybrid Engine uses a lightweight memory management system to handle the KV cache and intermediate results, together with highly optimized inference CUDA kernels and tensor parallelism. Compared with existing solutions, this greatly improves throughput (tokens per second).

During training, the Hybrid Engine enables memory optimization techniques such as DeepSpeed's ZeRO family and Low-Rank Adaptation (LoRA).

The researchers designed and implemented these system optimizations to be compatible with one another, so that they can be combined under the unified Hybrid Engine for the highest training efficiency.

The Hybrid Engine can seamlessly change the model partitioning between training and inference, supporting tensor-parallel inference and ZeRO-based sharding for training.

It can also reconfigure the memory system to maximize available memory in each mode.

This avoids memory allocation bottlenecks and supports large batch sizes, greatly improving performance.
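The mode switch described in this section can be pictured with a toy Python class. This is not DeepSpeed's API: `HybridEngineSketch`, its attributes, and the layout labels are hypothetical, and the "generation" and "training" bodies are placeholders. The sketch only mimics the control flow of switching layouts and freeing generation memory when going back to training.

```python
from typing import List, Optional


class HybridEngineSketch:
    """Toy stand-in for a wrapper that re-partitions a model per mode."""

    def __init__(self) -> None:
        self.mode = "train"
        self.layout = "zero_sharded"            # label only, no real sharding
        self.kv_cache: Optional[List[int]] = None
        self.switches = 0

    def eval(self) -> "HybridEngineSketch":
        """Enter generation mode: tensor-parallel layout plus a KV cache."""
        if self.mode != "eval":
            self.mode, self.layout = "eval", "tensor_parallel"
            self.kv_cache = []
            self.switches += 1
        return self

    def train(self) -> "HybridEngineSketch":
        """Enter training mode: sharded layout, generation memory released."""
        if self.mode != "train":
            self.mode, self.layout = "train", "zero_sharded"
            self.kv_cache = None
            self.switches += 1
        return self

    def generate(self, prompt: List[int], max_new_tokens: int = 4) -> List[int]:
        if self.mode != "eval":
            raise RuntimeError("call eval() before generate()")
        tokens = list(prompt)
        for _ in range(max_new_tokens):
            self.kv_cache.append(tokens[-1])    # placeholder for cached keys/values
            tokens.append(tokens[-1] + 1)       # placeholder for real decoding
        return tokens

    def train_step(self, experience: List[int]) -> int:
        if self.mode != "train":
            raise RuntimeError("call train() before train_step()")
        return len(experience)                  # placeholder for a real update


# One RLHF iteration: generate experience, then switch back and train on it.
engine = HybridEngineSketch()
experience = engine.eval().generate([1, 2, 3], max_new_tokens=4)
consumed = engine.train().train_step(experience)
print(experience, consumed, engine.layout, engine.switches)
```

The point of the design is that the caller only toggles `eval()` and `train()`, while the engine decides what layout and memory setup each mode gets.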

In summary, the Hybrid Engine pushes the boundaries of modern RLHF training, delivering unparalleled scale and system efficiency for RLHF workloads.

Compared with existing systems such as Colossal-AI or HuggingFace-DDP, DeepSpeed-Chat delivers over an order of magnitude higher throughput, making it possible to train larger actor models within the same latency budget or to train similarly sized models at lower cost.

For example, on a single GPU DeepSpeed improves RLHF training throughput by more than 10 times. While CAI-Coati and HF-DDP can both run models of up to 1.3B parameters, DeepSpeed can run a 6.5B model on the same hardware, a fivefold increase in model size.

On a single node with multiple GPUs, DeepSpeed-Chat's system throughput is 6-19 times higher than CAI-Coati's and 1.4-10.5 times higher than HF-DDP's.

The team stated that one of the key reasons why DeepSpeed-Chat can achieve such excellent results is the acceleration provided by the hybrid engine during the generation phase.
