
16 top scholars debate AGI! Marcus, the father of LSTM, and a MacArthur Genius Grant winner gather together


After a one-year hiatus, the annual Artificial Intelligence Debate organized by Montreal.AI and New York University Professor Emeritus Gary Marcus returned last Friday night and, as in 2020, was held as an online conference.

This year's debate, AI Debate 3: The AGI Debate, focused on the concept of artificial general intelligence, i.e., machines capable of integrating a myriad of near-human-level reasoning capabilities.

## Video link: https://www.youtube.com/watch?v=JGiLz_Jx9uI&t=7s

This discussion lasted for three and a half hours, focusing on five topics related to artificial intelligence: Cognition and Neuroscience, Common Sense, Architecture, Ethics and Morality, and Policy and Contribution.

Sixteen experts took part, including many big names in computer science as well as computational neuroscientist Konrad Kording.

This article briefly summarizes the views of five of the leading participants. Interested readers can watch the full video through the link above.

Moderator: Marcus

A well-known critic, Marcus cited his New Yorker article "Is 'Deep Learning' a Revolution in Artificial Intelligence?" and once again poured cold water on the development of AI.

Marcus said that, despite the decade-long wave of enthusiasm for artificial intelligence that followed the successful release of ImageNet by Fei-Fei Li's team, the "wish" to build omnipotent machines has not been realized.

Dileep George, a neuroscientist from Google DeepMind, once proposed a concept called "innateness."

Put simply, it refers to certain ideas that are "built in" to the human mind.

So for artificial intelligence, should we pay more attention to innateness?

In this regard, George said that any kind of growth and development from an initial state to a certain stable state involves three factors.

The first is the internal structure in the initial state, the second is the input data, and the third is the universal natural law.

"It turns out that innate structures play an extraordinary role in every area we discover."

Even for what are considered classic examples of learning, such as acquiring a language, once you start to break it down, you realize that the data has almost no impact on it.

Language has not changed since the dawn of man, as evidenced by the fact that any child in any culture can master it.

George believes that language will become the core of artificial intelligence, giving us the opportunity to figure out what makes humans such a unique species.

University of Washington professor Yejin Choi

Yejin Choi, a professor of computer science at the University of Washington, predicts that the performance of AI will become increasingly impressive over the next few years.

But since we don't know the depth of these networks, they will continue to make mistakes on adversarial and corner cases.

"For machines, the dark matter of language and intelligence may be common sense."

Of course, the dark matter mentioned here is something that is easy for humans but difficult for machines.

Jürgen Schmidhuber, the father of LSTM

Marcus said that we can now obtain a large amount of knowledge from large language models, but that this paradigm needs to change, because a language model is actually "deprived" of multiple types of input.

Jürgen Schmidhuber, director of the Swiss Artificial Intelligence Laboratory IDSIA and the father of LSTM, responded: "Most of what we are discussing today was, at least in principle, solved many years ago by general-purpose neural networks." Such systems are "less than human."

Schmidhuber said that as computing power becomes cheaper every few years, the "old theory" is coming back. "We can do a lot of things with these old algorithms that we couldn't do at the time."

Then IBM researcher Francesca Rossi asked Schmidhuber a question: "Why do we still see systems without the capabilities we want? Why do you think the techniques you have defined still have not made it into current systems?"

In this regard, Schmidhuber believes that the current main issue is computing cost:

Recurrent networks can run arbitrary algorithms, and one of the most beautiful aspects of them is that they can also learn learning algorithms. The big question is which algorithms they can learn. We may need better algorithms, and options for improving the learning algorithm.

The first such system appeared in 1992, when I wrote my first paper on it. There was little we could do with it at that time. Today we can have millions and billions of weights.

Recent work with my students has shown that these old concepts, with a few improvements here and there, suddenly work so well that you can learn new learning algorithms that are better than backpropagation.
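To make the phrase "learning a learning algorithm" a little more concrete, here is a deliberately tiny sketch. It is not the recurrent-network approach Schmidhuber describes; it only assumes a two-level setup in which an inner loop learns a task with gradient descent and an outer loop chooses one parameter of that learning rule (the learning rate). The task family, the function names, and the candidate values are all hypothetical and chosen for illustration.

```python
import random


def inner_train(lr, target, steps=20):
    """Inner loop: plain gradient descent on the loss (w - target)^2."""
    w = 0.0
    for _ in range(steps):
        grad = 2.0 * (w - target)  # derivative of (w - target)^2
        w -= lr * grad
    return (w - target) ** 2  # final loss after training


def meta_loss(lr, targets):
    """Average final loss of the inner learner over a set of tasks."""
    return sum(inner_train(lr, t) for t in targets) / len(targets)


def meta_learn(targets, candidates):
    """Outer loop: keep the learning rate whose inner learner does best."""
    return min(candidates, key=lambda lr: meta_loss(lr, targets))


# Hypothetical task family: each task is "find a hidden number".
rng = random.Random(0)
tasks = [rng.uniform(-5.0, 5.0) for _ in range(10)]

hand_designed_lr = 0.01  # a fixed, hand-picked learning rule
candidates = [0.01, 0.05, 0.1, 0.3, 0.5, 0.9]  # assumed search space
learned_lr = meta_learn(tasks, candidates)

print("hand-designed loss:", meta_loss(hand_designed_lr, tasks))
print("learned lr:", learned_lr, "loss:", meta_loss(learned_lr, tasks))
```

On this quadratic toy problem the outer loop settles on a learning rate of 0.5, which removes the error in a single step. The systems discussed in the debate search over far richer learning rules than a single number, but the division into an inner learner and an outer search is the same idea.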

Jeff Clune, associate professor at the University of British Columbia

The topic discussed by Jeff Clune, associate professor of computer science at the University of British Columbia, is "AI Generating Algorithms: The Fastest Path to AGI."

Clune said that today's artificial intelligence is taking a "manual path," meaning that learning rules and objective functions have to be designed by hand.

He believes that, in practice, manual design will eventually give way to automatic generation.

Clune then laid out the "three pillars" for advancing AI: meta-learning architectures, meta-learning the learning algorithms themselves, and automatically generating effective learning environments and data.

Here, Clune suggests adding a "fourth pillar", which is "utilizing human data." For example, models running in the Minecraft environment can achieve "huge improvements" by learning from videos of humans playing the game.

Finally, Clune predicts that we have a 30% chance of achieving AGI by 2030, and that doesn’t require a new paradigm.

It is worth noting that AGI is defined here as "the ability to complete more than 50% of economically valuable human work."

To summarize

At the end of the discussion, Marcus gave all participants 30 seconds to answer a question: "If you could give students one piece of advice, e.g., which question in artificial intelligence we most need to study now, or how to prepare for a world where artificial intelligence is increasingly mainstream and central, what would it be?"

Choi said: "We have to address the alignment of AI with human values, particularly with an emphasis on diversity; I think that's one of the really key challenges we face, along with, more broadly, challenges like robustness, generalization and explainability."

George gave advice from the perspective of research direction: "First decide whether you want to engage in large-scale research or basic research, because they have different trajectories."

Clune: "AGI is coming. So, for researchers developing AI, I encourage you to engage in technologies based on engineering, algorithms, meta-learning, end-to-end learning, etc., because these are most likely to be absorbed into the AGI we are creating. Perhaps the most important question for non-AI researchers is governance. For example, what are the rules when developing AGI? Who decides the rules? And how do we get researchers around the world to follow the rules that are set?"

At the end of the evening, Marcus recalled his speech in the previous debate: "It takes a village to cultivate artificial intelligence."

"I think that's even more true now," he said. "AI used to be a child, but now it's a bit like a rambunctious teenager who has not yet fully developed mature judgment."

He concluded: "This moment is both exciting and dangerous."

The above is the detailed content of 16 top scholars debate AGI! Marcus, the father of LSTM and MacArthur Genius Grant winner gathered together. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

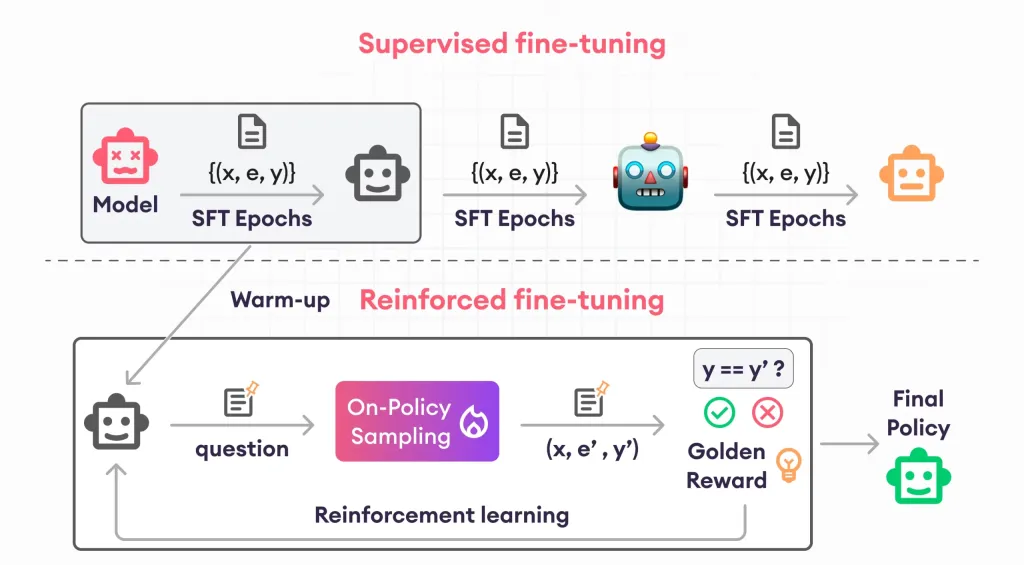

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

SublimeText3 Linux new version

SublimeText3 Linux latest version

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.