After the overwhelming emergence of large models, computer science finally became a 'natural science'

We are living in a wondrous era for artificial intelligence (AI): impressive results keep appearing on tacit-knowledge tasks (see "Polanyi's Revenge and AI's New Romance with Tacit Knowledge", https://bit.ly/3qYrAOY) that were long assumed to be out of reach of computers for a long time to come. Of particular recent interest are large-scale learned systems based on the Transformer architecture, trained with billions of parameters on web-scale multimodal corpora. Typical examples are large language models such as GPT3 and PALM, which respond to arbitrary text prompts, and language/image models such as DALL-E and Imagen, which convert text into images (and even models with claims to general behavior, such as GATO).

The emergence of large-scale learned models has fundamentally changed the nature of artificial intelligence research. Researchers experimenting with DALL-E recently suggested that it seems to have developed its own private language, and that humans who mastered it might be able to interact with DALL-E better. Other researchers have found that GPT3's performance on reasoning problems can be improved by adding certain magic incantations (such as "Let's think step by step") to the prompt. Large learned models like GPT3 and DALL-E are now like "alien species" whose behavior we have to try to decode.
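As a rough illustration of the "magic incantation" idea, the sketch below builds two versions of the same prompt, one plain and one ending with the trigger phrase quoted above. The `Q:`/`A:` layout, the function name `build_prompt`, and the sample question are assumptions made for this example; no model is called, and the snippet only shows how the prompt text differs.

```python
# Hypothetical helper: formats a question as a prompt and optionally
# appends the trigger phrase. The "Q:/A:" layout is an assumption.
COT_TRIGGER = "Let's think step by step."


def build_prompt(question: str, use_trigger: bool = False) -> str:
    """Return a prompt string, with the trigger phrase appended if requested."""
    prompt = f"Q: {question.strip()}\nA:"
    if use_trigger:
        prompt += f" {COT_TRIGGER}"
    return prompt


# Illustrative question, not taken from any benchmark.
question = "A shelf holds 3 boxes with 4 books each. How many books are there?"

plain_prompt = build_prompt(question)
trigger_prompt = build_prompt(question, use_trigger=True)

print(plain_prompt)
print("---")
print(trigger_prompt)
```

Sending both prompts to the same model and comparing the answers is the kind of outside-in experiment the paragraph describes: the only variable changed is a short phrase in the input.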

This is undoubtedly a strange turning point for artificial intelligence. Since its inception, the field has occupied a "no man's land" between engineering (building systems with specific functions) and science (discovering the regularities of natural phenomena). The scientific side of AI stems from its original ambition to provide insight into the nature of human intelligence, while the engineering side stems from a focus on intelligent capabilities (getting computers to exhibit intelligent behavior) rather than on insight into human intelligence.

The situation is changing rapidly, especially as artificial intelligence has become nearly synonymous with large-scale learned models. At present, no one really knows how the trained models come to have a particular capability, or even what other capabilities they may have (such as PALM's claimed ability to "explain jokes"). Even their creators are often caught off guard by what these systems can do. Probing these systems to map the scope of their capabilities has become a recent trend in artificial intelligence research.

It is becoming increasingly clear that some parts of artificial intelligence are straying from their engineering roots. It is difficult today to think of large learned systems as engineering designs with specific goals in the traditional sense. After all, one cannot say that one's children are "designed." Engineering does not typically celebrate unexpected new properties of the systems it designs (civil engineers would not cheer on discovering that a bridge they designed to withstand a Category 5 hurricane can also levitate).

There is growing evidence that the study of these large, trained (but not designed) systems is destined to become a kind of natural science: observing what capabilities the systems have, doing ablation studies, and conducting qualitative analyses of best practices.
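To make "ablation study" concrete, here is a minimal sketch on a toy system. It assumes a tiny linear model with three named weights and a hand-made dataset, all of which are hypothetical; real studies ablate components of large networks, but the procedure has the same shape: disable one part at a time and measure how much the behavior degrades.

```python
from typing import Dict, List, Tuple

# One example is (features, target); names and values are made up for illustration.
Example = Tuple[Dict[str, float], float]


def predict(weights: Dict[str, float], features: Dict[str, float]) -> float:
    """Toy 'system under study': a weighted sum of named features."""
    return sum(w * features.get(name, 0.0) for name, w in weights.items())


def mean_squared_error(weights: Dict[str, float], data: List[Example]) -> float:
    """Average squared difference between predictions and targets."""
    return sum((predict(weights, x) - y) ** 2 for x, y in data) / len(data)


def ablation_study(weights: Dict[str, float], data: List[Example]) -> Dict[str, float]:
    """Zero out each weight in turn and report the increase in error."""
    baseline = mean_squared_error(weights, data)
    impact = {}
    for name in weights:
        ablated = {**weights, name: 0.0}  # disable a single component
        impact[name] = mean_squared_error(ablated, data) - baseline
    return impact


weights = {"a": 2.0, "b": 0.0, "c": 1.0}
data: List[Example] = [
    ({"a": 1.0, "b": 5.0, "c": 0.0}, 2.0),
    ({"a": 0.0, "b": 3.0, "c": 1.0}, 1.0),
    ({"a": 1.0, "b": 1.0, "c": 1.0}, 3.0),
]

impact = ablation_study(weights, data)
# Components whose removal hurts most are listed first.
for name, delta in sorted(impact.items(), key=lambda kv: -kv[1]):
    print(f"ablating {name!r} raises error by {delta:.3f}")
```

The study never inspects why a component matters; it only observes what happens when the component is removed, which is the observational style of inquiry the paragraph attributes to natural science.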

Because these systems are currently studied from the outside rather than from the inside, this resembles the grand goal in biology of trying to "figure it out" while making do without proofs or guarantees. Machine learning is full of research efforts that focus on why a system does what it does (think of them as "MRI" studies of large learned systems) rather than on proving that the system was designed to do so. The knowledge gained from such studies can improve our ability to adjust a system's behavior (much as medicine does). Of course, because these systems are artificial, studying them in a controlled setting allows for far more targeted interventions than studying living organisms does.

Artificial intelligence becoming a natural science will also affect computer science as a whole, given the huge impact AI has on almost every area of computing. The word "science" in computer science has long been questioned and ridiculed. That has now changed, as artificial intelligence has become a natural science that studies large-scale artificial learned systems. Of course, there may be considerable resistance to and reservations about this transition. Computer science has long pursued the holy grail of "correct by construction", and it is a big step from there to living with systems that are at best incentivized (trained, like a dog) to be roughly correct, much like us humans.

Back in 2003, Turing Award winner Leslie Lamport sounded the alarm about the possibility that the future of computing would be biology rather than logic, warning that this could leave us living in a world of homeopathy and faith healing. At the time, his anxiety was mainly about complex software systems programmed by humans, rather than today's even more mysterious large-scale learned models.

When a field moves from being primarily concerned with intentional design and "correctness by construction" to exploring and trying to understand existing (undesigned) artifacts, the methodological shift this brings is worth thinking about. Unlike biology, which studies organisms found in the wild, artificial intelligence studies artifacts that humans created but that lack a "sense of design." Ethical issues will certainly arise when creating and deploying artifacts that are not understood. Large learned models are unlikely to come with provable guarantees of accuracy, transparency, or fairness, yet these are critical issues when such systems are deployed in practice. Humans also cannot prove the correctness of their own decisions and actions, but legal systems exist to hold them accountable through fines, reprimands, and even imprisonment. What are the equivalent systems for large-scale learned models?

The aesthetics of computing research will also change. Today a researcher might rate papers by their ratio of theorems to definitions. But as the goals of computer science become more and more like those of natural sciences such as biology, new methodological aesthetics will need to be developed (because a ratio of zero theorems to zero definitions will not discriminate much between papers). There are already signs that computational complexity analysis has taken a back seat in AI research.

The above is the detailed content of After the overwhelming emergence of large models, computer science finally became a 'natural science'. For more information, please follow other related articles on the PHP Chinese website!

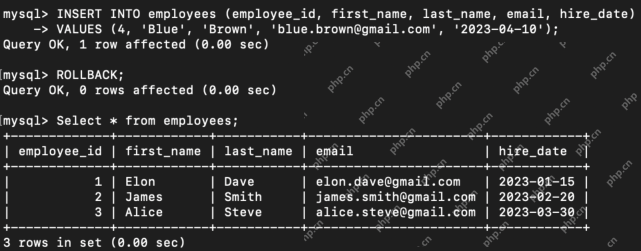

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AM

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AMIntroduction Transaction Control Language (TCL) commands are essential in SQL for managing changes made by Data Manipulation Language (DML) statements. These commands allow database administrators and users to control transaction processes, thereby

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AM

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AMHarness the power of ChatGPT to create personalized AI assistants! This tutorial shows you how to build your own custom GPTs in five simple steps, even without coding skills. Key Features of Custom GPTs: Create personalized AI models for specific t

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AM

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AMIntroduction Method overloading and overriding are core object-oriented programming (OOP) concepts crucial for writing flexible and efficient code, particularly in data-intensive fields like data science and AI. While similar in name, their mechanis

Difference Between SQL Commit and SQL RollbackApr 22, 2025 am 10:49 AM

Difference Between SQL Commit and SQL RollbackApr 22, 2025 am 10:49 AMIntroduction Efficient database management hinges on skillful transaction handling. Structured Query Language (SQL) provides powerful tools for this, offering commands to maintain data integrity and consistency. COMMIT and ROLLBACK are central to t

PySimpleGUI: Simplifying GUI Development in Python - Analytics VidhyaApr 22, 2025 am 10:46 AM

PySimpleGUI: Simplifying GUI Development in Python - Analytics VidhyaApr 22, 2025 am 10:46 AMPython GUI Development Simplified with PySimpleGUI Developing user-friendly graphical interfaces (GUIs) in Python can be challenging. However, PySimpleGUI offers a streamlined and accessible solution. This article explores PySimpleGUI's core functio

8 Mind-blowing Use Cases of Claude 3.5 Sonnet - Analytics VidhyaApr 22, 2025 am 10:40 AM

8 Mind-blowing Use Cases of Claude 3.5 Sonnet - Analytics VidhyaApr 22, 2025 am 10:40 AMIntroduction Large language models (LLMs) rapidly transform how we interact with information and complete tasks. Among these, Claude 3.5 Sonnet, developed by Anthropic AI, stands out for its exceptional capabilities. Experts o

How LLM Agents are Leading the Charge with Iterative Workflows?Apr 22, 2025 am 10:36 AM

How LLM Agents are Leading the Charge with Iterative Workflows?Apr 22, 2025 am 10:36 AMIntroduction Large Language Models (LLMs) have made significant strides in natural language processing and generation. However, the typical zero-shot approach, producing output in a single pass without refinement, has limitations. A key challenge i

Functional Programming vs Object-Oriented ProgrammingApr 22, 2025 am 10:24 AM

Functional Programming vs Object-Oriented ProgrammingApr 22, 2025 am 10:24 AMFunctional vs. Object-Oriented Programming: A Detailed Comparison Object-oriented programming (OOP) and functional programming (FP) are the most prevalent programming paradigms, offering diverse approaches to software development. Understanding thei

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Dreamweaver CS6

Visual web development tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment