The most complete collection of Transformers in history! LeCun recommends: Create a catalog for 60 models. Which paper have you missed?

If one thing has underpinned the development of large-scale models over the past few years, it is the Transformer!

Based on Transformer, a large number of models are springing up in various fields. Each model has a different architecture, different details, and a name that is not easy to explain.

Recently, an author compiled a classification and index of the popular Transformer models released in recent years, aiming to provide a comprehensive but simple catalog. The article also includes an introduction to the Transformer's innovations and a review of its development.

Paper link: https://arxiv.org/pdf/2302.07730.pdf

Turing Award winner Yann LeCun expressed his approval.

The author of the article, Xavier (Xavi) Amatriain, received his PhD from Pompeu Fabra University in Spain in 2005 and is currently Vice President of Engineering at LinkedIn, mainly responsible for product artificial intelligence strategy.

Transformers are a class of deep learning models with some distinctive architectural features. They first appeared in the famous "Attention is All you Need" paper published by Google researchers in 2017. In just 5 years, the paper has accumulated an astonishing 38,000 citations.

The Transformer architecture is also an encoder-decoder model, but in earlier encoder-decoder models attention was only one of several mechanisms, and most of them were based on LSTM (Long Short-Term Memory) and other variants of RNN (Recurrent Neural Network).

One of the key insights of the paper that proposed the Transformer is that the attention mechanism can be used as the only mechanism to derive the dependencies between input and output. This article does not intend to delve into all the details of the Transformer architecture; interested readers can look up the blog post "The Illustrated Transformer".

Blog link: https://jalammar.github.io/illustrated-transformer/

Only the most important components are briefly described below.

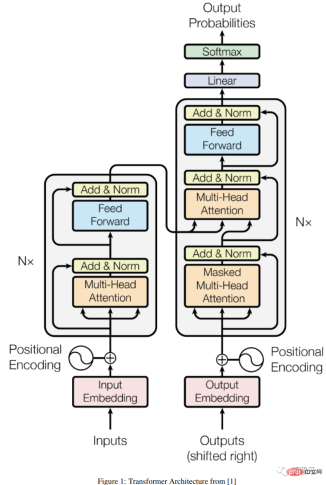

Encoder-Decoder Architecture

A general encoder/decoder architecture consists of two models: the encoder takes the input and encodes it into a fixed-length vector; the decoder takes that vector and decodes it into an output sequence.

The encoder and decoder are jointly trained to maximize the conditional log-likelihood of the output given the input (equivalently, to minimize its negative). Once trained, the encoder/decoder can generate an output for a given input sequence, or it can score a pair of input/output sequences.

In the original Transformer architecture, both the encoder and the decoder have 6 identical layers. In each of these 6 layers, the encoder has two sub-layers: a multi-head attention layer and a simple feed-forward network. Each sub-layer has a residual connection and a layer normalization.

The output size of the encoder is 512. The decoder adds a third sub-layer, another multi-head attention layer over the encoder output. In addition, the other multi-head attention layer in the decoder is masked, so that a position cannot attend to subsequent positions, which would leak information.
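As a rough illustration of what "scoring a pair of input/output sequences" means, the sketch below sums the log-probabilities a decoder assigned to each output token. The probability values are hypothetical and stand in for a trained model's outputs; the function name is an assumption made for this example.

```python
import math

def sequence_log_likelihood(step_probs):
    """Sum of the log-probabilities assigned to each correct output token."""
    return sum(math.log(p) for p in step_probs)

# Hypothetical values of p(y_t | y_<t, x) for a 3-token output sequence.
good_pair = [0.9, 0.8, 0.95]   # the model finds this output plausible
bad_pair = [0.2, 0.1, 0.3]     # the model finds this output implausible

good_score = sequence_log_likelihood(good_pair)
bad_score = sequence_log_likelihood(bad_pair)

# Training pushes this quantity up, i.e. pushes its negative (the loss) down.
loss = -good_score
```

A higher (less negative) score means the model considers the output a better match for the input.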
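The residual connection and layer normalization around each sub-layer can be sketched as follows. This is a minimal version in plain Python: the learned gain and bias of layer normalization are omitted, and `toy_feed_forward` is a hypothetical stand-in for a real sub-layer.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (learned gain/bias omitted)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def sublayer_block(x, sublayer):
    """Residual connection followed by layer normalization: LayerNorm(x + sublayer(x))."""
    y = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, y)])

def toy_feed_forward(x):
    """Hypothetical stand-in for the position-wise feed-forward network."""
    return [max(0.0, 2.0 * v - 1.0) for v in x]

out = sublayer_block([1.0, 2.0, 3.0, 4.0], toy_feed_forward)
```

The same wrapper would be applied around the attention sub-layer; only the `sublayer` argument changes.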
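The masking in the decoder can be illustrated with a small sketch: scores for later positions are set to negative infinity before the softmax, so they receive zero weight. The score matrix is made up for the example, and the function names are assumptions.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention_weights(scores):
    """Mask out future positions in a square score matrix, then softmax each row."""
    n = len(scores)
    masked = [
        [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        for i in range(n)
    ]
    return [softmax(row) for row in masked]

# Hypothetical raw attention scores for a 3-token sequence.
scores = [[0.5, 1.0, 2.0],
          [1.0, 1.0, 3.0],
          [0.2, 0.4, 0.6]]
weights = causal_attention_weights(scores)
```

Row `i` of `weights` has non-zero entries only in columns `0..i`, so the first token can attend only to itself.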

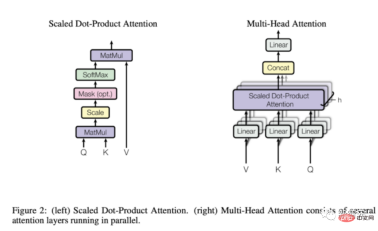

Attention mechanism

As the description above shows, the only "unusual" element in the model's structure is multi-head attention, and this is where the model's entire power lies.

The attention function is a mapping from a query and a set of key-value pairs to an output. The output is computed as a weighted sum of the values, where the weight assigned to each value is computed by a compatibility function of the query with the corresponding key.

The Transformer uses multi-head attention, which computes in parallel several instances of a specific attention function called scaled dot-product attention.

Compared with recurrent and convolutional networks, the attention layer has several advantages. The most important are its lower computational complexity and its higher connectivity, which is particularly useful for learning long-term dependencies in sequences.
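A minimal sketch of scaled dot-product attention, softmax(QK^T / sqrt(d_k))V, written with plain Python lists to stay dependency-free. The query, key, and value vectors are invented for illustration; real implementations use batched matrix operations.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(queries, keys, values):
    """For each query, return a weighted sum of values; weights come from query-key dot products."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Compatibility of the query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors, one output column at a time.
        out = [sum(w * v[c] for w, v in zip(weights, values)) for c in range(len(values[0]))]
        outputs.append(out)
    return outputs

keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
queries = [[5.0, 0.0]]            # points in the direction of the first key
result = scaled_dot_product_attention(queries, keys, values)
```

Because the query aligns with the first key, the output lies much closer to the first value vector. Multi-head attention runs several such functions in parallel on different learned projections of the inputs and concatenates the results.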
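The complexity claim can be made concrete with the per-layer costs reported in the original Transformer paper: on the order of n²·d operations for self-attention and n·d² for a recurrent layer, where n is the sequence length and d the representation size. The sketch below ignores constant factors, and the sequence lengths are chosen only for illustration.

```python
def per_layer_cost(n, d):
    """Rough per-layer operation counts, ignoring constant factors."""
    return {
        "self_attention": n * n * d,   # every position attends to every position
        "recurrent": n * d * d,        # one d x d state update per position
    }

short = per_layer_cost(n=50, d=512)     # sentence-length input
long_ = per_layer_cost(n=4096, d=512)   # very long input
```

Self-attention is cheaper when n is smaller than d, which is typical for sentence-level tasks; for very long sequences the quadratic term dominates. Independently of cost, attention connects any two positions in a single step, whereas a recurrent layer needs on the order of n sequential steps.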

What can Transformer do? Why did it become popular?

The original Transformer was designed for language translation, mainly from English to German, but the experimental results in the original paper already showed that the architecture generalizes well to other language tasks.

This particular trend was quickly noticed by the research community.

Over the following months, the leaderboards of nearly every language-related ML task were taken over by some version of the Transformer architecture; the question-answering benchmark SQuAD, for example, was soon dominated by various Transformer models.

One of the key reasons Transformers were able to take over most NLP leaderboards so quickly is their ability to adapt rapidly to other tasks, that is, transfer learning: pre-trained Transformer models can be adapted very easily and quickly to tasks they were not trained on, a huge advantage over other models.

As an ML practitioner, you no longer need to train a large model from scratch on a huge dataset; you can simply reuse a pre-trained model on the task at hand, perhaps tweaking it slightly with a much smaller dataset.

The specific technique used to adapt pre-trained models to different tasks is so-called fine-tuning.
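The toy sketch below illustrates the idea in its simplest form: a frozen "pre-trained" feature extractor is reused as-is, and only a small task-specific head is trained on a handful of labelled examples. Everything here is hypothetical (the feature function, the data, the hyperparameters), and real fine-tuning usually also updates some or all of the pre-trained weights.

```python
import math

def pretrained_features(x):
    """Hypothetical frozen 'pre-trained' encoder: maps a number to two features."""
    return [x, x * x]

def predict(weights, bias, x):
    """Logistic head on top of the frozen features."""
    feats = pretrained_features(x)
    z = sum(w * f for w, f in zip(weights, feats)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune_head(data, epochs=500, lr=0.1):
    """Train only the small task head with stochastic gradient descent on log loss."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, label in data:
            error = predict(weights, bias, x) - label
            feats = pretrained_features(x)
            weights = [w - lr * error * f for w, f in zip(weights, feats)]
            bias -= lr * error
    return weights, bias

# Tiny labelled set: the label is 1 when |x| is large, which relies on the x*x feature.
data = [(-2.0, 1), (-1.5, 1), (-0.5, 0), (0.0, 0), (0.5, 0), (1.5, 1), (2.0, 1)]
weights, bias = fine_tune_head(data)
```

Seven examples are enough here only because the frozen features already make the task easy, which is the intuition behind reusing a pre-trained model.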

It turns out that Transformers are so adaptable that, although they were originally developed for language-related tasks, they quickly became useful for other tasks, from vision, audio, and music applications all the way to playing chess or doing math.

Of course, none of these applications would be possible if it weren't for the myriad of tools readily available to anyone who can write a few lines of code.

The Transformer was not only quickly integrated into the major artificial intelligence frameworks (namely PyTorch and TensorFlow), but some companies were even built entirely around it.

Hugging Face, a startup that has raised over $60 million to date, was built almost entirely around the idea of commercializing their open source Transformer library.

GPT-3 is a Transformer model introduced by OpenAI in May 2020 as a successor to their earlier GPT and GPT-2. The company created a lot of buzz by introducing the model in a preprint in which they claimed that the model was so powerful that they were not in a position to release it to the world.

Moreover, OpenAI not only did not release GPT-3, but also commercialized it through a very large partnership with Microsoft.

Today, GPT-3 provides underlying technical support for more than 300 different applications and is the foundation of OpenAI’s business strategy. That's significant for a company that has received more than $1 billion in funding.

RLHF

Reinforcement learning from human feedback (or preferences), also known as RLHF (or RLHP), has recently become a huge addition to the artificial intelligence toolbox.

This concept first appeared in the 2017 paper "Deep Reinforcement Learning from Human Preferences", but it has recently been applied to ChatGPT and similar conversational agents with quite good results, which has brought it renewed public attention.

The idea in the paper is very simple. Once a language model is pre-trained, it can generate different responses to a dialogue and have humans rank the results; these rankings (also known as preferences or feedback) can then be used to train a reward, using a reinforcement learning mechanism.
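One common way to turn human rankings into a training signal is a pairwise preference loss on a reward model. The sketch below assumes a Bradley-Terry-style formulation, which the article itself does not spell out; the reward values are hypothetical scores a reward model might assign to two responses.

```python
import math

def preference_loss(reward_preferred, reward_rejected):
    """Pairwise loss: small when the human-preferred response gets the higher reward."""
    margin = reward_preferred - reward_rejected
    return math.log(1.0 + math.exp(-margin))  # equals -log(sigmoid(margin))

agrees = preference_loss(2.0, -1.0)      # reward model agrees with the human ranking
disagrees = preference_loss(-1.0, 2.0)   # reward model disagrees with the human ranking
```

Minimizing this loss over many ranked pairs teaches the reward model to score responses the way humans ranked them; that learned reward can then drive the reinforcement learning step.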

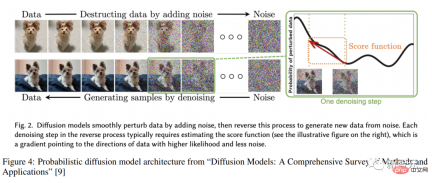

Diffusion model

Diffusion models have become the new SOTA for image generation and appear to be displacing GANs (Generative Adversarial Networks).

Diffusion models are a class of latent variable models trained with variational inference. In practice, this means training a deep neural network to denoise images that have been blurred by some noise function.
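The denoising objective can be sketched as follows, assuming a DDPM-style setup in which Gaussian noise is mixed into the image and the network is trained to predict that noise. The article only says "some noise function", so the specific mixing rule, the `signal_keep` parameter, and the 4-pixel "image" are assumptions for illustration.

```python
import math
import random

def add_noise(pixels, signal_keep, rng):
    """Blend an image with Gaussian noise; signal_keep in [0, 1] is the share of signal variance kept."""
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [
        math.sqrt(signal_keep) * p + math.sqrt(1.0 - signal_keep) * e
        for p, e in zip(pixels, noise)
    ]
    return noisy, noise

def denoising_loss(predicted_noise, true_noise):
    """Mean squared error between the network's noise estimate and the real noise."""
    return sum((a - b) ** 2 for a, b in zip(predicted_noise, true_noise)) / len(true_noise)

rng = random.Random(0)
image = [0.0, 0.5, 1.0, 0.5]                    # hypothetical 4-pixel "image"
noisy, noise = add_noise(image, signal_keep=0.7, rng=rng)

perfect = denoising_loss(noise, noise)          # an ideal network recovers the noise exactly
untrained = denoising_loss([0.0] * 4, noise)    # a network that always predicts zero noise
```

Training lowers this loss across many images and noise levels; at generation time the learned denoiser is applied repeatedly, starting from pure noise.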

A network trained in this way is actually learning the latent space represented by these images.

After this introduction, let's begin our look back at the development of the Transformer!
